What Is Edge Cloud?

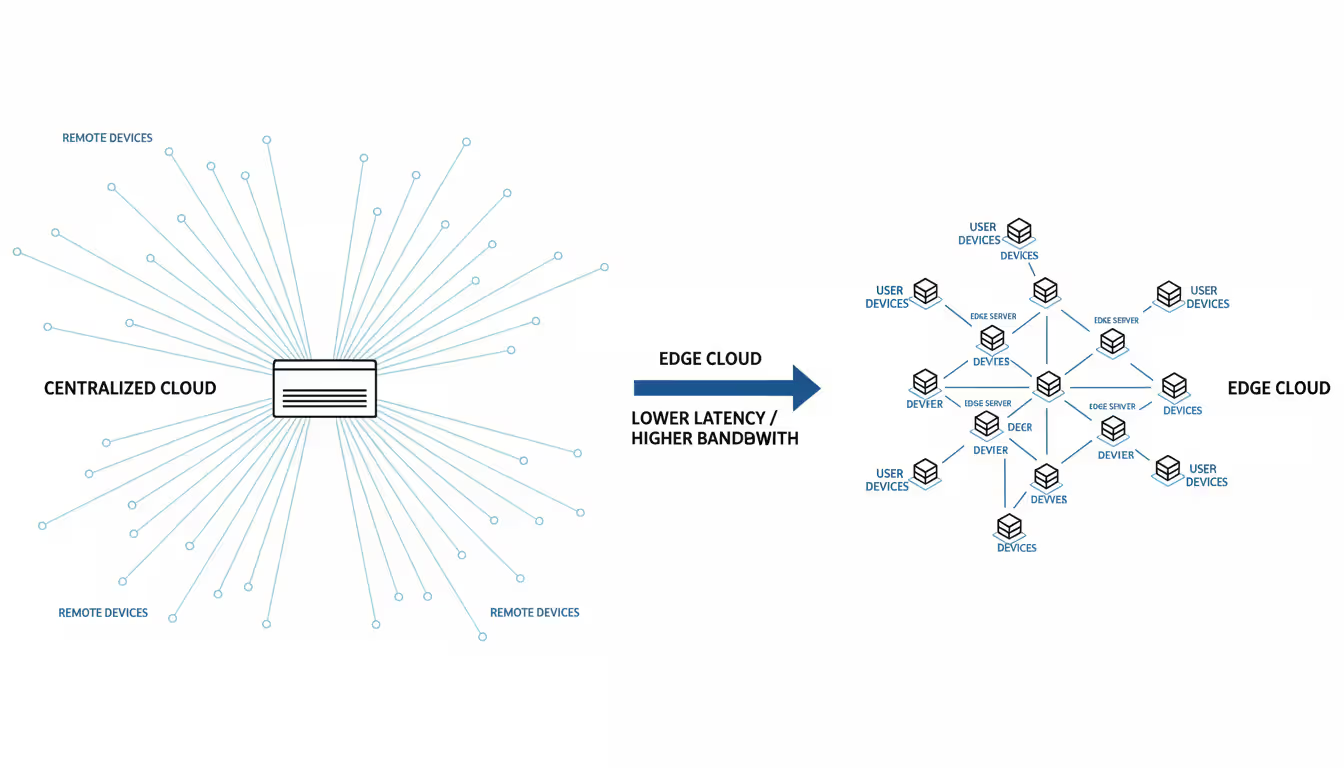

Edge cloud brings data processing capabilities directly to the locations where information originates, eliminating the need to route every transaction through distant centralized facilities. This distributed computing model positions servers, storage systems, and network equipment at strategic points close to users, sensors, and connected devices—dramatically shortening the path data travels and accelerating response times.

Think of it this way: traditional cloud computing is like having one massive library in a distant city where everyone must travel to access books. Edge cloud creates smaller branch libraries throughout neighborhoods, so people get what they need immediately without the commute. The branch libraries stay connected to the main facility, sharing resources and information as needed, but most interactions happen locally.

In practice, this means installing computing infrastructure at cell towers, retail storefronts, factory floors, hospitals, and thousands of other locations. These installations might be ruggedized servers in weatherproof enclosures, containerized micro data centers, or purpose-built industrial equipment. Each site runs applications tailored to local requirements—a grocery store might process video analytics to track customer movements, while a manufacturing facility runs machine learning models to detect product defects.

What separates edge cloud from simple content caching systems is its ability to execute complex, dynamic workloads. These aren't just static file servers. They operate databases, analyze streaming sensor data, run artificial intelligence models, and make split-second decisions based on current conditions. When a drone needs to avoid an obstacle, it can't wait for instructions from a server 2,000 miles away; the computing system onboard makes that decision within milliseconds.

Author: Caleb Merrick;

Source: clatsopcountygensoc.com

Edge Cloud Architecture and Core Components

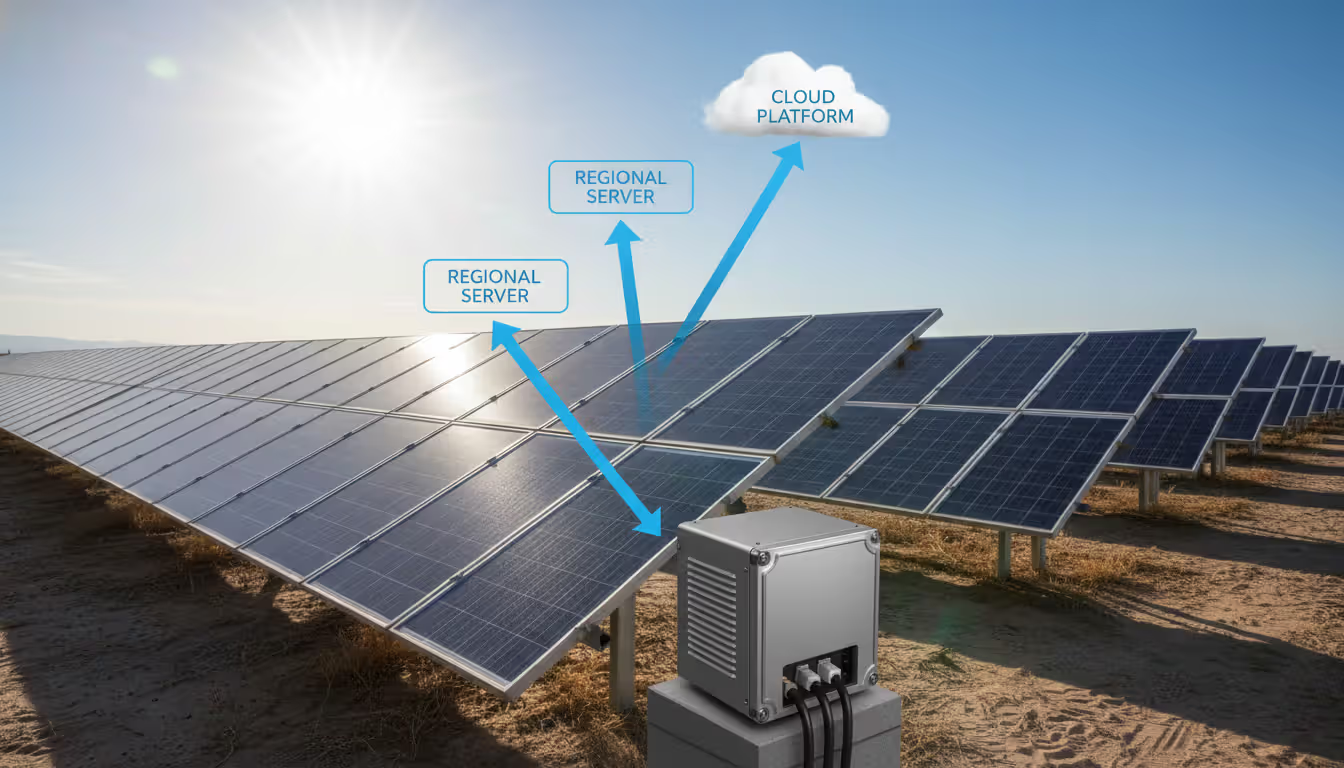

The architecture functions in distinct layers, starting with the devices and sensors that generate data. Above this sits the first processing tier—edge nodes deployed as close as possible to data sources. A telecommunications provider might install these at every cell tower. A retail chain positions them in individual stores. An energy company places them at wind turbines and solar arrays.

These edge nodes pack considerable computing power into compact, environmentally hardened packages. Inside you'll find processors optimized for specific tasks (general-purpose CPUs, graphics processors for AI workloads, or specialized accelerators for video encoding), solid-state storage for local data and application caching, and network interfaces connecting to both local devices and upstream infrastructure. The equipment must withstand temperature extremes, vibration, dust, and other environmental challenges that data center hardware never encounters.

Software orchestration represents the brain coordinating everything. Modern systems leverage Kubernetes and similar container platforms to manage application deployment across thousands of locations simultaneously. These orchestration layers decide which applications run where, monitor resource utilization, detect failures, and redistribute workloads when problems occur. If an edge node at a particular retail location fails during business hours, the orchestrator might temporarily shift its workloads to a nearby store or escalate processing to regional facilities.
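
To make that failover decision concrete, here is a minimal Python sketch of the choice an orchestrator might make when a site goes down. It is not Kubernetes' actual scheduling logic; the `Site` fields, the store names, and the `regional-dc` fallback are hypothetical, and real orchestrators weigh many more signals.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Site:
    name: str
    healthy: bool        # result of the orchestrator's latest health probe
    free_cpu: float      # spare CPU cores currently available
    distance_km: float   # distance from the failed site

def choose_failover_target(sites: List[Site], required_cpu: float,
                           regional: str = "regional-dc") -> str:
    """Prefer the nearest healthy site with enough spare capacity;
    escalate to the regional facility when no nearby site qualifies."""
    candidates = [s for s in sites if s.healthy and s.free_cpu >= required_cpu]
    if not candidates:
        return regional
    return min(candidates, key=lambda s: s.distance_km).name

# Hypothetical neighbors of a failed store.
sites = [
    Site("store-12", healthy=True, free_cpu=2.0, distance_km=3.5),
    Site("store-40", healthy=True, free_cpu=8.0, distance_km=6.0),
    Site("store-7", healthy=False, free_cpu=16.0, distance_km=1.0),
]
print(choose_failover_target(sites, required_cpu=4.0))
print(choose_failover_target(sites, required_cpu=32.0))
```

Here `store-7` is closest but unhealthy and `store-12` lacks capacity, so the workload lands on `store-40`; a request too large for any store escalates to the regional facility.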

Between individual edge sites and centralized cloud data centers sits a middle tier—regional aggregation points. These intermediate facilities handle workloads too demanding for individual edge locations while maintaining much lower latency than full round-trips to distant cloud regions. They serve as concentration points where data from dozens or hundreds of edge sites flows together for additional processing before selective forwarding to cloud storage and analytics platforms.

Network connectivity binds these layers together. 5G cellular networks, fiber optic cables, and private networks carry data between tiers. The network design must account for bandwidth constraints that dictate processing placement: analyzing a 4K video stream locally generates far less traffic than uploading every frame for remote processing. Network architects must also plan for degraded connectivity scenarios in which edge nodes operate autonomously until connections are restored.
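
A rough calculation shows why bandwidth drives placement. This sketch assumes a 4K camera stream encoded at about 25 Mbps and, for the local-analysis case, 500 detection events per day at 2 KB each; all three figures are illustrative assumptions, not measurements.

```python
SECONDS_PER_DAY = 86_400

def stream_gb_per_day(bitrate_mbps: float) -> float:
    """Volume of a continuous stream over one day, in decimal gigabytes."""
    return bitrate_mbps * 1e6 * SECONDS_PER_DAY / 8 / 1e9

def events_gb_per_day(events: int, bytes_per_event: int) -> float:
    """Volume of event notifications sent instead of raw video."""
    return events * bytes_per_event / 1e9

raw = stream_gb_per_day(25)             # assumed 4K encoding bitrate
alerts = events_gb_per_day(500, 2_000)  # assumed event count and size
print(f"upload every frame: {raw:.0f} GB/day; analyze locally: {alerts:.3f} GB/day")
```

Under these assumptions a single camera would push about 270 GB per day upstream, while local analysis sends roughly 1 MB.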

Security components permeate every layer. Hardware roots of trust such as trusted platform modules (TPMs), working together with secure boot, help ensure that systems boot only authorized software. Encrypted storage protects data at rest. Certificate-based authentication ensures only authorized applications and users access resources. Network segmentation isolates different functions and tenants. Physical security becomes paramount since edge equipment sits in locations ranging from locked telecommunications facilities to public retail spaces.

How Edge to Cloud Computing Differs from Traditional Cloud

Traditional cloud computing concentrates massive resources in a small number of strategically located data centers. These facilities benefit from economies of scale—cheaper electricity, specialized cooling systems, bulk hardware purchases, and concentrated expertise. Users access shared resources through internet connections, accepting whatever network latency results from geographic distance.

Edge to cloud reverses this model, distributing smaller computing installations across numerous locations. Organizations trade operational simplicity for performance gains that physical proximity provides. An application request processed by traditional cloud infrastructure might traverse a dozen network routers and travel hundreds of miles, accumulating 80-120 milliseconds of latency. Edge processing happens within a few miles of the user or device, completing the same request in under 10 milliseconds.
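
Part of that gap is set by physics. The Python sketch below computes the minimum round-trip time imposed by light travelling through optical fiber, using the common approximation of about 200 km per millisecond (roughly two-thirds of its speed in vacuum). Real paths add routing detours, router queuing, and server processing on top of this floor.

```python
FIBER_KM_PER_MS = 200.0   # approximate speed of light in glass fiber
KM_PER_MILE = 1.609344

def min_round_trip_ms(distance_miles: float) -> float:
    """Lower bound on round-trip time from fiber propagation alone.
    Routing detours, queuing, and processing only add to this."""
    one_way_km = distance_miles * KM_PER_MILE
    return 2 * one_way_km / FIBER_KM_PER_MS

for miles in (5, 500, 2000):
    print(f"{miles:>5} miles: at least {min_round_trip_ms(miles):.2f} ms round trip")
```

At 500 miles, propagation alone consumes about 8 ms of a 10 ms budget before a single router or server is involved; at 5 miles it is under 0.1 ms.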

This proximity fundamentally changes what's possible. Augmented reality applications projecting information onto physical environments need response times under 20 milliseconds—any slower and users experience disorienting lag between head movements and display updates. Industrial robots coordinating movements on assembly lines cannot tolerate variable latency that causes timing mismatches. These applications simply cannot function with traditional cloud architectures.

| Feature | Edge Cloud | Traditional Cloud | Hybrid Edge-to-Cloud |
|---|---|---|---|
| Response Time | 1-10 milliseconds | 50-120 milliseconds | 5-35 milliseconds depending on workload |
| Best-Fit Applications | Autonomous systems, real-time IoT analytics, AR/VR experiences, robotics control | Web hosting, data warehousing, batch analytics, model training | Connected manufacturing, vehicle telematics, remote healthcare, distributed retail systems |
| Pricing Model | Higher equipment costs per location, minimal data transfer charges | Lower per-unit compute costs, substantial bandwidth fees | Mixed model balancing edge hardware and network expenses |
| Where Processing Happens | At source or within one network hop | Centralized regional facilities 500-2000 miles away | Intelligent workload distribution across multiple tiers |
| Growth Strategy | Add nodes to new locations, limited capacity per site | Nearly unlimited vertical and horizontal expansion | Application-specific scaling at appropriate tier |

Data movement economics shift dramatically between models. A factory floor generating 8 terabytes of sensor readings daily cannot economically upload everything to remote data centers. Beyond prohibitive bandwidth costs, network infrastructure physically cannot sustain those transfer rates across hundreds of sites. Edge processing filters and aggregates data locally, forwarding perhaps 20 gigabytes of processed insights rather than raw measurements.
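
The 8 TB and 20 GB figures translate into link rates as follows. This sketch uses decimal units (1 TB = 10^12 bytes) and assumes uploads are spread evenly over 24 hours, which is the most favorable case for the network.

```python
def sustained_mbps(bytes_per_day: float) -> float:
    """Average link rate needed to upload a daily volume within 24 hours."""
    return bytes_per_day * 8 / 86_400 / 1e6

raw_bytes = 8e12        # 8 TB of raw sensor readings per day
forwarded_bytes = 20e9  # ~20 GB of processed insights per day

reduction = 1 - forwarded_bytes / raw_bytes
print(f"raw upload needs ~{sustained_mbps(raw_bytes):.0f} Mbps per site")
print(f"forwarded insights need ~{sustained_mbps(forwarded_bytes):.2f} Mbps per site")
print(f"volume reduction: {reduction:.2%}")
```

Roughly 741 Mbps of continuous upload per factory, multiplied across hundreds of sites, is what makes shipping raw data impractical; the filtered stream needs under 2 Mbps.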

Management complexity represents a significant tradeoff. Centralized cloud computing means managing applications across perhaps a dozen regional data centers. Edge deployments might span 5,000 retail locations or 50,000 cell towers. Software updates, security patches, configuration changes, and troubleshooting must happen through automated systems—manual intervention becomes physically impossible at this scale.

Traditional cloud provides effectively elastic capacity. Need 1,000 additional servers? Click a button and they appear within minutes. Scaling edge infrastructure requires physical hardware deployment to specific locations, involving procurement, shipping, installation, and network provisioning. Planning cycles extend from minutes to months. However, each edge site typically requires less absolute capacity since it serves specific local needs rather than global user populations.

Key Benefits of Edge Cloud Services

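Immediate response capabilities enable entirely new application categories. Consider surgical robotics where a specialist in New York guides an operation in London. The robotic instruments must react within roughly 10 milliseconds to maintain the natural feel surgeons need for precise movements, yet light in optical fiber needs about 28 milliseconds just to cross the Atlantic one way, so no network can close that loop end to end. Edge processing positioned beside the operating table handles the time-critical control loops locally, such as stopping instruments at safety limits, while the surgeon's commands travel the unavoidable long-haul path. Routing those local control loops through distant cloud infrastructure would add delays the procedure cannot tolerate.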

Bandwidth optimization delivers both cost savings and operational benefits. A smart city deployment with 10,000 traffic cameras cannot upload continuous video feeds from every intersection; the network costs alone would be prohibitive for most municipal budgets. Edge computing analyzes video locally, detecting accidents, tracking traffic flow, and monitoring pedestrian crossings. Only event notifications and anonymized statistics flow to central systems, which can reduce bandwidth requirements by around 95% while providing better real-time situational awareness.

Privacy preservation comes naturally when sensitive information stays local. Healthcare facilities process patient vital signs through edge infrastructure, keeping detailed medical data within facility boundaries. Only anonymized statistical summaries reach centralized analytics platforms. Manufacturers analyze production processes locally without exposing proprietary techniques to third-party cloud providers. Certain regulatory frameworks mandate this data locality, making edge processing a compliance requirement rather than just an optimization.

Operational resilience improves when applications don't depend entirely on network connectivity. A warehouse management system running on edge servers continues directing robotic pickers and tracking inventory during internet outages. Retail point-of-sale systems process transactions locally when network connections fail. Once connectivity is restored, systems synchronize accumulated data with central databases. Applications that rely on a remote cloud for every transaction, by contrast, typically stop functioning when networks fail.

By 2026, more than 50% of enterprise-managed data will be created and processed outside the data center or cloud, up from less than 10% in 2020. Edge computing is not just an architectural choice—it's becoming a business necessity for organizations requiring real-time insights and actions

— Thomas Bittman

Total cost advantages can emerge despite higher per-unit hardware expenses. A video analytics deployment moving 80 TB of footage per month might incur about $2,400 in data transfer charges alone (80,000 GB at roughly $0.03 per GB); most of that recurring expense disappears with edge processing. Eliminating the need to store massive volumes of raw data in expensive cloud storage, avoiding over-provisioned central resources for peak loads, and reducing latency-related performance problems all contribute to better economics for specific workloads.

Energy efficiency gains result from eliminating unnecessary data transmission. Network equipment consumes substantial power moving data between locations. Processing information once, where it originates, reduces overall energy consumption. Edge facilities can also leverage local renewable energy sources or schedule intensive processing during off-peak electricity rate periods more flexibly than centralized data centers serving global audiences.

Common Use Cases for Edge Cloud Platforms

Manufacturing environments host some of the most mature edge deployments. Factories install computing infrastructure that monitors equipment health, predicts maintenance requirements, optimizes production parameters, and validates quality standards. An aerospace parts manufacturer might deploy computer vision systems that inspect thousands of welds per hour, immediately flagging any defects rather than discovering problems during batch quality reviews hours later. Predictive maintenance algorithms analyze vibration patterns, thermal profiles, and acoustic signatures in real time, identifying bearing wear or alignment issues before machinery fails and halts production lines.

Autonomous vehicle operations require extensive edge processing. Estimates vary, but self-driving cars can generate terabytes of sensor data per day from cameras, lidar units, radar systems, and inertial sensors. Transmitting this volume over cellular networks is impractical, and even if it were possible, the latency would make remote processing useless for immediate driving decisions. Primary computing happens within vehicles themselves, with supplementary edge infrastructure at intersections and along roadways providing additional sensing capabilities and coordination services. This distributed system enables vehicles to share information about road conditions, hazards, and traffic patterns through low-latency vehicle-to-infrastructure communication.

Retail operations leverage edge computing for customer behavior analysis, inventory tracking, and personalized engagement. Intelligent shelving systems with weight sensors and cameras monitor stock levels continuously, automatically generating restocking orders when supplies run low. Computer vision analyzes customer traffic patterns, measuring how long shoppers examine specific products and which displays attract attention—all without uploading raw video footage beyond store boundaries. Point-of-sale systems maintain transaction processing during network disruptions, queuing data for synchronization when connectivity returns.

Healthcare settings process patient monitoring information through edge infrastructure to detect critical changes immediately. Hospital edge systems analyze continuous vital sign streams from dozens of patients simultaneously, alerting clinical staff within seconds when conditions deteriorate. Remote patient monitoring devices worn at home process physiological data locally, protecting privacy while forwarding relevant health indicators to care coordination teams. Robotic surgical systems depend on ultra-low latency that only local edge processing provides.

Smart city initiatives coordinate traffic signals, monitor environmental conditions, manage parking availability, and optimize energy distribution through distributed edge platforms. Traffic management systems analyze intersection camera feeds locally, adjusting signal timing based on actual vehicle volumes rather than following fixed schedules. Environmental monitoring sensors throughout urban areas measure air quality locally, generating detailed pollution maps without overwhelming central systems with continuous raw measurement streams.

Entertainment and gaming services deploy edge infrastructure to minimize latency for interactive experiences. Cloud gaming platforms position servers in metropolitan areas, rendering games close to players to eliminate input lag that ruins gameplay. Streaming video services handle adaptive bitrate encoding at the edge, optimizing quality for local network conditions and reducing buffering.

Telecommunications carriers build edge computing capabilities into their 5G network infrastructure, offering edge-as-a-service to enterprise customers. A logistics company might run route optimization and fleet tracking applications on carrier-provided edge infrastructure, benefiting from proximity to the cellular networks its vehicles already use without building proprietary edge deployments.

How Edge to Cloud Platforms Work Together

Data moves through edge-to-cloud systems in a carefully orchestrated flow. Raw information originates at sensors, connected devices, or user interactions at edge locations. Local computing resources perform immediate processing—filtering noise, aggregating measurements, analyzing patterns, and making real-time decisions. Results, detected anomalies, or data requiring deeper analysis move to regional aggregation facilities for additional processing. Finally, refined datasets, long-term trend analysis, and machine learning training data flow to centralized cloud platforms.

Consider a solar farm installation: each solar panel has sensors measuring voltage, current, temperature, and light exposure multiple times per second. Edge controllers attached to panel strings analyze these measurements locally, adjusting inverter settings to optimize power output based on current conditions. These controllers forward performance summaries to a facility-level edge server every 10 seconds. The facility server aggregates data from thousands of panels, identifies array-wide patterns affecting generation efficiency, and sends hourly summaries to cloud infrastructure. Cloud systems store historical performance data, train machine learning models using years of operational experience, and distribute updated optimization algorithms back to edge controllers.
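
The first two tiers of that flow can be sketched in Python. The `Reading` and `Summary` fields, the 10-second window, and the choice to report energy in watt-hours are assumptions made for illustration; real string controllers run embedded firmware and maximum power point tracking that this sketch does not attempt to model.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List

@dataclass
class Reading:
    voltage: float  # volts
    current: float  # amps
    temp_c: float   # panel temperature, degrees Celsius

@dataclass
class Summary:
    string_id: str
    energy_wh: float   # energy produced during the window
    max_temp_c: float
    samples: int       # number of raw readings this summary replaces

def summarize_window(string_id: str, readings: List[Reading],
                     window_s: float = 10.0) -> Summary:
    """String-controller step: collapse one window of raw readings into a
    single record for the facility server."""
    avg_power_w = mean(r.voltage * r.current for r in readings)
    return Summary(string_id, avg_power_w * window_s / 3600,
                   max(r.temp_c for r in readings), len(readings))

def facility_rollup(summaries: List[Summary]) -> Dict[str, float]:
    """Facility-server step: combine many string summaries into the compact
    record forwarded to the cloud (for example, once per hour)."""
    return {
        "strings": len({s.string_id for s in summaries}),
        "energy_wh": sum(s.energy_wh for s in summaries),
        "hottest_c": max(s.max_temp_c for s in summaries),
        "raw_samples_represented": sum(s.samples for s in summaries),
    }

# Hypothetical readings from one string during a single window.
window = [Reading(30.0, 8.0, 41.0), Reading(30.0, 8.0, 43.0)]
s = summarize_window("string-A", window)
print(facility_rollup([s, Summary("string-B", 1.0, 45.0, 5)]))
```

Reporting energy rather than average power lets the facility tier simply add summaries across strings and time windows without double counting.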

Workload placement requires careful analysis of application requirements. Latency-critical operations must execute at the edge—the laws of physics allow no compromise. Computationally intensive but latency-tolerant tasks often run in centralized cloud environments where massive compute resources are more economical. Machine learning model training typically happens in cloud facilities with specialized hardware accelerators, while inference operations run at edge locations using trained models. Application developers build and test software in cloud environments with familiar tools, then deploy production versions to edge infrastructure.
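
One way to express this placement rule is to choose the most centralized tier that still meets a workload's latency budget. The per-tier latencies below are hypothetical placeholders, not benchmarks; substitute measurements from your own network.

```python
from dataclasses import dataclass

# Hypothetical typical round-trip latency to each tier, in milliseconds.
TIER_LATENCY_MS = {"edge": 5.0, "regional": 20.0, "cloud": 80.0}

@dataclass
class Workload:
    name: str
    max_latency_ms: float  # slowest acceptable response time

def place(w: Workload) -> str:
    """Return the most centralized tier that still meets the latency budget,
    on the assumption that central capacity is cheaper per unit of compute."""
    for tier in ("cloud", "regional", "edge"):
        if TIER_LATENCY_MS[tier] <= w.max_latency_ms:
            return tier
    raise ValueError(f"{w.name}: no tier can meet {w.max_latency_ms} ms")

for w in (Workload("defect-detection inference", 10),
          Workload("line dashboard", 50),
          Workload("nightly model training", 3_600_000)):
    print(w.name, "->", place(w))
```

Checking the cheapest tier first keeps latency-tolerant work, such as model training, in the cloud and reserves scarce edge capacity for workloads that cannot run anywhere else.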

Synchronization approaches balance consistency requirements, performance needs, and bandwidth constraints. Critical information synchronizes immediately using event-driven mechanisms—a security camera detecting unauthorized facility access pushes alerts instantly regardless of bandwidth costs. Operational data follows scheduled synchronization—every minute, hour, or day depending on business requirements. Historical information uses eventual consistency models, synchronizing when bandwidth is available and network rates are lowest. Edge systems maintain independent local databases that operate autonomously, implementing application-specific conflict resolution when reconnecting to central systems after network partitions.
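
Conflict resolution is application-specific, but last-writer-wins is a common baseline. This sketch merges two replicas after a partition, assuming each write carries a timestamp and the ID of the site that made it. It depends on reasonably synchronized clocks, and many applications need richer rules (for example, summing inventory deltas instead of overwriting counts). The record names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict

@dataclass(frozen=True)
class Versioned:
    value: str
    updated_at: float  # write time in seconds since the epoch
    site_id: str       # node that made the write; breaks timestamp ties

def merge(local: Dict[str, Versioned], remote: Dict[str, Versioned]) -> Dict[str, Versioned]:
    """Last-writer-wins merge after a network partition. Both sides reach the
    same result regardless of merge order because ties fall back to site_id."""
    merged = dict(local)
    for key, theirs in remote.items():
        mine = merged.get(key)
        if mine is None or (theirs.updated_at, theirs.site_id) > (mine.updated_at, mine.site_id):
            merged[key] = theirs
    return merged

# Hypothetical shelf-status records written on both sides during an outage.
edge = {"shelf-4": Versioned("restock", 100.0, "store-12"),
        "shelf-9": Versioned("ok", 90.0, "store-12")}
central = {"shelf-4": Versioned("ok", 95.0, "central"),
           "shelf-2": Versioned("low", 80.0, "central")}
print({k: v.value for k, v in merge(edge, central).items()})
```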

Orchestration platforms manage workload distribution and migration across tiers. When edge site capacity reaches limits, orchestrators shift non-critical workloads to regional facilities or cloud resources. During network congestion periods, systems prioritize critical data flows and defer less urgent synchronization. These platforms also coordinate software updates, deploying new versions gradually across edge locations while monitoring for problems and maintaining rollback capabilities.
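
A staged rollout with automatic rollback can be sketched as follows. The wave fractions and the `deploy`, `healthy`, and `rollback` callables are hypothetical stand-ins for whatever hooks your orchestration platform actually provides.

```python
from typing import Callable, List, Sequence

def staged_rollout(sites: Sequence[str],
                   deploy: Callable[[str], None],
                   healthy: Callable[[str], bool],
                   rollback: Callable[[str], None],
                   waves: Sequence[float] = (0.01, 0.10, 0.50, 1.0)) -> bool:
    """Deploy to growing fractions of the fleet. After each wave, health-check
    every updated site; on any failure, roll back all of them (newest first)."""
    updated: List[str] = []
    for fraction in waves:
        target = max(1, int(len(sites) * fraction))
        for site in sites[len(updated):target]:
            deploy(site)
            updated.append(site)
        if not all(healthy(s) for s in updated):
            for s in reversed(updated):
                rollback(s)
            return False
    return True

fleet = [f"store-{i}" for i in range(10)]
log: List[str] = []
ok = staged_rollout(fleet,
                    deploy=lambda s: log.append(f"deploy {s}"),
                    healthy=lambda s: s != "store-3",  # simulate one bad site
                    rollback=lambda s: log.append(f"rollback {s}"))
print(ok, log)
```

In this simulated run the fault at `store-3` surfaces in the third wave, so only five of ten sites ever receive the update before it is reverted.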

Failure handling mechanisms differ substantially from traditional cloud approaches. Edge infrastructure must continue operating during network partitions, making decisions based on local state rather than waiting for central coordination. When an edge node fails completely, neighboring nodes or upstream resources temporarily assume its workload until repairs complete. Data durability mechanisms protect locally generated information during failures—edge nodes maintain local persistent storage and opportunistically forward data when connectivity permits.
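
Local persistence with opportunistic forwarding is often implemented as a durable outbox. This sketch uses Python's built-in `sqlite3` module; the `send` callable (returning `True` on confirmed delivery) is a hypothetical stand-in for a real upstream client. Because a record is deleted only after a successful send, a crash between the two steps can resend it, so the receiving side should tolerate duplicates (at-least-once delivery).

```python
import json
import sqlite3
from typing import Callable

class StoreAndForward:
    """Durable local outbox: records are committed to local storage first
    and deleted only after the upstream send succeeds."""

    def __init__(self, path: str = "outbox.db"):
        self.db = sqlite3.connect(path)
        self.db.execute("CREATE TABLE IF NOT EXISTS outbox ("
                        "id INTEGER PRIMARY KEY AUTOINCREMENT, body TEXT NOT NULL)")
        self.db.commit()

    def record(self, event: dict) -> None:
        self.db.execute("INSERT INTO outbox (body) VALUES (?)", (json.dumps(event),))
        self.db.commit()

    def flush(self, send: Callable[[dict], bool]) -> int:
        """Forward queued records oldest-first, stopping at the first failure
        so nothing is lost or reordered. Returns the number delivered."""
        sent = 0
        rows = self.db.execute("SELECT id, body FROM outbox ORDER BY id").fetchall()
        for row_id, body in rows:
            if not send(json.loads(body)):
                break
            self.db.execute("DELETE FROM outbox WHERE id = ?", (row_id,))
            self.db.commit()
            sent += 1
        return sent

    def pending(self) -> int:
        return self.db.execute("SELECT COUNT(*) FROM outbox").fetchone()[0]
```

A point-of-sale node would call `record()` for every transaction and retry `flush()` on a timer; while the link is down, `flush()` returns 0 and the queue simply grows on local disk.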

Choosing an Edge Cloud Platform for Your Business

Provider ecosystem capabilities vary substantially across available options. Hyperscale cloud operators like AWS, Microsoft Azure, and Google Cloud offer edge extensions that integrate tightly with their core cloud services, providing consistent APIs, management interfaces, and security models across distributed and centralized resources. Telecommunications carriers provide edge computing integrated directly with 5G networks, offering exceptionally low latency for mobile devices and IoT sensors. Specialized edge platform vendors focus on specific industries—industrial automation, retail operations, or content delivery—providing purpose-built solutions with vertical-specific features and optimizations.

Geographic footprint matters enormously for edge computing. The entire value proposition depends on physical proximity to data sources and users. A provider with edge presence in major metropolitan areas provides no benefit if your operations span rural regions, secondary cities, or international markets. Map your current facilities and planned expansion locations against provider coverage areas. Consider both immediate availability and expansion roadmaps—a provider planning edge sites in your target markets within 12 months might warrant consideration.
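
A first-pass coverage check can be scripted with great-circle (haversine) distances. The facility names, coordinates, and 50 km threshold below are hypothetical; road and fiber routes are longer than straight-line distance, so treat the result as optimistic.

```python
from math import asin, cos, radians, sin, sqrt
from typing import Dict, List, Tuple

EARTH_RADIUS_KM = 6371.0
LatLon = Tuple[float, float]

def haversine_km(a: LatLon, b: LatLon) -> float:
    """Great-circle distance between two (latitude, longitude) points in degrees."""
    lat1, lon1, lat2, lon2 = map(radians, (*a, *b))
    h = sin((lat2 - lat1) / 2) ** 2 + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))

def uncovered(facilities: Dict[str, LatLon], edge_sites: List[LatLon],
              max_km: float) -> List[str]:
    """Facilities with no provider edge site within max_km."""
    return [name for name, loc in facilities.items()
            if min(haversine_km(loc, site) for site in edge_sites) > max_km]

# Hypothetical facilities and provider edge locations.
facilities = {"plant": (45.52, -122.68), "depot": (46.19, -123.83)}
edge_sites = [(45.51, -122.66)]
print(uncovered(facilities, edge_sites, max_km=50))
```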

Integration requirements with existing systems often determine platform suitability. Organizations already committed to specific cloud platforms benefit from choosing edge infrastructure that integrates seamlessly with current investments. Multi-cloud strategies require edge platforms supporting connections to multiple upstream cloud providers simultaneously. Legacy application compatibility deserves careful evaluation—can existing software run on edge infrastructure without modifications, or does deployment require extensive refactoring?

Performance specifications directly impact application viability. Understand precise latency requirements, compute capacity needs, storage demands, and network bandwidth constraints for your specific workloads. Edge platforms offer wildly different hardware configurations—some provide GPU acceleration for artificial intelligence processing, others deliver specialized industrial-grade equipment for harsh environments, still others optimize for density in space-constrained locations. Match platform capabilities precisely to application requirements rather than over-provisioning expensive distributed infrastructure.

Cost structures demand careful analysis beyond simple per-hour pricing. Edge platforms typically charge for hardware capacity (reserved long-term or pay-as-you-go), data transfer between edge and cloud (often with tiered pricing based on volume), and management services (monitoring, orchestration, support). Calculate total cost of ownership including bandwidth savings from local processing versus hardware deployment costs. Workloads with predictable capacity needs usually achieve better economics through reserved capacity commitments; highly variable workloads benefit from flexible pricing despite higher per-unit costs.
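
A simple monthly comparison helps frame that total-cost calculation. Every price in this sketch is a hypothetical input: hardware amortized straight-line over 36 months, a flat $0.03 per GB transfer rate, and made-up operations and central compute costs. Real provider pricing is tiered and varies by region.

```python
def monthly_edge_cost(hardware_usd: float, amortization_months: int,
                      ops_usd_per_month: float, residual_gb: float,
                      usd_per_gb: float) -> float:
    """Edge option: amortized hardware + site operations + the reduced
    data volume that is still forwarded upstream."""
    return hardware_usd / amortization_months + ops_usd_per_month + residual_gb * usd_per_gb

def monthly_central_cost(raw_gb: float, usd_per_gb: float,
                         central_compute_usd: float) -> float:
    """Central option: ship all raw data upstream and process it there."""
    return raw_gb * usd_per_gb + central_compute_usd

edge = monthly_edge_cost(hardware_usd=36_000, amortization_months=36,
                         ops_usd_per_month=300, residual_gb=4_000, usd_per_gb=0.03)
central = monthly_central_cost(raw_gb=80_000, usd_per_gb=0.03, central_compute_usd=500)
print(f"edge ${edge:,.0f}/month vs central ${central:,.0f}/month")
```

Varying the hardware price, amortization period, or transfer rate shows quickly where the break-even point sits for a given site.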

Security capabilities must address both your risk tolerance and compliance obligations. Evaluate physical security provisions for edge locations: how does equipment in retail stores or on utility poles stay protected from tampering? Assess data encryption implementations for information at rest and in transit, network isolation capabilities between tenants and applications, access control mechanisms, and audit logging comprehensiveness. Regulated industries require platforms that satisfy the relevant compliance frameworks and certifications, such as HIPAA for healthcare, PCI DSS for payment processing, and ISO 27001 for information security. Consider threat models specific to edge environments rather than assuming data center security paradigms apply.

Management and operational tools determine ongoing costs and complexity levels. Platforms offering robust automation, comprehensive monitoring, and effective troubleshooting capabilities reduce operational overhead substantially. Remote management capabilities become essential—physically visiting thousands of edge locations for routine maintenance is economically infeasible. Evaluate update mechanisms and rollback procedures, performance monitoring granularity, and incident response workflows.

Support quality and service level commitments matter more for edge infrastructure than traditional cloud. When an edge node fails, local business operations may halt completely until repairs complete. Understand provider response time commitments, support availability hours, and hardware replacement procedures. Some providers pre-position spare hardware at edge locations for immediate swap; others ship replacement equipment to failed sites, requiring days for restoration.

Vendor lock-in risks intensify with edge platforms due to hardware dependencies and specialized software stacks. Evaluate migration paths and data portability before making long-term commitments. Applications built using standard containers and Kubernetes orchestration provide more flexibility for future provider changes than those depending on proprietary platforms, though standardized approaches may sacrifice some edge-specific performance optimizations.

Edge cloud transforms how organizations architect distributed applications by delivering cloud computing capabilities directly to network edges. Combining local processing power with reduced latency and bandwidth optimization enables applications that traditional cloud-only approaches cannot support. As IoT deployments proliferate, autonomous systems advance, and real-time processing demands intensify, edge cloud infrastructure transitions from competitive advantage to operational necessity for many organizations.

Successfully implementing edge-to-cloud platforms requires matching architectural patterns to specific business needs. Not every application belongs at the edge—careful evaluation of latency requirements, data volumes, processing demands, and cost tradeoffs determines optimal placement strategies. Organizations should begin with clearly defined, high-value use cases demonstrating measurable benefits, then expand edge deployments as operational expertise develops.

The edge cloud market continues evolving rapidly with improving management tools, expanding geographic coverage from major providers, and maturing best practices making adoption progressively more accessible. Organizations planning digital transformation should evaluate how edge platforms might enhance their architectures, particularly for applications involving connected devices, real-time analytics, or geographically distributed operations. The relevant question has shifted from whether edge computing matters to determining how best to integrate it with existing infrastructure—delivering superior performance, reduced costs, and new capabilities that centralized cloud infrastructure alone cannot provide.

The content on this website is provided for general informational and educational purposes related to cloud computing, network infrastructure, and IT solutions. It is not intended to constitute professional technical, engineering, or consulting advice.

All information, tools, and explanations presented on this website are for general reference only. Network environments, system configurations, and business requirements may vary, and results may differ depending on specific use cases and infrastructure.

This website is not responsible for any errors or omissions, or for actions taken based on the information, tools, or technical recommendations presented.