Modern network operations center with large monitor wall displaying global cloud network traffic maps and performance dashboards

Cloud Network Monitoring Guide

Picture this: your development team just deployed a new feature across three different cloud providers. Within minutes, customers in Europe report slow checkout times. Your ops team scrambles through AWS Console, then Azure Portal, then Google Cloud—each showing different metrics with no clear connection between them. By the time you identify the culprit (a misconfigured load balancer in Frankfurt), you've lost $50,000 in abandoned carts.

This scenario plays out daily at companies running distributed cloud workloads. When your infrastructure spans multiple providers, regions, and services, traditional monitoring approaches fall apart. You need visibility that moves as fast as your infrastructure does—tracking ephemeral containers, auto-scaling groups, and serverless functions that exist for seconds before disappearing.

That's where effective monitoring comes in. It's not about collecting more data; it's about connecting the dots between your fragmented cloud environments before small issues become expensive outages.

What Is Cloud Network Monitoring

Think of cloud network monitoring as a real-time map of everything happening inside your virtualized infrastructure. It continuously collects performance data, tracks traffic patterns, and flags security anomalies across all your cloud-based systems. But here's the key difference from old-school monitoring: it doesn't assume anything stays put.

Remember when your data center had physical servers bolted into racks? You knew exactly where Server-23 lived, what IP address it used, and which switch it connected to. That world made monitoring straightforward—set up SNMP polling, configure some threshold alerts, call it a day.

Cloud infrastructure laughs at that simplicity. Your application server might run on instance i-0abc123 right now, but in ten minutes, that instance could vanish when traffic drops. Auto-scaling launches new instances with different IPs. Kubernetes pods restart and get assigned new addresses. Load balancers shuffle traffic between availability zones. Your monitoring system either adapts to this chaos or becomes useless.

Modern cloud network technology operates through several interconnected layers:

Collection agents pull metrics from virtual network interfaces, hypervisor telemetry, and cloud provider APIs. Some run as lightweight processes on your instances. Others query cloud APIs remotely, avoiding the need to install anything. Both approaches have trade-offs—agents see deeper but require maintenance; API polling stays hands-off but misses some details.

Normalization engines translate different data formats into consistent structures. A transaction might start in your AWS VPC, cross into Azure through a VPN tunnel, and finish at your on-premises PostgreSQL database. Each segment generates logs in different formats with different timestamp conventions. Your monitoring platform needs to stitch these fragments together into one coherent story.

Pattern recognition systems learn what "normal" looks like for your specific environment. Is 10,000 requests per second high or low? Depends entirely on context—maybe that's your typical Monday morning load, or maybe it's a DDoS attack. Machine learning models establish baselines and sound alarms when reality diverges from expectations in meaningful ways.

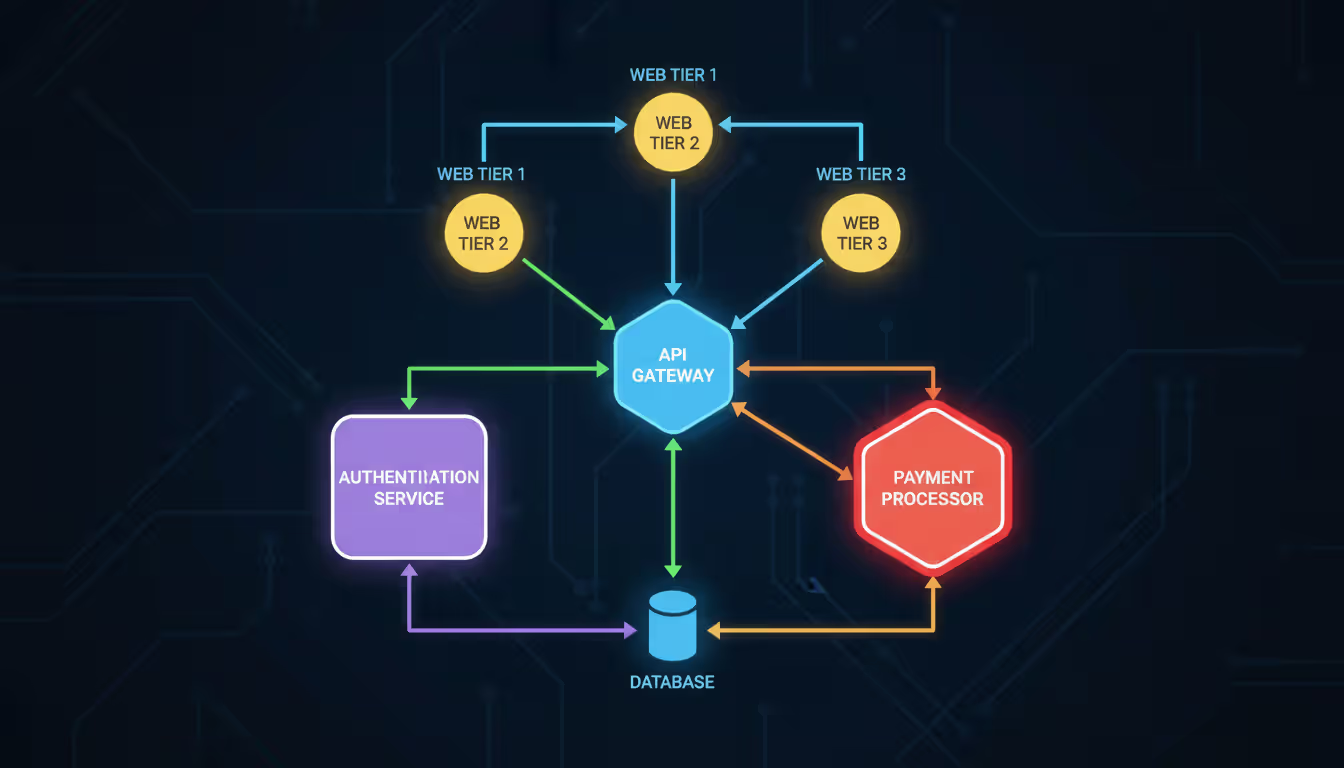

Visual interfaces show how services connect and where problems hide. When checkout latency spikes, you need to instantly understand the dependency chain: web tier → API gateway → authentication service → payment processor → database. Topology maps reveal which link in that chain broke.

Author: Vanessa Norwood;

Source: clatsopcountygensoc.com

Here's a concrete example: An online retailer's auto-scaling group launches 50 new web servers during a flash sale. Legacy monitoring tools would require manual configuration for each instance—adding them to dashboards, setting up alerts, defining threshold values. By the time you finished configuration, the sale would be over and the instances terminated. Cloud-native monitoring discovers these instances automatically, applies the right tags based on their role, and incorporates them into existing visualizations within seconds.

How Cloud Network Monitoring Works

Let's talk about how monitoring actually captures data from cloud environments, because the mechanics matter more than you'd think.

Two main collection strategies dominate: agent-based and agentless. With agents, you install a small program on each virtual machine or container. That agent watches everything happening at the OS level—which processes open which network connections, how many bytes travel through each interface, whether packets are getting dropped. It's like having a dedicated observer inside each instance reporting back continuously.

The agentless approach skips installation entirely. Instead, it queries cloud provider APIs to pull metrics from the control plane. AWS CloudWatch, Azure Monitor, and Google Cloud Operations all expose performance data through REST APIs. Your monitoring system just asks "Hey AWS, what's the network throughput on these 200 instances?" and processes the response.

Which works better? Depends what you're optimizing for. Agents provide richer detail—you can track individual process network activity, capture packet-level statistics, and measure application-layer metrics. But they consume CPU and memory, require updates, and occasionally crash. API polling avoids operational overhead but offers coarser granularity and introduces slight delays as metrics propagate through the provider's collection systems.

Most sophisticated setups use both. Agents handle detailed application telemetry while API calls track infrastructure state changes—when someone modifies a security group, updates a route table, or resizes a subnet.

What specific data matters? Here's what actually helps troubleshoot problems:

Throughput numbers tell you how many bytes flow through network interfaces, load balancers, and NAT gateways each second. A sudden jump from 100 Mbps to 10 Gbps either means your product went viral or someone's exfiltrating your database. Context determines which.

Latency percentiles reveal more than averages ever could. Median response time might look great at 50ms, but if your 95th percentile sits at 5 seconds, 5% of users are having terrible experiences. That's the difference between acceptable performance and customer churn. Track P50, P95, and P99 religiously.

Packet loss percentages expose network congestion or misconfigured routing. Even 1% packet loss crushes application performance because TCP backs off and retransmits, turning small hiccups into massive delays.

Connection metrics count active TCP sessions, UDP flows, and HTTP requests. You might have gigabits of available bandwidth but still fail requests if you've exhausted your connection pool. A database proxy limited to 1,000 concurrent connections will reject request #1,001 regardless of network capacity.

Error counts document failed handshakes, timed-out requests, and rejected connections. A spike in TLS errors might mean your certificate expired, or maybe a deploy pushed incompatible cipher configurations.

Flow logs deserve special mention. They capture metadata about every network conversation without storing full packet contents: source IP, destination IP, ports, protocol, byte count, whether the firewall allowed or blocked it. Think phone records, not wiretaps. You can't read the conversation, but you know who talked to whom, for how long, and whether firewalls permitted it.

A financial services company used flow log analysis to discover that their fraud detection service was making 10,000 API calls per second to a third-party risk scoring service—generating $25,000 monthly in unnecessary API charges. They implemented client-side caching and dropped that cost to $800. Nobody noticed the API calls until monitoring made them visible.

Author: Vanessa Norwood;

Source: clatsopcountygensoc.com

Key Benefits for Cloud Infrastructure Management

Let's cut through the abstract benefits and talk about real operational wins.

Performance stops being guesswork. You know exactly where delays happen and why. A streaming video platform discovered through monitoring that 70% of buffering events originated from a single CDN point-of-presence in Mumbai that was peering poorly with local ISPs. They rerouted traffic to a different POP and buffering complaints dropped 85%. Without detailed network monitoring showing per-POP latency distributions, they'd still be blaming their encoding pipeline.

Resource utilization becomes transparent. Most organizations vastly over-provision because they're afraid of running out of capacity. Better safe than sorry, right? Except "safe" means wasting 40% of your cloud budget on unused resources. One healthcare SaaS company monitored their actual traffic patterns for 30 days and discovered that most application servers peaked at 15% CPU and 20% network utilization. They downsized instances and cut their monthly AWS bill from $180,000 to $105,000 with zero performance impact.

Security threats get caught early—sometimes before they cause damage. Monitoring establishes behavioral baselines for each service. Your PostgreSQL database normally accepts connections from exactly three application server IP ranges between 8 AM and midnight. At 3 AM, it starts receiving connection attempts from a developer laptop. That's weird. Monitoring flags it immediately. Turns out an intern's laptop got compromised and the attacker was attempting to access production data. Because monitoring caught this within 90 seconds, security team isolated the laptop before any sensitive data leaked.

Cost optimization becomes data-driven rather than politically driven. Arguments about whether you "need" that extra NAT gateway or larger load balancer end when monitoring shows exactly how much traffic flows through them during peak hours. An e-commerce company discovered they were paying $3,000 monthly for inter-region data transfer that served no purpose—services in us-east-1 were querying a cache in eu-west-1 because someone misread a configuration parameter. Monitoring made the waste visible; fixing it took 10 minutes.

Compliance auditing happens continuously instead of during panic-inducing annual reviews. Your monitoring system tracks which services access databases containing protected health information. It logs every connection, measures encryption status, and verifies that only authorized applications ever query sensitive tables. When auditors ask "Can you prove that test environments never accessed production patient data?" you generate a report showing every database connection for the past year. Done.

Cloud Network Security Best Practices

Access Control and Authentication

Forget the old "castle and moat" security model where you trusted everything inside your network. Cloud environments require assuming everything is hostile until proven otherwise.

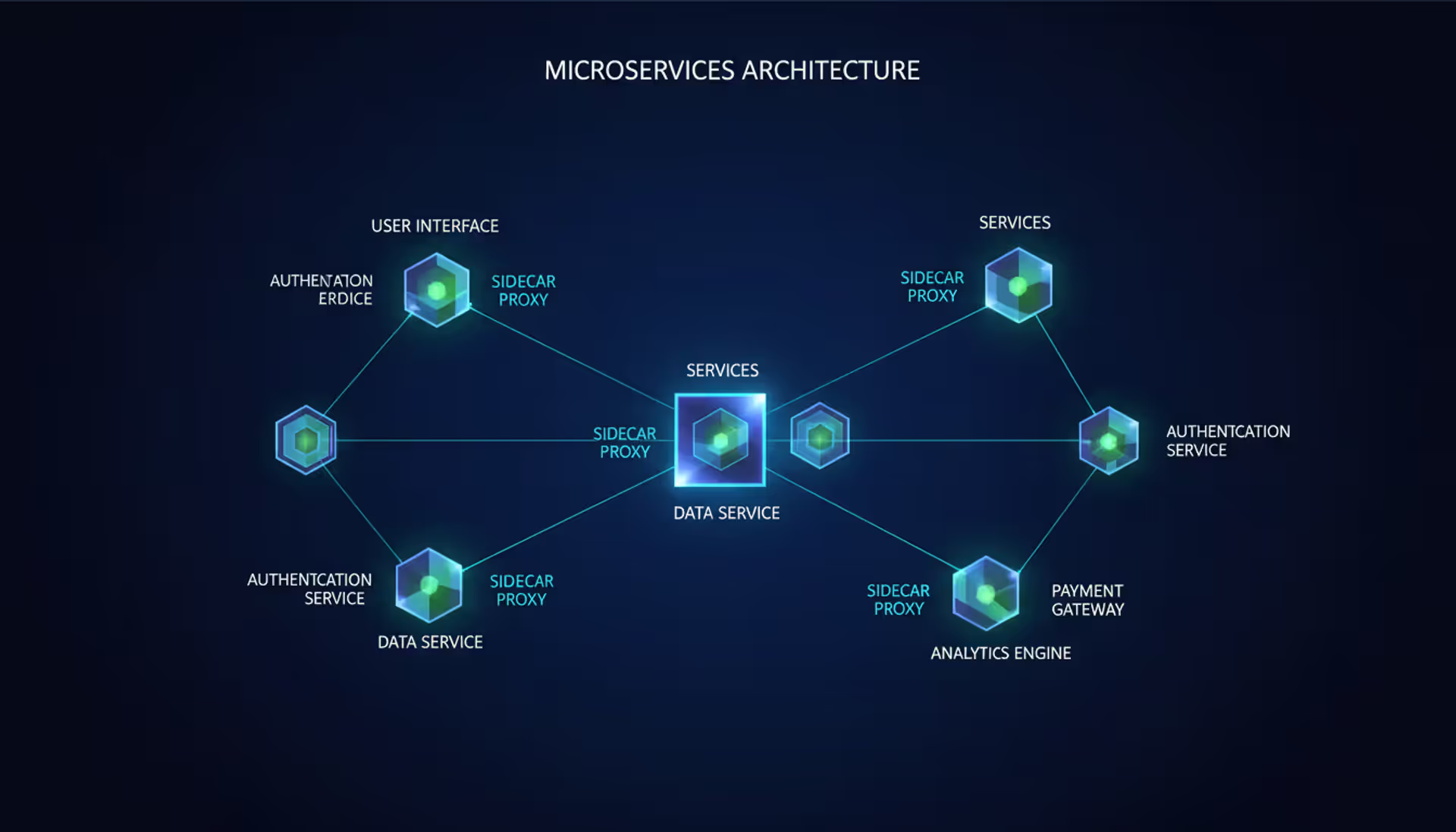

Every service-to-service connection should require authentication—not just from external users, but between your own microservices. When your checkout service calls your inventory service, both sides verify identity using short-lived credentials that expire after hours, not years. Long-term API keys stored in configuration files are security disasters waiting to happen.

Service mesh technologies like Istio, Linkerd, or AWS App Mesh enforce mutual TLS between services automatically. Your payment processor can't communicate with your inventory system because they're never supposed to interact—network policies explicitly define the allowed communication patterns. Even if an attacker compromises the payment processor, they can't pivot to other services because authentication fails.

Network segmentation in Kubernetes uses network policies that specify exactly which pods can reach which services on which ports. Default-deny everything, then explicitly permit only necessary paths. An e-commerce company implemented this after a penetration test showed that a compromised frontend container could directly access their audit logging database—a connection that should never exist. Network policies blocked that path. When they ran the pen test again, the simulated attacker got stuck in the frontend with nowhere to go.

Link network access to your central identity provider. When someone leaves the company, revoking their SSO account automatically terminates all their network access across every cloud environment without requiring manual firewall updates across 50 different AWS accounts and Azure subscriptions.

Encryption and Data Protection

TLS 1.3 should encrypt everything moving between services. Not just externally-facing APIs—internal service calls too. Network monitoring should alert immediately when any service attempts unencrypted HTTP connections or falls back to ancient protocols like TLS 1.0 that have known vulnerabilities.

A financial technology startup found through monitoring that a legacy batch process was still transferring transaction data over unencrypted HTTP to a partner API because "it's on a private network." Private networks aren't magic safety zones. They implemented TLS and discovered the "performance penalty" they'd worried about added less than 5ms to request times—completely imperceptible to users.

Protect stored data using your cloud provider's key management services. Azure Key Vault, AWS KMS, and Google Cloud KMS all handle encryption keys with hardware security modules. Your monitoring should inventory every storage volume, database, and object storage bucket, flagging any that don't enable encryption. Automated workflows can enable encryption on new resources before they store any production data.

Use dedicated network connections like AWS Direct Connect or Azure ExpressRoute to avoid sending sensitive traffic over public internet paths when connecting on-premises systems to cloud environments. The bandwidth might cost more, but attackers can't intercept traffic that never touches the public internet.

Continuous Threat Monitoring

Deploy intrusion detection that analyzes actual traffic patterns, not just firewall logs. Cloud-native IDS solutions inspect traffic mirrored from load balancers and parse VPC flow logs looking for attack signatures—SQL injection attempts, directory traversal probes, credential stuffing campaigns.

Feed threat intelligence into your monitoring. When security researchers discover a new botnet command-and-control infrastructure, those malicious IP addresses should instantly appear in your monitoring system's blocklist. If any of your instances try contacting them, you know something's compromised.

Watch for subtle indicators that humans miss but algorithms catch. A database server typically receives queries from seven application servers in predictable patterns. Suddenly it starts accepting connections from a bastion host that normally only runs SSH sessions. That's anomalous. A content management system usually makes 3-5 S3 API calls per user request. Now it's making 300. That's anomalous. These deviations from baseline behavior often signal compromise, data exfiltration, or misconfiguration.

A manufacturing company's monitoring flagged an old development server making DNS queries to recently-registered domains with random-looking names at 2 AM—textbook command-and-control beacon behavior. Investigation revealed ransomware had infected the server three days earlier and was waiting for instructions. Because monitoring caught the communication pattern before the ransomware activated, they wiped and rebuilt the server with zero production impact.

Author: Vanessa Norwood;

Source: clatsopcountygensoc.com

Choosing a Cloud Network Monitoring Solution

You've got three paths forward, each with different trade-offs between convenience, capability, and cost:

| Approach | Features | Scalability | Cost Structure | Integration Capabilities |

| Native Cloud Tools | Flow logs, basic metric collection, provider-specific dashboards; can't see across cloud boundaries | Auto-scales with your infrastructure; you don't plan capacity | Metered per data volume or bundled with cloud services; costs balloon unpredictably at scale | Works seamlessly with the same provider's services; limited connection to outside tools |

| Third-Party Platforms | Unified cross-cloud dashboards, anomaly detection with ML, custom visualization, advanced correlation | Licensing tiers based on data volume; you estimate and purchase capacity | Subscription per host or per GB ingested; predictable monthly bills | Connects to hundreds of services via pre-built integrations; sometimes lags behind new cloud features |

| Hybrid Approach | Native collection keeps data local, third-party analysis provides unified intelligence | Native collectors auto-scale, central platform requires capacity planning | Pay both native metering and platform subscription; most expensive but most capable | Maximum flexibility at the cost of integration complexity |

Native tools like CloudWatch, Azure Monitor, and Google Cloud Operations get you started immediately. No procurement process, no separate login, no new infrastructure to manage. You're already paying for them (they're bundled into cloud costs), so why not use them? They work great for single-cloud deployments where everyone needs the same basic metrics.

The limitation hits when you go multi-cloud. Now you're logging into three different consoles with three different query languages to troubleshoot one transaction that spans AWS, Azure, and GCP. You can't correlate metrics across providers. Your application teams hate it because they need five browser tabs open just to understand system health.

Third-party platforms—Datadog, New Relic, Dynatrace, Splunk—consolidate everything into single dashboards. One query language, one alerting system, one place to investigate incidents. They add sophisticated features: distributed tracing that follows a request across cloud boundaries, AI-powered anomaly detection that spots patterns humans miss, customizable dashboards that show business metrics alongside infrastructure telemetry.

The downsides? Additional subscription costs, data egress charges from cloud providers (you pay to export metrics to external systems), and occasional feature lag when cloud providers launch new services that third-party tools don't support yet.

Hybrid setups try capturing the best of both. Use native collection mechanisms (they're efficient and included in your cloud spend), but export high-value data to centralized platforms for correlation and analysis. Process high-volume logs locally to avoid data transfer costs, then send only anomalies or aggregated summaries to your central system for long-term analysis.

What matters when choosing?

Query flexibility during incident response. Pre-built dashboards help for routine monitoring, but when systems break in novel ways, you need arbitrary metric correlation. Can you group by custom tags? Filter across multiple dimensions simultaneously? Zoom into specific time windows? Static dashboards become useless when troubleshooting complex failures.

Alert intelligence that reduces noise. Simple threshold alerts generate floods of notifications. Better systems understand temporal patterns (high CPU at 3 PM is normal; at 3 AM is suspicious), group related alerts together, automatically suppress notifications during maintenance windows, and incorporate multiple signals before alerting.

Retention that balances compliance against costs. Detailed flow logs consume massive storage. Keep raw data for 7 days, aggregated hourly summaries for 90 days, and daily rollups for 2 years. This tiered approach satisfies audit requirements without paying to store terabytes of second-by-second metrics forever.

API access for automation. Your monitoring system should trigger actions in PagerDuty, Slack, ServiceNow, Terraform, or Jenkins without manual steps. When monitoring detects problems, it should automatically open tickets, notify on-call engineers, or trigger remediation workflows.

Common Cloud Network Monitoring Challenges

Multi-cloud visibility creates the most immediate headache. Your web application runs on AWS, analytics pipeline on Google Cloud, and databases on Azure. Each works fine independently, but troubleshooting cross-cloud transactions becomes archaeological excavation. You're comparing timestamps across three different logging systems, converting between provider-specific metric names, and manually correlating events with different granularity.

Solving this requires standardized tagging across all clouds—but each provider implements tags differently. AWS limits keys to 127 characters and values to 256. Azure allows 512 characters for both. Google Cloud uses labels instead of tags with yet another set of constraints. Your monitoring platform needs to normalize these differences while respecting each provider's quirks.

Alert fatigue destroys monitoring effectiveness faster than missing features. A logistics company initially configured monitoring to alert on any metric deviation—CPU above 70%, latency above 100ms, error rate above 0.1%. Within a week, engineers were receiving 500+ alerts daily. Most meant nothing. A spike in error rate during a deploy was expected. High CPU at noon matched traffic patterns. Eventually teams just ignored all alerts, including the few that actually mattered.

The fix required rethinking alert logic. Alerts now incorporate multiple signals—don't alert on high CPU unless latency also rises. Use dynamic baselines that account for daily and weekly patterns instead of static thresholds. Group related alerts into single notifications rather than spamming separate messages. Suppress notifications during known maintenance windows. After restructuring, that same company gets 15-20 meaningful alerts weekly—a manageable volume where each notification receives proper attention.

Data volume scales faster than most teams anticipate. A mid-sized company generates 2-4 TB of flow logs daily. Storing this much data for compliance periods (often 90+ days) requires careful architecture. Stream processing aggregates high-volume metrics before storage. Tiered retention policies age out detailed logs while preserving summaries. Sampling techniques capture representative traffic without processing every single packet—monitor 1 in every 100 connections for most traffic, but capture 100% of traffic to sensitive services.

Skills gaps slow adoption more than technology limitations. Cloud network technology evolves constantly—new features ship monthly. Expertise in Kubernetes networking, service meshes, and cloud-native observability remains scarce. Teams trained on traditional network monitoring struggle adapting mental models to ephemeral, software-defined environments where resources constantly appear and disappear.

Some organizations address this through intensive training programs. Others hire cloud-native specialists to build initial implementations. Managed service providers offer another path—outsourcing monitoring operations to teams who specialize in multi-cloud observability.

Author: Vanessa Norwood;

Source: clatsopcountygensoc.com

Implementing Cloud Network Automation for Monitoring

Monitoring that just shows dashboards wastes most of its potential. The real power comes when monitoring data drives automatic responses.

Auto-remediation closes the loop between detection and resolution. Load balancer health checks fail on web-server-37? Monitoring detects the failure, triggers automation that terminates the unhealthy instance and launches a replacement, all within 90 seconds. Nobody pages an engineer at 2 AM because the system heals itself.

Auto-scaling uses monitoring metrics to adjust capacity dynamically. An online education platform monitors lesson enrollment patterns. When monitoring shows more than 5,000 students attempting to join concurrent video sessions, automation spins up 20 additional transcoding instances. When participation drops below 2,000, it scales down to 5 instances. This responsiveness keeps user experience smooth while minimizing costs during off-peak hours.

Deployment pipelines integrate monitoring feedback. Before promoting code to production, automated tests verify that new versions don't degrade network performance. If monitoring detects error rates above 0.5% or P95 latency increases more than 20% after deployment, automation automatically rolls back to the previous stable version. This fail-safe prevents bad deploys from affecting customers.

Infrastructure-as-code embeds monitoring alongside resource definitions. Developers define a new microservice using Terraform. The same Terraform code that provisions the EC2 instances also creates CloudWatch dashboards, configures alerts, sets up log forwarding, and establishes network policies. Monitoring configuration deploys automatically—no separate manual setup process that might be forgotten.

Chaos engineering validates resilience using monitoring as the measurement system. Netflix's Chaos Monkey randomly terminates instances in production. Monitoring confirms that applications handle failures gracefully—traffic shifts to healthy instances, latency stays acceptable, error rates remain low. When monitoring shows problems during chaos experiments, teams fix weaknesses before they cause real outages.

Security automation responds to threats detected through monitoring. When network analysis identifies an instance communicating with known malware infrastructure, automation immediately quarantines that instance by modifying its security groups to block all network traffic. The instance stays running (preserving evidence for forensics) but can no longer spread infection or exfiltrate data. Security teams investigate at their pace without racing against active attacks.

Reactive monitoring is dead.Modern infrastructure changes too fast for humans to manually respond to every alert. The only sustainable approach combines proactive monitoring with intelligent automation—detect anomalies instantly, then trigger automated responses that resolve common issues without human intervention. Reserve human expertise for the truly complex problems that automation can't handle yet

— Maria Chen

FAQ

Cloud network monitoring separates companies that thrive in distributed environments from those that struggle. Without visibility into your infrastructure's network layer, you're flying blind—troubleshooting becomes guesswork, optimization relies on intuition instead of data, and security threats hide until they cause damage.

Effective monitoring isn't about deploying the fanciest tools or collecting the most metrics. It's about gaining actionable insight into what's actually happening across your cloud infrastructure. That means instrumenting the right components, tracking meaningful metrics, alerting intelligently on genuine problems, and automating responses to common issues.

The monitoring approaches that worked in traditional data centers fail spectacularly in cloud environments. Resources come and go too quickly for static configuration. Multi-cloud architectures demand unified visibility that spans provider boundaries. The sheer volume of telemetry requires streaming analytics and intelligent sampling rather than trying to store everything.

Start with clear objectives—what problems are you trying to solve? Reduce incident response time? Control cloud costs? Improve security posture? Your monitoring strategy should directly support these goals, not just collect metrics because you can. Implement automation that transforms monitoring from passive observation into active management. And remember that monitoring is never "done"—it evolves continuously as your infrastructure changes and you learn which signals actually predict problems.

Organizations that master cloud network monitoring gain competitive advantages through faster troubleshooting, proactive optimization, and data-driven infrastructure decisions. As workloads continue migrating toward serverless computing, edge infrastructure, and increasingly complex multi-cloud architectures, these monitoring capabilities become even more critical. Investments you make today in robust monitoring foundations prepare your team for tomorrow's distributed computing challenges.

Related Stories

Read more

Read more

The content on this website is provided for general informational and educational purposes related to cloud computing, network infrastructure, and IT solutions. It is not intended to constitute professional technical, engineering, or consulting advice.

All information, tools, and explanations presented on this website are for general reference only. Network environments, system configurations, and business requirements may vary, and results may differ depending on specific use cases and infrastructure.

This website is not responsible for any errors or omissions, or for actions taken based on the information, tools, or technical recommendations presented.