Network Analyzer Guide for IT Professionals

Last Tuesday, a Fortune 500 retailer I consult for lost $180,000 in four hours. Their payment gateway went down during peak shopping hours. The culprit? A misconfigured VLAN that nobody spotted until customers started complaining about failed transactions.

Here's the frustrating part: the problem had been building for three days. Packet retransmissions were climbing steadily. Response times were creeping upward. All the warning signs were there—if anyone had been looking at the actual network traffic.

Most IT teams wait until something breaks spectacularly before investigating. They'll check if devices are "up," maybe glance at bandwidth graphs, then shrug when everything looks normal. Meanwhile, the network is screaming for help in a language they're not listening to.

That's where network analyzers come in. These tools read the network's actual conversations—every request, every response, every error message buried in the traffic flow.

What Is a Network Analyzer and How Does It Work

Think of your network as a busy highway system. Traditional monitoring tools tell you whether roads are open or closed. Network analyzers actually record every vehicle that passes by, what they're carrying, where they're going, and whether they're obeying traffic laws.

At its core, this technology intercepts data packets as they traverse your infrastructure. Your network interface card normally ignores traffic not addressed to it—like tuning out conversations at a crowded party. Network analyzers flip a switch called promiscuous mode that makes the card eavesdrop on everything within earshot.

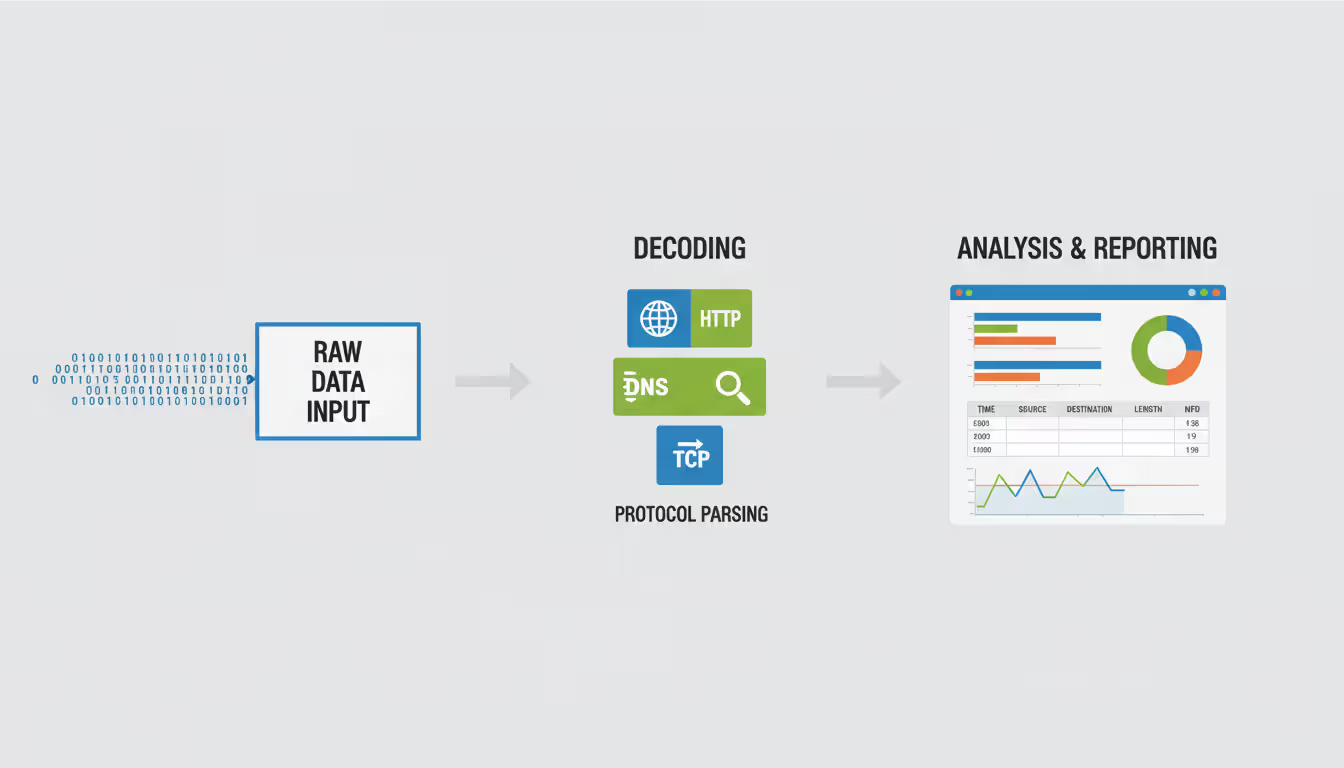

The process happens in three distinct phases. First comes collection: the tool grabs raw binary data streaming past at millions of packets per second. Next comes translation: those ones and zeros get decoded into recognizable protocols—HTTP requests, DNS lookups, database queries, whatever applications are actually saying to each other. Finally comes presentation: all that information gets organized into something humans can actually use—sortable tables, trend graphs, alert conditions.
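To make the three phases concrete, here is a minimal Python sketch of the translation step. It assumes a plain Ethernet frame carrying IPv4 and TCP with no VLAN tag, and it uses a hand-built sample frame in place of a live capture. The function name `decode_frame` and the sample addresses are illustrative, not part of any analyzer's API.

```python
import struct

def decode_frame(frame: bytes) -> dict:
    """Translate raw Ethernet/IPv4/TCP bytes into named fields."""
    # Ethernet header: destination MAC, source MAC, EtherType (14 bytes).
    dst_mac, src_mac, ethertype = struct.unpack("!6s6sH", frame[:14])
    if ethertype != 0x0800:           # this sketch only handles IPv4
        return {"ethertype": hex(ethertype)}
    ip = frame[14:]
    ihl = (ip[0] & 0x0F) * 4          # IPv4 header length in bytes
    protocol = ip[9]
    fields = {
        "src": ".".join(str(b) for b in ip[12:16]),
        "dst": ".".join(str(b) for b in ip[16:20]),
        "protocol": protocol,
    }
    if protocol == 6:                 # TCP: ports are the first four bytes
        sport, dport = struct.unpack("!HH", ip[ihl:ihl + 4])
        fields.update(sport=sport, dport=dport)
    return fields

# Collection stand-in: a hand-built frame (hypothetical addresses).
sample = (
    bytes.fromhex("aabbccddeeff" "112233445566" "0800")           # Ethernet
    + bytes.fromhex("4500002800004000400600000a3201640a320105")   # IPv4
    + struct.pack("!HH", 51000, 3306) + bytes(16)                 # TCP
)

decoded = decode_frame(sample)

# Presentation: one readable summary line per packet.
print("{src}:{sport} -> {dst}:{dport}".format(**decoded))
```

Real analyzers do the same thing with thousands of protocol decoders layered on top of each other; the principle of slicing bytes into named fields is unchanged.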

Author: Derek Hollowell;

Source: clatsopcountygensoc.com

Here's where things split into two camps.

Hardware-based units are specialized computers built for one job. They plug directly into your switches using mirror ports (SPAN) or inline monitoring devices (TAPs). Companies like VIAVI and Keysight manufacture these purpose-built boxes with custom chips that can process 100 gigabits per second without breaking a sweat. An enterprise data center might deploy one between core routers to capture every packet flowing north-south, guaranteeing zero packet loss even during traffic spikes.

Software versions run on regular computers: your laptop, a server, a virtual machine. Tools like Wireshark transform standard hardware into analysis workstations. You're limited by your computer's processing power and network card capabilities, but you gain flexibility and lower upfront costs. A network engineer might install Wireshark on their Windows laptop for troubleshooting user complaints, or deploy SolarWinds on a dedicated Windows Server host for continuous monitoring.

Real-world applications vary dramatically by industry. I worked with a hospital where surgeons control robotic equipment over the network. They need absolute certainty that command packets arrive within 50 milliseconds—every single time. Their network analyzer runs 24/7, triggering alarms if latency spikes above 30ms. Meanwhile, a software company I advise uses analyzers mainly for debugging API performance issues. When mobile apps start timing out, they capture the HTTPS handshakes to see whether problems originate in their application servers, CDN, or ISP connections.

Types of Network Analyzer Tools

The market offers specialized tools for different analysis challenges. Picking the wrong type is like bringing a stethoscope to diagnose a broken bone—technically medical equipment, but completely useless for the situation.

Hardware Network Analyzers

Dedicated appliances come from manufacturers who've spent decades perfecting high-speed packet processing. We're talking about devices with custom silicon chips (ASICs) that bypass normal computer operating systems entirely.

Walk into a telecommunications company's network operations center, and you'll find rack-mounted analyzers with blinking lights monitoring 40 Gbps fiber connections. These units might cost $80,000 apiece, but they deliver capabilities software can't match: nanosecond-precision timestamps, guaranteed full-duplex capture at maximum line rate, and physical layer measurements that detect problems in the actual electrical signals.

Fluke Networks dominates the portable hardware market. Their handheld units fit in a backpack and let field technicians test everything from office Wi-Fi to industrial Ethernet in manufacturing plants. You'll see them at construction sites where fiber optic cables are being installed—technicians use them to verify signal quality before signing off on installations.

The downside? Hardware analyzers are single-purpose investments. That $50,000 device that perfectly captures 10 Gbps copper Ethernet becomes obsolete when you upgrade to 25 Gbps fiber. You can't just download a software update—you need different hardware.

Software-Based Network Analyzers

Software tools transform general-purpose computers into analysis platforms. The heavyweight champion here is Wireshark—an open-source application that security researchers, network engineers, and developers worldwide use for free.

I've watched junior technicians master Wireshark basics in a week. Fire it up, start a capture, and within seconds you're viewing live traffic. Apply the display filter http contains "password" and you'll immediately see if any applications are sending credentials over unencrypted HTTP (happens more often than you'd think). The learning curve gets steep when you're troubleshooting complex issues, but for everyday problems, it's remarkably accessible.

Commercial software adds capabilities that matter in enterprise environments. PRTG, for instance, deploys remote probes across branch offices that feed data back to a central console. Network Performance Monitor from SolarWinds correlates packet captures with device configuration changes—showing you that application slowdowns started exactly when someone modified a QoS policy.

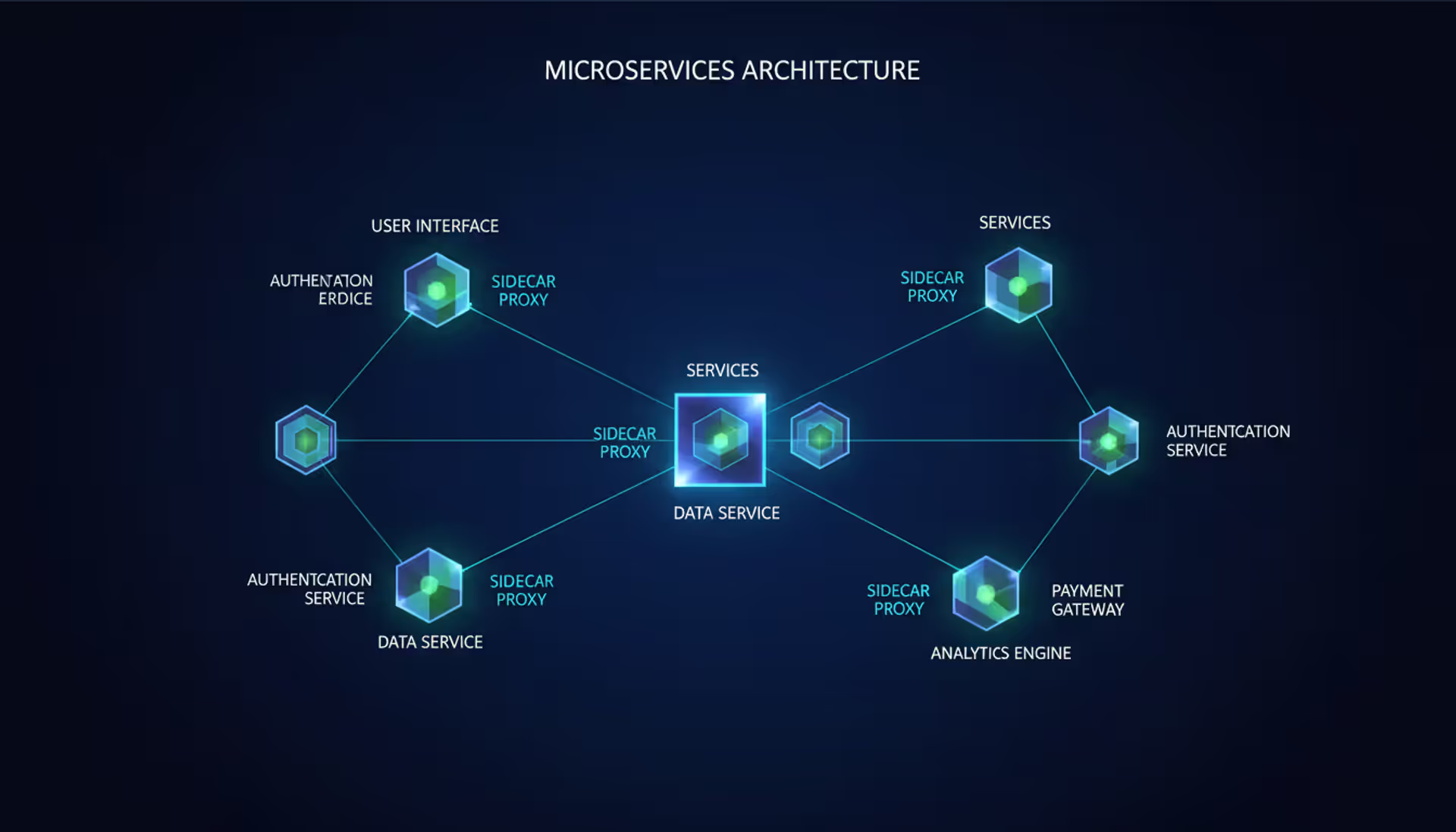

Then there's the cloud-native generation. As companies migrate to AWS and Azure, traditional network analyzers can't easily monitor traffic between virtual machines. Cloud-specific tools like VPC Flow Logs or third-party platforms such as Kentik integrate directly with cloud provider APIs, analyzing north-south traffic (to the internet) and east-west traffic (between cloud services) without needing agents on every VM.

Protocol analyzers represent a specialized breed focused on specific technologies. Telecom companies use SS7 analyzers to debug cellular network signaling. Manufacturing plants deploy OPC-UA analyzers to troubleshoot communication between industrial robots and PLCs. VoIP-specific analyzers like VQManager decode SIP call setup sequences and measure jitter on RTP voice streams—capabilities you won't find in general-purpose tools.

Wireless analysis requires fundamentally different approaches. Standard network analyzers see only the traffic visible to an adapter that has already joined your Wi-Fi network. True wireless analyzers hop between channels, detect rogue access points broadcasting on nearby frequencies, and measure RF signal strength across physical locations. I recently used one to discover that a company's conference room Wi-Fi problems were caused by a neighboring tenant's microwave oven radiating interference on channel 11.

Key Features to Look for in Network Analyzer Software

Shopping for network analyzer software feels overwhelming when vendors list fifty features you've never heard of. Let me cut through the marketing fluff and focus on what actually matters in daily operations.

Capture filtering prevents drowning in irrelevant data. Without filters, capturing traffic on a busy corporate network generates gigabytes per minute—mostly HTTPS encryption you can't decode anyway. Berkeley Packet Filter (BPF) syntax lets you specify exactly what to capture: "tcp port 3306 and host 10.50.1.100" grabs only MySQL traffic to your database server. Pre-capture filtering happens at the kernel level, meaning unwanted packets never reach the application—saving CPU cycles and storage space.
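As a rough illustration, the filter above can be composed programmatically and handed to a capture tool. This sketch only builds the filter string and a tcpdump argument list; it does not run anything. The interface name `eth0`, the output file name, and both helper functions are assumptions made for the example.

```python
import shlex

def bpf_for_service(host: str, port: int, proto: str = "tcp") -> str:
    """Compose a BPF capture filter for one host and one service port."""
    if proto not in ("tcp", "udp"):
        raise ValueError("proto must be 'tcp' or 'udp'")
    if not 0 < port < 65536:
        raise ValueError("port out of range")
    return f"{proto} port {port} and host {host}"

def tcpdump_command(interface: str, outfile: str, bpf: str) -> list:
    """Build (but do not run) a tcpdump invocation that applies the filter."""
    # -i selects the interface, -w writes raw packets to a file;
    # the filter expression is passed as the final argument.
    return ["tcpdump", "-i", interface, "-w", outfile, bpf]

mysql_filter = bpf_for_service("10.50.1.100", 3306)
cmd = tcpdump_command("eth0", "mysql.pcap", mysql_filter)
print(shlex.join(cmd))
```

Generating filters from a small helper like this keeps them consistent across a team and avoids typos in expressions that are otherwise retyped by hand.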

One project I worked on involved tracking down a ransomware infection. We captured only DNS queries containing long random-looking subdomains, which is how certain malware exfiltrates data. That single filter reduced a 500 GB capture to 80 MB of highly relevant traffic showing exactly which workstation was compromised.
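One common way to spot "long random-looking" subdomains is to score each query name by length and character entropy. The sketch below is a simplified heuristic, not the exact filter used on that project; the thresholds (30 characters, 3.5 bits per character) and the sample domain names are assumptions you would tune against your own traffic.

```python
import math
from collections import Counter

def shannon_entropy(text: str) -> float:
    """Bits of entropy per character; higher means more random-looking."""
    if not text:
        return 0.0
    total = len(text)
    return -sum((n / total) * math.log2(n / total)
                for n in Counter(text).values())

def looks_like_tunneling(qname: str, min_len: int = 30,
                         min_entropy: float = 3.5) -> bool:
    """Flag names whose longest label is both long and high-entropy."""
    labels = qname.rstrip(".").split(".")
    longest = max(labels, key=len)
    return len(longest) >= min_len and shannon_entropy(longest) >= min_entropy

# Hypothetical query names for illustration.
queries = [
    "www.example.com",
    "a9f3k2l0q8z7x1c5v4b6n3m2p0o9i8u7.evil.example",
]
suspicious = [q for q in queries if looks_like_tunneling(q)]
print(suspicious)
```

A heuristic like this produces false positives (CDN hostnames and some telemetry endpoints also look random), so treat matches as leads to investigate rather than verdicts.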

Real-time alerting catches problems before users notice. Threshold alerts are simple but effective: "Notify me when HTTP response time exceeds 2 seconds" or "Alert if any host generates more than 1000 DNS queries per minute" (possible DNS tunneling). Better platforms use statistical baselines—they learn that your e-commerce site normally serves 5,000 transactions per hour, and anything below 4,000 triggers investigation even if no hard threshold breaks.
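The difference between the two alert styles is easy to see in code. This is a minimal sketch: the three-sigma rule is one simple choice of statistical baseline, not what any particular product uses, and the hourly transaction counts are invented sample data.

```python
from statistics import mean, stdev

def threshold_alert(value: float, limit: float) -> bool:
    """Hard threshold: fire when the measurement exceeds a fixed limit."""
    return value > limit

def baseline_alert(history, current: float, sigmas: float = 3.0) -> bool:
    """Statistical baseline: fire when the current value sits more than
    `sigmas` standard deviations away from the learned mean."""
    mu, sd = mean(history), stdev(history)
    return abs(current - mu) > sigmas * sd

# Invented history: an e-commerce site serving about 5,000 transactions/hour.
hourly_transactions = [5100, 4950, 5020, 4980, 5060, 4990, 5010, 4940]

print(threshold_alert(2.4, limit=2.0))            # HTTP response time, seconds
print(baseline_alert(hourly_transactions, 3900))  # unusually quiet hour
print(baseline_alert(hourly_transactions, 5030))  # ordinary hour
```

Note that the baseline check fires on a drop in traffic, which a simple "greater than" threshold would never catch.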

I configure alerts differently for different teams. Network operations gets alerts about packet loss and high retransmission rates. Security operations gets alerts about port scans, unusual protocols, and connections to suspicious IP addresses. Application teams get alerts when their specific services exhibit problems—database query times, API response codes, authentication failures.

Protocol support depth determines what you can troubleshoot. Basic analyzers decode the big names: HTTP, DNS, DHCP. But what happens when your CRM application uses Oracle TNS protocol, your backup system uses proprietary replication, and your POS terminals use obscure retail protocols?

Wireshark includes dissectors for over 3,000 protocols, updated constantly by contributors worldwide. I've personally written custom dissectors for internal applications using Lua scripting—not trivial, but possible when you need it. Commercial vendors sometimes offer protocol packs for specialized industries: medical imaging (DICOM), financial messaging (FIX protocol), industrial automation (Profibus).

Reporting and retention capabilities support compliance and capacity planning. HIPAA requires healthcare organizations to retain required security documentation for six years, and many apply the same retention period to the network logs that support it. Financial firms need documentation showing proper network segmentation between trading systems and general corporate networks. Beyond compliance, historical data reveals growth trends, such as a backup window that has grown from 4 hours to 7 hours over six months, signaling that it is time to upgrade storage infrastructure.

Cloud-based analyzers handle retention automatically, scaling storage as needed. On-premises deployments require planning: a 10 Gbps link at 30% utilization carries about 3 Gbps, which works out to roughly 32 TB per day of full packet captures. Summary flow records for the same link are orders of magnitude smaller, with the exact volume depending on the number of flows and the export settings.
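Retention estimates are worth checking with a few lines of arithmetic before ordering storage. This sketch assumes the link runs at a steady average utilization and ignores per-packet capture file overhead, so treat the result as a lower bound.

```python
def capture_bytes_per_day(link_gbps: float, utilization: float) -> float:
    """Bytes of full packet capture generated per day on one link."""
    bits_per_second = link_gbps * 1e9 * utilization
    return bits_per_second / 8 * 86_400   # 86,400 seconds in a day

daily = capture_bytes_per_day(link_gbps=10, utilization=0.30)
print(f"{daily / 1e12:.1f} TB per day")
print(f"{daily * 30 / 1e12:.0f} TB per 30-day retention window")
```

Running the same function with your own link speeds and measured utilization gives a quick first-pass storage budget.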

Usability varies wildly between tools. tcpdump offers raw power through command-line syntax but assumes you memorize arcane filter expressions. Wireshark provides a graphical interface with dropdown menus, but you still need TCP/IP knowledge to interpret results. Enterprise platforms like ExtraHop or Riverbed offer guided troubleshooting workflows: "Click here to see slow applications, click here to identify top talkers, click here to export a report for management."

I recommend matching interface complexity to user expertise. Security analysts comfortable with packet-level detail can handle Wireshark. Help desk staff investigating "the internet is slow" complaints need simplified dashboards showing obvious problems in plain English.

Integration options extend value beyond standalone analysis. REST APIs export data to Splunk, ServiceNow, or Slack. Webhooks trigger automated responses—blocking an IP address in your firewall when detecting port scanning, or opening a trouble ticket when voice quality degrades below acceptable thresholds. The most powerful deployments combine network analyzers with SIEM platforms, configuration management databases, and orchestration tools for comprehensive visibility and response.

How Network Analyzer Calibration Works

Calibration means completely different things depending on whether you're using hardware RF analyzers or software packet analyzers—a distinction that confuses many IT professionals.

Vector network analyzers (VNAs) used by RF engineers require meticulous calibration with physical reference standards. These devices measure electromagnetic wave behavior in antennas, amplifiers, and transmission lines. Calibration involves connecting precision components—open circuits, short circuits, matched 50-ohm loads—to teach the analyzer what "perfect" measurements look like.

The process corrects for systematic errors introduced by test cables, connectors, and the analyzer's own circuitry. A full two-port calibration typically measures open, short, and load standards on each port plus a through connection, then solves a twelve-term error model whose coefficients the analyzer applies mathematically to subsequent measurements. Without calibration, an antenna might appear to have acceptable return loss when it actually has impedance mismatches causing packet errors on wireless links.
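The math behind error correction is easier to follow in the simpler one-port case, which uses three error terms instead of twelve. The sketch below assumes ideal standards (open reflects +1, short reflects -1, load reflects 0); real calibration kits ship with non-ideal models for each standard. All function and variable names are illustrative.

```python
def solve_error_terms(m_open: complex, m_short: complex, m_load: complex):
    """Derive directivity (e00), source match (e11), and reflection
    tracking (er) from raw readings of three ideal standards."""
    e00 = m_load                 # an ideal load reflects nothing
    a = m_open - e00             # ideal open:  true reflection = +1
    b = m_short - e00            # ideal short: true reflection = -1
    e11 = (a + b) / (a - b)
    er = a * (1 - e11)
    return e00, e11, er

def correct(measured: complex, e00: complex, e11: complex, er: complex) -> complex:
    """Remove the systematic errors from one raw reflection reading."""
    x = measured - e00
    return x / (er + e11 * x)

def raw_reading(actual: complex, e00: complex, e11: complex, er: complex) -> complex:
    """Simulate what an uncalibrated analyzer would display."""
    return e00 + er * actual / (1 - e11 * actual)

# Made-up systematic errors for a hypothetical test setup.
true_terms = (0.05 + 0.02j, 0.10 - 0.05j, 0.90 + 0.10j)
terms = solve_error_terms(
    raw_reading(1, *true_terms),    # open
    raw_reading(-1, *true_terms),   # short
    raw_reading(0, *true_terms),    # load
)

antenna = 0.30 + 0.20j              # the device's true reflection coefficient
recovered = correct(raw_reading(antenna, *true_terms), *terms)
print(abs(recovered - antenna) < 1e-12)
```

The two-port case follows the same idea with more standards and more unknowns, which is why the instrument's firmware handles it rather than the operator.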

Aerospace contractors calibrate their VNAs monthly with standards traceable to NIST (National Institute of Standards and Technology). A defense contractor I worked with had calibration records dating back ten years—mandatory for proving that every component shipped to military aircraft met exact specifications when tested.

If your VNA hasn't been calibrated in a year, measurements might drift by several dB—enough to pass defective components or reject good ones. Environmental factors accelerate drift: temperature extremes, humidity, physical shocks from transportation. Field equipment used in cell tower installations often needs quarterly calibration versus annual cycles for lab instruments.

But here's what trips people up: software network analyzers capturing data packets don't have traditional calibration procedures. They're not measuring physical phenomena with potential sensor drift. They're recording digital data that's either captured correctly or not.

What software analyzers do need is accurate time synchronization and performance baselining.

Time synchronization ensures that distributed collectors timestamp packets consistently. Imagine troubleshooting a database timeout by comparing application server logs, database server logs, and captured network traffic—if their clocks are skewed by even 100 milliseconds, you can't determine the true sequence of events.

NTP (Network Time Protocol) synchronization is sufficient for most business applications, keeping clocks within tens of milliseconds. Financial trading platforms use PTP (Precision Time Protocol) for microsecond accuracy because regulatory compliance requires proving the exact sequence of transactions. I've seen regulatory audits where firms were fined because their network analyzers couldn't precisely timestamp whether a trade order arrived before or after a market data update.
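The core of NTP-style synchronization is a four-timestamp exchange, and the same arithmetic can be used to correct capture timestamps after the fact. This sketch assumes the network delay is symmetric in both directions, which is the standard simplification; the sample timestamps are invented.

```python
def clock_offset(t0: float, t1: float, t2: float, t3: float):
    """Return (offset, round_trip_delay) in seconds.

    t0: client sends request      (client clock)
    t1: server receives request   (server clock)
    t2: server sends reply        (server clock)
    t3: client receives reply     (client clock)
    """
    offset = ((t1 - t0) + (t2 - t3)) / 2
    delay = (t3 - t0) - (t2 - t1)
    return offset, delay

def align(capture_timestamps, offset: float):
    """Shift a collector's packet timestamps onto the reference clock."""
    return [ts + offset for ts in capture_timestamps]

# Invented exchange: collector clock runs 120 ms behind the reference.
offset, delay = clock_offset(100.000, 100.130, 100.132, 100.022)
print(f"offset={offset * 1000:.1f} ms, round trip={delay * 1000:.1f} ms")
```

An offset of this size is larger than many application timeouts you would be trying to sequence, which is why unsynchronized collectors make multi-point captures hard to trust.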

Baseline establishment represents the software equivalent of calibration—defining "normal" so you can detect abnormal. Capture week-long samples during known-good operations: typical bandwidth consumption, average response times, normal protocol distributions, expected error rates.

A manufacturing client baselines their industrial network every quarter because production schedules change seasonally. Summer air conditioning increases electrical noise that slightly elevates packet error rates. Without updated baselines, their analyzer would constantly alert about conditions that are actually normal for that time of year.

Application deployments require new baselines. When that same manufacturer installed a new MES (Manufacturing Execution System), we captured two weeks of post-deployment traffic to establish the new normal before enabling alerting. Otherwise, the analyzer would flag perfectly healthy MES traffic as anomalous simply because it differed from the pre-deployment baseline.

Choosing the Right Network Analyzer for Your Business

I've helped dozens of organizations select network analyzers, and the biggest mistake is immediately jumping to feature comparisons. Start by honestly assessing your environment and capabilities.

Network scale determines architectural requirements. A dental office with 15 computers and two printers? Wireshark on the IT manager's laptop handles everything. Mid-sized manufacturing company with five plants in different states? You need centralized collection, probably agent-based or flow-based analysis since full packet capture across WAN links creates bandwidth problems.

Enterprise environments with thousands of endpoints, multiple data centers, and cloud infrastructure demand distributed architecture. One retail client has collectors in each store forwarding flow summaries to regional data centers, which aggregate to corporate headquarters. They capture full packets only when investigating specific issues, not continuously.

Budget constraints force realistic trade-offs. Free tools work remarkably well if you have skilled staff. I know security researchers who exclusively use Wireshark, tcpdump, and tshark scripts—zero software costs, but they're experts who've spent years mastering those tools.

Entry-level commercial platforms start around $3,000 for a perpetual license or $600 per year on subscription. You're paying for easier interfaces, automated reporting, and vendor support. At the other extreme, enterprise deals can exceed $100,000 annually once you factor in unlimited collection points, advanced analytics, professional services for deployment, and 24/7 support with four-hour response SLAs.

Hardware analyzers represent significant capital expenditure. A portable Fluke LinkRunner for basic troubleshooting runs $3,000. A high-end VIAVI Observer platform with 100 Gbps capture capability? North of $150,000. Most organizations rent hardware analyzers for specific projects rather than purchasing.

Existing staff expertise matters more than vendor marketing materials. I've watched companies buy sophisticated platforms with machine learning anomaly detection, then never use those features because nobody understood how to interpret the results. Meanwhile, a scrappy startup I advise does amazing work with Wireshark because their network admin spent six months learning it inside-out.

Assess whether you're complementing experienced engineers or empowering general IT staff. Experienced engineers want flexibility and depth—they'll tolerate steeper learning curves for more control. Generalists need guided workflows and contextual help. Some vendors offer tiered interfaces: simplified dashboards for Tier 1 support, detailed analysis views for specialists.

Training availability significantly impacts tool adoption. Wireshark has thousands of free tutorials, YouTube videos, and community forums. Niche commercial tools might offer one annual training class that costs $2,000 per attendee. Factor training into total cost calculations.

Scalability plans prevent expensive mid-flight tool changes. That perfect solution for your current 1 Gbps internet connection becomes a bottleneck when you upgrade to 10 Gbps next year. Cloud-native platforms scale horizontally—add more collectors, more storage, more processing power—without architectural changes.

Flow-based analysis (NetFlow, sFlow) scales better than full packet capture for monitoring large networks. You lose granular detail but gain manageable data volumes. One ISP I consulted for monitors 50,000 customer circuits using flow data, falling back to targeted packet captures only when investigating specific customer complaints.
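To show what "losing granular detail" means in practice, here is a small sketch that collapses individual packets into flow records keyed by the usual five-tuple. It is a conceptual illustration only; real NetFlow and sFlow exporters add timeouts, sampling, and many more fields. The `Packet` type and sample values are invented.

```python
from collections import defaultdict
from typing import NamedTuple

class Packet(NamedTuple):
    ts: float
    src: str
    dst: str
    sport: int
    dport: int
    proto: str
    length: int

def aggregate_flows(packets):
    """Summarize packets into one record per (src, dst, sport, dport, proto)."""
    flows = defaultdict(lambda: {"packets": 0, "bytes": 0,
                                 "first": None, "last": None})
    for p in packets:
        f = flows[(p.src, p.dst, p.sport, p.dport, p.proto)]
        f["packets"] += 1
        f["bytes"] += p.length
        f["first"] = p.ts if f["first"] is None else min(f["first"], p.ts)
        f["last"] = p.ts if f["last"] is None else max(f["last"], p.ts)
    return dict(flows)

packets = [
    Packet(0.00, "10.0.0.5", "10.0.1.9", 51000, 443, "tcp", 1500),
    Packet(0.02, "10.0.0.5", "10.0.1.9", 51000, 443, "tcp", 900),
    Packet(0.05, "10.0.0.7", "10.0.1.53", 40000, 53, "udp", 80),
]
flows = aggregate_flows(packets)
for key, record in flows.items():
    print(key, record)
```

Three packets become two records here; on a production link, millions of packets collapse into thousands of records, which is where the storage savings come from. The payloads are gone, though, so flow data can tell you who talked to whom and how much, not what was said.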

Specific use cases determine must-have features. Security-focused analysis requires SSL/TLS decryption (complex to implement legally and technically), integration with threat intelligence feeds, and detection of encrypted tunneling protocols. Performance troubleshooting emphasizes transaction tracking across multi-tier applications, database query analysis, and synthetic transaction testing.

Regulatory compliance drives requirements in healthcare, finance, and government. You might need tamper-proof audit logs, role-based access controls, and automated reports proving network segmentation between different security zones.

Vendor viability and support quality affect long-term success. Established vendors provide guaranteed response times, regular updates, and professional services for complex deployments. Smaller vendors might offer innovative features but risk being acquired or ceasing operations. Widely used open-source tools are unlikely to disappear, but they rely on community support rather than contractual guarantees.

I recommend proof-of-concept trials before purchasing. Most vendors offer 30-day evaluations. Deploy on a representative network segment, test against actual problems you're experiencing, involve the people who'll use it daily.

Here's what nobody tells you in vendor demos: the fancy analyzer with AI-powered insights is worthless if your team doesn't understand basic packet analysis first. I've rescued three projects where companies bought enterprise platforms, couldn't get value, then blamed the tools. The real problem? They skipped fundamentals. Master packet capture and filtering before worrying about machine learning anomaly detection.

— Marcus Chen

Common Network Analysis Mistakes to Avoid

Fifteen years of troubleshooting networks taught me that most analysis failures stem from process problems, not tool limitations. These mistakes appear repeatedly across organizations of all sizes.

Capturing everything hoping to figure it out later generates massive files nobody analyzes thoroughly. I've watched engineers capture 200 GB overnight, then spend hours just trying to open it in Wireshark (which struggles with files over 2 GB). Before clicking "Start," define your objective: slow web application performance? Filter for HTTP traffic to those specific servers. Email delivery problems? Capture only SMTP and DNS traffic.

Surgical captures beat shotgun approaches. Last month, an application timeout issue had me capturing just TCP SYN, SYN-ACK, and RST packets—zero payload data. That single filter showed the problem immediately: connection attempts were timing out during the three-way handshake because a firewall rule was blocking return traffic.
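A handshake-only capture like that one can be expressed as a libpcap filter on the TCP flags byte, and the resulting packets are simple enough to analyze with a few lines of code. In this sketch the BPF string uses standard pcap-filter syntax; the tuple layout and the `unanswered_syns` helper are assumptions made for illustration, and the sample packets are invented.

```python
# TCP flag bits as they appear in the flags byte of the TCP header.
SYN, RST, ACK = 0x02, 0x04, 0x10

# Capture filter matching any packet with SYN or RST set (covers SYN,
# SYN-ACK, and RST). Older libpcap versions apply this to IPv4 only.
HANDSHAKE_BPF = "tcp[tcpflags] & (tcp-syn|tcp-rst) != 0"

def unanswered_syns(packets):
    """Return connection attempts that never received a SYN-ACK.

    packets: iterable of (src, sport, dst, dport, flags) tuples.
    """
    pending = set()
    for src, sport, dst, dport, flags in packets:
        if flags & SYN and not flags & ACK:
            pending.add((src, sport, dst, dport))
        elif flags & SYN and flags & ACK:
            # The reply travels in the reverse direction of the request.
            pending.discard((dst, dport, src, sport))
    return pending

# Invented capture: one healthy handshake, one attempt that gets no reply.
capture = [
    ("10.0.0.5", 51000, "10.0.1.9", 443, SYN),
    ("10.0.1.9", 443, "10.0.0.5", 51000, SYN | ACK),
    ("10.0.0.5", 51001, "10.0.2.20", 8443, SYN),
]
stalled = unanswered_syns(capture)
print(stalled)
```

A list of stalled attempts that all share one destination or one path is a strong hint that something in between, such as a firewall rule, is dropping the return traffic.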

Skipping baseline documentation means interpreting measurements without context. Is 5% packet retransmission high? Depends whether your normal is 0.5% (yes, investigate) or 4% (within tolerance for your congested network). Document baseline metrics during known-good operations: typical bandwidth utilization, average latencies by application, normal protocol distributions, expected error rates.

One manufacturing plant I worked with thought they had network problems because monitoring showed 20% bandwidth utilization. Turns out 20% was their historical average—production had actually decreased due to supply chain issues, so "normal" traffic looked suspicious to new management who lacked baseline context.

Misreading TCP behavior causes false conclusions. Retransmissions don't automatically mean network problems. TCP retransmits for multiple reasons: genuine packet loss, duplicate ACKs from out-of-order delivery, or receiver window exhaustion. A handful of retransmissions during file transfers spanning hundreds of thousands of packets indicates healthy network behavior, not issues.

Window size adjustments often get misinterpreted as problems. Seeing the receiver advertise increasingly smaller windows? That's flow control working correctly—the receiving application can't process data fast enough, so TCP slows transmission to prevent overflow. The problem lives in application performance, not network delivery.
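If you export advertised window sizes from a capture, a quick classification can separate flow control from other causes. This is a deliberately simple sketch: it assumes the values have already had the window scale factor applied, and the three-sample minimum for calling a trend is an arbitrary choice.

```python
def classify_window_trend(windows) -> str:
    """Classify a receiver's advertised window sizes over time."""
    if any(w == 0 for w in windows):
        # Receiver told the sender to stop: its buffer is full.
        return "zero-window"
    if len(windows) >= 3 and all(b < a for a, b in zip(windows, windows[1:])):
        # Steadily shrinking: the application is reading too slowly.
        return "shrinking"
    return "stable"

print(classify_window_trend([65535, 32768, 8192, 1024]))
print(classify_window_trend([8192, 0, 4096]))
print(classify_window_trend([65535, 65535, 64000]))
```

Either of the first two results points the investigation at the receiving host and its application rather than at switches and links.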

Relying exclusively on default configurations limits analysis effectiveness. Wireshark's default capture buffer is 2 MB, which can overflow during traffic bursts on busy links and cause packets to be dropped rather than captured. Increase buffer size based on link speed and expected capture duration. Default column layouts might not show information relevant to your troubleshooting. Customize displays to show what you actually care about: TCP window sizes, response times, retransmission flags.

Time display formats matter more than you'd think. Wireshark defaults to "Seconds Since Beginning of Capture." Switch to absolute time when correlating with syslog entries, or use "Seconds Since Previous Displayed Packet" when measuring intervals between specific events.
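The same conversions are handy when you export packet times and need to line them up with log files outside the analyzer. A minimal sketch, assuming you know the capture's start time in UTC; the start time and relative timestamps below are invented.

```python
from datetime import datetime, timedelta, timezone

def to_absolute(capture_start: datetime, relative_seconds: float) -> datetime:
    """Convert 'seconds since beginning of capture' to wall-clock time."""
    return capture_start + timedelta(seconds=relative_seconds)

def deltas(relative_times):
    """Seconds elapsed since the previous packet in the list."""
    if not relative_times:
        return []
    return [0.0] + [b - a for a, b in zip(relative_times, relative_times[1:])]

start = datetime(2024, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
relative = [0.0, 0.5, 2.0]

for rel, gap in zip(relative, deltas(relative)):
    print(to_absolute(start, rel).isoformat(), f"(+{gap:.1f}s)")
```

Keeping everything in UTC avoids the classic mistake of comparing a capture taken in one time zone with syslog entries written in another.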

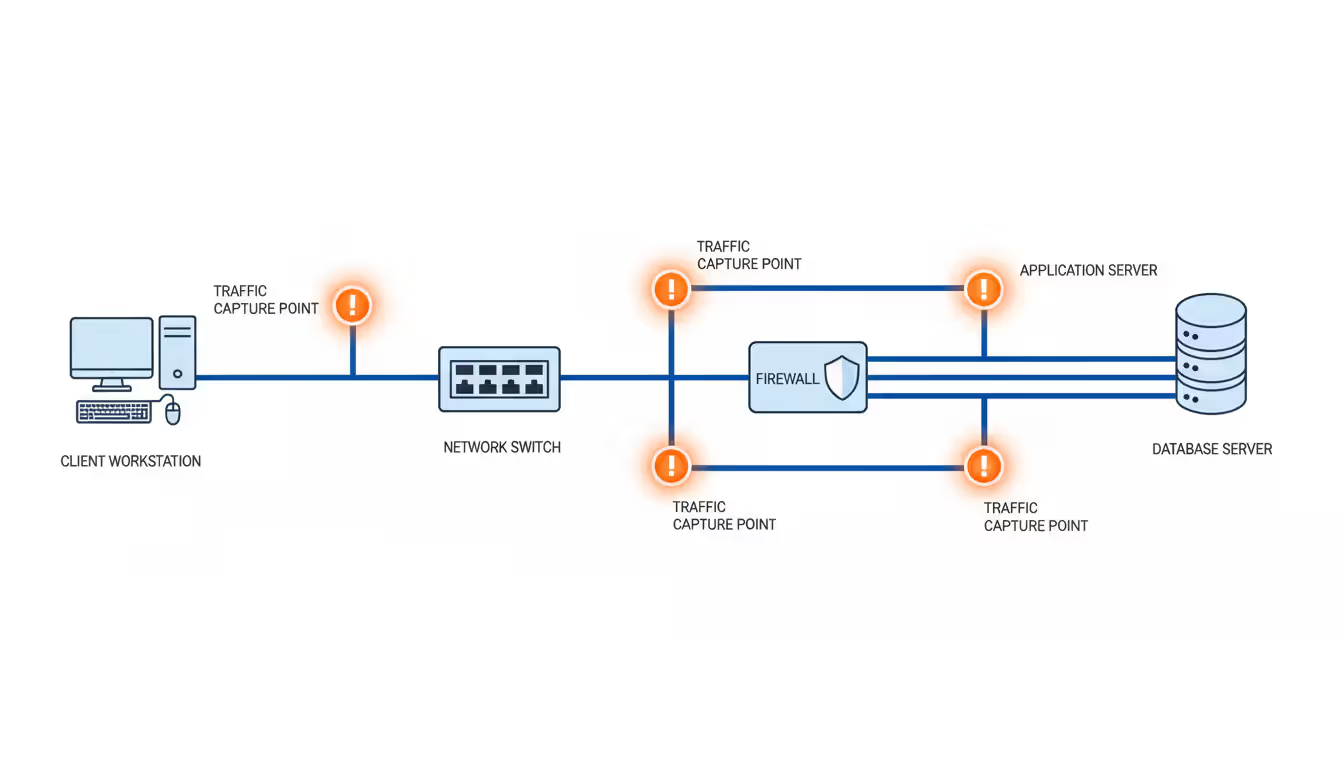

Analyzing traffic at suboptimal capture points produces incomplete pictures. Capturing on the client shows what the client sent but not what happened in transit. Capturing on the server reveals server behavior but might miss problems in the return path. For asymmetric routing networks, traffic flowing one direction might take completely different paths than return traffic.

Strategic capture locations matter. Investigating slow database queries? Capture between application and database servers, not at the user workstation three network hops away. Troubleshooting internet connectivity? Capture at the border router where traffic enters/exits your network, seeing exactly what your ISP receives and delivers.

Ignoring privacy and security considerations creates liability. Captured traffic contains usernames, authentication tokens, personal information, and proprietary data. One company I consulted for captured traffic on its wireless network without employee consent, then exposed itself to privacy lawsuits when the capture file was accidentally emailed to the wrong person.

Implement proper safeguards: encrypted storage for capture files, access controls limiting who can retrieve captures, defined retention policies, and documented legal authorization for monitoring. Some jurisdictions require posting notices that network traffic may be monitored.

Forgetting to document capture conditions hampers future analysis. When reviewing captures days later, you need context: What time was this captured? Which network segment? What filters were applied? What symptoms were users reporting? What else was happening—backups running, system updates deploying?

I maintain a troubleshooting log with every capture: timestamp, duration, location, applied filters, observed symptoms, concurrent events, and eventual resolution. That historical record proves invaluable when similar problems recur months later.
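A log like that does not need special tooling. One possible shape, sketched below, is a small record type serialized as one JSON line per capture so the log stays searchable with ordinary text tools. The field names and the sample entry are my own invention, not a standard format.

```python
import json
from dataclasses import dataclass, asdict, field
from typing import List

@dataclass
class CaptureRecord:
    started_utc: str            # ISO 8601 timestamp of capture start
    duration_s: int
    location: str               # where the capture point was
    capture_filter: str
    symptoms: str
    concurrent_events: List[str] = field(default_factory=list)
    resolution: str = ""        # filled in once the issue is closed

def to_log_line(record: CaptureRecord) -> str:
    """Serialize one record as a single JSON line."""
    return json.dumps(asdict(record), sort_keys=True)

# Hypothetical entry.
entry = CaptureRecord(
    started_utc="2024-01-01T14:05:00Z",
    duration_s=600,
    location="between app tier and database server",
    capture_filter="tcp port 3306 and host 10.50.1.100",
    symptoms="order page times out between 2 and 3 PM",
    concurrent_events=["nightly backup overran"],
)
line = to_log_line(entry)
print(line)
```

Appending each line to a shared file or ticketing system gives you the searchable history that pays off when a similar problem recurs.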

Comparison Table: Network Analyzer Types

| Type | When You'd Use This | Budget Expectations | Skill Level Needed | Setup Timeline | Ideal Scenarios |
| --- | --- | --- | --- | --- | --- |
| Hardware appliances | Guaranteed capture on high-speed links, physical signal testing, zero packet loss requirements | $15,000–$100,000+ per device | Expert-level TCP/IP and RF knowledge | Several days including rack mounting and configuration | Telecom providers, data centers with 100G links, regulatory compliance requiring certified equipment |
| Software applications | Day-to-day troubleshooting, distributed branch monitoring, budget-conscious deployments | Free (open-source) to $50,000 annually | Moderate networking fundamentals to advanced | Under one hour for basic setup | Small business networks, MSP client monitoring, development/test environments |
| Protocol-specific tools | Debugging VoIP call quality, industrial automation issues, application-layer problems | $500–$20,000 depending on specialization | Moderate; protocol expertise matters more than general networking | Minutes to install, hours to master | Contact centers with VoIP systems, manufacturing plants, specialized applications |
| Wireless analyzers | Wi-Fi performance issues, site surveys before deployment, rogue access point detection | $1,000–$10,000 for quality tools | Moderate RF and Wi-Fi knowledge | 1–2 hours including adapter configuration | Office buildings with Wi-Fi complaints, hospitality venues, security assessments |
| Flow collection systems | Long-term trending, bandwidth accountability, security anomaly detection at scale | $2,000–$30,000 per year | Beginner to moderate; easier than packet analysis | Several hours for distributed collectors | Large campus networks, ISP customer monitoring, multi-site enterprises |

Frequently Asked Questions About Network Analyzers

Network analysis success comes down to matching tool capabilities with your actual requirements, staff expertise, and realistic budgets. Software solutions fit most organizational needs—offering flexibility and manageable costs—while hardware analyzers serve specialized high-performance scenarios where software physically can't keep up.

The tool itself matters less than your troubleshooting methodology. I've watched teams armed with $80,000 enterprise platforms struggle to diagnose simple DNS misconfigurations, while scrappy startups running Wireshark quickly resolve complex application issues. The difference? Systematic approaches: establishing baselines during normal operations, capturing at strategic network locations, applying targeted filters to reduce noise, and correlating findings with configuration changes and application logs.

Start every analysis session with specific objectives—not vague goals like "see what's happening" but concrete questions like "why is this specific application timing out between 2-3 PM daily?" Document your capture conditions, applied filters, and findings for future reference. Continuously refine baseline understanding as your network evolves through upgrades, application deployments, and traffic pattern changes.

This disciplined approach transforms network analysis from reactive firefighting—scrambling when executives complain about performance—into proactive infrastructure management that identifies developing issues before users notice. That shift from reactive to predictive represents the real value of network analysis, regardless of which specific tools you deploy.
