Abstract visualization of a service mesh network with interconnected microservice nodes and sidecar proxies on a dark blue gradient background

What Is a Service Mesh in Microservices?

When you deploy microservices in production, each service needs to talk to others reliably. Retry logic. Circuit breakers. Timeout configurations. Load balancing across instances. Mutual TLS for encryption. Someone has to build all that.

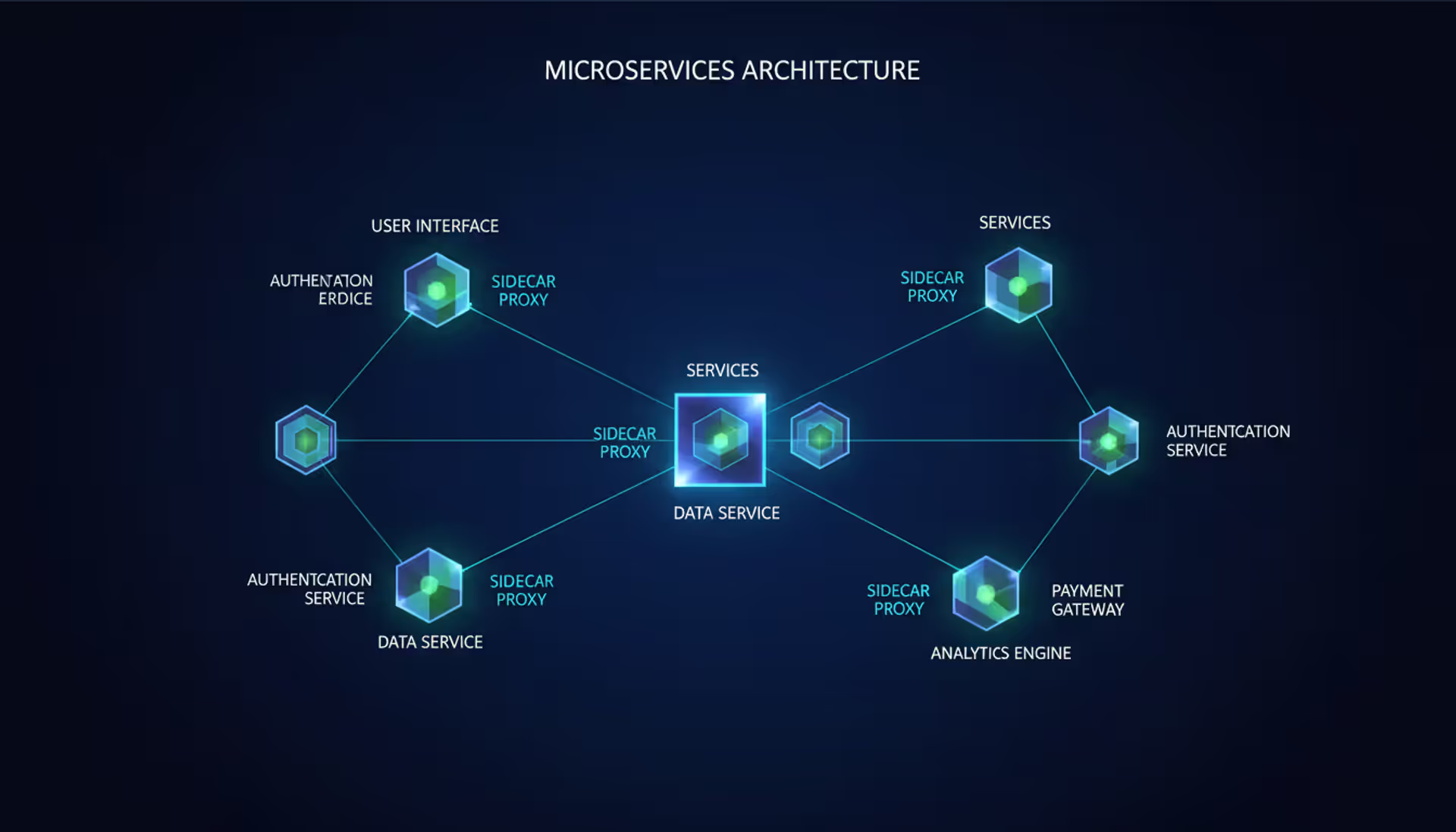

Service mesh technology solves this by pulling all that networking complexity out of your application code and into infrastructure. Picture a dedicated layer of lightweight proxies sitting next to each microservice, automatically handling the messy details of network communication. Your developers write business logic while the mesh manages retries, collects metrics, enforces security, and routes traffic.

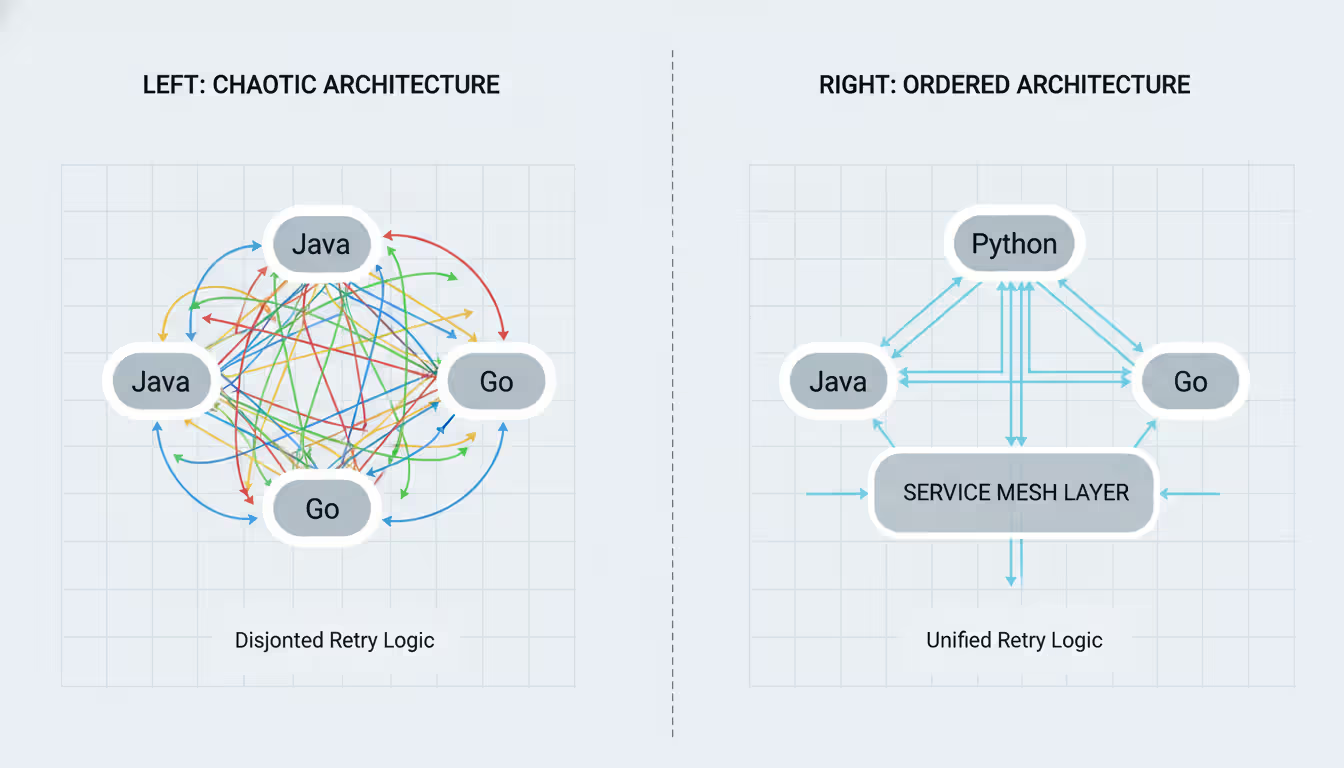

Here's the practical difference: Without a mesh, your Java team builds retry logic one way, your Python team builds it differently, and your Go team probably forgets it entirely until production breaks. Each approach has different timeout values, different backoff strategies, different failure modes. When you troubleshoot an outage, you're digging through twelve different codebases trying to understand how service calls actually work.

With a mesh, you configure retry behavior once. Every service gets the same reliable communication patterns, whether it's written in Rust or Ruby. You can visualize your entire service topology, see exactly which services call which endpoints, and spot problems before customers notice them.

The technology took off around 2017 when Kubernetes adoption exploded and companies suddenly ran fifty or a hundred microservices instead of five. Teams at Google, Lyft, and other large-scale operators realized they needed infrastructure-level solutions for service communication. By 2026, the tooling has matured dramatically—you don't need Google's engineering headcount to run a mesh successfully anymore.

Author: Vanessa Norwood;

Source: clatsopcountygensoc.com

How Service Mesh Architecture Works

Two major components make up every service mesh: the data plane and control plane. Think of the data plane as the workers doing the actual job, while the control plane acts as management telling workers what to do.

Your data plane is a fleet of proxy instances, typically one running beside each microservice. In Kubernetes, this means each pod gets an extra container—a sidecar proxy—that intercepts all network traffic. Nothing goes in or out of your application without passing through this proxy first. Most meshes use Envoy proxy for this job, though Linkerd built their own in Rust specifically optimized for mesh workloads. These proxies are surprisingly small—often under 20 MB for Linkerd's proxy, though Envoy can run 50-100 MB depending on configuration.

The control plane is the brains of the operation. It pushes configuration to all those data plane proxies, handles TLS certificate distribution and rotation, collects telemetry data, and provides APIs for operators to define routing rules. When you tell your mesh "send 20% of traffic to the canary deployment," you're configuring the control plane. It translates your intent into thousands of individual proxy configurations distributed across your cluster.

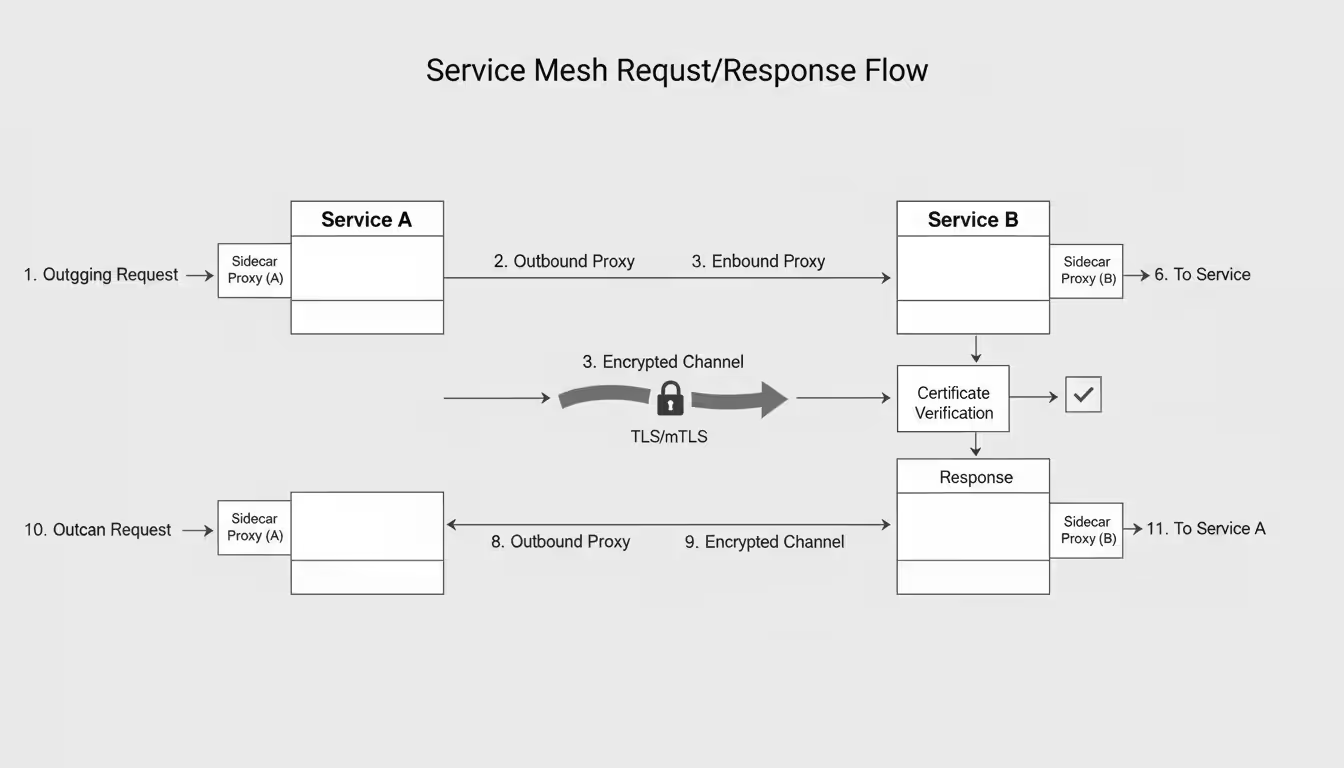

Let's walk through what happens when Service A calls Service B. Your application code in Service A makes a normal HTTP call to Service B. That request immediately hits Service A's sidecar proxy instead of going directly to the network. This outbound proxy checks your timeout policies, maybe implements a retry if you've configured that, selects a healthy instance of Service B using whatever load balancing algorithm you've chosen (round-robin, least-connections, etc.), and establishes a mutual TLS connection.

The encrypted request travels over your network infrastructure to Service B's node. Service B's sidecar proxy receives it, validates the TLS certificate to verify Service A is actually who it claims to be, checks authorization policies to confirm Service A is allowed to call this endpoint, and finally forwards the request to Service B's application container. Service B processes the request and returns a response. That response takes the opposite journey—through Service B's proxy, across the network, through Service A's proxy, back to your application code.

Both proxies collect detailed metrics during this exchange: request duration, HTTP status codes, bytes transferred, connection state. This telemetry flows back to the control plane and typically gets exposed through Prometheus metrics that you can visualize in Grafana dashboards.

One surprise for teams new to meshes: the resource overhead adds up quickly. Each sidecar consumes memory and CPU. In large clusters with hundreds of pods, you might need 15-25% more infrastructure capacity just to run the mesh itself. I've seen teams deploy Istio to a cluster running near capacity, then watch the cluster fall over as sidecar proxies compete with application containers for resources. Size your infrastructure accordingly from the start.

Author: Vanessa Norwood;

Source: clatsopcountygensoc.com

Service Mesh vs API Gateway

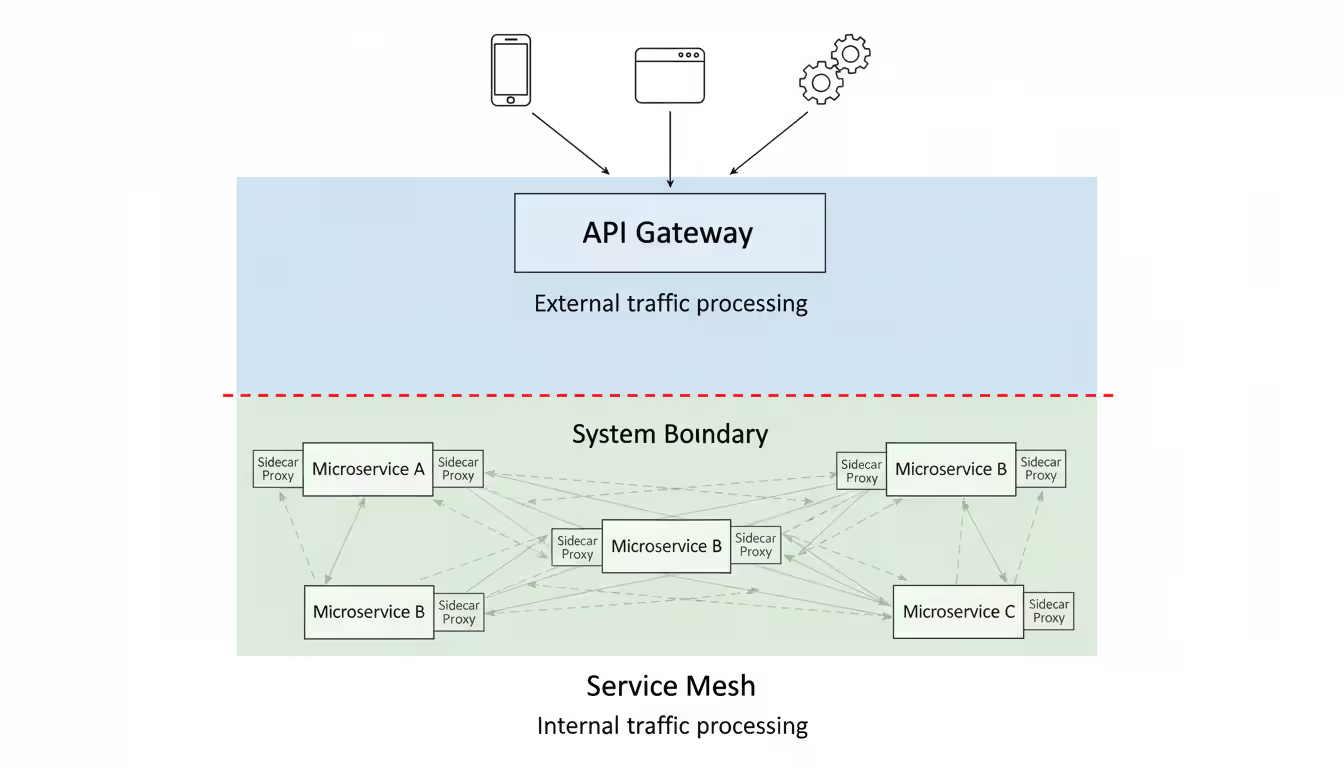

These two technologies confuse people constantly because both manage traffic and sit between services. But they guard completely different boundaries of your system.

Your API gateway stands at the edge, handling traffic from the outside world. Mobile apps, web browsers, partner systems—they all hit your API gateway first. It authenticates requests, enforces rate limits, routes to the appropriate backend service, maybe transforms protocols (your frontend sends REST, your backend expects gRPC), and aggregates multiple service calls into single client-facing responses. You typically run just a handful of gateway instances, and they're the front door to your entire system.

A service mesh lives inside your infrastructure, managing how services talk to each other. It assumes requests have already entered your system and focuses on internal service-to-service communication. You're not running a few mesh proxies—you're running potentially thousands, one next to every single service instance throughout your cluster.

The purposes differ fundamentally. API gateways expose carefully designed APIs to external consumers. You control exactly what endpoints exist, what data formats you accept, what rate limits apply per customer. Service meshes enforce policies on internal traffic that external clients never see directly. The mesh ensures your order service can reliably call your inventory service with automatic retries and mutual TLS, which isn't something external clients care about.

Author: Vanessa Norwood;

Source: clatsopcountygensoc.com

Most real production systems use both, and they work together. An external request hits your API gateway. The gateway authenticates the user, checks their rate limit quota, and routes the request to your order service. Now the service mesh takes over. The order service needs to check inventory (mesh manages that call), process payment (mesh manages that too), and send a confirmation email (mesh again). Every internal hop benefits from the mesh's retry logic, circuit breakers, encryption, and observability.

Here's a rule of thumb: if you've built a microservices system with more than five or six services calling each other, you probably need both technologies. The gateway handles your external API surface. The mesh keeps internal communication reliable.

Some teams try to make their API gateway do mesh-like things, or vice versa. Kong and other gateways have added service mesh features. Istio can act as an ingress gateway. But trying to use one tool for both jobs usually means compromising—you get a mediocre gateway and a mediocre mesh instead of excellent versions of each.

Common Service Mesh Tools and Platforms

Four major platforms dominate the production service mesh landscape as of 2026. Each makes different trade-offs between power and complexity.

Istio gives you the most features and the most operational complexity. Built by Google, IBM, and Lyft, it uses Envoy for its data plane and offers incredibly granular control over traffic routing, security policies, and observability. Need to route requests based on HTTP headers and user identity while enforcing mutual TLS with certificates from your corporate CA? Istio can do it. Want to extend functionality with WebAssembly plugins? Istio supports that too.

The catch? Istio's control plane in earlier versions consisted of multiple components (Pilot for traffic management, Citadel for certificates, Galley for configuration validation) that required careful tuning. Newer versions consolidated into a single istiod component, which helps, but you're still looking at 1-2 GB of memory for the control plane. Each Envoy sidecar adds another 100-150 MB per pod. In a cluster with 200 pods, that's 20-30 GB just for proxy overhead. Companies with strong Kubernetes teams and requirements for advanced features like multi-cluster meshes accept this cost. Smaller teams often drown in Istio's configuration complexity.

Linkerd chose the opposite philosophy: keep things simple. Built specifically for Kubernetes (no VM support, no non-Kubernetes use cases), Linkerd installs with a single CLI command and works with sane defaults right away. The team wrote a custom proxy in Rust that's dramatically lighter than Envoy—we're talking 10-20 MB per sidecar. The entire control plane might use 200-300 MB total.

You get automatic mutual TLS between all services, golden metrics (success rate, request volume, latency percentiles), and a web dashboard showing your service topology. The tradeoff? Fewer knobs to turn. Linkerd doesn't try to solve every possible traffic management scenario. It handles the 80% of use cases most teams actually need without drowning you in YAML. Teams who want observability and zero-trust security without becoming service mesh experts tend to love Linkerd.

Consul by HashiCorp takes a different architectural approach entirely. It's not just a service mesh—it's a service discovery platform that added mesh capabilities. This broader scope makes Consul valuable for hybrid environments mixing Kubernetes, VMs, and bare metal servers. Running services on EC2 instances not managed by Kubernetes? Consul can mesh them. Migrating from a VM-based architecture to containers over the next two years? Consul bridges both worlds.

Consul uses Envoy for its data plane, so you get similar proxy capabilities to Istio. The control plane integrates deeply with Consul's service catalog and distributed key-value store. If you're already using HashiCorp's ecosystem (Vault for secrets, Terraform for infrastructure, Nomad for orchestration), Consul fits naturally. But if you're running pure Kubernetes and don't need VM support, Consul's additional concepts and components add complexity you might not need.

AWS App Mesh is Amazon's managed service mesh. The key word is "managed"—AWS runs and operates the control plane for you. You don't worry about control plane availability, upgrades, or scaling. The control plane integrates natively with AWS services: Cloud Map for service discovery, ACM for TLS certificates, CloudWatch for metrics, X-Ray for distributed tracing.

You still deploy and manage Envoy sidecars yourself (App Mesh isn't fully managed), but the control plane operations burden disappears. The obvious limitation: App Mesh only works in AWS. Multi-cloud architectures or teams concerned about cloud lock-in should look elsewhere. But if you're all-in on AWS and want to offload control plane operations, App Mesh simplifies life considerably.

| Tool | Implementation Complexity | Memory & CPU Cost | Observability | Security Features | When to Choose It |

| Istio | Requires deep Kubernetes knowledge and dedicated platform team | Each sidecar uses 100-150 MB; control plane needs 1-2 GB | Extensive metric collection, full distributed tracing, custom dashboards | Detailed authorization rules, external CA integration, certificate rotation | Large enterprises with complex requirements and strong platform engineering teams |

| Linkerd | Install in under 10 minutes with minimal Kubernetes experience | Sidecars use just 10-20 MB; control plane under 300 MB total | Automatic golden metrics, live traffic tap, built-in topology graph | Automatic mutual TLS, straightforward policy model | Teams wanting simple setup, lower overhead, and solid observability without complexity |

| Consul | Moderate learning curve; broader than Kubernetes-only tools | Sidecars around 50-100 MB; control plane size varies by architecture | Web UI included, Prometheus integration, distributed tracing support | Mutual TLS, intention-based policies, integrates with Vault | Hybrid infrastructure with VMs and containers, or existing HashiCorp tool users |

| AWS App Mesh | AWS-specific knowledge required but control plane is managed | Sidecars use 50-100 MB; AWS handles control plane resources | Native CloudWatch and X-Ray integration, standard Envoy metrics | Automatic mutual TLS, IAM integration, uses AWS Certificate Manager | AWS-only shops wanting to avoid control plane operations |

Picking the right mesh means understanding your constraints. Don't choose Istio just because it's the most popular if your team of three Kubernetes-familiar engineers will drown in configuration complexity. Start with your actual requirements—do you absolutely need multi-cluster mesh? VM support? WebAssembly extensibility?—then pick the simplest tool that satisfies those requirements.

Service Mesh Implementation Steps

Rolling out a service mesh can break production traffic spectacularly if you rush it. I've watched teams take down their entire platform by enabling strict mutual TLS across 100 services simultaneously. Here's how to avoid that fate.

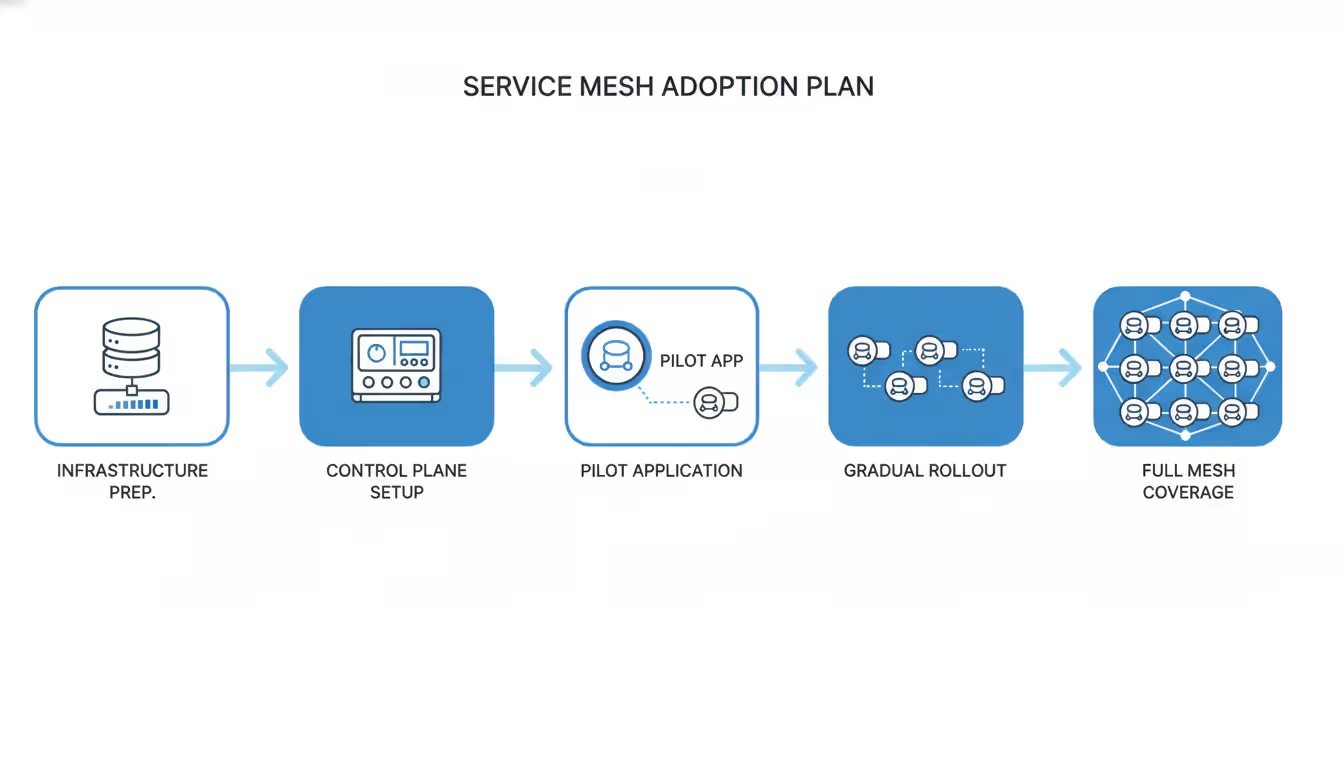

Before you start, verify your Kubernetes cluster is recent enough (version 1.24 or later for most meshes as of 2026) and has enough spare capacity. Calculate 20-30% additional resources for mesh overhead and either add nodes or reduce other workloads. Install Prometheus and Grafana before deploying the mesh—you need observability into the mesh itself from day one. Make sure your team actually understands networking basics like TCP, DNS resolution, TLS handshakes, and certificate chains. Service mesh troubleshooting often requires reading proxy logs and debugging certificate validation failures.

Plan your approach by defining what problem you're actually solving. "We need a service mesh" isn't a valid goal. "We need mutual TLS between services to meet SOC 2 requirements" is. "We need better visibility into which services call which endpoints" is. "We want to do canary deployments with traffic splitting" is. Your primary goal determines which mesh features to enable first.

Map your service dependencies carefully. Draw out (or generate with a tool) which services call which other services. Identify your critical path—the chain of service calls that processes your most important business transactions. These services should be the last to join the mesh, not the first. Start with low-risk services instead.

Author: Vanessa Norwood;

Source: clatsopcountygensoc.com

Choose a pilot application that's non-critical but realistic. Avoid the "hello world" service that gets 10 requests per day—it won't reveal real-world issues. Also avoid your payment processing service that handles $10 million per hour—problems there end careers. Pick something in between, maybe an internal admin tool or a batch processing service with moderate traffic and a few dependencies.

Deploy incrementally. First, install just the control plane in its own namespace. Configure resource limits (don't let the control plane consume unlimited memory), set up monitoring dashboards, and verify all control plane components are healthy. Wait 24 hours. If the control plane itself is unstable, you're not ready to add services yet.

Next, enable sidecar injection for your pilot application's namespace only. In Kubernetes, this usually means adding a label like istio-injection=enabled to the namespace, then rolling your pods (delete and recreate them) so the mesh's admission webhook can inject sidecar containers. Don't enable auto-injection cluster-wide—that's asking for trouble.

Watch your pilot application closely for at least 48-72 hours. Compare latency percentiles before and after—p50, p95, p99. You'll see some increase (typically 1-3ms per hop), but p99 latency shouldn't double. Check error rates in your application logs and metrics. The mesh might be rejecting connections if you haven't configured mutual TLS correctly. Monitor resource consumption—are the sidecars using reasonable CPU and memory, or are they somehow consuming more resources than your application itself?

After your pilot succeeds, expand gradually. Add one or two services per week, not per day. This slow pace feels painful but makes troubleshooting possible. When something breaks, you know exactly which service caused it. If you migrate 20 services in a day and latency spikes, good luck figuring out which one is the culprit.

Your rollout strategy needs escape hatches. Configure the mesh in permissive mode initially—mutual TLS is attempted but not required. This lets meshed services communicate with non-meshed services during migration, preventing the "big bang" connectivity failure where suddenly nothing can talk to anything. Once everything is migrated, switch to strict mode to enforce mutual TLS everywhere.

Use canary deployments for mesh upgrades too. Run two control plane versions simultaneously if your mesh supports it (Istio does), routing a small percentage of services to the new version. Upgrade sidecar proxies gradually across multiple days, not all at once. This sounds paranoid until you've experienced a bad proxy version that increases latency by 200ms—and you've rolled it out everywhere before noticing.

The biggest mistake I see teams make: enabling everything at once. They turn on mutual TLS, authorization policies, request tracing, access logging, and traffic splitting in the same deployment. When latency spikes or connections fail, they can't isolate which feature caused the problem. Enable one feature, validate it works, then move to the next.

When Your Application Needs a Service Mesh

Service mesh technology solves real problems, but not everyone has those problems. Adding a mesh when you don't need one just makes your infrastructure more complicated and fragile.

You probably need a mesh when multiple teams keep reimplementing the same networking logic in different ways. If your Java services have one retry strategy, your Python services have another, and your Node services might not have retries at all, a mesh gives everyone the same reliable communication patterns without writing code. When you can't answer basic questions like "which services depend on this API?" or "why is this service chain slow?" without spending hours diving through logs, a mesh's built-in observability saves enormous time. If security mandates mutual TLS between all internal services but managing certificates manually sounds like a nightmare, meshes automate the entire certificate lifecycle.

Another clear signal: you're spending significant effort on distributed tracing instrumentation. If every team needs to add tracing libraries to their code, ensure proper context propagation across service boundaries, and maintain consistent trace sampling—that's all undifferentiated work a mesh handles automatically at the infrastructure level.

Scale matters more than you'd think. With fewer than five services, running a service mesh adds more operational complexity than it removes. You're better off handling service communication in application code or with simple client libraries. Between five and fifteen services, a lightweight option like Linkerd might help if you specifically need mutual TLS or basic observability. Above fifteen services—especially when different teams own different services—meshes become increasingly valuable for maintaining consistent behavior.

But service count doesn't tell the whole story. Consider call patterns too. An architecture with ten services but each request fans out to hit all ten services benefits greatly from a mesh's retry logic and observability. An architecture with fifty services that mostly operate independently, processing messages from queues without much synchronous communication, doesn't need a mesh at all—meshes optimize request/response patterns, not asynchronous messaging.

Team readiness determines success more than technical requirements. Operating a service mesh requires solid Kubernetes knowledge. If your team struggles with basic concepts like pods, deployments, and services, adding a mesh will multiply problems instead of solving them. You need engineers comfortable debugging networking issues, reading TLS handshake errors, and interpreting proxy logs. Plan for 2-3 months of learning time in non-production environments before deploying to production.

When to skip it entirely: You're running a monolith with a couple microservices bolted on—not enough distributed communication to justify mesh complexity. Your services communicate primarily through message queues (Kafka, RabbitMQ, SQS) with few synchronous calls—meshes don't help much with asynchronous messaging patterns. Your team lacks Kubernetes expertise and can't invest months learning—the operational burden will overwhelm you. You're optimizing for absolute minimum latency in ultra-low-latency scenarios like high-frequency trading—the proxy hop's 1-3ms overhead might actually matter.

Try this test: write down three specific, measurable problems a service mesh would solve for your architecture. "Better observability" is too vague. "We need to see request rates and latency distributions for all internal API calls because we currently have no visibility into service dependencies" is specific. If you can't articulate concrete problems that currently cause measurable pain—engineer hours wasted, production incidents, security compliance issues—you probably don't need a mesh yet. Maybe you never will, and that's fine. Not every architecture requires every technology.

Any organization that designs a system will produce a design whose structure is a copy of the organization's communication structure

— Melvin Conway

Frequently Asked Questions About Service Mesh

Service meshes moved from experimental to production-ready over the past few years. They solve genuine problems around service communication, security, and observability—but only for teams who've hit specific complexity thresholds.

The decision comes down to matching technology to your actual situation. Don't implement a mesh because it's trendy or because conference talks make it sound essential. Implement one when you're spending real engineering time on problems the mesh solves: inconsistent retry logic across services, lack of visibility into service dependencies, manual certificate management burden, or difficulty rolling out canary deployments safely.

Start with clear objectives. Choose the simplest tool that meets your needs—usually Linkerd unless you specifically need Istio's advanced features or Consul's VM support. Deploy gradually with a pilot application, expand incrementally service by service, and invest in team education. Teams that take this methodical approach succeed. Teams that rush to deploy the fanciest mesh across their entire infrastructure in a weekend create outages and end up rolling everything back.

Looking ahead, service meshes will remain valuable infrastructure for teams running complex microservices architectures at scale. The technology continues maturing—performance improves, operational complexity decreases, and tooling gets better each year. But the fundamental question stays the same: do the problems a service mesh solves outweigh the operational cost of running one? Answer that honestly for your specific situation, and you'll make the right call.

Related Stories

Read more

Read more

The content on this website is provided for general informational and educational purposes related to cloud computing, network infrastructure, and IT solutions. It is not intended to constitute professional technical, engineering, or consulting advice.

All information, tools, and explanations presented on this website are for general reference only. Network environments, system configurations, and business requirements may vary, and results may differ depending on specific use cases and infrastructure.

This website is not responsible for any errors or omissions, or for actions taken based on the information, tools, or technical recommendations presented.