What Is a Peer to Peer Network?

Think of the last time you downloaded a massive game update or streamed a live event online. Chances are, you weren't pulling all that data from a single server somewhere. Instead, dozens—maybe hundreds—of other people's computers were serving up pieces of that content directly to you.

That's a peer to peer network in action. Rather than funneling everything through one central computer acting as a traffic cop, these networks let individual devices talk directly to each other. Your laptop, phone, or gaming console becomes both a receiver and a broadcaster simultaneously.

Here's what makes this interesting: every device (we call them "nodes" or "peers") shares whatever resources it has—bandwidth, storage space, processing power. You download a chunk of data from someone in Seattle, another piece from Barcelona, maybe a third from Tokyo. Then your device starts sharing those same chunks with the next person who needs them.

Why bother with this seemingly complicated setup? Simple: centralized servers hit walls fast. Picture 10 million people trying to download the same software update from one server farm. That server gets hammered, slows to a crawl, maybe crashes entirely. But spread those 10 million people across the network itself, and each new person brings upload capacity along with their demand instead of only consuming it.
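
To put rough numbers on the idea that each new peer adds capacity, networking textbooks use a simple lower-bound model: a server with upload rate u_s must deliver an F-bit file to N peers whose slowest download rate is d_min. The Python sketch below assumes idealized, perfectly divisible transfers and identical peer upload rates (the `peer_up` parameter); every figure in it is illustrative, not a measurement.

```python
def client_server_time(n_peers: int, file_bits: float,
                       server_up: float, min_down: float) -> float:
    """Lower bound (seconds) when only the server uploads."""
    # The server must push N full copies, and the slowest peer needs F / d_min.
    return max(n_peers * file_bits / server_up, file_bits / min_down)


def p2p_time(n_peers: int, file_bits: float, server_up: float,
             min_down: float, peer_up: float) -> float:
    """Lower bound (seconds) when every peer also uploads at peer_up."""
    # The server still has to send at least one full copy into the swarm,
    # but total upload capacity now grows with every peer that joins.
    total_up = server_up + n_peers * peer_up
    return max(file_bits / server_up, file_bits / min_down,
               n_peers * file_bits / total_up)


# Illustrative numbers: a 1 GB file, a 1 Gbit/s server,
# peers with 100 Mbit/s down and 10 Mbit/s up.
F, U_S, D_MIN, U_PEER = 8e9, 1e9, 100e6, 10e6
for n in (10, 1_000, 1_000_000):
    cs = client_server_time(n, F, U_S, D_MIN)
    p2p = p2p_time(n, F, U_S, D_MIN, U_PEER)
    print(f"{n:>9} peers: client-server {cs:>12,.0f} s, p2p {p2p:>8,.0f} s")
```

With a million peers the client-server bound works out to 8,000,000 seconds (roughly three months), while the peer-to-peer bound stays near F / u_peer = 800 seconds no matter how large N grows.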

How Peer to Peer Networks Function

Let's get into the actual mechanics. How do these networks coordinate without a boss telling everyone what to do?

Decentralized Architecture Basics

Nobody's in charge—that's the whole point. Control gets spread across everyone participating. When your device joins up, it basically shouts "Hey, I'm here, and here's what I've got!" to nearby peers using a discovery protocol.

The network doesn't collapse when individual computers disconnect because there's no critical central point. Lose one peer? For popular content, no problem: dozens of others likely hold the same data.

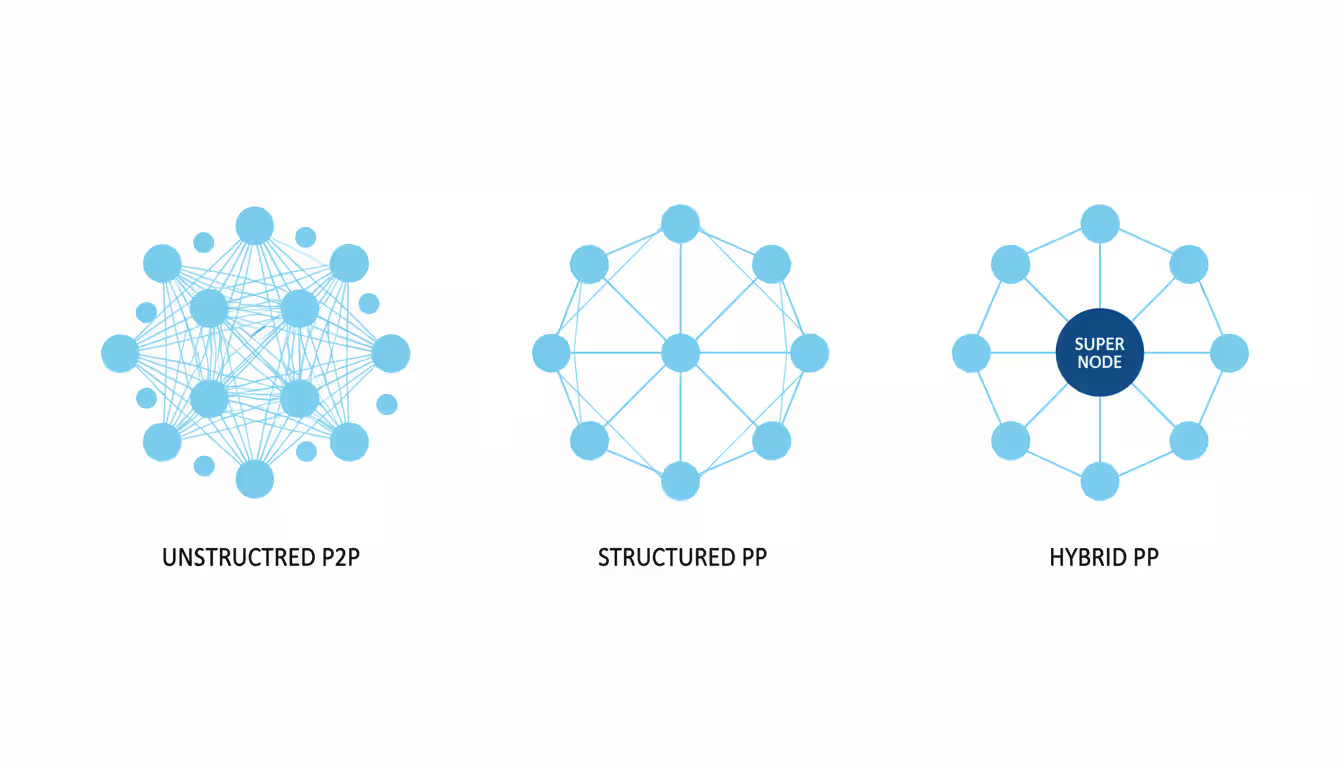

You'll find three main ways these networks organize themselves:

Unstructured networks let connections form organically, almost chaotically. Nodes link up with whoever's nearby or available. Finding specific content means sending out requests that bounce from peer to peer until someone responds "I've got that!" This approach stays simple but gets inefficient fast when searching for rare files. Gnutella is the classic example. Early BitTorrent swarms also formed loose, unstructured meshes, though they relied on a tracker (a somewhat centralized server) for peer discovery rather than on flooding queries.
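
Here is a minimal sketch of flooding search in the style of Gnutella, assuming each query carries a hop limit (TTL) that drops by one per forward and that peers ignore copies of a query they have already seen. The graph, file names, and `flood_search` are hypothetical; the point is how quickly message counts grow compared with the number of peers actually reached.

```python
from collections import deque


def flood_search(graph: dict[str, list[str]], files: dict[str, set[str]],
                 start: str, wanted: str, ttl: int) -> tuple[list[str], int]:
    """Flood a query outward; return (peers holding the file, messages sent)."""
    hits: list[str] = []
    seen = {start}                  # peers drop duplicate copies of a query
    queue = deque([(start, ttl)])
    messages = 0
    while queue:
        peer, remaining = queue.popleft()
        if wanted in files.get(peer, set()):
            hits.append(peer)       # this peer answers "I've got that!"
        if remaining == 0:
            continue                # hop limit reached: stop forwarding
        for neighbor in graph.get(peer, []):
            messages += 1           # every forward costs a message, even to seen peers
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, remaining - 1))
    return hits, messages


graph = {"A": ["B", "C"], "B": ["A", "D"], "C": ["A", "D"],
         "D": ["B", "C", "E"], "E": ["D"]}
files = {"C": {"popular.mp3"}, "D": {"popular.mp3"}, "E": {"rare.iso"}}
print(flood_search(graph, files, "A", "rare.iso", ttl=2))  # ([], 6)
print(flood_search(graph, files, "A", "rare.iso", ttl=3))  # (['E'], 9)
```

With a TTL of 2 the query for the rare file dies one hop short of peer E after six messages; raising the TTL to 3 finds it but costs nine messages on this five-node graph. On a network with thousands of peers, that growth is what makes rare-file searches expensive.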

Structured networks impose order through distributed hash tables. Each peer gets assigned responsibility for tracking certain content based on cryptographic hashes. Need a specific file? The network knows exactly which peers to ask. Much faster, but managing these relationships when peers constantly join and leave requires sophisticated algorithms. Chord, Kademlia, and Pastry represent different flavors of this approach.
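
A minimal sketch of how a Kademlia-style DHT decides who is responsible for a key: node IDs and content keys share one 160-bit space (SHA-1 here, as in Kademlia), and "closest" means smallest XOR distance. Deriving node IDs by hashing peer names is an assumption made for reproducibility; real nodes usually pick random IDs, and real lookups walk routing tables (k-buckets) rather than scanning a full peer list. Chord and Pastry use different distance metrics.

```python
import hashlib


def to_id(name: str) -> int:
    """Map a peer name or content key into the shared 160-bit ID space."""
    return int.from_bytes(hashlib.sha1(name.encode()).digest(), "big")


def responsible_peers(key: str, peers: list[str], k: int = 2) -> list[str]:
    """Return the k peers whose IDs are closest to the key by XOR distance."""
    target = to_id(key)
    return sorted(peers, key=lambda peer: to_id(peer) ^ target)[:k]


# Hypothetical peer names.
peers = ["seattle", "barcelona", "tokyo", "lagos", "lima"]
owners = responsible_peers("ubuntu-24.04.iso", peers)
print(owners)
```

Every peer that runs this calculation over the same peer list gets the same answer, which is what lets a DHT route a request straight to the right nodes without a central directory. When a peer outside that set leaves, ownership of the key does not move.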

Hybrid models sneak in some centralization for practical reasons. A central directory might index what content exists where, but actual transfers still happen peer-to-peer. Napster pioneered this back in 1999—centralized search, distributed downloads. It combined the best parts of both worlds until legal troubles shut it down.
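
A minimal sketch of the hybrid split, assuming a Napster-like design: the central index only maps file names to the peers that hold them, and the bytes move peer to peer. `CentralIndex` and the in-memory `peer_storage` dictionary are hypothetical stand-ins for a directory server and real peer connections.

```python
from collections import defaultdict


class CentralIndex:
    """Directory server: knows who has what, but never stores the files."""

    def __init__(self) -> None:
        self._holders: dict[str, set[str]] = defaultdict(set)

    def register(self, peer: str, filenames: list[str]) -> None:
        for name in filenames:
            self._holders[name].add(peer)

    def unregister(self, peer: str) -> None:
        # Called when a peer logs off, so searches stop pointing at it.
        for holders in self._holders.values():
            holders.discard(peer)

    def search(self, filename: str) -> list[str]:
        return sorted(self._holders.get(filename, set()))


# Hypothetical peers and the files they share.
peer_storage = {
    "alice": {"song.mp3": b"ID3...song"},
    "bob": {"song.mp3": b"ID3...song", "talk.mp3": b"ID3...talk"},
}
index = CentralIndex()
for peer, shared in peer_storage.items():
    index.register(peer, list(shared))

sources = index.search("song.mp3")            # centralized step: search
data = peer_storage[sources[0]]["song.mp3"]   # decentralized step: transfer
```

The index is also the weak point: take it offline and search stops working, even though every file still sits on users' machines.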

Author: Adrian Keller;

Source: clatsopcountygensoc.com

Node Communication and Data Sharing

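When you want to download something, your client starts from a unique hash (think of it as a fingerprint) identifying that exact content, usually taken from a torrent file or magnet link. It uses that hash to ask a tracker, a distributed hash table, or its connected peers who has the data.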

Peers who have matching content respond. Your software then opens simultaneous connections to multiple sources, downloading different segments in parallel. Maybe you pull bytes 1-100,000 from Peer A, bytes 100,001-200,000 from Peer B, and so on.
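
A minimal sketch of parallel segment downloads, assuming a hypothetical `fetch(peer, start, end)` callable that returns the requested bytes from one peer (here simulated by slicing a local buffer). Offsets are zero-based and end-exclusive, so "bytes 1-100,000" corresponds to the range (0, 100000).

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Fetch = Callable[[str, int, int], bytes]


def plan_ranges(file_size: int, chunk_size: int,
                peers: list[str]) -> list[tuple[str, int, int]]:
    """Split [0, file_size) into chunks and deal them out round-robin."""
    plan = []
    for i, start in enumerate(range(0, file_size, chunk_size)):
        end = min(start + chunk_size, file_size)
        plan.append((peers[i % len(peers)], start, end))
    return plan


def download(plan: list[tuple[str, int, int]], fetch: Fetch) -> bytes:
    """Request every range concurrently, then reassemble in offset order."""
    workers = max(1, len({peer for peer, _, _ in plan}))
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = {start: pool.submit(fetch, peer, start, end)
                   for peer, start, end in plan}
    # Leaving the with-block waits for all requests to finish.
    return b"".join(futures[s].result() for s in sorted(futures))


# Simulated swarm: every peer serves slices of the same 256,000-byte file.
original = bytes(range(256)) * 1000
plan = plan_ranges(len(original), 100_000, ["peer-a", "peer-b", "peer-c"])
result = download(plan, lambda peer, start, end: original[start:end])
```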

Modern implementations get clever about encouraging good behavior. If you just download without ever uploading to others (we call this "leeching"), the peers you connect to notice and your speeds drop. Peers who share generously get preferential treatment: faster downloads, more connections, higher priority.

The network constantly adapts to people coming and going. Your software sends periodic "still here!" keep-alive messages to connected peers. Someone drops offline mid-transfer? No sweat: the client switches to alternative sources automatically and re-requests only the pieces it hasn't finished yet.
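
A minimal sketch of keep-alive tracking and failover, assuming a 120-second silence timeout (BitTorrent peers conventionally send a keep-alive after about two minutes of quiet) and pieces reassigned round-robin among the peers still alive. `PeerTable` and `reassign` are hypothetical names; the clock is injectable so the example runs instantly.

```python
import time
from typing import Callable


class PeerTable:
    """Remember when each peer last said "still here!" and report who is alive."""

    def __init__(self, timeout_s: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.timeout_s = timeout_s
        self.clock = clock
        self.last_seen: dict[str, float] = {}

    def heard_from(self, peer: str) -> None:
        self.last_seen[peer] = self.clock()

    def live_peers(self) -> list[str]:
        now = self.clock()
        return sorted(p for p, t in self.last_seen.items()
                      if now - t <= self.timeout_s)


def reassign(pending: dict[int, str], table: PeerTable) -> dict[int, str]:
    """Keep unfinished pieces on live peers; move the rest round-robin."""
    live = table.live_peers()
    if not live:
        raise RuntimeError("no live peers left for the remaining pieces")
    result: dict[int, str] = {}
    i = 0
    for piece, peer in sorted(pending.items()):
        if peer in live:
            result[piece] = peer
        else:
            result[piece] = live[i % len(live)]
            i += 1
    return result


# Fake clock: A, B and C check in at t=0; only A and C check in again at t=100.
now = [0.0]
table = PeerTable(timeout_s=120, clock=lambda: now[0])
for peer in ("A", "B", "C"):
    table.heard_from(peer)
now[0] = 100.0
table.heard_from("A")
table.heard_from("C")
now[0] = 150.0   # B has been silent for 150 s, past the timeout
new_plan = reassign({3: "B", 4: "A", 5: "B"}, table)
```

Pieces 3 and 5 were still owed by B, so they move to A and C. Completed pieces never appear in `pending`, which is why the transfer resumes rather than restarts.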

BitTorrent's tit-for-tat algorithm represents this perfectly. Share with me, I'll share with you. Refuse to contribute? Enjoy your dial-up speeds.
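
A minimal sketch of a tit-for-tat unchoke decision, in the spirit of BitTorrent's choking algorithm: regular upload slots go to the interested peers that have recently uploaded to us fastest, and one extra "optimistic" slot goes to a random other peer so newcomers can prove themselves. The slot count and the rate table are assumptions; real clients recompute this every few seconds and rotate the optimistic slot on a slower timer.

```python
import random
from typing import Optional


def choose_unchoked(rate_from: dict[str, float], interested: set[str],
                    regular_slots: int = 3,
                    rng: Optional[random.Random] = None) -> set[str]:
    """Return the peers we will upload to during the next round."""
    rng = rng if rng is not None else random.Random()
    # Reciprocation: rank interested peers by how fast they upload to us.
    ranked = sorted(interested, key=lambda p: (-rate_from.get(p, 0.0), p))
    unchoked = set(ranked[:regular_slots])
    # Optimistic unchoke: one random peer outside the top slots gets a chance.
    others = sorted(interested - unchoked)
    if others:
        unchoked.add(rng.choice(others))
    return unchoked


# Hypothetical upload rates (KB/s) that each peer has recently given us.
rates = {"A": 50.0, "B": 40.0, "C": 30.0, "D": 5.0, "freeloader": 0.0}
print(choose_unchoked(rates, set(rates), rng=random.Random(7)))
```

The freeloader is not cut off completely; it only gets bandwidth when the optimistic slot happens to land on it, which is why refusing to upload usually means crawling rather than zero.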

Types of Peer to Peer Network Applications

The architecture proves remarkably versatile. File sharing just scratches the surface.

Peer to Peer VPN Services

Instead of routing your internet traffic through a VPN company's servers, what if you bounced it through other users' devices?

That's exactly how peer to peer VPN services work. Hola became the poster child for this approach, attracting millions of users before security researchers exposed major privacy problems. You'd access Netflix through someone else's IP address in another country—sounds great! Except your connection simultaneously became an exit node for strangers, potentially facilitating anything from spam to much worse.

Your IP address got associated with whatever some random user decided to do. Not ideal.

The core concept remains sound for trusted networks, though. Companies with offices worldwide can create private peer meshes. An employee in Mumbai needs access to resources in Toronto? Route through a colleague's authenticated connection instead of backhauling everything through corporate headquarters. Saves bandwidth, reduces latency, cuts costs.

The difference between public and private peer to peer VPN implementations matters enormously. Public = security nightmare. Private with authentication and vetting = potentially useful tool.
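
As an illustration of "private with authentication," here is a minimal admission check, assuming every device in the private mesh shares one secret key and an administrator issues signed, expiring join tokens. This is a hypothetical scheme built on Python's `hmac` module; production mesh VPNs typically give each device its own key pair instead of a shared secret.

```python
import hashlib
import hmac
import time
from typing import Optional


def issue_token(secret: bytes, peer_id: str, expires_at: int) -> str:
    """Administrator side: sign 'peer_id|expiry' with the mesh secret."""
    payload = f"{peer_id}|{expires_at}"
    sig = hmac.new(secret, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}|{sig}"


def admit(secret: bytes, token: str, now: Optional[float] = None) -> bool:
    """Peer side: accept a connection only for an authentic, unexpired token."""
    try:
        peer_id, expires_at, sig = token.rsplit("|", 2)
        expiry = int(expires_at)
    except ValueError:
        return False          # malformed token
    payload = f"{peer_id}|{expires_at}"
    expected = hmac.new(secret, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig, expected):
        return False          # forged or tampered token
    current = time.time() if now is None else now
    return current < expiry


SECRET = b"example-mesh-secret"   # hypothetical; never hard-code real keys
token = issue_token(SECRET, "mumbai-laptop-17", expires_at=2_000_000_000)
```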

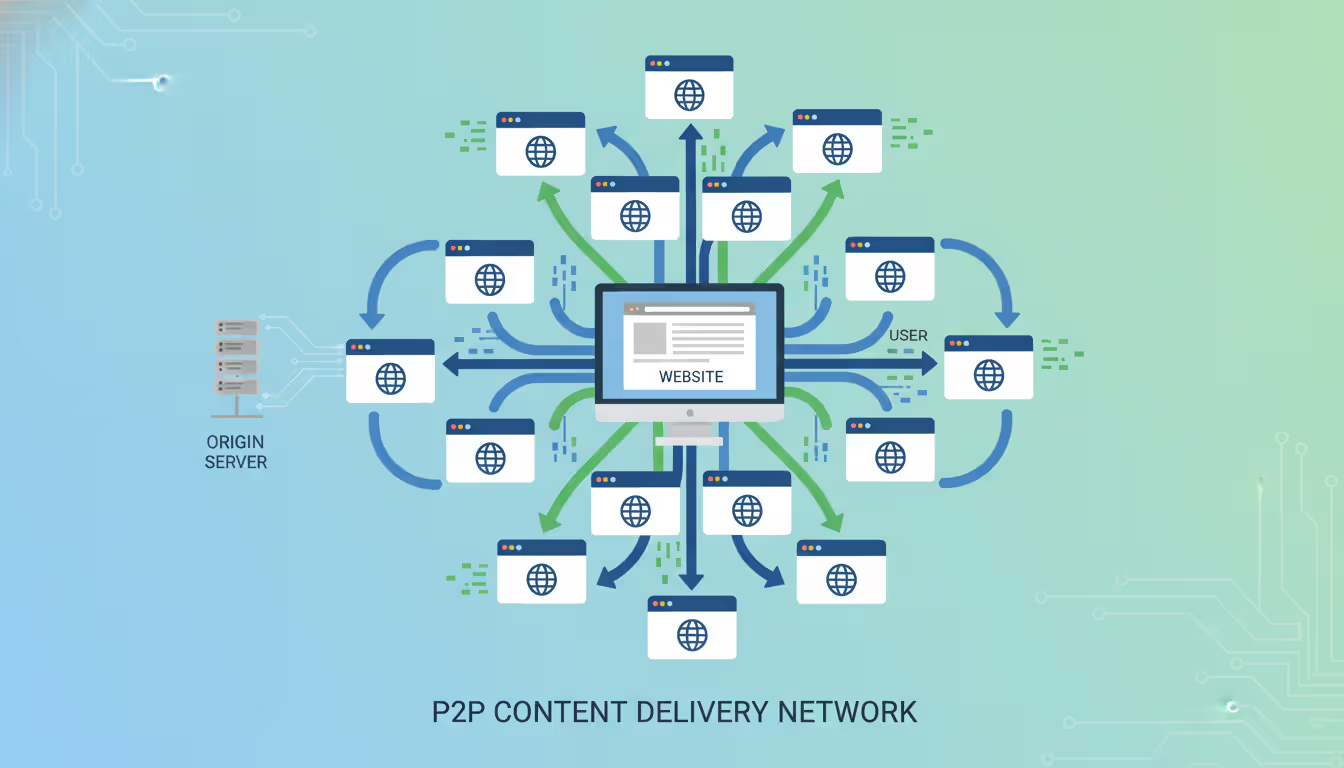

Peer to Peer CDN Solutions

Traditional content delivery networks park cached copies of websites on servers scattered globally. Expensive infrastructure.

Peer to peer CDN tech turns website visitors into mini-CDN nodes. While you have a page open, your browser holds the assets it has already fetched (typically video segments). Another visitor in your geographic area who is online at the same time might pull those same images, scripts, or video chunks directly from your device instead of from an origin server halfway around the world.
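
A minimal sketch of the source decision a peer-assisted CDN's coordination layer might make, assuming it knows each viewer's region, which assets their browser currently holds, and whether the tab is still open. It is written in Python for consistency with the other examples, although real services run this logic in JavaScript and on signaling servers; `Viewer` and `pick_source` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Viewer:
    peer_id: str
    region: str
    cached: set[str] = field(default_factory=set)   # assets this browser holds
    online: bool = True                              # tab still open?


def pick_source(asset: str, region: str, viewers: list[Viewer],
                origin: str = "origin") -> str:
    """Prefer an online nearby viewer that holds the asset; else use the origin."""
    for viewer in viewers:
        if viewer.online and viewer.region == region and asset in viewer.cached:
            return viewer.peer_id
    return origin


viewers = [
    Viewer("v1", "eu-west", {"/video/seg-001.ts"}),
    Viewer("v2", "us-east", {"/video/seg-001.ts", "/video/seg-002.ts"}),
]
```

Once a viewer closes the tab (`online = False`), requests from that region fall back to the origin, which is the practical limit of browser-based delivery.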

The numbers work out beautifully for viral content. That video everyone's watching? Each viewer makes it more available, not less. Server load drops while performance improves—the exact opposite of traditional hosting's scaling problems.

Peer5 (acquired by Microsoft) and Streamroot (acquired by CenturyLink, now Lumen) built commercial services around this model. JavaScript libraries coordinate everything in-browser. Signaling servers handle lightweight peer coordination, but the bandwidth-heavy content delivery happens edge-to-edge.

WebRTC provides the underlying browser technology making this possible. Direct browser-to-browser data channels, originally designed for video chat, turn out perfect for content distribution too.

File Sharing and Media Distribution

Yeah, torrents—we can't avoid mentioning them. BitTorrent dominates this space, though its public reputation suffers from piracy associations.

Here's what people miss: large companies have relied on BitTorrent internally. Facebook distributed server updates across its data centers using BitTorrent protocols. Blizzard Entertainment pushed multi-gigabyte game patches through peer-assisted downloads, converting millions of gamers into distribution infrastructure. Amazon S3 also offered BitTorrent downloads of publicly readable objects, though AWS has since deprecated the feature.

The protocol's efficiency becomes undeniable at scale. Twitter once distributed a 20TB analytics dataset via BitTorrent because centralized hosting would've cost a fortune and taken weeks.

Live streaming evolved these concepts for real-time delivery. Instead of downloading entire files first, streaming protocols prioritize sequential chunks while maintaining buffers from multiple peers. PPLive and SopCast pioneered this for live TV broadcasts, though legal challenges limited Western adoption.
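
A minimal sketch of one piece-selection policy that fits this description, assuming a fixed-size playback window: inside the window, fetch missing pieces strictly in order so playback never stalls; beyond it, fall back to rarest-first (BitTorrent's usual policy). This is an illustrative policy, not PPLive's or SopCast's actual algorithm, and `next_piece` is a hypothetical name.

```python
from typing import Optional


def next_piece(have: set[int], availability: dict[int, int],
               playhead: int, window: int) -> Optional[int]:
    """Choose the next piece to request.

    availability maps piece index -> number of connected peers offering it.
    """
    # Only pieces we still need, that someone offers, and that haven't played yet.
    missing = {p: n for p, n in availability.items()
               if p not in have and n > 0 and p >= playhead}
    urgent = [p for p in missing if p < playhead + window]
    if urgent:
        return min(urgent)                                  # fill the buffer in order
    if missing:
        return min(missing, key=lambda p: (missing[p], p))  # rarest first
    return None


availability = {0: 5, 1: 5, 2: 1, 3: 4, 4: 1, 5: 2, 6: 9}
print(next_piece({0}, availability, playhead=0, window=3))        # 1
print(next_piece({0, 1, 2}, availability, playhead=0, window=3))  # 4
```

Piece 2 is among the rarest in the swarm, but inside the playback window order beats rarity, so piece 1 goes first; rarity only decides once the buffer is covered.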

Academic and scientific communities share massive datasets this way. CERN distributes experimental data. Internet Archive hosts petabytes of public domain content. Linux distributions offer torrent downloads alongside traditional HTTP—the torrent option frequently proves faster and more reliable.

Peer to Peer Platform Examples and Use Cases

Real-world implementations show the technology's range.

BitTorrent accounted for somewhere between 3% and 5% of all internet traffic in 2024. That's staggering for a protocol many people only associate with piracy. The µTorrent client alone claims over 150 million users. Legitimate uses include World of Warcraft updates, Ubuntu downloads, archive.org's massive media library, and pandemic-era academic paper distribution when university servers couldn't handle demand.

Blockchain networks took peer-to-peer architecture to unprecedented scale. Bitcoin nodes (roughly 15,000 globally as of 2024) each maintain complete transaction histories going back to 2009. Ethereum's network exceeds 5,000 full nodes. No central authority validates transactions—the network reaches consensus through distributed algorithms. Smart contract platforms extended this to executable code running across thousands of machines simultaneously.

IPFS (InterPlanetary File System) reimagines web infrastructure entirely. Instead of URLs pointing to locations (amazon.com/thing), IPFS uses content addresses: unique identifiers derived from the data itself. Request a file, and IPFS retrieves it from any peer storing a copy. The project envisions a permanent web resistant to link rot and censorship. IPFS mirrors of Wikipedia exist. NFT projects use it for decentralized asset storage. Brave added native IPFS support in 2021, though it later removed that integration.
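
A minimal sketch of content addressing, assuming a bare SHA-256 hex digest as the address and peers modeled as plain dictionaries. Real IPFS content identifiers (CIDs) wrap the hash in a multihash with codec information and split large files into a Merkle DAG; `address_of` and `fetch` are hypothetical helpers.

```python
import hashlib


def address_of(data: bytes) -> str:
    """Content address: a SHA-256 digest of the bytes themselves."""
    return hashlib.sha256(data).hexdigest()


def fetch(address: str, peers: list[dict[str, bytes]]) -> bytes:
    """Ask peers in turn; accept the first copy whose bytes match the address."""
    for store in peers:
        data = store.get(address)
        if data is not None and address_of(data) == address:
            return data
    raise LookupError(f"no peer returned valid content for {address}")


page = b"<h1>Hello, permanent web</h1>"
addr = address_of(page)
honest_peer = {addr: page}
lying_peer = {addr: b"<h1>tampered</h1>"}
content = fetch(addr, [lying_peer, honest_peer])
```

Because the address is derived from the content, it doesn't matter which peer answers: a tampered copy simply fails to match and the client moves on.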

Resilio Sync (formerly BitTorrent Sync) lets teams synchronize files across devices without cloud middlemen. Changes propagate through local network connections when available—gigabit LAN speeds instead of throttled internet uploads. Falls back to internet routing when necessary. Small architecture firms, video production teams, and remote research groups use it to move massive files without Dropbox bills or Google Drive limits.

WebRTC applications enable browser-based video calls with direct peer connections. Jitsi Meet, an open-source video conferencing platform, uses a direct peer-to-peer connection for one-on-one calls, avoiding media server costs for those calls. Participants connect directly once their browsers negotiate the connection, and Jitsi switches to its server-side video bridge when a third person joins. Full-mesh WebRTC calls in general only scale to about 4-5 people before requiring server infrastructure, because every participant must upload a separate stream to everyone else.
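
The arithmetic behind that ceiling is short. In a full mesh, each of n participants sends a separate copy of their stream to the other n - 1, so per-person upload grows linearly and the number of connections grows quadratically. The 1,500 kbps stream bitrate below is an illustrative assumption.

```python
def mesh_call_load(participants: int, stream_kbps: int) -> dict[str, int]:
    """Resource needs of a full-mesh call where everyone sends to everyone."""
    others = participants - 1
    return {
        "connections": participants * others // 2,     # one link per pair
        "upload_kbps_each": others * stream_kbps,      # a copy per other person
        "download_kbps_each": others * stream_kbps,    # a stream from each
    }


for n in (2, 5, 10):
    print(n, mesh_call_load(n, stream_kbps=1500))
```

At five people each participant already needs about 6 Mbps of upload for video alone; a server that forwards streams lets each person upload a single copy instead.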

Folding@home harnesses volunteer computing power for protein folding research. At the COVID-19 pandemic peak in 2020, the network exceeded 2.4 exaFLOPS, faster than the world's top 500 supercomputers combined. Participants download work units, process them locally using spare CPU/GPU cycles, and return results. The project's researchers gained computational resources that would otherwise have required billions in hardware investment.

Advantages and Disadvantages of Peer-to-Peer Networks

Every architecture involves trade-offs. Here's how peer-to-peer stacks up against traditional client-server models:

| Feature | Peer to Peer | Client-Server |
|---|---|---|
| Cost | Each participant supplies their own bandwidth and storage, so infrastructure costs stay minimal regardless of user count | You're paying for servers, bandwidth, and scaling capacity; costs climb directly with popularity |
| Scalability | Adding users actually helps: more peers mean more available resources throughout the network | Growth requires planning and investment: server upgrades, load balancers, CDN contracts |
| Security | Anyone can join public networks, so enforcing consistent security policies becomes nearly impossible | You control everything from one place: implement encryption, access controls, and monitoring uniformly |
| Performance | Wildly variable: amazing with many quality peers, terrible when few are available or connections are slow | Predictable and consistent: you know what users get because you control the infrastructure |
| Maintenance | Changes require convincing users to update their software; no forced deployments | Push updates immediately; users get new features and fixes whether they want them or not |
| Best Use Cases | Content distribution, file sharing where users contribute resources, applications benefiting from decentralization | Banking, healthcare, e-commerce, anything requiring guaranteed uptime and regulatory compliance |

The cost math gets interesting for startups. Launch a content platform using peer-to-peer architecture, and bandwidth costs grow far more slowly as you go from 100 users to 100,000, though you still pay for signaling, tracking, and seeding the initial copies. Compare that to traditional hosting, where success literally becomes expensive: viral traffic can generate five-figure bills overnight.
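
A rough way to see the effect, assuming a placeholder egress price per gigabyte and an assumed fraction of bytes served by peers instead of the origin (the `p2p_offload` parameter). Neither number is a real quote; the point is that the bill scales with the share of traffic the origin still carries.

```python
def monthly_origin_bill(users: int, gb_per_user: float, price_per_gb: float,
                        p2p_offload: float = 0.0) -> float:
    """Origin egress cost when peers serve a fraction p2p_offload of the bytes."""
    if not 0.0 <= p2p_offload <= 1.0:
        raise ValueError("p2p_offload must be between 0 and 1")
    origin_gb = users * gb_per_user * (1.0 - p2p_offload)
    return origin_gb * price_per_gb


PRICE = 0.05   # hypothetical $/GB
for users in (100, 100_000):
    plain = monthly_origin_bill(users, 2.0, PRICE)
    assisted = monthly_origin_bill(users, 2.0, PRICE, p2p_offload=0.9)
    print(f"{users:>7} users: ${plain:,.2f} without peers, ${assisted:,.2f} with 90% offload")
```

The assisted bill still grows with users unless the offload share rises too, which in practice depends on how many peers are online at the same time.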

Scalability extends beyond bandwidth. Distributed computing projects prove this—SETI@home analyzed radio telescope data using volunteer computers instead of supercomputers. Processing capacity grew with participation.

But security concerns kill many peer-to-peer proposals before they start. Healthcare data traversing random people's computers? Financial records distributed across an open network? Compliance officers reject these scenarios immediately. When regulations demand knowing exactly where data resides and travels, decentralization becomes a liability.

Performance unpredictability frustrates users expecting Netflix-quality streaming. A peer-to-peer video service might deliver flawless 4K one evening, then buffer endlessly the next because quality peers logged off. Users don't care why—they just know it's not working.

Security Considerations in Peer to Peer Networks

Security gets complicated when you can't trust everyone participating.

Content verification prevents malicious peers from distributing corrupted or weaponized files. Every legitimate file gets a cryptographic hash: a unique digital fingerprint. Protocols like BitTorrent go further and publish a hash for every piece, so your client checks each piece as it arrives, before anything is assembled or opened. Matches the expected value? Keep it. Mismatch? Either corruption occurred during transfer or someone's trying something nasty. Discard the piece, fetch it again from another source, and blacklist the peer that sent it.
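
A minimal sketch of per-piece verification, assuming the expected hashes come from a trusted source such as a torrent file. BitTorrent v1 uses SHA-1 piece hashes (v2 moved to SHA-256); the 16-byte piece size is shrunk for the demo, and `accept_piece` plus the banned-peer set are hypothetical.

```python
import hashlib

PIECE_SIZE = 16   # demo value; real pieces are typically hundreds of KiB or more


def piece_hashes(data: bytes, piece_size: int = PIECE_SIZE) -> list[str]:
    """What a publisher records up front: one SHA-1 digest per piece."""
    return [hashlib.sha1(data[i:i + piece_size]).hexdigest()
            for i in range(0, len(data), piece_size)]


def accept_piece(index: int, payload: bytes, expected: list[str],
                 sender: str, banned: set[str]) -> bool:
    """Keep a piece only if it hashes to the published value."""
    if hashlib.sha1(payload).hexdigest() == expected[index]:
        return True
    banned.add(sender)   # bad data: discard it and stop trusting the sender
    return False


original = b"The quick brown fox jumps over the lazy dog"
expected = piece_hashes(original)
banned: set[str] = set()
```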

This works great when you know the correct hash beforehand. Torrent files and magnet links include these hashes explicitly. The challenge comes when discovering content on the network itself—how do you verify the hash without already knowing it?

Encryption secures the pipe between peers. Many modern protocols wrap traffic in TLS or equivalent encryption. Network administrators can't read the payloads. ISPs can't easily throttle specific content. Man-in-the-middle attacks become much harder.

But encryption only protects transmission. It doesn't verify a peer's intentions. An encrypted connection to a malicious peer still delivers malicious content—just privately.

Authentication mechanisms help filter participants. Private networks require credentials before allowing connections. Enterprise implementations might use certificate-based authentication tied to employee identities. Public networks implement reputation systems instead—peers accumulate trust scores based on behavior history. Consistently provide valid content with stable connections? Your reputation climbs. Distribute corrupted files? Reputation tanks, leading to network-wide shunning.
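
A minimal sketch of one way a client could keep trust scores, assuming an exponentially weighted average of good and bad interactions and a fixed shunning threshold. The weights and threshold are arbitrary illustration values, and this keeps scores locally; sharing them network-wide needs extra defenses against peers lying about each other.

```python
class Reputation:
    """Exponentially weighted trust score per peer, between 0 and 1."""

    def __init__(self, alpha: float = 0.2, start: float = 0.5,
                 ban_below: float = 0.2) -> None:
        self.alpha = alpha          # how strongly the latest interaction counts
        self.start = start          # score for peers we know nothing about
        self.ban_below = ban_below  # shun peers whose score drops under this
        self.scores: dict[str, float] = {}

    def record(self, peer: str, good: bool) -> float:
        old = self.scores.get(peer, self.start)
        new = (1 - self.alpha) * old + self.alpha * (1.0 if good else 0.0)
        self.scores[peer] = new
        return new

    def is_shunned(self, peer: str) -> bool:
        return self.scores.get(peer, self.start) < self.ban_below


rep = Reputation()
for _ in range(5):
    rep.record("reliable-peer", good=True)
for _ in range(5):
    rep.record("corrupting-peer", good=False)
```

With these settings, four bad pieces in a row leave a new peer just above the cutoff and the fifth pushes it under. Raising `alpha` makes reputations react faster, at the cost of letting one bad transfer hurt a good peer more.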

Sybil attacks exploit open networks' inability to verify unique identities. An attacker creates hundreds or thousands of fake peers—all actually controlled by one person. With enough fake identities, they can manipulate routing decisions, launch denial-of-service attacks, or conduct surveillance on network traffic patterns.

Bitcoin sidesteps the identity problem by weighting influence by computational work rather than by node count: under proof-of-work, a thousand fake nodes gain no extra say in consensus without a matching share of real mining power. Some networks tie identities to IP addresses (limited effectiveness, since attackers rent botnets). Others implement social graphs where new identities need endorsements from established, trusted peers.
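
A minimal proof-of-work sketch, assuming single SHA-256 and a "leading zero bits" difficulty rule (Bitcoin itself uses double SHA-256 compared against a numeric target). It shows the asymmetry the defense relies on: finding a valid nonce takes about 2^difficulty attempts on average, while checking one takes a single hash, so influence costs real computation no matter how many identities an attacker creates.

```python
import hashlib


def leading_zero_bits(digest: bytes) -> int:
    """Count the zero bits at the front of a digest."""
    return len(digest) * 8 - int.from_bytes(digest, "big").bit_length()


def verify(data: bytes, nonce: int, difficulty_bits: int) -> bool:
    """Cheap check: one hash tells whether the nonce meets the difficulty."""
    digest = hashlib.sha256(data + nonce.to_bytes(8, "big")).digest()
    return leading_zero_bits(digest) >= difficulty_bits


def mine(data: bytes, difficulty_bits: int) -> int:
    """Expensive search: about 2**difficulty_bits attempts on average."""
    nonce = 0
    while not verify(data, nonce, difficulty_bits):
        nonce += 1
    return nonce


block = b"previous-block-hash|transactions"   # hypothetical block contents
nonce = mine(block, difficulty_bits=12)
```

Each extra difficulty bit doubles the expected mining work while verification stays a single hash.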

Eclipse attacks isolate specific targets by surrounding them with attacker-controlled peers. The victim only connects to malicious nodes, receiving a filtered view of network reality. For blockchain networks, this enables double-spending attacks. For file sharing, it enables censorship—the victim can't find content the attacker wants suppressed.

Defenses include connecting to geographically diverse peers, maintaining some long-term connections to known-good peers rather than constantly churning connections, and implementing anomaly detection for unusual network behavior patterns.
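
A minimal sketch of one diversity rule, assuming IPv4 addresses and treating each /16 network as one "neighborhood" so that a single address block cannot supply more than one of our connections. Network-prefix diversity stands in for geographic diversity here; Bitcoin Core applies a similar idea by grouping addresses by network when choosing outbound peers. `diverse_peers` is a hypothetical helper, and the addresses come from documentation ranges.

```python
import ipaddress


def diverse_peers(candidates: list[str], limit: int,
                  prefix_len: int = 16) -> list[str]:
    """Pick up to `limit` IPv4 peers, at most one per /prefix_len network."""
    chosen: list[str] = []
    used: set[ipaddress.IPv4Network] = set()
    for addr in candidates:
        group = ipaddress.IPv4Network(f"{addr}/{prefix_len}", strict=False)
        if group in used:
            continue   # same address block as a peer we already picked
        used.add(group)
        chosen.append(addr)
        if len(chosen) == limit:
            break
    return chosen


# Three of these candidates share one /16, as an attacker's rented block might.
candidates = ["203.0.113.5", "203.0.113.9", "198.51.100.2",
              "203.0.113.200", "192.0.2.44", "198.51.100.77"]
print(diverse_peers(candidates, limit=8))
```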

Traffic analysis remains possible despite encryption. Watch who connects to whom, when, and for how long—patterns emerge. Even without reading content, observers can deduce what's being transferred. Correlation attacks match download patterns across the network to identify participants in sensitive communications.

Tor's onion routing provides stronger anonymity by bouncing traffic through multiple intermediaries, making traffic analysis much harder. But latency increases proportionally—each hop adds delay.

We've watched peer-to-peer evolve from Napster's wild west to blockchain's institutional adoption. The difference? Mature implementations layer defenses comprehensively rather than picking one protection and hoping for the best. Successful networks combine encryption with authentication, add reputation systems and anomaly detection, then monitor continuously for emerging threats. Single-point solutions fail against motivated attackers.

— Jennifer Martinez

When to Use Peer to Peer vs Client-Server Networks

Choosing the right architecture depends on matching technical characteristics to actual requirements.

Peer-to-peer makes sense when:

You're distributing content, especially large files or streaming media. The bandwidth requirements alone make centralized delivery prohibitively expensive at scale. User-generated content platforms particularly benefit—consumers naturally become producers.

Budget constraints eliminate traditional hosting options. Bootstrapped startups can't afford server farms and CDN contracts. Open-source projects often lack commercial funding entirely. Community-contributed bandwidth may be the only viable option.

Censorship resistance matters significantly. Journalists in authoritarian countries need communication channels governments can't easily shut down. Activists distributing politically sensitive information require networks without centralized control points vulnerable to seizure or legal pressure.

Network effects directly improve service quality. Peer-assisted content delivery gets faster with more peers. Distributed computing projects gain capacity as participation grows. More users genuinely make the service better, a rare alignment of incentives.

Client-server makes sense when:

Sensitive data demands strict control. HIPAA-regulated healthcare systems, PCI-DSS-compliant payment processing, FERPA-governed educational records: these regimes expect organizations to know where data lives and who can access it. Open peer-to-peer architectures fundamentally conflict with these requirements.

Performance consistency matters more than peak potential. Real-time applications—voice/video calls, online gaming, financial trading—require predictable latency. Service-level agreements promise specific uptime and performance guarantees. You can't SLA something you don't control.

Complex transactions and queries happen frequently. Relational databases excel at atomic transactions with ACID guarantees. Distributed consensus protocols exist but impose significant overhead. If your application needs complex JOIN operations or strong consistency, centralized databases typically win.

Frequent content updates require immediate propagation. Push a security patch to centralized servers, and every user gets it instantly. Updating peer-to-peer networks means waiting for users to voluntarily install new client versions—slow and incomplete.

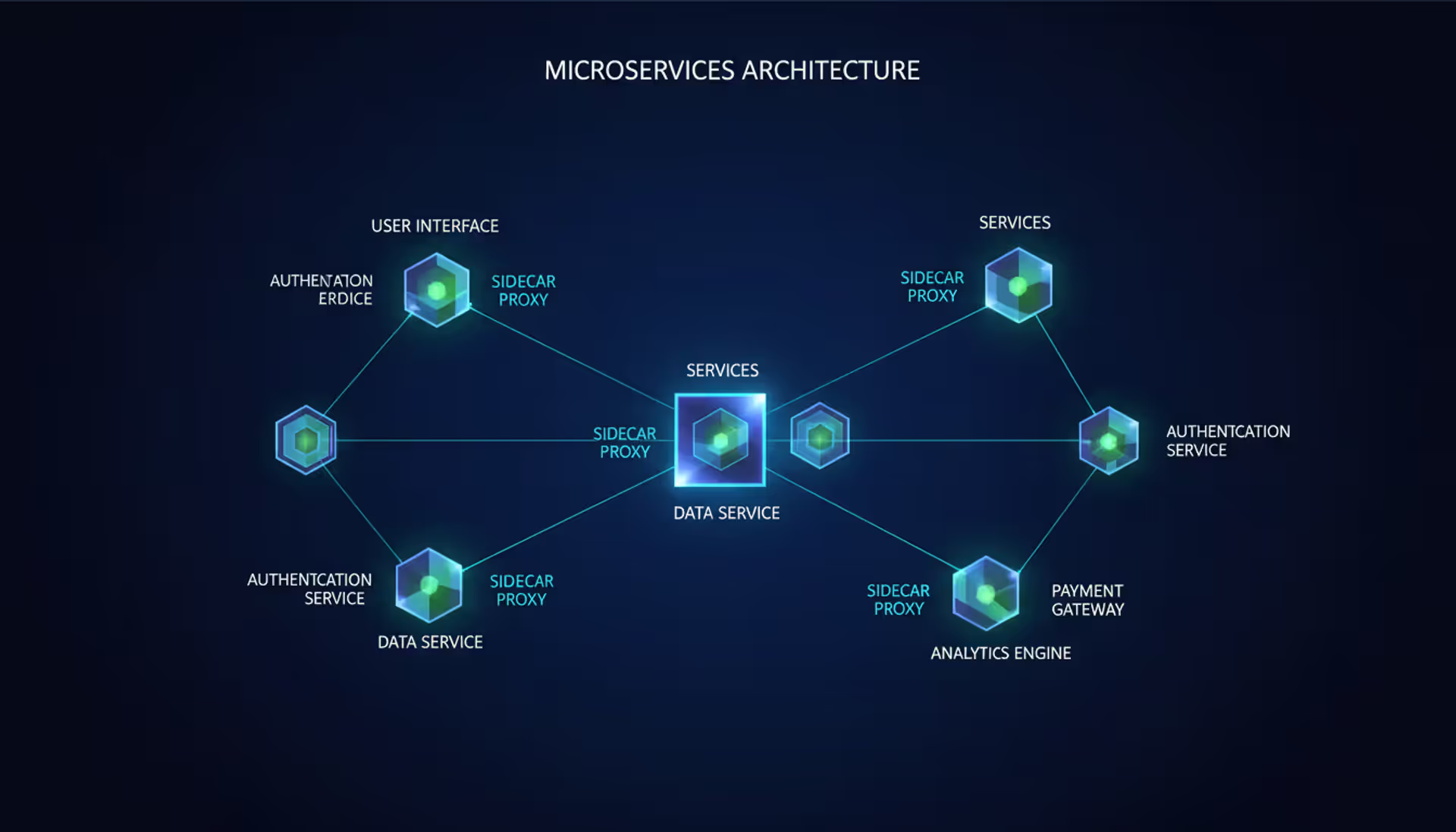

Hybrid approaches often deliver optimal results. Authenticate users centrally, provide search and indexing services from servers, then distribute actual content peer-to-peer. Users get security and discoverability from centralization plus efficiency and scale from distribution.

Small businesses with 8-10 computers sharing printers and files don't need dedicated servers. Peer-to-peer handles these basic needs without extra hardware costs. Somewhere around 15-25 users, though, complexity generally outgrows pure peer-to-peer, and centralized management typically justifies itself through simplified backups, security policy enforcement, and performance reliability. The exact transition point varies by organization.

Peer-to-peer networks flip the traditional computing hierarchy upside down by eliminating central coordinators and distributing work across participants. This architectural shift moves us from centralized chokepoints toward resilient systems that strengthen as more people join.

Knowing when these networks fit (content distribution, bandwidth-intensive applications, scenarios benefiting from decentralization) and when centralization makes more sense (sensitive data, regulatory compliance, performance guarantees) determines whether your architecture works for you or against you.

Security demands attention regardless of approach chosen. Layer defenses comprehensively: encryption protecting transmission, authentication filtering participants, verification catching corruption, reputation systems identifying bad actors. No single protection suffices against determined threats.

The technology keeps evolving beyond file sharing origins. Blockchain networks demonstrate peer-to-peer principles scaling to hundreds of billions in value. Distributed web infrastructure experiments with IPFS suggest possibilities beyond today's centralized internet. WebRTC brings peer-to-peer capabilities directly into browsers without installing anything.

Whether evaluating peer to peer VPN options for privacy, investigating peer to peer CDN technology for content delivery, or exploring peer to peer platform possibilities for your next project, the fundamental calculus remains unchanged: centralization offers control, decentralization provides resilience. Hybrid approaches frequently deliver the best of both.

The networks succeeding long-term blend these philosophies intelligently—centralizing what benefits from coordination while distributing what gains from scale.
