What Is a Cloud Server?

Think of a cloud server as a computer that doesn't physically exist in one place. Instead of buying a metal box that sits in your office closet, you're renting computing power from massive data centers. The magic happens through virtualization—software that chops up powerful physical machines into dozens of separate virtual environments. Your slice runs completely isolated from everyone else's, with its own operating system and dedicated resources.

Here's what makes this practical: you skip the $5,000 upfront hardware cost. No more worrying about failed hard drives at midnight or whether your server can handle next month's traffic spike. The provider deals with broken components, electricity bills, and keeping the building at exactly 68°F. You connect through your browser or terminal, configure what you need, and adjust your computing power whenever demand changes.

Behind the scenes, a management layer handles the complexity. Need more RAM? The hypervisor grabs it from the resource pool—no technician required, no waiting for shipping. This elasticity works beautifully for online stores preparing for Black Friday, developers spinning up test environments for a few hours, or bootstrapped startups that can't predict next quarter's growth. You're essentially borrowing from a shared pool of enterprise hardware that would cost six figures to replicate on your own.

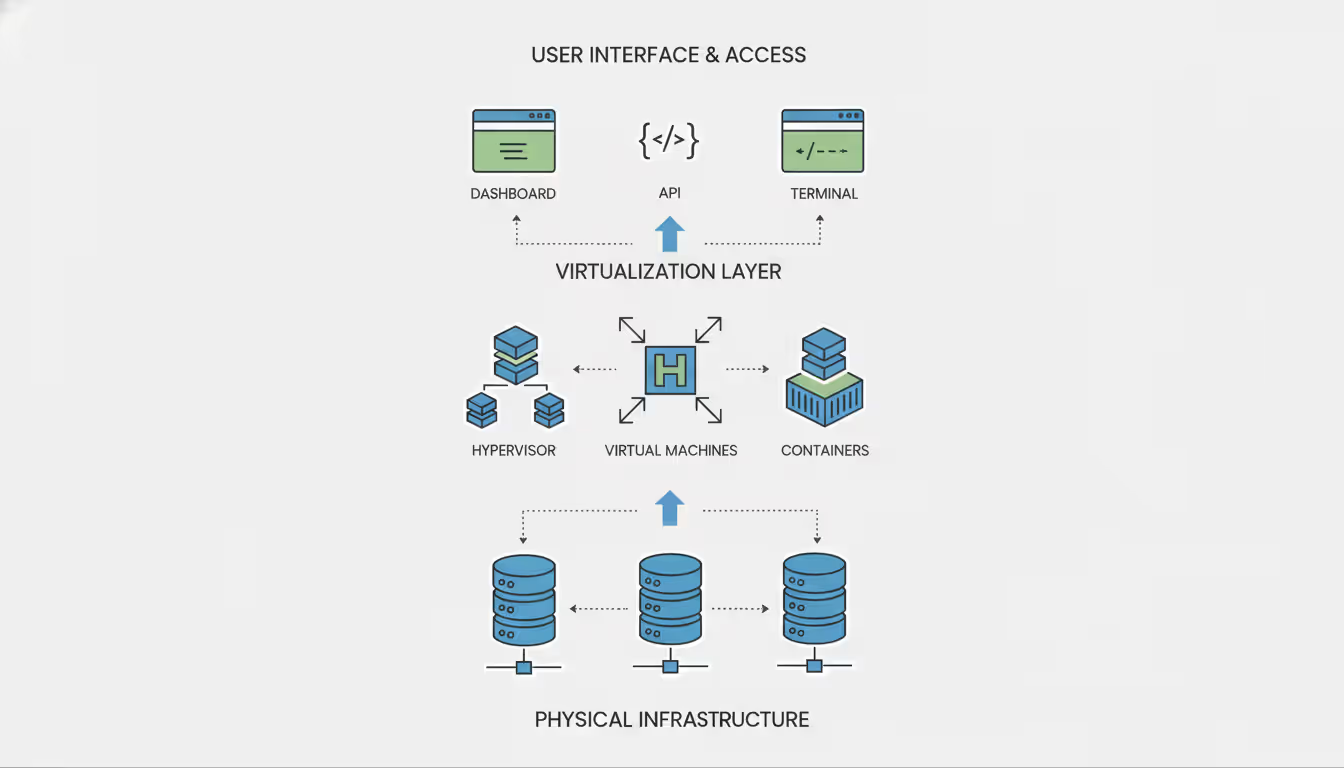

Understanding Cloud Server Architecture

Picture cloud infrastructure as a three-layer cake. The bottom layer holds the actual equipment—thousands of servers mounted in racks, storage systems measured in petabytes, networking gear pushing 100 Gbps connections. These facilities maintain backup power that could run a small town, cooling systems that prevent $2 million worth of hardware from overheating, and security that rivals Fort Knox. Building just one of these data centers costs upward of $500 million.

Above that sits the virtualization layer. Software like KVM, VMware ESXi, or Microsoft Hyper-V becomes the puppet master, carving up physical machines into virtual ones. It allocates CPU cycles, memory pages, and storage blocks to each instance. The clever part? Each customer's environment stays completely isolated. Your neighbor's cryptocurrency mining operation can't peek at your database or steal your processing time. The hypervisor acts as a strict bouncer.

The top layer is where you actually work. APIs, dashboards, and command-line tools let you spin up servers, attach storage, configure networks, and watch performance metrics. This orchestration software also handles the panic situations. When a physical server's motherboard dies at 3 AM, affected virtual machines automatically restart on healthy hardware within five to ten minutes. No pager duty required.

The shift to cloud infrastructure has fundamentally changed how businesses approach capacity planning. Instead of overprovisioning for peak loads, organizations now adjust resources in real time, paying only for what they consume.

— Marcus Chen

Multi-tenancy makes the economics work. Hundreds of customers share the same physical servers, but virtualization keeps everyone in separate sandboxes with guaranteed resources. You get access to hardware that individually costs $40,000, but you're splitting that investment with dozens of others. The provider achieves massive scale; you get enterprise features at startup prices.

Network architecture works differently than you'd expect. Instead of physical cables dictating your network layout, software-defined networking (SDN) creates virtual networks that reconfigure instantly. Want a private subnet? Done in thirty seconds. Need to add firewall rules? Click, click, saved. This flexibility enables network designs that would require a $50,000 equipment purchase and a week of cabling in traditional setups.

Author: Vanessa Norwood;

Source: clatsopcountygensoc.com

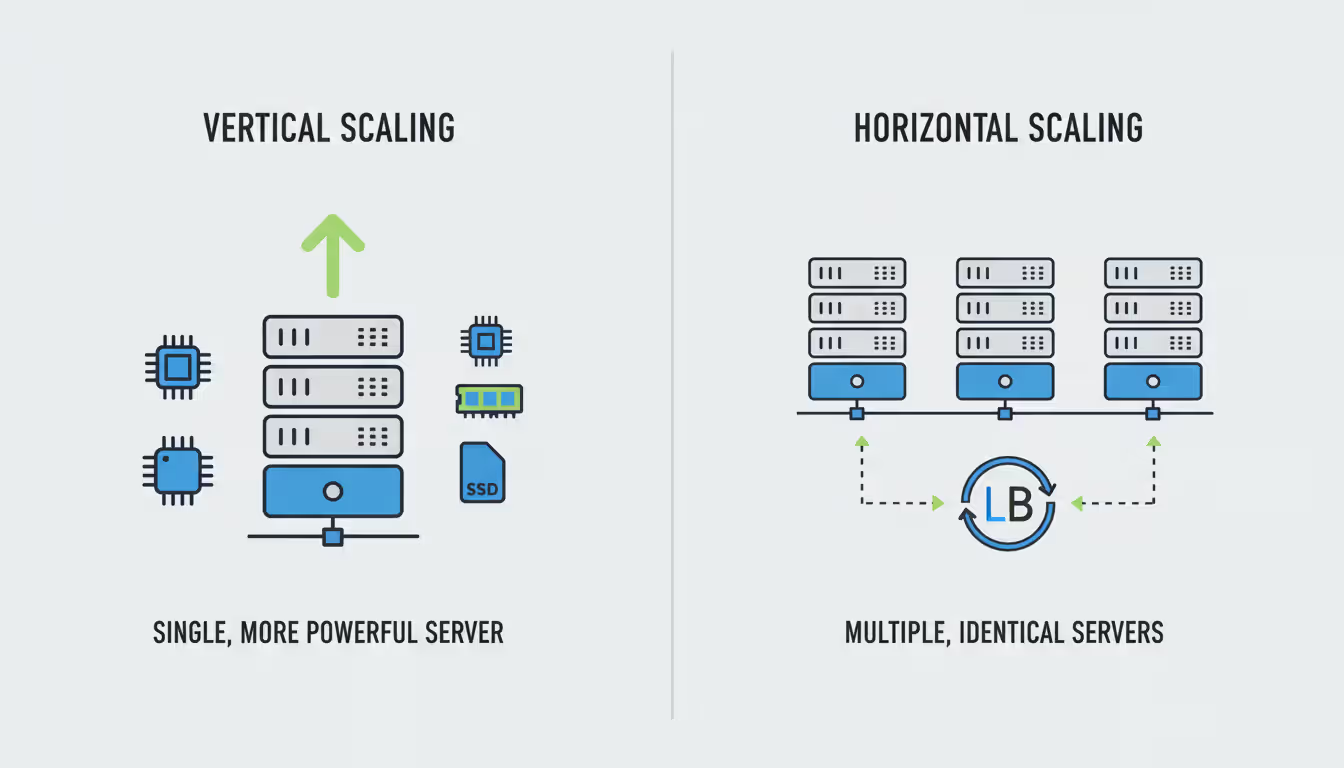

How Cloud Server Virtualization Enables Scalability

Virtualization puts a translation layer between physical hardware and your operating system. One beefy machine with 128 cores and 512GB of RAM can host fifty or more virtual machines, each running different operating systems. The hypervisor plays traffic cop, scheduling CPU time and memory access so everyone gets their fair share.

You'll encounter two main virtualization styles. Full virtualization creates complete virtual machines—each gets its own kernel and operating system. Every VM thinks it owns the hardware. The hypervisor translates between the guest OS and actual components. Maximum isolation, total compatibility, but there's a tax. Each VM needs its own OS installation, eating up 5-10GB of storage and several hundred megabytes of RAM just for the operating system.

Containers take a different approach. They share the host's operating system kernel but maintain isolated spaces for applications. Docker images might be 50MB versus 5GB for full VMs. Deployment happens in seconds instead of minutes. Kubernetes has made this architecture standard for modern applications. The tradeoff? All containers on one host share that host's kernel, so a Linux host can't natively run Windows containers, or the other way around.

Resource allocation comes in two flavors. Static allocation reserves specific CPU and RAM amounts for each instance. You'll never face a resource shortage, but you're paying for capacity that might sit idle 70% of the time. Dynamic allocation lets the hypervisor overcommit—it might provision 200 virtual CPUs on a 64-core physical machine, betting that not everyone maxes out simultaneously. Higher density means better economics, but someone needs to monitor for resource contention.
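The overcommit arithmetic above can be sketched in a few lines. This is an illustration only: the function names are made up for this example, and the contention check is a deliberately crude stand-in for what a real hypervisor scheduler measures.

```python
def overcommit_ratio(virtual_cpus: int, physical_cores: int) -> float:
    """Return how many virtual CPUs are promised per physical core."""
    if physical_cores <= 0:
        raise ValueError("physical_cores must be positive")
    return virtual_cpus / physical_cores


def is_contended(busy_vcpus: int, physical_cores: int) -> bool:
    """True when more vCPUs want to run than there are cores to run them."""
    return busy_vcpus > physical_cores


# Figures from the paragraph above: 200 vCPUs on a 64-core host.
ratio = overcommit_ratio(200, 64)
print(f"overcommit ratio: {ratio:.3f}:1")

# Hypothetical moment in time: 80 of those vCPUs are busy at once.
print("contention right now:", is_contended(80, 64))
```

As long as the number of busy vCPUs stays at or below 64, nobody waits. The monitoring mentioned above exists to catch the moments when it doesn't.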

Vertical scaling means beefing up an existing server. Most providers let you add CPU, RAM, or storage with a quick restart—maybe five minutes of downtime. Horizontal scaling adds more servers and spreads traffic across them. Web applications typically scale horizontally behind a load balancer. Databases often scale vertically until you hit the limits of the biggest available instance, then you're looking at sharding or read replicas.
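Horizontal scaling comes down to two questions: how many servers do you need, and how do requests get spread across them? Here's a minimal sketch. The per-instance capacity and backend names are hypothetical, and round-robin is just one simple balancing policy, not necessarily what your provider's load balancer uses.

```python
import math
from itertools import cycle


def instances_needed(requests_per_second: float, capacity_per_instance: float) -> int:
    """Smallest number of identical servers that covers the load (at least one)."""
    if capacity_per_instance <= 0:
        raise ValueError("capacity_per_instance must be positive")
    return max(1, math.ceil(requests_per_second / capacity_per_instance))


def round_robin(backends: list, request_count: int) -> list:
    """Assign each request to the next backend in turn, wrapping around."""
    pool = cycle(backends)
    return [next(pool) for _ in range(request_count)]


# Hypothetical load: 950 requests/second, each server handles about 200.
count = instances_needed(950, 200)
backends = [f"web-{n}" for n in range(1, count + 1)]
print(count, "instances:", backends)
print(round_robin(backends, 7))
```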

Live migration technology is genuinely impressive. The hypervisor can move running VMs between physical hosts without dropping connections. If a server needs maintenance or starts showing disk errors, affected instances transfer to healthy hardware while still processing requests. Your users never notice. This capability is what separates modern cloud infrastructure from the old "pray the hardware doesn't fail" approach.

Cloud Server vs VPS: Key Differences Explained

People use "cloud server" and "VPS" interchangeably, but the architecture tells different stories. A traditional VPS lives on one physical server. You're sharing that box with other VPS instances. If the hardware dies—bad motherboard, failed RAID controller, whatever—every VPS on that machine goes offline until someone physically repairs it. I've seen that take anywhere from two hours to two days.

Cloud servers spread across a cluster of physical machines. Storage replicates across multiple drives or nodes. Your instance can migrate between hosts. When hardware fails in a cloud environment, orchestration software automatically restarts affected instances elsewhere. Usually happens in under ten minutes.
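The automatic restart described above can be pictured as a small placement function. This is a toy model, not any provider's scheduler: the host and instance names are hypothetical, and "pick the least-loaded healthy host" is an assumed policy chosen for simplicity.

```python
def reschedule(placements: dict, failed_host: str, healthy_hosts: list) -> dict:
    """Return new placements with every instance moved off the failed host."""
    if not healthy_hosts:
        raise RuntimeError("no healthy hosts left to restart instances on")

    # Count how many instances each healthy host already runs.
    load = {host: 0 for host in healthy_hosts}
    for host in placements.values():
        if host in load:
            load[host] += 1

    updated = dict(placements)
    for instance in sorted(placements):
        if placements[instance] == failed_host:
            # Least-loaded host wins; ties break alphabetically.
            target = min(healthy_hosts, key=lambda h: (load[h], h))
            updated[instance] = target
            load[target] += 1
    return updated


before = {"web-1": "host-a", "web-2": "host-a", "db-1": "host-b"}
after = reschedule(before, failed_host="host-a", healthy_hosts=["host-b", "host-c"])
print(after)
```

On a traditional VPS there is no second host to pick from, which is the whole difference.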

| Feature | Cloud Server | VPS |
| --- | --- | --- |
| Infrastructure | Distributed across clustered physical servers | Lives on one physical machine |
| Scalability | Add resources instantly, often without restarting | Limited by the physical host; usually requires downtime |
| Pricing Model | Pay-per-use billing (hourly/monthly) or reserved discounts | Fixed monthly cost whether you use 10% or 100% |
| Performance Consistency | Guaranteed resources available; less "noisy neighbor" impact | Can suffer when other VPS instances on same host get busy |
| Best Use Cases | Production apps, variable traffic, high availability needs | Development servers, predictable loads, budget-conscious hosting |

Performance consistency varies wildly depending on who you're paying. Budget VPS providers often oversell—they might provision 800% of actual physical resources, hoping customers won't all peak together. When several VPS instances on the same host get hammered, everyone's performance tanks. I've seen CPU steal time hit 40% during peak hours on oversold hosts. Cloud providers offering guaranteed resources limit overcommitment ratios, preventing this nightmare scenario.

Pricing reflects these architectural differences. VPS hosting charges a flat monthly rate for specific resources. $20 per month whether your server handles 10 requests or 10,000. Cloud servers typically bill for actual consumption measured hourly or per minute. Great for workloads that run intermittently or see unpredictable demand. Less great for that database server running 24/7 at 80% CPU, where reserved instances or flat-rate plans start looking attractive.
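To see where the crossover sits, compare the two billing models directly. In this sketch the $20 flat price comes from the paragraph above, while the $0.04 hourly rate is a made-up figure for illustration. Plug in your provider's real numbers.

```python
FLAT_MONTHLY_USD = 20.00   # flat VPS price quoted in the text
HOURLY_RATE_USD = 0.04     # hypothetical pay-per-use rate, not a real quote


def pay_per_use_cost(hours_running: float, hourly_rate: float = HOURLY_RATE_USD) -> float:
    """Monthly bill when you only pay for the hours the server is on."""
    return hours_running * hourly_rate


def break_even_hours(flat_monthly: float = FLAT_MONTHLY_USD,
                     hourly_rate: float = HOURLY_RATE_USD) -> float:
    """Hours per month at which both models cost the same."""
    return flat_monthly / hourly_rate


def cheaper_option(hours_running: float) -> str:
    """Name the cheaper billing model for a given number of running hours."""
    if pay_per_use_cost(hours_running) < FLAT_MONTHLY_USD:
        return "pay-per-use"
    return "flat-rate"


print(f"break-even at about {break_even_hours():.0f} hours per month")
for hours in (100, 720):  # a part-time test box vs. an always-on server
    print(hours, "hours ->", cheaper_option(hours))
```

With these assumed rates, a server that runs a few hours a day is cheaper on pay-per-use, and one that never shuts off is cheaper on the flat plan.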

Network performance differs too. Cloud environments typically bundle higher bandwidth limits, better DDoS protection, and sophisticated traffic management. VPS hosting might give you 1Gbps shared among all instances on the physical server. Cloud servers often guarantee dedicated network throughput—AWS's larger instances promise 10 Gbps or more.

For a small business running a WordPress site with steady traffic, VPS hosting makes sense. $15/month flat, predictable costs, simpler architecture. For a SaaS platform that sees 100 users overnight and 10,000 during business hours, cloud servers let you scale up during peaks and down during valleys. You get both better performance and lower costs.

How to Choose a Cloud Server Company

Uptime SLAs require reading the fine print. A 99.9% guarantee allows roughly 43 minutes of downtime monthly. Sounds good until you realize some providers exclude scheduled maintenance from that calculation. Others only guarantee the underlying infrastructure—if your specific instance crashes, tough luck. 99.99% permits just 4.3 minutes monthly, but typically costs 30-50% more.
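The downtime figures above are easy to check yourself. This sketch assumes a 30-day month and counts every minute of the month against the SLA, which is the most generous reading. Providers that exclude maintenance windows measure it differently.

```python
MINUTES_PER_30_DAY_MONTH = 30 * 24 * 60  # 43,200 minutes


def allowed_downtime_minutes(sla_percent: float,
                             minutes_in_period: int = MINUTES_PER_30_DAY_MONTH) -> float:
    """Minutes of downtime a given uptime percentage still permits."""
    if not 0 <= sla_percent <= 100:
        raise ValueError("sla_percent must be between 0 and 100")
    return (100 - sla_percent) / 100 * minutes_in_period


for sla in (99.9, 99.99):
    minutes = allowed_downtime_minutes(sla)
    print(f"{sla}% uptime allows about {minutes:.1f} minutes of downtime per month")
```

The results land at roughly 43 minutes and a little over 4 minutes, in line with the figures quoted above.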

Pricing transparency separates the professionals from the amateurs. Some providers publish clear per-hour rates for compute, storage, and bandwidth. Others hide costs in complex tiers with surprise charges for API requests, cross-region data transfer, or support incidents. I once worked with a company that got hit with $800 in egress fees they didn't know existed—turns out serving video files to users cost extra. Always request a detailed quote matching your actual usage pattern, and explicitly ask about charges missing from the pricing calculator.

Support quality matters most at 2 AM when your database is melting down. Entry-level plans might give you email-only support with 24-hour response targets—useless during an outage. Premium tiers include phone support and 15-minute response guarantees, but cost $200+ monthly just for the privilege. Check whether support staff actually troubleshoot application issues or just handle infrastructure. Some providers employ engineers who'll debug your slow queries. Others staff tier-one agents who can restart instances and escalate tickets.

Compliance certifications become non-negotiable for regulated industries. Healthcare apps need HIPAA compliance. Financial services require PCI DSS. European customers demand GDPR adherence. Verify the provider maintains current certifications for your industry and offers encrypted storage, audit logging, and data residency guarantees. Their certification doesn't automatically make your application compliant—you still need proper controls—but it ensures the infrastructure meets baseline requirements.

Geographic regions affect both performance and legal obligations. Hosting in California might deliver pages to Los Angeles users in 10 milliseconds but take 150 milliseconds to reach New York. Some jurisdictions legally require that citizen data stay within national borders. Germany's particularly strict about this. A provider with data centers in your target markets simplifies compliance and improves user experience.

Integration capabilities determine whether the cloud server fits your workflow or fights it. Can you use Terraform for infrastructure-as-code deployments? Does monitoring data export to Datadog or New Relic? Are pre-built images available for Ubuntu 24.04 LTS with your preferred software stack, or are you building everything from scratch? These details don't seem important until you're manually configuring your fifteenth server.

Consider the provider's financial stability and roadmap. Attractive pricing from a startup cloud provider might precede an acquisition or shutdown, forcing a painful migration with two weeks' notice. Established providers invest in new features, expand to additional regions, and maintain infrastructure. Check recent customer reviews on Hacker News or Reddit for recurring complaints about service degradation, unresponsive support, or surprise price increases.

Cloud Server Setup: Step-by-Step Process

Selecting Your Configuration

Start by estimating what you actually need. A basic web server handling moderate traffic might run fine on 2 CPU cores, 4GB RAM, and 50GB storage. Database servers typically need more RAM relative to CPU—MySQL loves memory for caching. Compute-intensive applications like video encoding or data processing want powerful processors. Most providers offer pre-configured instance types: general purpose, CPU-optimized, memory-optimized, or storage-optimized.

Choose your operating system carefully. Linux distributions like Ubuntu, Debian, or Rocky Linux dominate cloud environments. Lower licensing costs, smaller resource footprints. Ubuntu 22.04 LTS uses maybe 200MB of RAM idle. Windows Server makes sense for applications requiring .NET Framework or specific Microsoft technologies, but expect to pay $12-50 monthly just for licensing. Some providers offer pre-configured application images with WordPress, Docker, or development stacks already installed, saving an hour of setup.

Select your region based on user location and compliance requirements. US East typically costs the same as US West, but some emerging markets run 10-20% higher due to infrastructure expenses. Enable backup options during provisioning. Automated snapshots cost $3-5 monthly but can save your business when someone accidentally drops the production database at 4 PM on Friday.

Deploying and Configuring Your Instance

After clicking "create," the orchestration system assigns your instance to available hardware, allocates resources, and boots your OS. Usually completes in 30-60 seconds. You'll get an IP address and initial credentials—SSH key pair for Linux or administrator password for Windows.

Connect using SSH (Linux) or Remote Desktop (Windows). First order of business: update system packages. On Ubuntu, run sudo apt update && sudo apt upgrade (drop the sudo if you're already logged in as root). You're patching security vulnerabilities that exist in the base image. Change default passwords immediately. Disable root login over SSH and require key-based authentication instead of passwords. Script kiddies constantly scan new IP addresses for default credentials.
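One way to confirm the SSH hardening actually took effect is to audit the server's sshd_config. The sketch below checks two real OpenSSH directives, PermitRootLogin and PasswordAuthentication. The parser is simplified on purpose: it ignores Match blocks and Include files, and the sample text is a hypothetical excerpt, so treat it as a starting point rather than a complete auditor.

```python
REQUIRED = {
    "permitrootlogin": "no",          # no direct root logins
    "passwordauthentication": "no",   # keys only, no passwords
}


def parse_sshd_config(text: str) -> dict:
    """Map each lowercase keyword to the first value seen, skipping comments."""
    settings = {}
    for raw_line in text.splitlines():
        line = raw_line.split("#", 1)[0].strip()
        if not line:
            continue
        parts = line.split(None, 1)
        if len(parts) == 2:
            # sshd keeps the first value it reads for a keyword.
            settings.setdefault(parts[0].lower(), parts[1].strip().lower())
    return settings


def hardening_findings(text: str) -> list:
    """List every required setting that is missing or has the wrong value."""
    settings = parse_sshd_config(text)
    findings = []
    for keyword, wanted in REQUIRED.items():
        actual = settings.get(keyword)
        if actual != wanted:
            findings.append(f"{keyword}: expected {wanted!r}, found {actual!r}")
    return findings


SAMPLE = """
# Hypothetical excerpt, not a complete sshd_config
PermitRootLogin no
PasswordAuthentication yes
"""

for finding in hardening_findings(SAMPLE):
    print(finding)
```

On a real server you would read the file contents from /etc/ssh/sshd_config and pass them to hardening_findings. An empty list means both settings are explicitly locked down.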

Configure your firewall with a deny-all policy, then explicitly permit required services. Web servers typically need SSH (port 22), HTTP (port 80), and HTTPS (port 443). Cloud providers offer network-level firewalls (security groups) that filter traffic before it reaches your instance. Configure both the cloud firewall and the local iptables or firewalld for defense in depth.
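The deny-all idea is simple enough to model in a few lines: nothing passes unless a rule says so. This is a conceptual sketch of that logic, not the syntax of any real firewall. The Rule class and function name are invented for the example, and the three allowed ports are the ones listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """One explicit allow rule: a protocol and a destination port."""
    protocol: str
    port: int


# SSH, HTTP, and HTTPS, as in the paragraph above.
ALLOW_RULES = frozenset({Rule("tcp", 22), Rule("tcp", 80), Rule("tcp", 443)})


def is_permitted(protocol: str, port: int, allow_rules=ALLOW_RULES) -> bool:
    """Default deny: traffic passes only if an explicit allow rule matches."""
    return Rule(protocol.lower(), port) in allow_rules


for protocol, port in [("tcp", 443), ("tcp", 3306), ("udp", 53)]:
    verdict = "allow" if is_permitted(protocol, port) else "deny"
    print(f"{protocol}/{port}: {verdict}")
```

Notice that MySQL's port 3306 is denied without anyone writing a rule for it. That's the point of starting from deny-all: forgetting a rule fails closed instead of open.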

Install your application stack. For a web server, maybe Nginx, PHP 8.2, and MySQL 8.0. Use package managers—apt, yum, or dnf—they simplify updates and handle dependencies. Configure services to start automatically on boot using systemctl enable nginx mysql. Your application should survive unexpected reboots.

Security and Access Management

Create separate user accounts for each team member. Never share credentials. Use SSH keys instead of passwords for authentication. Consider implementing a bastion host (jump box) for production environments—team members connect to the bastion first, then SSH from there to production servers. This architecture simplifies audit logging and lets you revoke access by removing keys from one location.

Enable automated security updates for critical patches. Ubuntu's unattended-upgrades package installs security fixes automatically. I've seen this prevent exploitation of vulnerabilities that appeared Friday evening. Sure, automatically updating everything can break applications, but kernel and core library security patches should install promptly.

Set up monitoring before problems occur. Install agents that track CPU usage, memory consumption, disk space, and network traffic. Configure alerts for threshold violations. If disk usage exceeds 85%, you want notification before it hits 100% and crashes your application. Many providers include basic monitoring in their control panels, but Prometheus, Datadog, or New Relic offer more sophisticated capabilities.
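If you want a feel for what a monitoring agent does under the hood, here's a minimal disk check using Python's standard library. The 85% threshold comes from the paragraph above. What happens after the check fires (email, pager, chat message) is left out, since that depends entirely on your tooling.

```python
import shutil

ALERT_THRESHOLD_PERCENT = 85.0  # threshold suggested in the text


def percent_used(used_bytes: int, total_bytes: int) -> float:
    """Disk usage as a percentage of total capacity."""
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    return 100.0 * used_bytes / total_bytes


def should_alert(used_bytes: int, total_bytes: int,
                 threshold: float = ALERT_THRESHOLD_PERCENT) -> bool:
    """True once usage exceeds the threshold."""
    return percent_used(used_bytes, total_bytes) > threshold


def check_path(path: str = ".") -> bool:
    """Check the filesystem that holds `path` against the threshold."""
    usage = shutil.disk_usage(path)
    return should_alert(usage.used, usage.total)


usage = shutil.disk_usage(".")
print(f"{percent_used(usage.used, usage.total):.1f}% used, alert: {check_path('.')}")
```

Run something like this from cron every few minutes and you have the crudest possible monitor. The dedicated tools mentioned above add history, dashboards, and alert routing on top of the same basic comparison.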

Establish a backup strategy today. Automated snapshots capture entire instance state but take 10-30 minutes to restore. Application-level backups of databases and critical files enable faster recovery of specific data—maybe five minutes to restore just the database. Test your backup restoration process quarterly. Backups you've never tested are theoretical protection.
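Part of a restore test can be automated: after restoring a file, confirm it is byte-for-byte identical to what you backed up. The sketch below compares SHA-256 checksums using temporary files with made-up names. It only proves the file survived the round trip. A full restore test should also load the data into a real database and query it.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Checksum a file in chunks so large backups don't need to fit in RAM."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def restore_matches(original: Path, restored: Path) -> bool:
    """True when the restored file is identical to the original."""
    return sha256_of(original) == sha256_of(restored)


def demo() -> tuple:
    """Compare one intact and one truncated 'restore' of a fake dump file."""
    with tempfile.TemporaryDirectory() as workdir:
        original = Path(workdir) / "orders.sql"
        good_restore = Path(workdir) / "orders.restored.sql"
        bad_restore = Path(workdir) / "orders.truncated.sql"
        original.write_bytes(b"INSERT INTO orders VALUES (1);\n" * 100)
        good_restore.write_bytes(original.read_bytes())
        bad_restore.write_bytes(original.read_bytes()[:-10])
        return (restore_matches(original, good_restore),
                restore_matches(original, bad_restore))


print(demo())
```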

Common Use Cases for Cloud Servers

Web hosting remains the bread-and-butter use case. E-commerce sites add resources during Black Friday and Cyber Monday, then scale down in January. I worked with an online retailer that ran 12 servers in December and 3 in February, and cloud infrastructure made that economically viable. Content-heavy sites deploy servers in multiple geographic regions, serving users from the nearest location to cut latency by 100-200 milliseconds.

Development teams use cloud servers as staging and testing environments. Developers spin up exact replicas of production to test changes, then destroy those instances when testing finishes. This costs a fraction of maintaining dedicated test hardware that sits idle nights and weekends. CI/CD pipelines provision fresh servers for each build, run automated tests, then terminate the instances. The entire process might consume fifteen minutes of server time, costing $0.03.

Data storage and processing workloads leverage cloud scalability beautifully. Companies running nightly ETL jobs provision powerful instances with 64 cores for two or three hours, process terabytes of data, then terminate the instances until tomorrow. This pattern costs maybe $15 per night versus maintaining $40,000 worth of hardware that sits idle twenty hours daily. Object storage handles archival data, while high-IOPS SSD volumes attached to instances serve active databases.
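The rent-versus-buy comparison above is worth running with your own numbers. This sketch uses the two figures from the paragraph ($15 per night, $40,000 of hardware) and assumes the job runs every night of the year. It deliberately ignores power, cooling, staff time, and hardware resale value, all of which shift the answer.

```python
import math

NIGHTLY_CLOUD_COST_USD = 15     # from the text
HARDWARE_COST_USD = 40_000      # from the text


def annual_cloud_cost(nightly_cost: float, nights_per_year: int = 365) -> float:
    """Yearly spend if the job runs every night."""
    return nightly_cost * nights_per_year


def nights_to_match_hardware(hardware_cost: float, nightly_cost: float) -> int:
    """Number of nightly runs before cloud spend reaches the hardware price."""
    return math.ceil(hardware_cost / nightly_cost)


yearly = annual_cloud_cost(NIGHTLY_CLOUD_COST_USD)
nights = nights_to_match_hardware(HARDWARE_COST_USD, NIGHTLY_CLOUD_COST_USD)
print(f"cloud cost per year: ${yearly:,.0f}")
print(f"nights until cloud spend matches hardware: {nights} (about {nights / 365:.1f} years)")
```

Under these assumptions the cloud bill takes several years to catch up with the purchase price, by which point the purchased hardware would likely be due for replacement anyway.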

Disaster recovery strategies depend on cloud servers' geographic distribution. Organizations replicate critical data to cloud servers in different regions. Primary data center catches fire or floods? Failover to cloud instances within ten minutes. Some companies run production workloads on-premises but maintain "warm standby" cloud servers receiving continuous data replication, ready to take over during outages.

AI and machine learning workloads increasingly run on cloud servers with GPUs. Training deep learning models requires massive computational power for days or weeks. Renting GPU-equipped instances for specific projects costs less than purchasing hardware that depreciates 40% annually as newer models emerge. Researchers access cutting-edge A100 or H100 GPUs for training runs, then switch to cheaper CPU-only instances for inference.

Game server hosting benefits from instant provisioning. Gaming companies launch servers in new regions within hours to support player demand, run limited-time events on temporary infrastructure, and scale capacity matching concurrent player counts. Indie developers test multiplayer features without investing in dedicated server hardware.

Frequently Asked Questions About Cloud Servers

Cloud servers have fundamentally changed how businesses approach infrastructure. Instead of sinking $20,000 into physical servers that depreciate 40% annually, companies now rent flexible computing resources that scale with actual demand. Understanding the architecture, virtualization technology, and operational characteristics helps you decide whether cloud servers fit your requirements and which provider offers the right combination of features, performance, and cost.

The distinction between cloud servers and VPS hosting matters for applications requiring high availability or handling variable workloads. Cloud servers' distributed architecture and instant scalability justify their added complexity and sometimes higher costs for production environments. VPS hosting still makes sense for simpler use cases with predictable resource needs and tighter budgets.

Successful deployment requires careful planning upfront. Select appropriate configurations, implement security practices from day one, and establish monitoring and backup procedures before you need them. This initial investment prevents security incidents and simplifies troubleshooting when things inevitably break at inconvenient times.

Whether you're hosting a business website, running development environments, processing data workloads, or building the next innovative application, cloud servers provide infrastructure that starts small and scales as your needs grow. The key lies in understanding your actual requirements, choosing a provider aligned with your priorities, and implementing operational practices that maximize reliability and security without overcomplicating things.
