Modern data center corridor with glowing server racks and abstract floating digital spheres representing data objects

Object Storage Guide for Cloud Infrastructure

Content

Content

Think about Netflix streaming movies to millions of viewers simultaneously, or Instagram storing billions of photos. Behind these services? Object storage doing the heavy lifting. It's not your grandfather's file cabinet—there's no folder structure, no C:\ drives, nothing hierarchical at all.

Every piece of data becomes a standalone unit. That vacation photo you uploaded? It gets its own unique ID (something like "x7k9m2p4q8"), rich metadata tags, and lives in a flat pool with billions of other objects. Contrast this with the nested folders on your laptop where files sit inside directories, inside other directories, inside drives.

The business reality? Unstructured data is exploding. Surveillance cameras record 24/7. Medical scanners produce terabyte-sized imaging studies. IoT sensors never sleep. Traditional databases and file servers buckle under this weight. Object storage was built specifically for this problem—absorbing petabytes while costs stay manageable.

What Is Object Storage and How Does It Work

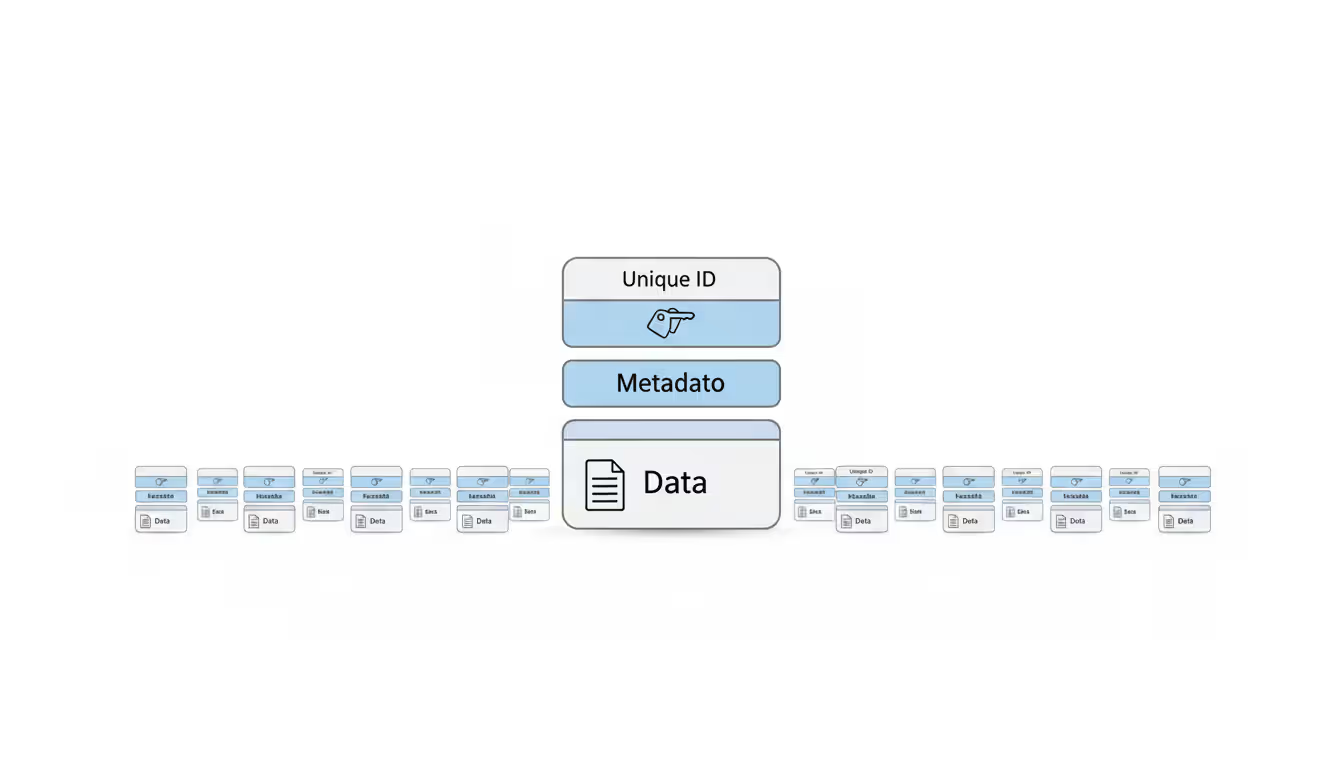

Break free from folders and file trees. Object storage doesn't organize anything hierarchally. Instead, data splits into self-contained units called objects.

Three pieces make up each object. The actual data comes first—could be a PDF, a video file, a database backup, anything. Next comes metadata, which goes way beyond simple timestamps. You can attach dozens of custom tags: department names, project codes, processing status, content classifications, really anything relevant to your operation. Finally, there's the unique identifier—a long string that permanently points to this specific object.

Consider a hospital's radiology department. They store each MRI scan as an object. The metadata might include patient identifier, scan date, body region, technician name, equipment model, referring physician, and diagnostic flags. When a doctor searches for "brain scans from March showing possible tumors," the system queries metadata instantly across millions of stored images.

Or take an online retailer with product photography. Each image becomes an object tagged with SKU, color variant, seasonal collection tags, photographer credit, usage rights, and approved channels. Marketing teams find exactly what they need without digging through folder hierarchies.

Companies slashing storage bills by 60-70%—that's what we're seeing constantly. But they're also accessing data faster than before. The catch? You've got to know when object storage fits and when it absolutely doesn't

— Sarah Chen

When you store something, the system returns that unique identifier. Let's say you upload a training video and get back "b8g4d1f3-5c9e-22fd-92e4-1353bd241114"—that's your permanent address. Reorganize your infrastructure, migrate providers, doesn't matter. That ID always retrieves your video.

Behind the scenes, the platform scatters your data across many physical drives, often in different buildings or cities. You don't choose this distribution pattern or manage it. The system replicates automatically based on durability settings you select. Request your object? Whichever storage node responds fastest sends it back.

The flat namespace changes everything for performance. Traditional file systems choke when a single folder holds 50,000 files—the system crawls through directory listings like reading a phone book. Object storage? Store 100 files or 100 billion, retrieval speed stays consistent. Everything exists at the same logical depth.

Author: Adrian Keller;

Source: clatsopcountygensoc.com

Object Storage vs Block Storage vs File Storage

Each storage type solves different problems. Pick the wrong one and you'll pay in performance or dollars.

| Storage Type | Object Storage | Block Storage | File Storage |

| Ideal For | Backup archives, video libraries, static web content, analytics data lakes | Database storage, VM boot disks, high-transaction apps | Team file shares, home directories, collaborative editing |

| Speed | Excellent throughput with large files; 50-100ms latency per operation | Sub-millisecond latency; handles random I/O beautifully | Speed varies by network; decent for sequential access |

| Growth | Scales horizontally without limits | Grows vertically; caps at volume limits | Constrained by file server hardware |

| Pricing | Cheapest per GB; charges for storage + API calls | Most expensive; you pay for allocated space whether used or not | Middle ground; capacity + performance tier costs |

| How You Access It | REST APIs over standard HTTP/HTTPS | Block protocols like iSCSI or Fibre Channel | Network file protocols—NFS on Linux, SMB on Windows |

| Changing Data | Must upload a new version; original stays immutable | Change individual blocks without touching others | Edit files directly; file locking prevents conflicts |

Block storage works at the infrastructure layer. Picture a hard drive carved into uniform chunks—maybe 4KB each, maybe 16KB. Your database runs on block storage because it's constantly tweaking small bits: updating an account balance here, marking an order shipped there. Block storage modifies those tiny pieces independently without rewriting surrounding data.

File storage adds the familiar folder structure we all know. Network drives in offices run on file storage. Teams access shared folders, edit spreadsheets together, organize project files in nested directories. It works great when collaboration matters and users expect traditional filesystem behavior.

Object storage trades edit-in-place capability for massive scale and lower costs. Need to change an object? Upload a replacement version. The original stays put. This immutability simplifies distributed systems tremendously—no coordination headaches because simultaneous edits don't exist.

Real-world example clarifies when to use what. A stock trading platform needs block storage for its transaction engine. Milliseconds count, and the system executes millions of microscopic updates daily. That same trading firm stores seven years of trade history (required by regulators) in object storage—data written once, accessed maybe quarterly, but absolutely must be preserved reliably and cheaply.

When to Use Object Storage in Your Cloud Environment

Object storage shines in specific scenarios. Look for these patterns: massive unstructured data, rare updates after initial write, long retention periods.

Backup and archive represents the slam-dunk use case. Your company generates daily backups—databases, application servers, user files. A medium-sized business might produce 50TB monthly. Those backups pile up fast. Compliance rules often mandate seven to ten years of retention for financial records, healthcare data, or legal documents. Object storage costs 80% less than traditional storage arrays for this kind of capacity.

Media libraries and streaming exploit object storage's ability to serve unlimited concurrent users. Think about a news organization with 40 years of video footage, millions of photographs, audio interviews. Journalists search metadata—"protests," "hurricane damage," "election night"—and pull relevant clips into editing workstations. Streaming platforms store each movie file, every subtitle track in different languages, thumbnail images, preview trailers as separate objects. Content delivery networks grab these assets and distribute them globally.

Big data and analytics warehouses couldn't exist without object storage economics. Data scientists dump everything into storage lakes: application logs, sensor readings, customer interactions, social media feeds. Nothing's cleaned up initially—just preserve the raw data. A retail chain accumulates ten years of point-of-sale data, website clickstreams, inventory movements. Later, analytics tools crunch through this lake identifying purchase patterns. You don't worry about capacity—just keep adding data.

Static website hosting leverages object storage's HTTP-native design. Upload HTML pages, images, CSS, JavaScript as objects. Configure the bucket to serve files directly to browsers. You've eliminated web servers for static content entirely. A product documentation site or marketing campaign landing page serves millions of visitors straight from object storage. One client runs their entire documentation portal this way—500GB of content, 2 million monthly visitors, $50/month storage bill.

IoT data floods meet their match with object storage. A logistics company tracks 50,000 shipping containers worldwide. GPS coordinates every 5 minutes, temperature readings, door sensors reporting open/closed status. That's 14 million data points daily. Object storage absorbs this continuous stream, organizing everything by container ID and timestamp in metadata for later pattern analysis.

Author: Adrian Keller;

Source: clatsopcountygensoc.com

Disaster recovery exploits geographic distribution. Replicate critical data to object storage in multiple continents. Primary datacenter catches fire? Applications retrieve data from storage buckets in another region. The system handles replication automatically—no cron jobs, no rsync scripts to maintain.

But know when to walk away. Don't use object storage for active databases where apps update individual records constantly. Skip it for applications expecting POSIX filesystem semantics or file locking. Avoid it when you need single-digit millisecond response times for tiny files.

How Object Storage Services Work in the Cloud

Cloud providers operate object storage as a fully managed service. They own the drives, the buildings, the replication logic, the scaling mechanisms. You interact purely through APIs—no mounting drives, no server connections.

Amazon's S3 API became the industry standard after launching in 2006. Now practically every provider offers S3-compatible endpoints. This compatibility matters because code written for one provider often runs on another with minimal tweaks. Applications make standard HTTP requests to store, fetch, list, or remove objects.

Here's the typical flow. First, create a bucket—essentially a container for objects. Bucket names must be globally unique across the provider's entire platform, like domain names. Second, upload objects into your bucket via PUT requests carrying the data plus any custom metadata tags. Third, retrieve objects with GET requests specifying bucket name and object key.

Scaling happens invisibly. Upload an object and the service automatically spreads it across multiple physical drives and storage nodes. Your needs grow from gigabytes to petabytes? The provider adds capacity behind the scenes. You never provision volumes, configure RAID, or plan hardware refreshes.

Durability comes through automatic replication. Most providers guarantee 99.999999999% durability (eleven nines)—expect to lose one object out of every 100 billion stored annually. The system achieves this by maintaining multiple copies on different physical devices, usually in separate datacenters within a region. Drive fails? Data center loses power? Your objects stay accessible from other locations.

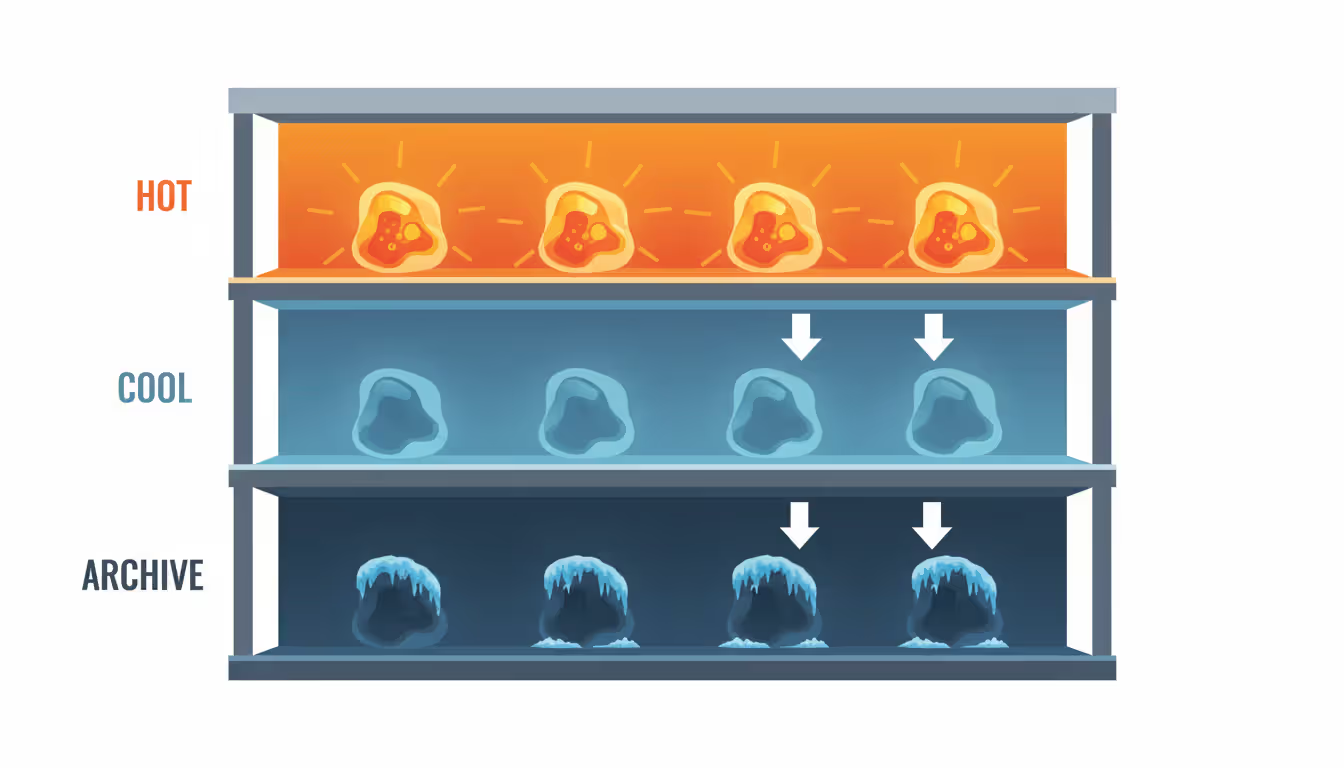

Pricing involves three components usually. Storage capacity charged per GB monthly. Data transfer charged per GB leaving the provider's network (usually downloads to internet; internal transfers might be free). API requests charged per thousand operations. Costs vary dramatically by access pattern—"hot" storage for frequent access costs more per GB than "cold" storage for rarely-touched data.

Different storage tiers optimize for different access patterns. Hot tier: immediate access, no retrieval fees, highest per-GB cost. Cold tier: lower per-GB storage cost but adds retrieval fees, maybe slight delays. Archive tier: rock-bottom storage rates but requires 3-12 hours to access your data and charges higher retrieval fees.

Lifecycle automation moves objects between tiers based on age. Define a rule: keep backups in hot storage for 30 days, transition to cold for 11 months, move to archive for six more years, then delete. You set it once; the system handles transitions automatically forever.

Author: Adrian Keller;

Source: clatsopcountygensoc.com

Choosing an Object Storage Service for Your Business

Selecting a provider means evaluating technical specs, operational needs, and total costs against your specific situation.

Durability guarantees deserve scrutiny first. Everyone advertises eleven nines, but implementation varies. How many replicas does the provider maintain? Spread across multiple datacenters or just within one facility? What happens during regional disasters? Some providers offer cross-region replication, maintaining copies thousands of miles apart.

Retrieval speeds differ wildly between storage classes. Hot storage typically delivers first byte in under 100 milliseconds—fine for serving website images or feeding analytics pipelines. Cold storage might add several seconds—acceptable for monthly reports, frustrating for interactive dashboards. Archive storage can take half a day—only suitable for compliance archives you almost never touch.

Geographic footprint affects both speed and compliance. Users concentrated in Asia? Pick a provider with multiple Asian regions to minimize latency. Regulations in certain jurisdictions forbid storing specific data types outside national borders. Healthcare companies in the US face HIPAA constraints. European firms deal with GDPR data sovereignty requirements.

Compliance certifications provide assurance around security practices. Look for SOC 2 Type II audits, ISO 27001 certification, industry-specific attestations like HIPAA for healthcare or PCI DSS for payment data. Financial institutions often require providers to complete lengthy security questionnaires and submit to third-party penetration testing.

True costs extend well beyond advertised per-GB rates. Calculate total ownership by factoring in data transfer fees, API request charges, retrieval costs for cold/archive tiers. One provider might advertise slightly higher storage rates but charge nothing for transfers between their storage and compute services—could prove cheaper overall. API request pricing catches people off-guard. Applications that list bucket contents frequently or perform thousands of small uploads can rack up surprising charges.

Integration capabilities determine how smoothly object storage fits your existing stack. Native SDKs available for your programming languages? Can you mount storage as a filesystem for legacy apps that expect traditional file access? Does the service integrate cleanly with your backup tools, analytics platforms, content management systems?

Security features require careful review. Encryption at rest should be standard—verify whether the provider manages keys or you control them yourself. Encryption in transit via TLS protects uploads and downloads. Access controls should support granular permissions down to individual objects, not just bucket-level all-or-nothing access.

Common Mistakes When Implementing Object Storage

Companies repeatedly misapply object storage by forcing it into inappropriate situations. A software startup migrated their PostgreSQL database to object storage chasing cost savings. Application performance immediately cratered. Database engines expect block storage that supports random reads/writes with minimal latency. Object storage's immutability and 50-100ms latency killed every database operation. They migrated back to block storage after wasting three weeks of engineering time.

Wrong access patterns create performance nightmares and cost overruns. An application downloads entire 5GB video files when it only needs the first 30 seconds for preview generation. Range requests could fetch just the required portion, but developers overlooked this. Wasted bandwidth, unnecessary transfer charges. The flip side: storing thousands of tiny files under 1KB each in object storage proves inefficient. You're paying per-request charges and minimum billable sizes. Aggregate those small files into larger objects instead.

Neglecting lifecycle policies lets storage costs balloon. A marketing team uploads 2TB of campaign assets monthly but never deletes anything. Three years pass—they're paying for 72TB of storage, mostly supporting campaigns that ended years ago. Lifecycle rules could automatically archive assets older than six months and delete anything past two years. Potential savings? 80% without any manual work.

Security misconfigurations expose sensitive data to the internet. Default bucket permissions sometimes allow public read access. Developers spin up test buckets and overlook this. A healthcare startup accidentally made a bucket containing patient records publicly readable. HIPAA violation, regulatory fines, news coverage. Always verify bucket permissions, enable access logging, use automated tools that scan for publicly exposed buckets.

Skipping versioning creates recovery nightmares after accidental deletions or overwrites. A financial services firm stored regulatory reports without versioning enabled. An automated script contained a bug that deleted hundreds of critical objects. Without versioning, those objects vanished permanently. They spent weeks regenerating reports from source data—an entirely avoidable mess. Versioning preserves previous object versions, enabling quick recovery.

Ignoring monitoring and alerting prevents early detection of issues. Storage costs can spike unexpectedly when applications misbehave or unauthorized uploads occur. Set alerts for unusual storage growth rates, abnormally high API request volumes, unexpected data transfer spikes. Monitor error rates for failed operations—might indicate application bugs or network problems before they impact users.

Author: Adrian Keller;

Source: clatsopcountygensoc.com

Frequently Asked Questions About Object Storage

Object storage evolved from niche technology into foundational cloud infrastructure. Its horizontal scaling combined with low costs makes it indispensable for modern data-heavy applications. Success comes from matching technology to appropriate scenarios—media libraries, backups, archives, analytics lakes—while avoiding situations requiring low-latency random access or frequent in-place updates.

The distinctions between object, block, and file storage represent fundamental design trade-offs. Object storage gives up modification flexibility and ultra-low latency to gain unlimited scalability and cost efficiency. Understanding these trade-offs helps you architect storage solutions balancing performance, cost, and operational complexity.

Data volumes keep growing exponentially. Object storage becomes more critical each year. Organizations mastering implementation—selecting appropriate storage tiers, implementing lifecycle automation, securing access properly, monitoring costs vigilantly—gain significant advantages managing their data infrastructure efficiently and economically.

Related Stories

Read more

Read more

The content on this website is provided for general informational and educational purposes related to cloud computing, network infrastructure, and IT solutions. It is not intended to constitute professional technical, engineering, or consulting advice.

All information, tools, and explanations presented on this website are for general reference only. Network environments, system configurations, and business requirements may vary, and results may differ depending on specific use cases and infrastructure.

This website is not responsible for any errors or omissions, or for actions taken based on the information, tools, or technical recommendations presented.