
Network Switch Monitoring Tools Guide

Here's something most IT teams discover the hard way: you don't think about your switches until Friday at 4:47 PM when everything stops working. One port misconfiguration or bandwidth spike can trigger a cascade that takes down half your infrastructure. Users can't work. Your phone won't stop ringing.

Monitoring tools specifically designed for network switches give you early warning systems. They track what's happening at the port level, watch for configuration changes someone made at 2 AM, and show you which links are approaching capacity. Instead of scrambling to fix things after they break, you spot problems while there's still time to act.

What Are Network Switch Monitoring Tools

These are software platforms built specifically to watch what Layer 2 and Layer 3 switches are doing right now. They pull data about individual port status, traffic moving through each interface, CPU load, memory usage, temperature sensors, and any configuration edits—whether authorized or not.

In a monitoring context, "network switch" means the devices that move traffic between network segments. Not your servers, not your applications, not endpoints: just the switches themselves.

Generic network monitoring casts a wide net across routers, firewalls, wireless gear, and everything else. Switch-focused tools dig deeper into the metrics that matter specifically for switching: VLAN assignments, spanning tree changes, link aggregation states, MAC address table size, and port error counters. This narrow focus means better troubleshooting for Layer 2 headaches.

Consider a typical enterprise running 200 switches across three buildings. Without purpose-built monitoring, finding which specific port is dropping packets requires manually checking devices one by one. That's hours wasted on detective work. Proper monitoring tools discover your switches automatically and track every port continuously.

Author: Derek Hollowell;

Source: clatsopcountygensoc.com

Key Features of Network Switch Monitoring Software

Good switch monitoring software does more than ping devices and call it monitoring. Here's what actually matters.

Port-level visibility treats each interface as its own entity. You'll see operational status (up, down, administratively disabled), negotiated speed and duplex mode, input/output error counters, discard rates, and current utilization as a percentage. When users complain about slowness, you can identify the exact port causing problems instead of guessing.

Bandwidth tracking creates graphs showing traffic volume over days, weeks, and months. Most tools sample every five minutes, building a picture of normal patterns versus unusual spikes. You'll spot the gradual climb from 40% to 65% average utilization that signals "upgrade this link in the next quarter" rather than waiting for it to hit 100% and choke.
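
A gradual climb like that can be turned into a rough time estimate with a straight-line fit. The sketch below assumes one average-utilization sample per week and an 80% planning ceiling; the function name and both assumptions are illustrative, and real traffic rarely grows in a perfectly straight line.

```python
def weeks_until_threshold(weekly_avg_util, threshold=80.0):
    """Estimate weeks until a least-squares trend line reaches `threshold`.

    weekly_avg_util: average utilization percentages, one per week, oldest first.
    Returns None when there are fewer than two samples or the trend is flat/falling.
    """
    n = len(weekly_avg_util)
    if n < 2:
        return None
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(weekly_avg_util) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, weekly_avg_util))
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    # Week index at which the trend line crosses the threshold,
    # measured from the most recent sample (index n - 1).
    return max(0.0, (threshold - intercept) / slope - (n - 1))


if __name__ == "__main__":
    print(weeks_until_threshold([40, 45, 50, 55, 60, 65]))
```

Treat the result as a prompt to start a conversation about an upgrade, not as a forecast.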

SNMP support powers most collection methods. Version 2c works fine for basic deployments: it's simple and widely supported, though it sends community strings in cleartext. Version 3 adds authentication and encryption when security requirements demand it. Tools query specific Management Information Base (MIB) objects to pull back counters and status flags. Standard MIBs cover common metrics across vendors, while vendor-specific MIBs unlock proprietary features.

Alerting mechanisms tell you when something needs attention. Simple threshold alerts ("port utilization exceeded 80%") generate too much noise. Better implementations use compound conditions: "alert me when this port stays above 80% for more than ten consecutive minutes AND it's during business hours." That specificity reduces false alarms dramatically.
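
As a rough illustration of such a compound condition, the sketch below fires only when every recent sample has stayed above the threshold for at least ten minutes and the latest sample falls on a weekday between 09:00 and 17:00. The function name, the business-hours window, and the sample format are assumptions made for the example, not any particular product's rule syntax.

```python
from datetime import datetime, timedelta


def should_alert(samples, threshold=80.0, sustain=timedelta(minutes=10),
                 business_hours=(9, 17)):
    """samples: list of (datetime, utilization_percent) tuples, oldest first."""
    if not samples:
        return False
    latest_time = samples[-1][0]
    start_hour, end_hour = business_hours
    # Ignore weekends (weekday() 5 and 6) and anything outside business hours.
    if latest_time.weekday() >= 5 or not (start_hour <= latest_time.hour < end_hour):
        return False
    # Walk backward until a sample at or below the threshold ends the streak.
    breach_start = None
    for timestamp, utilization in reversed(samples):
        if utilization <= threshold:
            break
        breach_start = timestamp
    return breach_start is not None and latest_time - breach_start >= sustain


if __name__ == "__main__":
    start = datetime(2024, 1, 3, 10, 0)  # a Wednesday
    readings = [(start + timedelta(minutes=i), 90.0) for i in range(11)]
    print(should_alert(readings))
```

A single dip below the threshold resets the streak, which is what keeps short bursts from paging anyone.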

Visualization dashboards turn thousands of data points into something humans can actually parse. Topology maps show your switch stack and how devices connect. Time-series graphs reveal trends. Heat maps highlight hotspots at a glance. Drill-down features let you go from "Building 3 has an issue" to "Port 24 on switch 3B-IDF-2 is the problem" in three clicks.

Historical data retention matters more than people realize. Keeping 12-18 months of metrics helps you identify seasonal patterns (retail networks spike during holidays), justify budget requests with actual usage data, and troubleshoot intermittent issues that happened last month.

Network Switch Configuration Management Capabilities

Configuration drift—where your switches gradually diverge from intended standards—creates both security holes and troubleshooting nightmares. Imagine discovering during an audit that 40% of your switches use different SNMP community strings, or someone enabled telnet on edge switches last year and nobody noticed.

Automated backup copies the running configuration on schedule (usually nightly) and after any detected changes. These backups live in version-controlled repositories that track every modification, creating an audit trail you can review.

Change detection sends alerts when configurations shift, whether from deliberate updates or unauthorized tinkering. Advanced platforms compare configs line-by-line and highlight exactly what changed: "VLAN 47 added to trunk port Gi0/12" instead of just "configuration changed."
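
The line-by-line comparison can be approximated with Python's standard `difflib` module. This is a minimal sketch that assumes both snapshots are available as plain text; the function name and the sample configuration lines are illustrative.

```python
import difflib


def config_changes(old_config, new_config):
    """Return only the removed ('-') and added ('+') lines between two snapshots."""
    diff = list(difflib.unified_diff(
        old_config.splitlines(),
        new_config.splitlines(),
        fromfile="previous",
        tofile="current",
        lineterm="",
        n=0,  # no surrounding context lines
    ))
    # The first two entries are the '---'/'+++' file headers; '@@' hunk markers
    # are dropped by the prefix filter.
    return [line for line in diff[2:] if line.startswith(("+", "-"))]


if __name__ == "__main__":
    before = "interface Gi0/12\n switchport trunk allowed vlan 10,20\n"
    after = "interface Gi0/12\n switchport trunk allowed vlan 10,20,47\n"
    for change in config_changes(before, after):
        print(change)
```

Turning a raw diff into a sentence such as "VLAN 47 added to trunk port Gi0/12" takes vendor-aware parsing on top of this, which is where commercial platforms tend to differ.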

Compliance checking compares actual switch configs against policy templates you define. Maybe your security standards require all access ports to disable Dynamic Trunking Protocol. The tool scans every switch and flags ports where DTP is still active. You get a report showing exactly which devices violate which policies.
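
A policy check like the DTP example might look like the sketch below. It assumes Cisco IOS-style text configs, where `switchport nonegotiate` is the command that turns DTP off on a port, and it treats any access port without that line as a violation. The function names are hypothetical, and other vendors will need different parsing rules.

```python
def interface_blocks(config_text):
    """Map each interface name to the list of its indented config lines."""
    blocks = {}
    current = None
    for raw in config_text.splitlines():
        if raw.startswith("interface "):
            current = raw.split(None, 1)[1].strip()
            blocks[current] = []
        elif current and raw[:1].isspace():
            blocks[current].append(raw.strip())
        else:
            # Any non-indented line (for example '!') closes the block.
            current = None
    return blocks


def access_ports_with_dtp(config_text):
    """Return access ports that do not explicitly disable DTP."""
    return [
        name
        for name, lines in interface_blocks(config_text).items()
        if "switchport mode access" in lines
        and "switchport nonegotiate" not in lines
    ]


if __name__ == "__main__":
    sample = (
        "interface GigabitEthernet0/1\n"
        " switchport mode access\n"
        " switchport nonegotiate\n"
        "!\n"
        "interface GigabitEthernet0/2\n"
        " switchport mode access\n"
        "!\n"
    )
    print(access_ports_with_dtp(sample))
```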

Rollback capabilities become lifesavers when changes go sideways. Rather than manually reconstructing what worked before, you restore a known-good configuration from six hours ago or last Tuesday. Three clicks and the switch is back to a stable state.


How Network Switch Monitoring Works

Most switch monitoring runs agentless—nothing needs installation on the switches themselves. The monitoring platform initiates all communication, reaching out to query devices remotely.

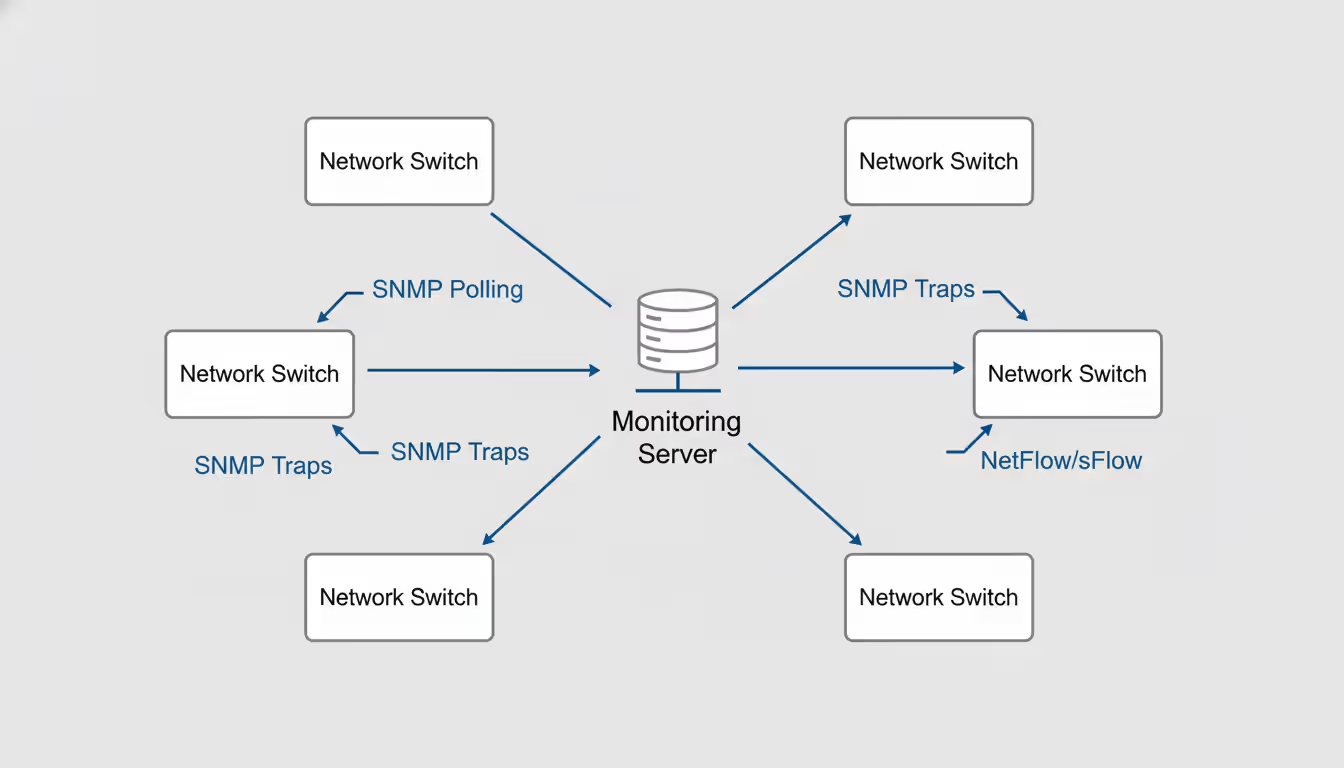

SNMP polling forms the foundation. Your monitoring server acts as the SNMP manager, sending GET requests to switches at regular intervals. Each switch responds with values from its MIB database: interface counters, CPU percentages, temperature readings, and operational status flags.
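
Interface counters come back as running totals, so utilization has to be derived from the difference between two polls. The sketch below shows that arithmetic on its own, assuming a 64-bit octet counter (such as IF-MIB's `ifHCInOctets`) and a link speed in bits per second. The function name is illustrative and the SNMP transport is left out.

```python
COUNTER64_MAX = 2 ** 64


def utilization_percent(prev_octets, curr_octets, interval_seconds,
                        link_speed_bps, counter_max=COUNTER64_MAX):
    """Percent utilization of one direction of a link between two polls."""
    if interval_seconds <= 0 or link_speed_bps <= 0:
        raise ValueError("interval and link speed must be positive")
    # Modulo arithmetic absorbs a single counter wrap between polls.
    delta_octets = (curr_octets - prev_octets) % counter_max
    return 100.0 * delta_octets * 8 / (interval_seconds * link_speed_bps)


if __name__ == "__main__":
    # 3.75 GB transferred in five minutes on a 1 Gbps link.
    print(utilization_percent(0, 3_750_000_000, 300, 1_000_000_000))
```

With 32-bit counters (`counter_max=2**32`) a busy gigabit link can wrap more than once inside a five-minute interval, which this calculation cannot detect. That is one reason 64-bit counters are preferred where the switch exposes them.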

Polling intervals present a trade-off. Check every minute and you catch brief spikes but create more network overhead and consume switch CPU cycles. Poll every five minutes and you reduce load but might miss transient issues. Most organizations land on 2-5 minute intervals as the sweet spot.
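
The overhead side of that trade-off is easy to estimate. The sketch below counts how many values per second the poller must collect; the switch count, port count, and six metrics per port are made-up inputs for illustration only.

```python
def polled_values_per_second(switches, ports_per_switch, metrics_per_port,
                             interval_seconds):
    """Average number of metric values collected per second across the fleet."""
    return switches * ports_per_switch * metrics_per_port / interval_seconds


if __name__ == "__main__":
    for interval in (60, 120, 300):
        rate = polled_values_per_second(200, 48, 6, interval)
        print(f"{interval:>3}s interval: {rate:.0f} values/second")
```

Going from a one-minute to a five-minute interval cuts the collection rate by a factor of five, which is the load you trade against the risk of missing short spikes.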

SNMP traps flip the model—switches push alerts to your monitoring server when specific events occur. A port goes down? Trap. Temperature exceeds safe limits? Trap. Spanning tree topology changes? Trap. This event-driven approach provides immediate notification for critical issues instead of waiting for the next poll cycle.

NetFlow, sFlow, and IPFIX export granular traffic flow records: source and destination addresses, port numbers, protocols, byte counts, timestamps. This gives you application-level visibility—you can see that 30% of your bandwidth goes to video streaming or identify security threats based on unusual traffic patterns. The downside? Generating flow records consumes switch CPU resources and creates substantial data volumes.
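
Once flow records are collected, a figure like "30% of bandwidth goes to one application" is a simple aggregation. The sketch below assumes records have already been decoded into dictionaries. The field names (`src`, `dst_port`, `bytes`) and the sample flows are invented for the example and do not reflect any exporter's schema.

```python
from collections import Counter


def bytes_share_by_key(flows, key):
    """Return (value, percent_of_total_bytes) pairs, largest share first."""
    totals = Counter()
    for flow in flows:
        totals[flow[key]] += flow["bytes"]
    grand_total = sum(totals.values())
    if grand_total == 0:
        return []
    return [(value, 100.0 * count / grand_total)
            for value, count in totals.most_common()]


if __name__ == "__main__":
    sample_flows = [
        {"src": "192.0.2.10", "dst": "198.51.100.5", "dst_port": 443, "bytes": 600},
        {"src": "192.0.2.11", "dst": "198.51.100.5", "dst_port": 443, "bytes": 100},
        {"src": "192.0.2.10", "dst": "198.51.100.9", "dst_port": 53, "bytes": 300},
    ]
    print(bytes_share_by_key(sample_flows, "dst_port"))  # traffic mix by port
    print(bytes_share_by_key(sample_flows, "src"))       # top talkers
```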

CLI scraping involves the monitoring tool SSHing into switches and running show commands, then parsing the text output. This approach accesses data not exposed through SNMP, like detailed routing tables or vendor-specific diagnostics. It's also slower, requires managing SSH credentials, and puts more load on switches. Typically reserved for configuration backups or extracting proprietary vendor information.
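
The parsing half of CLI scraping usually comes down to regular expressions, which also shows why it is fragile. The sketch below works on an abbreviated sample modeled on Cisco IOS-style `show interfaces` output. The sample text is illustrative, real output differs by platform and software version, and the SSH session that would fetch it is omitted.

```python
import re

# Abbreviated, illustrative sample; not captured from a real device.
SAMPLE_OUTPUT = """\
GigabitEthernet0/1 is up, line protocol is up (connected)
     0 input errors, 0 CRC, 0 frame, 0 overrun, 0 ignored
GigabitEthernet0/2 is administratively down, line protocol is down (disabled)
     12 input errors, 7 CRC, 0 frame, 0 overrun, 0 ignored
"""

HEADER = re.compile(r"^(\S+) is (.+?), line protocol is (\S+)")
ERRORS = re.compile(r"(\d+) input errors, (\d+) CRC")


def parse_show_interfaces(text):
    """Extract link state and input error counters per interface."""
    results = {}
    current = None
    for line in text.splitlines():
        header = HEADER.match(line)
        if header:
            current = header.group(1)
            results[current] = {
                "status": header.group(2),
                "protocol": header.group(3),
                "input_errors": None,
                "crc": None,
            }
            continue
        errors = ERRORS.search(line)
        if errors and current:
            results[current]["input_errors"] = int(errors.group(1))
            results[current]["crc"] = int(errors.group(2))
    return results


if __name__ == "__main__":
    for name, fields in parse_show_interfaces(SAMPLE_OUTPUT).items():
        print(name, fields)
```

A firmware update that rewords one of those lines silently breaks the pattern, so it is worth alerting when a parser returns no interfaces at all.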


The monitoring server processes everything it collects: storing metrics in time-series databases, evaluating alert conditions against your thresholds, and updating dashboards. Many modern platforms can handle 2,000 or more switches simultaneously, processing millions of metrics hourly while maintaining fast query performance when you need historical data.


How to Choose the Right Network Switch Monitoring Tool

Feature checklists look impressive in vendor presentations. Real-world selection requires asking harder questions.

Scalability requirements start with your current switch count but need to account for growth. A tool that handles 75 switches smoothly might struggle at 750. Ask vendors for customer references running environments similar to your target size. Database architecture tells you a lot—platforms built on time-series databases like InfluxDB or Prometheus typically scale better than those using traditional relational databases that weren't designed for high-frequency metric storage.

Vendor compatibility means more than "works with Cisco." Does it support your specific switch models, including that older distribution layer switch running firmware from 2018? What about the newest models you just purchased? Get trial access and test with your actual hardware before signing anything. Vendor labs don't always reflect real-world complexity.

Deployment model choices shape operational overhead. On-premises software gives you complete control and works in air-gapped environments, but you're maintaining servers, databases, and patching the monitoring platform itself. SaaS offerings reduce your operational burden but require internet connectivity and may raise compliance questions about where monitoring data lives. Hybrid approaches split the difference but add integration complexity.

Integration capabilities determine whether monitoring exists in isolation or connects to your actual workflows. REST APIs let you extract data programmatically. Webhooks push alerts to other systems. Pre-built integrations with ServiceNow, Jira, Slack, or Teams mean alerts create tickets automatically or notify on-call engineers where they're already working.

Total cost calculations go beyond sticker price. Implementation services, training, annual support contracts, and infrastructure costs (servers, storage, network connectivity) add up quickly. Some vendors charge per-switch, others per-port, some per-monitoring-server. Run a 3-5 year TCO analysis instead of comparing only first-year costs.
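
A multi-year comparison can be as simple as the sketch below. Every figure in it is hypothetical and chosen only to show how a tool that looks cheaper in year one can cost more over five years; substitute your own quotes.

```python
def total_cost_of_ownership(license_per_year, implementation, training,
                            support_per_year, infrastructure_per_year, years):
    """One-time costs plus recurring costs over the analysis period."""
    one_time = implementation + training
    recurring = (license_per_year + support_per_year
                 + infrastructure_per_year) * years
    return one_time + recurring


if __name__ == "__main__":
    # Hypothetical quotes for two tools.
    tool_a = dict(license_per_year=10_000, implementation=5_000, training=2_000,
                  support_per_year=2_000, infrastructure_per_year=0)
    tool_b = dict(license_per_year=4_000, implementation=15_000, training=8_000,
                  support_per_year=1_000, infrastructure_per_year=1_000)
    for years in (1, 5):
        a = total_cost_of_ownership(years=years, **tool_a)
        b = total_cost_of_ownership(years=years, **tool_b)
        print(f"{years} year(s): tool A = {a}, tool B = {b}")
```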

Team skill requirements vary wildly between platforms. Some tools assume deep networking expertise with SNMP MIB browsers and manual OID entry. Others provide simplified interfaces targeting generalist IT staff. Evaluate honestly: will your team actually use a complex platform effectively, or will a simpler tool see more consistent adoption?

We've seen organizations select feature-rich platforms that require 60 hours of training, then watch them gather dust while teams revert to manual troubleshooting because the tool was too cumbersome for daily use.


Common Network Switch Monitoring Mistakes to Avoid

Good tools still fail when implemented poorly. Here are traps that catch even experienced teams.

Skipping baseline establishment creates meaningless alerts from day one. Before setting any thresholds, collect data for 2-4 weeks to understand normal behavior. That distribution switch hitting 70% utilization every weekday from 9 AM to 5 PM? That's normal for your environment. Alerting at 60% just creates noise. Alert when sustained utilization exceeds established patterns instead.
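
One simple way to alert on deviation from established patterns is to keep a per-hour baseline and flag readings well above it. The sketch below uses a mean plus three standard deviations with a minimum margin. Both numbers are assumptions to tune, and a real deployment would likely separate weekdays from weekends as well.

```python
from collections import defaultdict
from statistics import mean, pstdev


def hourly_baseline(samples):
    """samples: iterable of (hour_of_day, utilization_percent) pairs.

    Returns {hour: (mean, population_stdev)} built from the baseline period.
    """
    by_hour = defaultdict(list)
    for hour, utilization in samples:
        by_hour[hour].append(utilization)
    return {hour: (mean(values), pstdev(values))
            for hour, values in by_hour.items()}


def is_anomalous(hour, utilization, baseline, k=3.0, min_margin=5.0):
    """True when a reading exceeds the hour's mean by max(k * stdev, min_margin)."""
    if hour not in baseline:
        return False  # no history for this hour yet, so stay quiet
    average, deviation = baseline[hour]
    return utilization > average + max(k * deviation, min_margin)


if __name__ == "__main__":
    history = [(10, 68.0), (10, 70.0), (10, 72.0), (3, 5.0), (3, 5.0), (3, 5.0)]
    baseline = hourly_baseline(history)
    print(is_anomalous(10, 74.0, baseline))  # busy hour, within its normal range
    print(is_anomalous(3, 70.0, baseline))   # same load at 3 AM is unusual
```

The same 70% reading is routine at 10 AM and suspicious at 3 AM, which a single static threshold cannot express.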

Alert fatigue develops when monitoring generates dozens or hundreds of notifications daily. One healthcare network we evaluated sent 340 switch alerts per day on average. Most were false positives or minor issues nobody acted on. After spending a week tuning—raising some thresholds, adding time-based conditions, suppressing duplicate alerts—they reduced volume to 18 meaningful alerts daily. Response times improved because staff stopped ignoring notifications.
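
Duplicate suppression is one of the cheaper tuning steps. The sketch below drops repeats of the same device, port, and alert type inside a 15-minute window. The window length and the tuple layout are assumptions for illustration.

```python
def suppress_duplicates(alerts, window_seconds=900):
    """alerts: list of (timestamp_seconds, device, port, kind), sorted by time.

    Keeps the first alert for each (device, port, kind) and drops repeats that
    arrive less than `window_seconds` after the last one that was kept.
    """
    last_kept = {}
    kept = []
    for timestamp, device, port, kind in alerts:
        key = (device, port, kind)
        if key in last_kept and timestamp - last_kept[key] < window_seconds:
            continue
        last_kept[key] = timestamp
        kept.append((timestamp, device, port, kind))
    return kept


if __name__ == "__main__":
    incoming = [
        (0, "sw1", "Gi0/1", "linkDown"),
        (60, "sw1", "Gi0/1", "linkDown"),    # repeat inside the window
        (120, "sw1", "Gi0/2", "linkDown"),   # different port
        (1000, "sw1", "Gi0/1", "linkDown"),  # window has expired
    ]
    for alert in suppress_duplicates(incoming):
        print(alert)
```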

Configuration drift neglect happens when teams enable configuration backups but never review them. What's the point of detecting 50 config changes weekly if nobody looks at the reports? Schedule 15-minute weekly reviews scanning change logs. You'll catch unauthorized VLAN additions, security policy violations, or well-intentioned changes that introduced problems.

Insufficient documentation bites you when the admin who configured everything accepts another job. Six months later, nobody remembers why specific thresholds were chosen, what that custom SNMP OID monitors, or how alert escalation paths work. Document decisions while fresh, include reasoning behind choices, and update docs when you tune settings.

Monitoring the monitor gets overlooked until the monitoring system silently fails and you don't notice for days. Implement external health checks verifying your monitoring server is collecting data, evaluating alerts, and sending notifications. Some operations teams run a lightweight secondary monitoring system specifically watching the primary monitoring infrastructure.
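
An external health check can be as small as a freshness test on the newest stored sample. The sketch below assumes you can obtain that sample's timestamp somehow, for instance from the platform's API or database. The ten-minute limit and the function name are illustrative.

```python
import time


def monitor_is_stale(last_sample_epoch, max_age_seconds=600, now=None):
    """True when the newest stored sample is older than `max_age_seconds`."""
    current_time = time.time() if now is None else now
    return current_time - last_sample_epoch > max_age_seconds


if __name__ == "__main__":
    # Pretend the newest sample arrived 20 minutes ago.
    print(monitor_is_stale(time.time() - 1200))
```

Run a check like this from a different host than the monitoring server, so one failure does not take out both.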

Capacity planning neglect wastes all that utilization data you're collecting. Teams diligently monitor bandwidth but never analyze trends or plan upgrades until ports saturate and users complain. Quarterly capacity reviews take 60-90 minutes and prevent emergency upgrade projects.

Setting Up Your First Network Switch Monitor

Visibility separates reactive troubleshooting from proactive management. I've watched teams burn entire afternoons tracking down application slowness, eventually discovering a switch port was flapping every few minutes, something that would've been obvious immediately with proper monitoring. Configuration tracking is just as crucial. We investigated a security breach that traced back to an unauthorized VLAN someone created on an edge switch nine months prior. Without automated configuration management, that modification stayed invisible until it became an incident.

— Daniel Brooks

Implementing monitoring follows a logical progression from discovery through operational use.

Step 1: Network discovery identifies what needs monitoring. Automatic discovery scans IP ranges using SNMP, queries CDP or LLDP neighbors, or imports from existing network management databases. Manual addition works fine for small deployments or when firewalls block discovery traffic. Budget 20-40 minutes for discovering 50 switches, longer for complex networks with multiple security zones.

Step 2: SNMP configuration enables data collection. On each switch, configure community strings (v2c) or user credentials (v3) with appropriate access controls limiting SNMP queries to your monitoring server IPs. Use read-only credentials—monitoring rarely needs write access, and read-write community strings create security risks.

Enable SNMP trap generation on switches, pointing to your monitoring server's IP. At minimum, enable linkUp/linkDown traps. Add vendor-specific critical traps for hardware failures, but be selective—enabling all possible traps generates overwhelming traffic.

Step 3: Threshold configuration defines what's normal versus problematic. Start conservative to avoid overwhelming your team. Sample starting points:

- Interface utilization: 80% sustained for 15 minutes

- Error rate: 0.1% of total traffic

- CPU utilization: 75% sustained for 10 minutes

- Memory utilization: 85% of available

- Temperature: 10°C below maximum rated operating temperature

Refine these based on your specific environment's baselines and operational experience over the first month.
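
Keeping starting points like these in one data structure makes later tuning easier to track. The sketch below encodes the sample values above. The dictionary keys and helper names are hypothetical, and the sustained-duration check is left to whatever evaluates the time series.

```python
# Sample starting thresholds; sustain_minutes of 0 means "alert on a single reading".
THRESHOLDS = {
    "interface_util_pct": {"limit": 80.0, "sustain_minutes": 15},
    "error_rate_pct": {"limit": 0.1, "sustain_minutes": 0},
    "cpu_pct": {"limit": 75.0, "sustain_minutes": 10},
    "memory_pct": {"limit": 85.0, "sustain_minutes": 0},
}
TEMPERATURE_MARGIN_C = 10.0


def temperature_limit_c(max_rated_c):
    """Alert point: 10 degrees C below the device's maximum rated temperature."""
    return max_rated_c - TEMPERATURE_MARGIN_C


def error_rate_pct(error_packets, total_packets):
    """Errors as a percentage of all packets seen in the same interval."""
    if total_packets == 0:
        return 0.0
    return 100.0 * error_packets / total_packets


def breached(metric, value):
    """True when a single reading is above the metric's limit."""
    return value > THRESHOLDS[metric]["limit"]


if __name__ == "__main__":
    rate = error_rate_pct(15, 10_000)
    print(rate, breached("error_rate_pct", rate))
    print(temperature_limit_c(45.0))
```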

Step 4: Dashboard creation organizes information by audience and purpose. Build a network operations center view showing overall switch health across all sites. Create a capacity planning dashboard highlighting utilization trends and growth rates. Develop device-specific views for troubleshooting individual switches showing port details, error counts, and recent config changes.

Effective dashboards answer specific questions quickly: "Do we have any critical issues right now?" "Which links need capacity upgrades this quarter?" "What changed on this switch when the problem started?"

Step 5: Alert routing connects detection to response. Configure critical alerts to page on-call engineers via PagerDuty or VictorOps (now Splunk On-Call). Route warnings to create tickets in your ITSM system for business-hours review. Send informational alerts to team Slack channels for awareness. Match alert severity to response urgency.
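
In code, that routing policy is a small lookup table. The sketch below uses placeholder action names rather than real PagerDuty, ITSM, or Slack API calls, and it sends unknown severities to the ticket queue so nothing is silently dropped; that fallback is a design assumption.

```python
# Placeholder action names; a real system would map these to integrations.
ROUTES = {
    "critical": "page_on_call",
    "warning": "create_ticket",
    "info": "post_to_chat",
}
DEFAULT_ROUTE = "create_ticket"


def route_alert(alert):
    """Pick a delivery action from the alert's severity field."""
    return ROUTES.get(alert.get("severity"), DEFAULT_ROUTE)


if __name__ == "__main__":
    print(route_alert({"severity": "critical", "message": "uplink down"}))
    print(route_alert({"severity": "info", "message": "config backup complete"}))
```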

Step 6: Documentation and training ensures sustainable operations. Document access procedures, common troubleshooting workflows using the new tools, and dashboard purposes. Run hands-on training where team members practice investigating simulated issues. Record training sessions for new hires.

Initial setup typically takes 1-2 days for 50 switches, including discovery, configuration, basic dashboards, and documentation. Expect another 2-4 weeks of threshold tuning and workflow refinement as your team gains experience.

Comparison of Network Switch Monitoring Approaches

| Approach | Pros | Cons | Best Use Cases | Complexity |
| --- | --- | --- | --- | --- |
| SNMP Polling | Works across all vendors, proven and stable, minimal switch overhead, simple credential management | Fixed intervals may miss brief events, limited to vendor-exposed MIBs, community strings pose security challenges in v2c | General health monitoring, port statistics, environmental sensors, standard operational metrics | Low |
| NetFlow/sFlow | Deep traffic visibility, identifies top talkers and applications, enables security analysis, assists capacity planning | Consumes switch CPU generating flows, produces massive data volumes requiring storage, needs separate collection infrastructure | Traffic pattern analysis, application performance investigation, security threat detection, bandwidth accounting | Medium |
| CLI-Based (SSH) | Accesses all switch data including proprietary features, retrieves information unavailable via SNMP, works with legacy devices | Creates high switch overhead, demands secure credential storage, output parsing is brittle, significantly slower than SNMP | Configuration backups, accessing vendor-specific diagnostics, managing older equipment lacking modern APIs | Medium-High |
| Agent-Based | Provides real-time event streaming, enables efficient collection, exposes advanced metrics, reduces polling overhead | Requires software installation on switches (often impossible), increases maintenance complexity, limited vendor support | Specialized environments with agent support, situations demanding sub-second event notification | High |

Conclusion

Network switch monitoring transforms infrastructure management from putting out fires to preventing them. The right tools surface what's happening across your switch infrastructure—health status, performance trends, configuration changes—enabling faster troubleshooting and catching issues before users notice.

Success requires more than software deployment. Establish performance baselines before setting thresholds. Tune alerts aggressively to prevent notification fatigue. Integrate monitoring into daily operational workflows rather than treating it as a separate system. Review collected data regularly for capacity planning instead of letting it accumulate unused.

Cost considerations extend beyond initial licensing. Account for implementation services (often 20-30% of purchase price), staff training time, ongoing support contracts, and infrastructure requirements if running on-premises. Some vendors price per-switch, others per-port or per-monitoring-server. Calculate 3-5 year total ownership costs rather than comparing only first-year expenses when evaluating options.

Start with foundational capabilities—SNMP-based health monitoring and performance metric collection—before layering on advanced features like traffic flow analysis or automated configuration compliance. Organizations treating monitoring as continuous improvement rather than one-time projects realize the greatest value, adapting approaches as infrastructure and requirements evolve.

The investment pays back through reduced downtime, faster incident resolution, and data-driven infrastructure planning. When done well, monitoring shifts from "something we should do" to "how operations actually work."

