Modern enterprise data center corridor with server racks, fiber optic cables, and a SAN switch with glowing port indicators

Storage Area Network Devices Guide

Picture your data center in 1997. Every server had its own stack of hard drives. Some servers were bursting at the seams while others sat 80% empty. You couldn't move capacity around without rebuilding machines. Backups? Each server needed its own strategy. That chaos drove enterprise IT toward centralized storage pools accessed through dedicated networks—what we now call storage area networks.

A SAN operates fundamentally differently than strapping disks directly into servers. When your database server writes transaction data, it's not talking to local SCSI drives. Instead, specialized network cards send block-level commands across high-speed fabrics to remote storage arrays. Your operating system genuinely can't distinguish between a local disk and a SAN volume—that transparency matters for compatibility and performance.

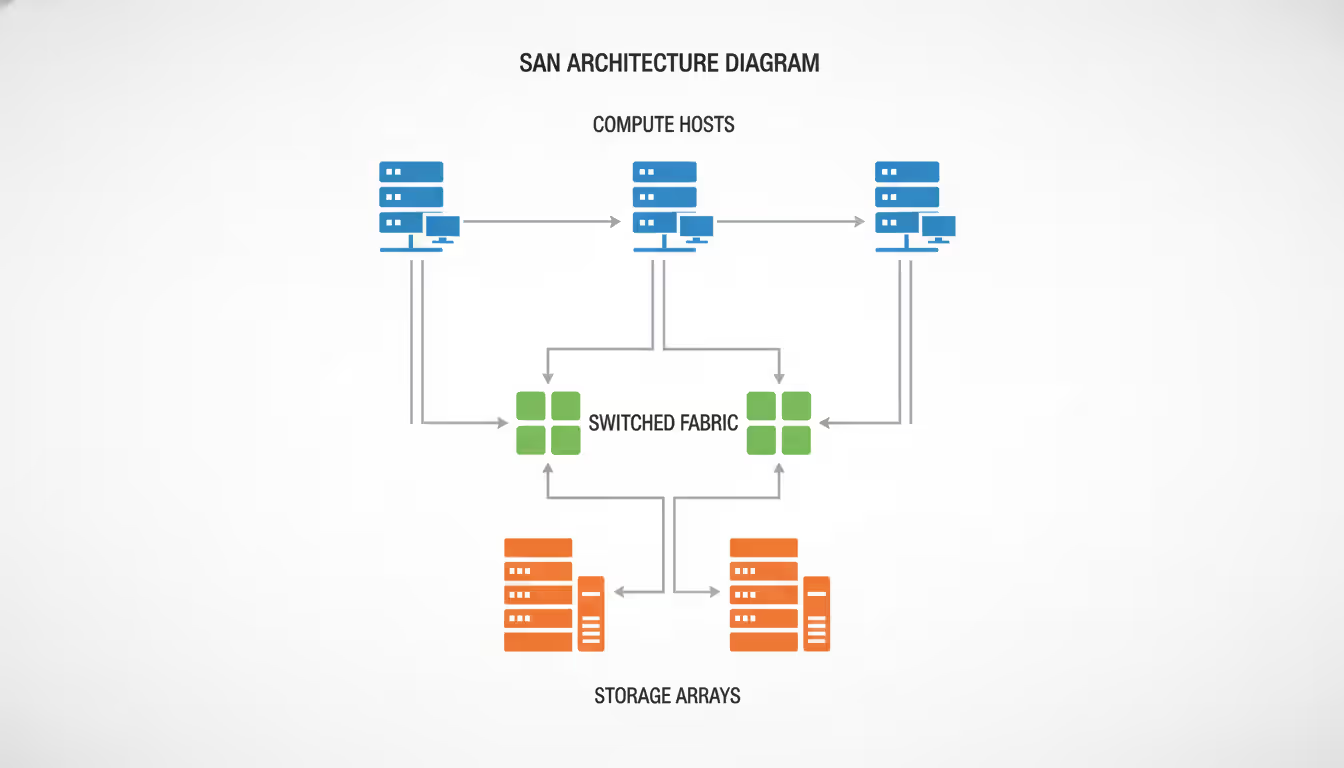

The architecture splits into three distinct tiers: compute hosts running applications, a switched fabric network handling storage traffic, and the actual storage arrays with their spindles or flash drives. This separation emerged from necessity—earlier attempts at "everything connected to everything" created maintenance nightmares where touching one component risked breaking unrelated systems.

Block-level access explains why databases love SANs. File-level protocols like NFS or SMB impose overhead checking permissions, updating directory metadata, and managing file handles. Block protocols skip straight to "write these specific sectors"—lower overhead translates directly to reduced latency.

What Is a Storage Area Network

The fundamental value proposition centers on pooling. Rather than provisioning 2TB to Server A and watching it sit 70% empty while Server B runs out of space, you create a shared reservoir. Server B grabs what it needs from available capacity. When that development project wraps up and returns 3TB to the pool, your Exchange environment can consume it immediately.

Storage area network san architectures assume component failure as inevitable. Drives fail at predictable rates—Backblaze publishes annual failure statistics showing 1-2% of drives dying each year in production environments. Cables get snagged during maintenance. Switch power supplies burn out. SAN designers counter this reality with redundancy at every layer: dual fabrics, dual HBAs per host, dual controllers per array, dual power circuits from separate panels.

The storage area network meaning crystallized around 1998 when Fibre Channel standardization hit critical mass. Companies could finally mix switches from Brocade with arrays from EMC and HBAs from Emulex—before standardization, every vendor's SAN solution was proprietary soup requiring single-vendor lock-in.

Contrast this with direct-attached storage where your database server has eight internal drives—simple but inflexible. Or network-attached storage which presents file shares over Ethernet—easy to understand but higher latency because of the file-system overhead. SANs occupy the sweet spot for applications needing both performance and flexibility.

One financial services client explained their 2019 transition from DAS to SAN: previously, their trading database lived on local flash drives in a two-node cluster. When storage filled up, they scheduled weekend downtime to physically install new drives and rebalance data—three times yearly. Post-SAN, they provision additional volumes from the pool in fifteen minutes without touching the database servers. Annual maintenance windows dropped from six to zero.

Author: Vanessa Norwood;

Source: clatsopcountygensoc.com

Core Storage Area Network Devices and Components

SAN Switches and Directors

Your fabric switches form the connective tissue. Entry-level 24-port models work fine for small shops—maybe a dozen servers connecting to two storage arrays. You'll spend $8,000-15,000 per switch. Problems emerge around 30+ servers when you exhaust port counts and start daisy-chaining switches, adding latency and complexity.

Directors represent the enterprise answer. Picture a chassis accepting hot-swappable blade modules, each blade sporting 24-48 ports. Total port density might hit 384 ports in a single chassis. Redundant supervisor modules prevent single points of failure—if one supervisor dies, the standby instantly takes over without dropping frames. These beasts start around $90,000 and climb past $200,000 when fully loaded.

Watch the oversubscription specifications. Marketing claims "2 Tbps total bandwidth!" sound impressive until you divide by port count and realize that assumes perfectly balanced traffic patterns. Real-world workloads aren't balanced—backup windows see everyone hammering storage simultaneously. A 48-port switch advertising 1.6 Tbps total throughput (2:1 oversubscription) delivers roughly 16 Gbps per port under full load, not the 32 Gbps each port theoretically supports. Core switches handling tier-1 workloads need non-blocking architectures where every port sustains wire speed simultaneously.

Modern switches pack diagnostic capabilities that would've seemed magical fifteen years back. They track frame-level metrics, measure queue depths at microsecond granularity, identify misbehaving devices causing fabric-wide slowdowns, and predict cable failures by analyzing error rate trends before complete failure. One hospital IT director told me they identified a flaky cable showing elevated CRC errors only under heavy load—something they'd chased for weeks as a "mystery Monday morning slowdown." Switch analytics pinpointed it in 45 minutes.

Zoning implements logical segmentation. You absolutely don't want development servers discovering production database LUNs—seen too many incidents where someone accidentally mounts prod volumes to a test environment and corrupts them. Zones control which initiator ports (the HBAs in your servers) can even see which target ports (storage array front-end connections). Security failures here have caused spectacular outages.

Host Bus Adapters (HBAs)

These PCIe adapters bridge your server's internal bus to the external SAN fabric. Don't cheap out with onboard converged network adapters unless you've validated they handle your I/O load—dedicated HBAs contain specialized ASICs offloading protocol processing, checksum calculations, and error recovery from your application CPUs.

Always specify dual-port cards. The second port isn't passive backup—both carry active traffic through multipathing software. Path failures happen constantly (cable maintenance, switch reboots, transceiver failures), and multipathing automatically redistributes I/O to healthy paths within seconds. Single-port HBAs save perhaps $150 per server while introducing catastrophic single-point failures. Terrible trade-off.

Firmware compatibility nightmares occur more often than vendors admit. Real example from 2023: customer ran HBA firmware 11.4.330.12, switch code 9.1.1, array firmware 6.5.0.13. Everything hummed along beautifully for nine months. They installed a security patch upgrading array firmware to 6.5.1.2, and suddenly experienced intermittent path flapping every 20-90 minutes. Three emergency troubleshooting calls later, they discovered that specific firmware combination wasn't qualified—HBA needed version 11.4.520 or newer to work reliably with array 6.5.1.x. One hour of planned HBA firmware updates would've prevented sixteen hours of unplanned disruption.

Keep a compatibility matrix spreadsheet religiously. Before any firmware update anywhere in your stack, cross-reference vendor interoperability matrices. Test in non-production environments first. Yes, this is tedious bureaucracy. It's also how you avoid explaining to your CFO why the ERP system crashed during month-end close.

Storage Arrays and Disk Systems

Arrays provide the actual persistence layer. Enterprise models feature active-active dual controllers—both simultaneously handling I/O rather than one sitting idle as cold standby. Each controller sports its own CPU complex, cache memory (typically 128GB-1TB nowadays), and multiple front-end network ports.

Controllers contain massive cache acting as high-speed buffers. Write operations acknowledge to the host immediately upon hitting cache (protected by battery backup or supercapacitors), then destage to physical media during idle cycles. This explains why arrays can acknowledge writes faster than the physical drives could possibly handle—cache absorption.

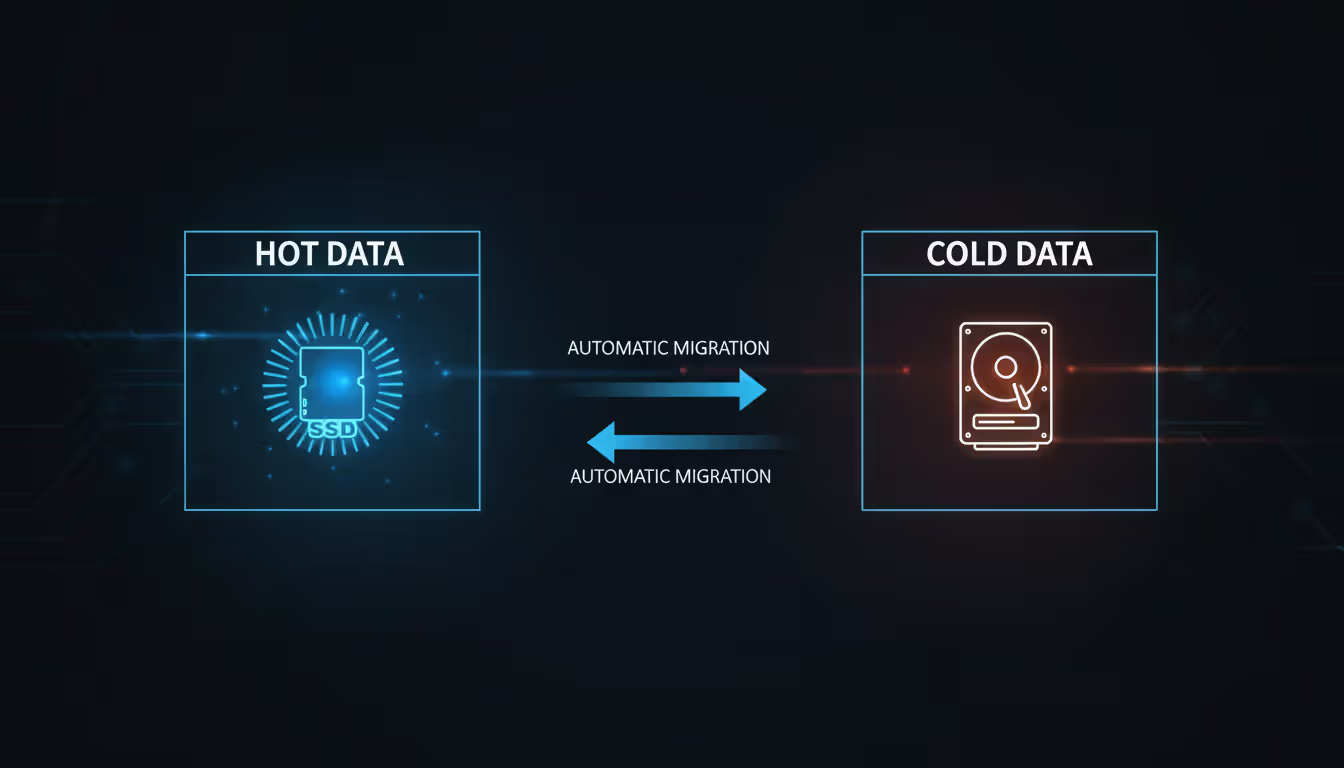

Automated tiering became standard around 2015. Arrays continuously monitor which logical blocks get accessed frequently, keeping hot data on expensive flash SSDs while migrating cold blocks to cheaper nearline SAS drives. One insurance company described their document management system storage costs dropping 47% after enabling tiering—current year's policy scans stayed on flash for claims adjuster speed, but decade-old documents moved to spinning rust at one-eighth the per-GB cost.

Snapshots provide point-in-time copies using copy-on-write mechanisms. The initial snapshot consumes essentially zero space—just metadata pointers. As blocks change, the array preserves previous versions before overwriting. Common pattern: hourly snapshots retained for 72 hours. When somebody inevitably corrupts the payroll database at 2pm (happens more than you'd think), restore from the 1pm snapshot rather than recovering from last night's tape backup.

Replication comes in synchronous and asynchronous variants. Synchronous writes to both local and remote arrays before acknowledging—your RPO (recovery point objective) stays at zero because both copies always match perfectly. The constraint? Distance limitations around 100 miles due to speed-of-light latency killing application performance beyond that. Asynchronous replication ships data in scheduled batches, tolerating minutes of RPO, but works across continents.

Author: Vanessa Norwood;

Source: clatsopcountygensoc.com

SAN Management Software

Control planes matter as critically as data planes. Management platforms discover every device in your fabric topology, mapping the complex web of initiator-to-target relationships. Quality software auto-generates topology diagrams—invaluable when troubleshooting at 3am trying to understand why Server-23 suddenly can't reach Storage-Array-5.

Capacity forecasting modules track consumption trends and project exhaustion dates. If you're growing at 4TB monthly and currently sit at 185TB of 240TB capacity, the software alerts you that you'll hit 80% full in 13 weeks—time to order expansion shelves accounting for procurement and installation lead times.

Performance analytics correlate resource consumption patterns with application behavior, spotting problems like backup jobs saturating fabric bandwidth during business hours—schedule them differently, or upgrade bandwidth, or implement QoS throttling.

Self-service provisioning transformed operations for one client running a 400-VM VMware environment. Previously, requesting storage meant emailing the SAN team (response time: two business days), then coordinating with VMware admins to rescan and format new volumes. After implementing automated workflows, developers request capacity through a web portal, and within 20 minutes the management software provisions from the appropriate tier, creates necessary zones, presents to the target cluster, and even formats the VMware datastore—zero human intervention required.

Integration with Infrastructure-as-Code tools (Ansible, Terraform, Pulumi) enables declaring storage alongside compute and network resources. Spin up complete environments by executing playbooks that provision everything consistently. Configuration drift between dev and prod environments—a massive source of subtle bugs—evaporates when identical automation builds both.

How Storage Area Network Protocols Work

Storage area network protocols determine how bits physically move from server to storage—and the differences impact performance, cost, and operational complexity more than marketing materials suggest.

Fibre Channel dominated enterprise SANs for two decades. This purpose-built protocol runs over specialized optical or copper cables (the "Fibre" name refers to the logical topology, not cable type—copper works fine for short distances under 15 meters). Current implementations hit 128 Gbps per link, with 256 Gbps specifications finalized. FC was designed from scratch for lossless storage traffic, incorporating hardware-level flow control, in-order delivery guarantees, and credit-based buffer management. This clean-sheet design explains consistently low latency—sub-500-microsecond round-trip times are routine.

The catch? FC demands specialized everything. Your network team's deep Cisco Catalyst expertise provides zero help with Brocade FC directors—completely different command syntax, different architectural concepts, different troubleshooting methodologies. HBAs cost 3-5x equivalent Ethernet NICs. Optical transceivers run $400-800 each versus $80-150 for Ethernet SFPs. One manufacturing client calculated their FC infrastructure ran 2.8x the total cost of comparable-bandwidth iSCSI, though performance advantages justified the premium for their SAP HANA in-memory database.

iSCSI wraps SCSI commands inside TCP/IP packets, enabling SANs over standard Ethernet infrastructure. Your existing switch fleet works (with appropriate tuning). Your network engineers already troubleshoot TCP/IP all day. 25 GbE and 100 GbE networks deliver abundant bandwidth for most workloads—a properly configured iSCSI SAN routinely sustains 100,000+ IOPS with 3-5ms latency, perfectly adequate for virtualization clusters or mid-tier databases.

Early iSCSI (2003-2008 era) suffered terrible CPU overhead—servers burned 25-35% of CPU cycles just handling TCP processing. Modern converged network adapters with iSCSI offload engines eliminate this tax, pushing protocol work to dedicated silicon. Enable jumbo frames (9000-byte MTU instead of 1500) to reduce per-packet overhead—improves throughput by 15-25% in testing. Configure flow control preventing buffer overflows during congestion. Dedicate VLANs exclusively to storage traffic, isolating it from user network congestion.

FCoE attempted converging storage and data networks by encapsulating FC frames inside Ethernet. The value proposition seemed compelling: one unified fabric for everything, eliminating separate cable plants. Reality proved messier. FCoE requires lossless Ethernet implemented through Data Center Bridging extensions—not all switches support DCB properly, and troubleshooting converged networks grows exponentially harder than maintaining separate fabrics. Adoption essentially stalled by 2017 as most organizations concluded they preferred running native FC or native iSCSI rather than wrestling with FCoE complexity.

NVMe over Fabrics represents the current performance frontier. Traditional SCSI commands trace lineage back to 1986 parallel SCSI buses—forty years of backwards-compatibility kludges accumulated. NVMe was designed specifically for modern flash storage, eliminating legacy baggage. Queue depths explode from SCSI's typical 32-256 outstanding commands to 64,000+ per queue. Latency drops into single-digit microseconds. All-flash arrays with NVMe back-ends can finally deliver their full performance potential without protocol bottlenecks limiting throughput.

You can transport NVMe over RDMA-capable Ethernet (RoCE v2), InfiniBand, or even Fibre Channel (FC-NVMe specification). Adoption accelerated sharply through 2023-2024 as driver stability improved and array vendors standardized implementations. Designing a greenfield all-flash SAN today? NVMe-oF deserves serious consideration.

| Protocol | Maximum Speed | Infrastructure Cost | Implementation Complexity | Ideal Use Cases |

| Fibre Channel | 128 Gbps (256 Gbps emerging) | High—specialized HBAs, switches, cables | Moderate—requires new skill development | Mission-critical databases, tier-1 transactional systems, high-availability clusters |

| iSCSI | 100 Gbps | Low—leverages standard Ethernet | Easy—builds on existing IP knowledge | Budget-conscious deployments, branch offices, development/test environments |

| FCoE | 100 Gbps | Moderate—requires DCB-capable switches | High—complex convergence configuration | Limited adoption—mostly legacy brownfield environments |

| NVMe-oF | 200+ Gbps | High initially (dropping as adoption grows) | Moderate—maturing tools and documentation | All-flash arrays, latency-sensitive applications, high-performance computing |

Types of Storage Area Networks

Types of storage area networks span multiple dimensions—transport protocol, physical topology, and deployment model all influence design decisions.

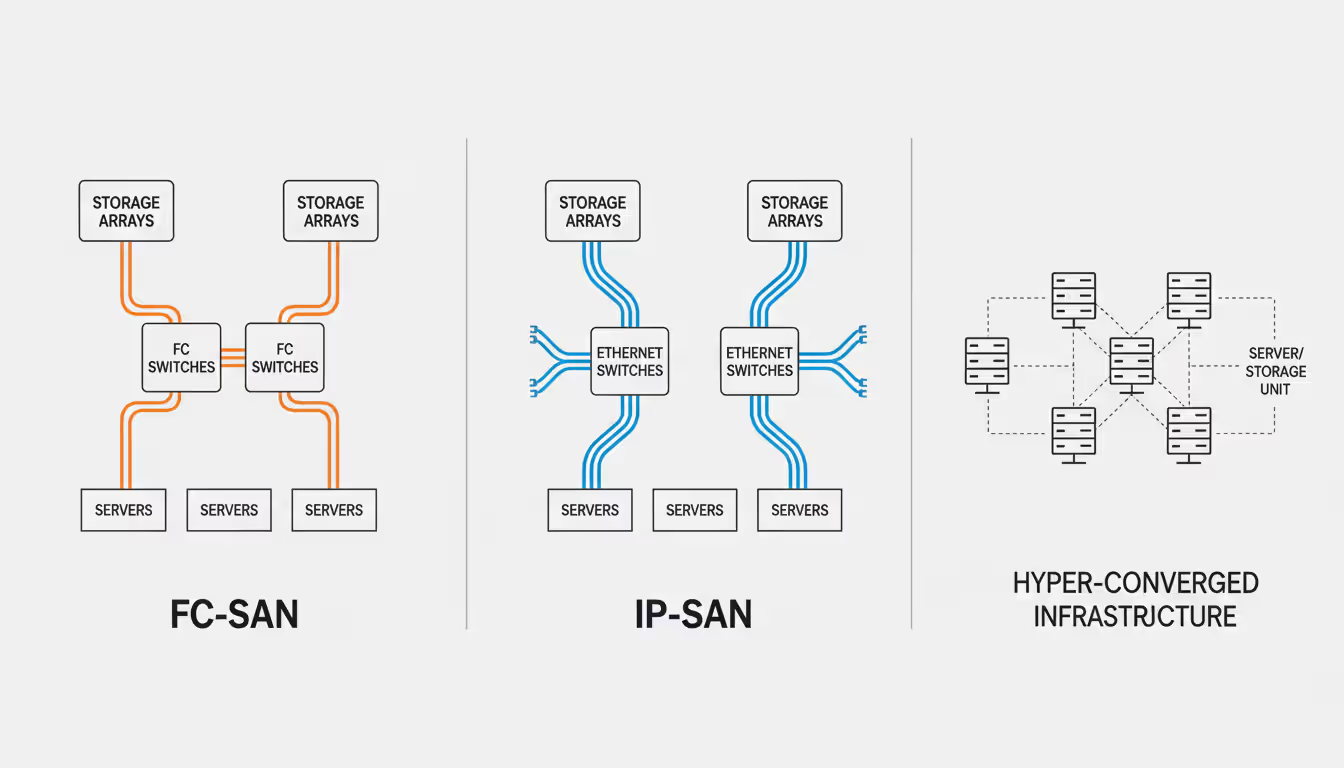

FC-SANs dominate traditional enterprise data centers. These networks employ Fibre Channel switches creating redundant fabric meshes. Standard architecture uses completely independent dual fabrics: Fabric-A and Fabric-B share nothing. Each server connects to both fabrics through separate HBAs. Storage arrays expose every volume through ports on both fabrics. If Fabric-A suffers catastrophic failure (someone fat-fingers a config wipe—this genuinely happens), traffic flows entirely through Fabric-B. Servers experience path failover messages in logs but applications continue running.

IP-SANs built on iSCSI appeal to organizations with deep Ethernet expertise but limited FC knowledge, and to cost-conscious buyers where performance requirements don't justify FC premiums. Best practices mandate dedicated storage VLANs (never mix storage and user traffic on the same broadcast domain), jumbo frames enabled consistently across the entire path, and flow control configured preventing buffer overflows during congestion. Converged network adapters combining standard Ethernet connectivity with iSCSI offload engines reduce PCIe slot consumption—valuable in dense 1U servers with limited expansion slots.

Unified SANs present multiple protocols from shared physical storage pools. Your array might simultaneously serve FC LUNs to Oracle RAC clusters, iSCSI volumes to Exchange mailbox servers, and NFS exports to VMware ESXi hosts—all carved from the same underlying capacity pool. This flexibility improves utilization (one pool to manage instead of three protocol-specific silos) but requires careful performance monitoring. You can't allow bulk file copies over NFS to starve FC database traffic of IOPS.

Hyper-converged infrastructure fundamentally inverts traditional SAN architecture. Instead of centralized arrays accessed over networks, HCI distributes storage across cluster nodes using each server's internal drives. Software-defined storage creates virtual SANs using 10 GbE or 25 GbE interconnects between nodes. Volume replication ensures data survives node failures. HCI works beautifully for virtual desktop infrastructure, remote offices, and edge computing where dedicated SAN infrastructure would be economic overkill. Performance lags purpose-built SANs for I/O-intensive workloads—you're using consumer-grade SSDs and Ethernet, not enterprise storage arrays with dedicated FC fabrics.

Topology choices range from simple direct-attach (server connects directly to storage without intervening switches) through flat single-tier switched designs to complex core-edge hierarchies. Direct-attach works for two-node clusters but doesn't scale—every server needs dedicated cables to every array, and cable management becomes unmanageable beyond 6-8 servers. Flat topologies with all devices connecting to one switch layer suit mid-sized environments up to perhaps 40 servers.

Large enterprises deploy core-edge hierarchures: high-density directors at the core (512+ ports, massive backplane throughput, maximum redundancy features), edge switches in server racks uplink to the core. Traffic flows host → edge switch → core director → storage, analogous to spine-leaf architectures in modern data center networks. This design scales to hundreds of servers while maintaining manageable structured cabling.

Author: Vanessa Norwood;

Source: clatsopcountygensoc.com

Storage Area Network Examples in Real-World Use

Storage area network examples reveal how diverse industries exploit SAN characteristics solving specific operational challenges.

Healthcare imaging systems generate absurd data volumes. A 400-bed hospital's radiology department produces 2.5-3.5 TB daily from CT scanners, MRI machines, and digital X-ray systems. One regional hospital network deployed a 64 Gbps FC-SAN connecting their PACS (Picture Archiving and Communication System) to 5.2 PB of tiered storage. Radiologists demand instant access—a chest CT might consume 650 MB, and they're reviewing 25-40 studies hourly. Flash tier maintains recent exams (last 90 days) with sub-80ms retrieval latency. Automated tiering moves older studies to 7200 RPM nearline SAS drives, balancing performance against per-gigabyte costs. Synchronous replication to a disaster recovery site 12 miles distant ensures patient records survive site-level failures—critical for HIPAA compliance and operational continuity during emergencies.

Financial services trading platforms cannot tolerate milliseconds, let alone seconds. One commodities exchange rebuilt their matching engine using NVMe-oF connecting application servers to all-flash arrays, achieving 180-microsecond average order execution latency—measured from order entry to confirmation. Synchronous replication maintains bit-identical copies at a secondary data center 35 miles away—metro distance keeping speed-of-light latency tolerable. They execute failover drills quarterly, actually cutting primary site power, verifying trading systems fail over to secondary within their 15-second RTO (recovery time objective). Every component incorporates redundancy: dual HBAs, dual fabrics, dual array controllers, dual power feeds from separate utility grids.

Virtualization platforms pack hundreds of VMs onto shared storage. A managed services provider runs 1,800 tenant VMs on an iSCSI SAN using 100 GbE connectivity. Arrays with thin provisioning let them over-subscribe capacity (provision 1.2 PB to tenants from 600 TB physical pool—most VMs consume 35-50% of allocated space). Hourly snapshots provide tenant-controlled recovery from accidental deletions without administrator involvement. Quality-of-service features guarantee minimum IOPS per tenant, preventing noisy neighbors from monopolizing storage performance. Integration with VMware vSphere enables vMotion and Storage vMotion, migrating live VMs between hosts and datastores without downtime—essential for maintenance windows and load balancing.

Media production studios push sequential throughput to extremes. A visual effects house working on 8K feature film content needs sustained 30+ GB/s aggregate throughput for a dozen editors simultaneously accessing the same raw footage. They deployed hybrid architecture: 64 Gbps FC-SAN backend connected to high-performance NAS gateways presenting storage to editing workstations via 100 GbE and parallel NFS. The SAN provides enterprise reliability and snapshot capabilities (critical protecting months of rendering work), while the NAS layer delivers concurrent multi-stream throughput that Avid Media Composer and DaVinci Resolve workflows demand. Active projects live on all-flash, completed projects tier to archive storage, and final deliverables replicate to S3-compatible cloud object storage for long-term retention.

We've watched SAN transport evolve from 1 Gbps Fibre Channel—which seemed impossibly fast in 1998—through 128 Gbps FC and now NVMe-oF pushing past 200 Gbps. The genuine revolution isn't raw speed, though. It's that storage stopped being the bottleneck. Modern applications max out CPU or exhaust memory long before storage becomes the limiting factor. Organizations that properly architect SAN infrastructure essentially eliminate storage latency as a constraint on application performance—that translates directly to competitive advantage when you're processing transactions or serving customers faster than competitors still fighting I/O waits

— Elena Volkov

Choosing the Right SAN Devices for Your Infrastructure

Performance requirements should drive component selection, not vendor marketing slideware. Start by profiling actual workload characteristics. That SQL Server supporting your financial system—capture real IOPS, sequential throughput, block sizes, and read/write ratios using perfmon over two weeks including quarter-end processing. You might discover it peaks at 42,000 random read IOPS with 8KB blocks but averages 5,500 IOPS most hours. Those measured numbers determine whether you need all-flash or can use hybrid arrays with intelligent read caching.

Latency sensitivity varies dramatically by application type. High-frequency trading platforms notice every 50 microseconds—NVMe-oF or 64 Gbps FC makes economic sense here. ERP batch jobs running overnight can tolerate 12ms latency without user impact—economical iSCSI suffices. Measure end-to-end latency including all network hops, storage controller processing queues, and actual media response times. Don't assume FC automatically guarantees low latency if you're routing through four switch hops and hitting overloaded array controllers sharing resources across 80 other hosts.

Scalability planning prevents expensive forklift upgrades. Choose arrays offering independent capacity and performance scaling. You should be able to add drive shelves without controller replacement, and upgrade controllers without drive migration. One healthcare client purchased a "massively scalable" array that hit controller bottlenecks at 180,000 IOPS—adding more flash drives didn't improve performance because controllers couldn't push additional I/O. They replaced the entire array 22 months post-purchase. Validate scaling claims with customer references running similar workload profiles at your target scale.

Budget conversations need encompassing total cost of ownership, not just acquisition sticker prices. That $210,000 FC-SAN might incur $32,000/year in vendor support contracts (15% of purchase price annually), consume $9,500/year in power and cooling, and require a dedicated administrator costing $135,000/year. The $72,000 iSCSI SAN costs $11,000/year in support, $3,200 in power, and your existing network team manages it. Over five years, TCO becomes $725,000 vs. $398,000—suddenly the "premium" option costs 82% more. Run these numbers honestly before deciding.

Vendor ecosystem dynamics affect long-term success. Scrutinize interoperability matrices obsessively—does your preferred storage vendor certify their arrays with your chosen switch manufacturer? Multi-vendor SANs introduce complexity and potential finger-pointing during outages ("Brocade claims it's a Pure Storage problem; Pure says it's Brocade—both want packet captures and more logs before escalating"). Some organizations deliberately standardize on single-vendor stacks (Dell/Dell, HPE/HPE) accepting lock-in in exchange for simplified support escalation. Others intentionally choose best-of-breed switches with multi-vendor storage, accepting complexity for flexibility. Neither approach is objectively wrong—match the strategy to your team's capabilities and organizational risk tolerance.

Author: Vanessa Norwood;

Source: clatsopcountygensoc.com

Common SAN Implementation Mistakes to Avoid

Bandwidth undersizing creates bottlenecks that even expensive storage can't overcome. Seen multiple all-flash arrays capable of 400,000 IOPS connected via six 16 Gbps FC links—theoretical maximum around 9.6 GB/s, but the array can deliver 18+ GB/s to properly connected hosts. Storage hardware sits 50% idle while applications queue waiting for I/O. The fabric became the constraint. Match interconnect bandwidth to storage capabilities generously, and monitor utilization continuously. Seeing 65% link utilization during normal operations? You'll hit 100% during backup windows or monthly financial close, introducing queuing delays that ripple through the application stack.

Poor zoning practices create security vulnerabilities and troubleshooting nightmares. Worst-case scenario: one giant zone containing all servers and all storage. Every host can see every LUN—when someone makes a mistake (and someone always eventually does), they might mount production database volumes to a test server and irreversibly corrupt them. Use single-initiator single-target zoning where practical: Server-SQL01-HBA1 can exclusively see Storage-Array-A-Port3, nothing else. Yes, this creates more zones to document and manage. The management overhead is trivial compared to recovering from cross-mounting disasters or investigating unexplained storage access during security audits.

Inadequate redundancy undermines the entire SAN value proposition. You architected a SAN for high availability, then deployed single-fabric because budget constraints? A switch failure or configuration mistake brings down every application simultaneously. One insurance company lost their single-fabric SAN for seven hours when a junior network engineer accidentally pushed the wrong configuration file during what should've been a minor update. Recovery required manually recreating 180+ zones from outdated documentation. Dual fabrics would've limited the blast radius to 50% capacity reduction, allowing applications to stay operational on the surviving fabric while calmly fixing the broken one.

Lack of proactive monitoring leaves you operationally blind until failures occur. Implement continuous monitoring of link errors, frame discards, queue depths, port utilization trends, and latency percentiles. Establish graduated thresholds: informational alert at 55% utilization, warning at 70%, escalation at 82%, critical at 90%. Don't wait until you hit 97% to realize capacity expansion is urgent. One financial services firm discovered chronic fabric congestion only during post-incident forensics—they'd experienced intermittent application slowdowns for six weeks, but without monitoring, blamed application code performance.

Skipping realistic failure testing before production deployment invites disasters. "Failover tested" should mean you actually pulled cables, rebooted switches during peak load, powered off array controllers while I/O was active—not just reviewed architecture diagrams in meetings assuming theory matches reality. Load test at 110% of expected peak I/O levels, not idle synthetic traffic. Restore actual multi-terabyte datasets from backups validating recovery procedures—don't assume backups work because the software reports "success." One retail company discovered during an actual disaster that their backup solution had silently failed to capture SQL transaction logs for four months. Realistic testing would've caught this within days.

FAQ

Storage area network devices form the foundation supporting modern enterprise applications—from the host bus adapters in application servers through the fabric switches interconnecting everything to the arrays actually persisting your data. Each component fulfills specific roles delivering block-level storage at enterprise scale with the redundancy and performance that mission-critical workloads demand.

Choosing appropriate protocols requires matching technical capabilities to workload characteristics. Fibre Channel's maturity and consistently low latency justify cost premiums for tier-1 databases where every microsecond affects transaction throughput. iSCSI's accessibility and lower infrastructure costs suit mid-market deployments where 4-6ms latency is perfectly acceptable. NVMe over Fabrics represents the performance frontier for organizations pushing boundaries with all-flash infrastructure requiring sub-millisecond response times. Healthcare imaging systems, financial trading platforms, virtualization clusters, and media production studios all leverage these identical building blocks configured differently addressing their specific operational requirements.

Success flows from careful planning, proper implementation discipline, and ongoing management rigor. Avoid common pitfalls: undersized bandwidth, single-fabric designs eliminating redundancy, poor zoning practices creating security exposures, and inadequate monitoring leaving you blind to developing problems. Invest in training—either developing internal expertise through vendor courses and experience or engaging specialists who already possess it. Test failover scenarios extensively before production deployment, not during actual disasters when executives are demanding status updates.

As data volumes continue growing 28-40% annually and application performance expectations keep rising, well-architected SAN infrastructure provides scalable, reliable foundations businesses need competing effectively. Organizations that master SAN technology essentially eliminate storage as a constraint on application performance—letting databases, virtualization platforms, and analytics workloads reach their full potential rather than waiting on I/O.

Related Stories

Read more

Read more

The content on this website is provided for general informational and educational purposes related to cloud computing, network infrastructure, and IT solutions. It is not intended to constitute professional technical, engineering, or consulting advice.

All information, tools, and explanations presented on this website are for general reference only. Network environments, system configurations, and business requirements may vary, and results may differ depending on specific use cases and infrastructure.

This website is not responsible for any errors or omissions, or for actions taken based on the information, tools, or technical recommendations presented.