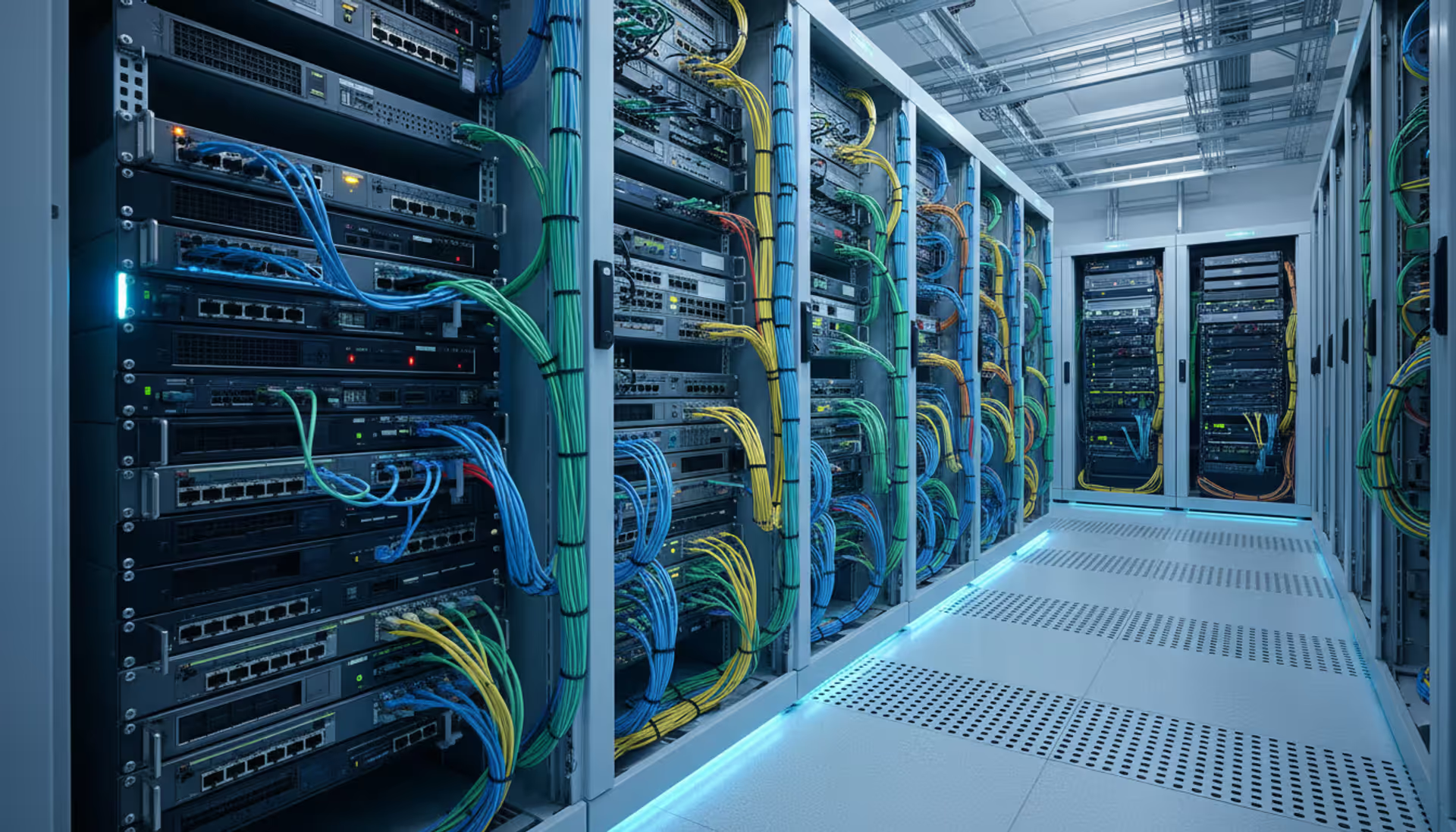

Modern enterprise server room with network racks, switches, routers, patch cables, and blue LED lighting along a clean aisle

How to Configure Network Devices?

Walk into any enterprise network room and you'll find racks of equipment humming away—routers, switches, firewalls, wireless controllers. But here's the thing: even expensive hardware becomes useless without proper configuration. I've watched networks grind to a halt because someone skipped a single VLAN setting. I've also seen small businesses lose customer data when firewalls shipped with default passwords that never got changed.

Your network's stability depends entirely on how well you configure each piece of equipment. Every device needs specific settings—IP schemes, security rules, routing decisions—customized to your environment. Rush through this process or skip documentation? Expect outages at 2 AM. Take the methodical approach? Your infrastructure becomes something you can actually trust.

The stakes keep climbing. A typical mid-sized company now manages 50-100 network devices instead of the 10-15 they had five years ago. Security threats multiply daily. One misconfigured switch can expose your entire VLAN structure to lateral attacks. That's why understanding configuration from planning through maintenance matters more than ever.

Understanding Network Device Configuration Basics

Think of configuration as programming your network hardware to make thousands of decisions per second. Should this packet go left or right? Does this traffic deserve priority? Is this connection attempt legitimate? You're setting parameters—IP addresses, routing tables, access rules, VLAN memberships—that answer these questions automatically.

Different devices need different attention. Routers? They're making decisions about inter-network traffic, so you'll configure routing protocols (OSPF, BGP, EIGRP), NAT rules for translating addresses, and WAN interfaces connecting to your ISP. Switches work locally, managing traffic inside your building through VLANs that segment departments, port configurations that set speed and duplex, and spanning tree settings preventing network loops. Firewalls get the most complex treatment—security policies defining what's allowed, zone configurations separating trust levels, and intrusion prevention rules blocking known attacks. Wireless access points? You're setting SSIDs, encryption standards (WPA3 these days), and radio frequencies to avoid interference.

Performance problems trace back to configuration mistakes more often than hardware failures. I've seen routers set with 1280-byte MTU values fragmenting every full-size packet unnecessarily, killing throughput. I've seen switches left on default spanning tree timers take up to 50 seconds to reconverge during failovers, and badly tuned QoS policies that prioritize bulk file transfers over VoIP calls.

Security suffers even worse. Default passwords stick around because "we'll change them later" becomes never. Unnecessary services—CDP, HTTP, Telnet—keep running because nobody explicitly disabled them. One device with lazy configuration becomes the entry point for everything else.

Building a complete network device inventory isn't glamorous work, but skip it and you're flying blind. Which switches run firmware version 12.2 with that critical vulnerability? Where exactly is that router handling your PCI-compliant traffic? What's the MAC address of the rogue access point someone plugged in Tuesday? Your inventory answers these questions—or it doesn't.

Pre-Configuration Requirements and Planning

Jumping straight to configuration commands guarantees problems. You need prep work first. Start by mapping your topology—not just boxes and lines, but actual traffic flows. Where does your guest WiFi traffic go? Which path does database replication take between sites? Draw it out. Physical diagrams show cable connections; logical diagrams show VLANs and routing. Both matter.

Your network device inventory needs depth. Sure, record model numbers and serial numbers, but also track firmware versions (Router-1 runs IOS 15.6, Router-2 runs 15.9), purchase dates (warranty expires March 2025), physical locations (Building C, Rack 12, U-position 24), and assigned roles (core routing, internet edge, management network). When something breaks at midnight, this detail separates quick recovery from hours of detective work.
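As a rough sketch of how those inventory fields could be modeled and queried, consider the following Python. The class name, field names, and sample devices are illustrative assumptions, not a schema from any particular inventory tool.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Device:
    """One inventory entry; field names are illustrative, not a standard schema."""
    hostname: str
    model: str
    serial: str
    firmware: str
    warranty_end: date
    location: str
    role: str


def on_firmware(devices, version):
    """Return hostnames of devices running exactly the given firmware version."""
    return [d.hostname for d in devices if d.firmware == version]


def warranty_expired(devices, today):
    """Return hostnames whose warranty ended before the given date."""
    return [d.hostname for d in devices if d.warranty_end < today]


# Hypothetical sample data for illustration only.
inventory = [
    Device("Router-1", "ExampleModel-A", "SN0001", "15.6",
           date(2025, 3, 31), "Building C, Rack 12, U24", "core routing"),
    Device("Router-2", "ExampleModel-A", "SN0002", "15.9",
           date(2026, 6, 30), "Building C, Rack 12, U26", "internet edge"),
]

print(on_firmware(inventory, "15.6"))
print(warranty_expired(inventory, date(2025, 4, 1)))
```

Even a structure this small answers the midnight questions ("which devices run that firmware?") without opening a spreadsheet.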

Author: Derek Hollowell;

Source: clatsopcountygensoc.com

IP addressing schemes seem straightforward until you've got 500 devices and realize your original plan allocated 30 addresses for a department now needing 200. Think ahead. Maybe 10.1.x.x addresses data VLANs, 10.2.x.x handles voice, 10.3.x.x covers management interfaces, 10.99.x.x serves guests. Document your subnet masks, default gateways, and DHCP ranges. Reserve blocks for future expansion. Write it down somewhere everyone can access.
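To make the sizing problem concrete, here is a minimal sketch using Python's standard `ipaddress` module. The 10.1.0.0/16 data block comes from the example plan; the helper name and the host counts are assumptions for illustration.

```python
import ipaddress


def smallest_prefix_for(hosts):
    """Return the longest IPv4 prefix length whose subnet holds `hosts` usable addresses."""
    for prefix in range(30, 0, -1):
        usable = 2 ** (32 - prefix) - 2  # minus network and broadcast addresses
        if usable >= hosts:
            return prefix
    raise ValueError("too many hosts for one IPv4 subnet")


# A /27 holds 30 hosts; growing to 200 hosts needs a /24.
print(smallest_prefix_for(30))
print(smallest_prefix_for(200))

# Carve the data block into /24s and hand out the first two.
data_block = ipaddress.ip_network("10.1.0.0/16")
subnets = data_block.subnets(new_prefix=24)
first = next(subnets)
second = next(subnets)
print(first, second)
```

Sizing each department for its likely future head count, rather than today's, is what keeps you from renumbering later.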

VLAN planning deserves serious thought. Putting everything in VLAN 1 means zero traffic isolation—your printers see your finance traffic. Bad idea. Split things up: VLANs 10-49 for departments, 50-79 for voice, 80-89 for management, 90-99 for guests. Between switches, you'll need trunk links carrying multiple VLANs on one cable. Plan which ports trunk and which provide access to end devices.
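A numbering plan like this is easy to encode so scripts can sanity-check new VLAN requests. The sketch below uses the ranges from the paragraph above; the function and variable names are hypothetical.

```python
# Ranges taken from the numbering plan described above.
VLAN_PLAN = [
    (range(10, 50), "department"),
    (range(50, 80), "voice"),
    (range(80, 90), "management"),
    (range(90, 100), "guest"),
]


def vlan_role(vlan_id):
    """Return the planned role for a VLAN ID, or None if it falls outside the plan."""
    for ids, role in VLAN_PLAN:
        if vlan_id in ids:
            return role
    return None


print(vlan_role(45))
print(vlan_role(1))
```

A `None` result is a useful signal: either the request is a mistake or the plan needs a new range.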

Security policies must exist before you touch a CLI. What's your password policy? Minimum 15 characters mixing types? Changed quarterly? Which management protocols make the cut? (SSH yes, Telnet absolutely not.) What encryption standards? (AES-256, not DES.) Who can access management interfaces? (Only from the 10.3.1.x management VLAN.) These decisions become configuration lines.

Step-by-Step Configuration Process

Initial Access and Default Settings

Unbox a new switch and it's basically wide open. Default usernames like "admin" with passwords matching "admin"—sometimes just blank fields. Manufacturers do this so you can actually get in, but leaving these defaults is like installing a deadbolt then taping the key to your door.

Connect via console cable first (USB-to-serial adapters work great). You'll need terminal software: PuTTY on Windows, screen on Mac/Linux. Typical settings are 9600 baud, 8 data bits, no parity, 1 stop bit, and no flow control, though some vendors default to a different baud rate, so check the device documentation. Hit enter a few times and you'll see a login prompt.

Once you're in, check the firmware immediately. Running version 2.1.3 when 2.4.8 is current? You're missing security patches and bug fixes. Visit the vendor's support portal—Cisco's software download section, Juniper's support site, HPE's firmware library. Download the latest stable release (not beta versions unless you enjoy unpredictable behavior). Upgrade now before configuring anything else.

Set the system clock or point to NTP servers right away. Sounds minor, but try correlating logs across devices when their clocks drift 20 minutes apart. Configure at least two NTP servers: ntp server 216.239.35.0 and ntp server 216.239.35.4 for Google's public NTP, or better yet, run an internal NTP server synchronized externally.

Hostname assignment seems trivial until you're staring at logs from "Switch1" and "Switch2" wondering which building they're in. Use systematic names: ATL-B3-SW-Core-01 tells you Atlanta, Building 3, Switch, Core role, unit 1. Future you will appreciate the clarity.
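One payoff of a systematic naming scheme is that scripts can parse it. The following is a small sketch assuming the exact pattern of the example name (three-letter site, building number, device type, role, two-digit unit); the regular expression and field names are my own assumptions and would need adjusting to your convention.

```python
import re

# Pattern modeled on the example "ATL-B3-SW-Core-01"; adjust to your own scheme.
HOSTNAME_PATTERN = re.compile(
    r"^(?P<site>[A-Z]{3})-B(?P<building>\d+)-(?P<device>[A-Z]+)"
    r"-(?P<role>[A-Za-z]+)-(?P<unit>\d{2})$"
)


def parse_hostname(name):
    """Split a hostname into its parts, or return None if it breaks the convention."""
    match = HOSTNAME_PATTERN.match(name)
    return match.groupdict() if match else None


print(parse_hostname("ATL-B3-SW-Core-01"))
print(parse_hostname("Switch1"))
```

Running a check like this against your inventory quickly surfaces devices that were named outside the convention.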

Core Configuration Parameters

Start configuring interfaces after basic setup completes. Management interfaces need IP addresses first—you want remote access before unplugging that console cable. On switches, configure VLAN interfaces (SVIs). On routers, configure the loopback interface for management traffic (it stays up even when physical interfaces fail).

WAN interfaces connect to your ISP and need their provided settings—maybe a static IP like 203.0.113.45/30, or PPPoE credentials if you're on DSL/fiber. LAN interfaces use your internal addressing: 10.1.1.1/24 on the finance VLAN, 10.1.2.1/24 on engineering. Remember to bring interfaces up—many ship in administratively down state requiring "no shutdown" commands.

Routing configuration depends on your scale. Three locations with one path between them? Static routes work fine: ip route 10.2.0.0 255.255.0.0 198.51.100.1. Managing 15 locations with multiple paths? You need dynamic routing. OSPF works well for single organizations, BGP when connecting to multiple ISPs or managing complex policies. Configure your metrics carefully—OSPF uses cost based on bandwidth, EIGRP uses bandwidth and delay. Wrong metrics send traffic down slow backup links instead of fast primary connections.
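To see why metrics deserve attention, here is a sketch of the commonly documented OSPF cost calculation on Cisco IOS: reference bandwidth divided by interface bandwidth, truncated, with a minimum of 1. The 100 Mbps default reference is the usual Cisco default; other vendors may differ, so treat the numbers as an assumption to verify on your platform.

```python
def ospf_cost(interface_kbps, reference_kbps=100_000):
    """Approximate OSPF interface cost: reference / bandwidth, floored, minimum 1."""
    return max(1, reference_kbps // interface_kbps)


# With the 100 Mbps default reference, fast links all collapse to cost 1.
for label, kbps in [("10 Mbps", 10_000), ("100 Mbps", 100_000),
                    ("1 Gbps", 1_000_000), ("10 Gbps", 10_000_000)]:
    print(label, ospf_cost(kbps))

# Raising the reference to 100 Gbps lets OSPF tell 1 Gbps from 10 Gbps.
print(ospf_cost(1_000_000, reference_kbps=100_000_000))
print(ospf_cost(10_000_000, reference_kbps=100_000_000))
```

Under the default reference, a 1 Gbps backup link and a 10 Gbps primary look identical to OSPF, which is one way traffic ends up on the wrong path. On IOS the reference is adjusted with `auto-cost reference-bandwidth`, and it should be set consistently on every router in the area.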

Configuring VLANs on switches means creating them first (vlan 10, name Finance), then assigning switch ports. Access ports serve end devices and belong to one VLAN: switchport mode access, switchport access vlan 10. Links between switches need trunk mode carrying only the VLANs both sides actually use: switchport mode trunk, switchport trunk allowed vlan 10,20,30,40. Be specific about allowed VLANs, because "switchport trunk allowed vlan all" stretches every VLAN's broadcast domain across links that don't need it.
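If you generate these commands rather than typing them, consistency comes for free. The sketch below builds the Cisco-style lines quoted above from Python; the helper names and the interface names are hypothetical, and the output is only text to review, not something pushed to a device.

```python
def vlan_definition(vlan_id, name):
    """Lines that create a VLAN and name it."""
    return [f"vlan {vlan_id}", f" name {name}"]


def access_port(interface, vlan_id):
    """Lines that put one port into a single VLAN as an access port."""
    return [
        f"interface {interface}",
        " switchport mode access",
        f" switchport access vlan {vlan_id}",
    ]


def trunk_port(interface, allowed_vlans):
    """Lines that make a port a trunk carrying only the listed VLANs."""
    vlan_list = ",".join(str(v) for v in sorted(allowed_vlans))
    return [
        f"interface {interface}",
        " switchport mode trunk",
        f" switchport trunk allowed vlan {vlan_list}",
    ]


# Interface names here are placeholders; use the ones on your hardware.
config = (
    vlan_definition(10, "Finance")
    + access_port("GigabitEthernet1/0/5", 10)
    + trunk_port("GigabitEthernet1/0/48", [40, 10, 30, 20])
)
print("\n".join(config))
```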

QoS becomes critical when bandwidth fills up. Without it, someone's file backup chokes your video conference. Configure classification to identify traffic types—maybe matching DSCP values or examining port numbers. Then create queuing policies giving voice traffic priority, video second priority, bulk transfers lowest. Test under actual load conditions because theoretical QoS configs often need tweaking when real traffic patterns hit them.

Security Configuration Best Practices

Change every default credential immediately, not tomorrow, now. Create passwords with 15+ characters that mix cases, numbers, and symbols, and generate them randomly rather than dressing up a dictionary word with substitutions (Tr0ub4dor&3-style passwords are easier to crack than they look). Use different passwords per privilege level. Store them in a proper password vault (LastPass Enterprise, 1Password Business, HashiCorp Vault), never in spreadsheets or sticky notes.

Disable services you're not actively using. Many devices enable HTTP, Telnet, CDP, LLDP, SNMPv1/v2 by default. Shut them down: no ip http server, no cdp run, transport input ssh (blocking Telnet). Each running service expands your attack surface. Only enable what you actually need: SSH for remote CLI access, HTTPS if you use web management, SNMPv3 with strong authentication if you're monitoring.
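A quick way to verify this hardening is to check a saved configuration for the lines you expect. The following is a deliberately simplified sketch: it only looks for exact lines matching the commands quoted above, and real running-configs vary by platform and may hide defaults, so treat it as a starting point rather than a full audit. The function name and sample config are assumptions.

```python
# Hardening lines taken from the commands mentioned above.
REQUIRED_LINES = ("no ip http server", "no cdp run", "transport input ssh")


def missing_hardening(config_text, required=REQUIRED_LINES):
    """Return the required lines that do not appear anywhere in the config text."""
    present = {line.strip() for line in config_text.splitlines()}
    return [line for line in required if line not in present]


# Hypothetical saved configuration for illustration.
sample_config = """
hostname ATL-B3-SW-Core-01
no ip http server
line vty 0 4
 transport input ssh
"""

print(missing_hardening(sample_config))
```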

Access control lists restrict who can reach management interfaces. Create an ACL permitting only your management subnet: access-list 99 permit 10.3.1.0 0.0.0.255. Apply it to VTY lines (for SSH access) and management interfaces. Attempts from other networks hit the implicit deny at the end of the list; add an explicit deny entry with the log keyword if you want those attempts recorded, which reveals scanning activity early.
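The wildcard mask in that ACL is simply the inverse of the subnet mask, and Python's standard `ipaddress` module can derive it. Here is a short sketch that builds the ACL line from CIDR notation and tests whether a source address would match; the function names are hypothetical.

```python
import ipaddress


def acl_permit_line(acl_number, cidr):
    """Build a standard ACL permit line, converting the prefix to a wildcard mask."""
    net = ipaddress.ip_network(cidr)
    return f"access-list {acl_number} permit {net.network_address} {net.hostmask}"


def is_permitted(source_ip, cidr):
    """True if the source address falls inside the permitted subnet."""
    return ipaddress.ip_address(source_ip) in ipaddress.ip_network(cidr)


print(acl_permit_line(99, "10.3.1.0/24"))
print(is_permitted("10.3.1.25", "10.3.1.0/24"))
print(is_permitted("10.1.1.25", "10.3.1.0/24"))
```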

Logging captures everything worth knowing later. Enable local logging but also configure remote syslog servers—local logs vanish during power failures or get overwritten. Send logs to centralized collectors: logging host 10.3.1.100. Set appropriate severity levels—debugging fills disks fast, informational catches most useful events. Include timestamps and source interfaces so you know exactly when and where events happened.
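Severity thresholds are easier to reason about once you remember that lower numbers are more severe and a threshold includes everything at or above its severity. The sketch below uses the eight standard syslog levels with the names Cisco uses for them; the function name is an assumption.

```python
# Standard syslog severities 0-7, using the Cisco keyword for each level.
SEVERITIES = [
    "emergencies", "alerts", "critical", "errors",
    "warnings", "notifications", "informational", "debugging",
]


def is_forwarded(message_level, threshold):
    """True if a message at message_level passes a logging threshold."""
    return SEVERITIES.index(message_level) <= SEVERITIES.index(threshold)


# With an "informational" threshold, errors are sent but debug output is not.
print(is_forwarded("errors", "informational"))
print(is_forwarded("debugging", "informational"))
```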

Back up configurations before changing anything. Manual method: copy running-config to a TFTP server. Better approach: use your network device manager's automated backup scheduling. Store configs in version control (Git repositories work great) with meaningful commit messages: "Added VLAN 45 for new IoT devices - Ticket #2847." When changes break things, you can revert to known-good configurations in seconds instead of rebuilding from memory.

Tools for Discovering and Managing Network Devices

Network device discovery tools scan your infrastructure finding devices automatically. They probe IP ranges with pings and SNMP queries, examine switch MAC address tables for downstream devices, analyze ARP caches showing recent connections. Discovery eliminates the manual spreadsheet approach where devices appear, disappear, and nobody notices until something breaks.

Modern discovery platforms combine multiple techniques simultaneously. Active scanning sends packets to potential device addresses—fast but visible in logs. Passive monitoring watches existing traffic identifying devices without generating new packets—stealthy but slower. Agent-based discovery installs software on endpoints reporting back inventory details—comprehensive but requires deployment effort. Use all three methods together for complete visibility into your actual device population.

| Tool | Primary Capabilities | Compatible Equipment | Cost Structure | Ideal Use Case |
| --- | --- | --- | --- | --- |
| SolarWinds Network Discovery | Automated discovery, visual topology maps, SNMP polling | Cisco, Juniper, HP, Dell, Arista, others | Perpetual license (~$3K) or annual subscription (~$1.2K/year) | Enterprises with 100+ devices needing deep visibility |
| ManageEngine OpManager | Auto-discovery, health monitoring, config archiving | Supports 1000+ device manufacturers | Tiered subscriptions starting $715 for 25 devices | Organizations watching budgets carefully |
| Auvik | Cloud-delivered discovery, automatic documentation, alerting | Strong multi-vendor support, SMB-focused | Monthly per-device (~$4-6/device/month) | MSPs managing multiple client networks remotely |
| PRTG Network Monitor | Agentless discovery, sensor-based monitoring | Broad multi-vendor compatibility | Free edition up to 100 sensors, paid starts ~$1.7K | Small-to-medium networks wanting quick setup |
| Netbox | Open-source IPAM/DCIM, API-driven discovery | Vendor-neutral design | Free (self-hosted, your infrastructure costs) | Teams with development skills wanting customization |

Network device manager platforms centralize configuration tasks across your entire infrastructure. Instead of SSH-ing into 40 switches individually making the same change, you push updates from one interface. Templates define standard configurations with variables for device-specific values—{{HOSTNAME}}, {{MGMT_IP}}, {{LOCATION}}. Compliance scanning compares running configs against approved baselines, flagging unauthorized deviations. Some platforms automatically remediate drift, restoring approved configurations when changes occur outside the change management process.
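The template idea is simple enough to sketch in a few lines. The following Python fills `{{NAME}}` placeholders from a dictionary and refuses to render if a value is missing. It is a minimal illustration, not how any particular platform implements templating, and the template text, interface name, and values are hypothetical.

```python
import re

PLACEHOLDER = re.compile(r"\{\{(\w+)\}\}")


def render(template, values):
    """Replace each {{NAME}} placeholder; raise KeyError if a value is missing."""
    def substitute(match):
        key = match.group(1)
        if key not in values:
            raise KeyError(f"no value supplied for placeholder {key}")
        return values[key]
    return PLACEHOLDER.sub(substitute, template)


# Hypothetical baseline template using the placeholders named above.
TEMPLATE = (
    "hostname {{HOSTNAME}}\n"
    "interface Vlan80\n"
    " ip address {{MGMT_IP}} 255.255.255.0\n"
    "snmp-server location {{LOCATION}}"
)

print(render(TEMPLATE, {
    "HOSTNAME": "ATL-B3-SW-Core-01",
    "MGMT_IP": "10.3.1.11",
    "LOCATION": "Atlanta Building 3",
}))
```

Failing loudly on a missing value is a deliberate choice here: a half-rendered config pushed to 40 switches is worse than no config at all.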

Configuration management features save massive time. Need to update SNMP community strings across 100 devices? Template-based provisioning does it in minutes. Wondering which switches still allow Telnet access? Compliance checks find them instantly. Someone made undocumented changes Friday night? Automated detection catches it Saturday morning.

Network device monitoring extends beyond simple ping tests. Effective monitoring tracks interface utilization (that uplink hitting 90% consistently needs upgrading), error counters (CRC errors indicate cable problems), resource consumption (CPU spiking to 100% suggests routing table issues or attacks), and environmental sensors (temperature alarms warn about HVAC failures before equipment overheats). Set thresholds triggering alerts before minor issues cascade into outages. Historical metrics reveal trends: an uplink at 50% utilization that grows 5 percentage points a month will cross 80% in six months, so you can plan the upgrade now.

Common Configuration Mistakes to Avoid

Weak passwords and default credentials remain embarrassingly common even in 2025. Attackers run automated scans checking for admin/admin, cisco/cisco, public SNMP communities. I've seen enterprise routers with "Password1" protecting management access. Using identical passwords across all devices? One compromised switch hands over your entire infrastructure. Generate unique 15-character passwords per device. Better yet, implement certificate-based authentication eliminating password vulnerabilities entirely.

Skipping configuration backups invites disaster. Devices fail suddenly: power supply death, flash corruption, lightning strikes. Configurations get accidentally erased: "write erase" entered on the wrong console window, a typo in a script. Without backups, you're manually rebuilding from incomplete documentation (if you're lucky) or from memory (if you're not). Automated backup solutions cost almost nothing compared to the recovery time they prevent. Configure backups before problems strike, not after.

VLAN configuration mistakes cause both security breaches and performance degradation. Leaving all ports in the default VLAN creates zero segmentation—your guest WiFi sees your finance database traffic. Misconfiguring trunk ports leaks VLANs where they shouldn't go—suddenly VLAN 10 appears on a switch where it was never intended. Voice VLANs configured incorrectly add latency destroying call quality. Testing VLAN isolation thoroughly matters—connect devices to different VLANs and verify they cannot communicate without routing through your firewall.

Documentation gaps turn institutional knowledge into single-person dependencies. The engineer who configured your core routers leaves for a new job, taking their understanding with them. Suddenly nobody knows why that specific static route exists or which VLAN serves the building automation system. Document not just what settings exist but the reasoning behind them: "VLAN 47 created for building HVAC controllers - isolated due to poor vendor security practices - see security assessment SA-2024-089." Include network diagrams, IP spreadsheets, VLAN assignments, and change histories accessible to your entire team.

Firmware updates get ignored until breaches occur. Manufacturers constantly patch vulnerabilities—buffer overflows allowing remote code execution, authentication bypasses granting unauthorized access, denial-of-service flaws crashing devices. Establish firmware lifecycle management: subscribe to vendor security bulletins, test updates in lab environments (never test directly in production), schedule maintenance windows for upgrades, maintain rollback procedures if updates cause unexpected problems. Balance security needs against stability—apply critical security patches quickly, minor feature updates less urgently.

Logging and monitoring neglect prevents early problem detection. Configuration changes, failed authentication attempts, interface state flaps—these signals warn about developing issues. Without monitoring, you learn about problems from user complaints: "The network is slow" or "I can't access the database." By then, minor issues have escalated into major outages. Configure comprehensive logging before issues arise, feeding logs into centralized collectors with alerting rules. When unusual patterns appear, you'll know immediately instead of discovering problems hours later.

Maintaining and Monitoring Configured Devices

Ongoing monitoring keeps your configured devices performing as designed. Network device monitoring platforms track availability (is it responding?), performance metrics (how heavily loaded?), and configuration state (has anything changed?). Establish baseline metrics during normal operation—CPU typically runs 25%, interfaces average 40% utilization, memory consumption sits around 60%. Configure alerts for significant deviations: CPU exceeds 75%, interface utilization tops 80%, memory climbs above 85%. These thresholds catch problems while you can still respond proactively.
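Those alert thresholds translate directly into a check you could run against each polling sample. This is a minimal sketch using the example limits from the paragraph above; the metric names and the sample values are assumptions, and a real monitoring platform would add things like hysteresis and repeat suppression.

```python
# Alert limits from the example above, in percent.
THRESHOLDS = {"cpu_pct": 75, "interface_util_pct": 80, "memory_pct": 85}


def breaches(sample, thresholds=THRESHOLDS):
    """Return the sorted names of metrics in the sample that exceed their limit."""
    return sorted(
        name for name, limit in thresholds.items()
        if sample.get(name, 0) > limit
    )


# Hypothetical polling sample for one device.
print(breaches({"cpu_pct": 92, "interface_util_pct": 41, "memory_pct": 88}))
print(breaches({"cpu_pct": 25, "interface_util_pct": 40, "memory_pct": 60}))
```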

Performance monitoring predicts capacity problems before users suffer. Graph interface utilization weekly and you'll spot trends: that 1Gbps uplink averaging 400Mbps last month now hits 650Mbps. Continue that trajectory, adding roughly 250Mbps a month, and you'll saturate the link in under two months. Upgrade proactively instead of reactively. Memory usage trends reveal leaks or insufficient resources: gradual climbs from 40% to 90% over weeks suggest memory leaks that call for firmware updates or hardware upgrades. Temperature monitoring prevents thermal shutdowns in poorly ventilated equipment rooms: alerts at 65°C give time to investigate cooling problems before devices protect themselves by shutting down at 75°C.
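The capacity estimate is worth doing as arithmetic rather than by eye. The sketch below assumes simple straight-line growth between two monthly averages, which is a crude model; the function name is hypothetical, and real traffic rarely grows this evenly.

```python
def months_to_saturation(previous_mbps, current_mbps, capacity_mbps):
    """Months until the link fills, assuming the last month's growth repeats linearly.

    Returns None when traffic is flat or shrinking.
    """
    growth = current_mbps - previous_mbps
    if growth <= 0:
        return None
    return (capacity_mbps - current_mbps) / growth


# The uplink example from the text: 400 Mbps last month, 650 Mbps now, 1 Gbps link.
print(months_to_saturation(400, 650, 1000))
```

With the figures from the uplink example, a linear projection leaves about a month and a half of headroom, so the estimate is quite sensitive to the growth model you pick. Use more than two data points before committing budget.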

Configuration backup strategies need redundancy. Automated nightly backups capture routine changes with zero manual effort—schedule them for 2 AM when traffic drops. Manual backups immediately before major changes provide known-good restoration points—about to reconfigure your entire OSPF topology? Back up first so you can revert if things break. Store backups in multiple locations—local file server in your data center, cloud storage bucket in AWS/Azure, maybe even an off-site office. Retention policies should keep daily backups for 30 days, weekly backups for 90 days, monthly backups for a year. Test restoration quarterly because untested backups are theoretical backups offering false confidence.
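A retention policy like that can be expressed as a small keep-or-delete rule. In this sketch I assume, purely for illustration, that the "weekly" backup is the one taken on Sunday and the "monthly" backup is the one taken on the first of the month; the function name is hypothetical.

```python
from datetime import date


def keep_backup(backup_date, today):
    """Apply the 30-day daily / 90-day weekly / 1-year monthly retention rule."""
    age = (today - backup_date).days
    if age <= 30:
        return True                      # every daily backup from the last 30 days
    if age <= 90 and backup_date.weekday() == 6:
        return True                      # Sunday backups count as the weekly copy
    if age <= 365 and backup_date.day == 1:
        return True                      # first-of-month backups count as monthly
    return False


today = date(2025, 6, 30)
for d in [date(2025, 6, 15), date(2025, 5, 4), date(2025, 5, 6),
          date(2025, 2, 1), date(2024, 6, 1)]:
    print(d, keep_backup(d, today))
```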

Change management processes prevent configuration chaos. Require written change requests documenting what changes, why it's necessary, detailed implementation steps, rollback procedures if things break, and testing verification. Implement peer review for critical changes—another engineer reviews your planned commands before you execute them. Schedule changes during maintenance windows (Tuesday 2-4 AM beats Friday 5 PM). Maintain detailed change logs recording timestamps, administrator identities, and actual commands executed. Audit trails prove invaluable during troubleshooting and compliance reviews.

Configuration drift happens gradually. An administrator makes a "temporary" change that becomes permanent. Someone adjusts settings during troubleshooting and forgets to revert. Devices get partially reconfigured and nobody documents it. Drift detection compares current running configurations against approved reference configurations, automatically flagging differences: "Router-Core-1 has ACL 150 not present in reference config" or "Switch-Floor2-3 VLAN 89 found but not documented." Review flagged changes—some are legitimate updates needing documentation, others are undocumented modifications requiring investigation.
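At its core, drift detection is a diff between two text files. Here is a minimal sketch using Python's standard `difflib`; commercial platforms do considerably more (ignoring timestamps, normalizing ordering), and the function name and sample configs are assumptions for illustration.

```python
import difflib


def config_drift(reference, running):
    """Return added ('+') and removed ('-') lines between reference and running configs."""
    ref_lines = [line.rstrip() for line in reference.splitlines()]
    run_lines = [line.rstrip() for line in running.splitlines()]
    diff = difflib.unified_diff(
        ref_lines, run_lines,
        fromfile="reference", tofile="running", lineterm="", n=0,
    )
    return [
        line for line in diff
        if line.startswith(("+", "-")) and not line.startswith(("+++", "---"))
    ]


# Hypothetical configs: the running config has picked up an undocumented ACL.
reference = "hostname Router-Core-1\naccess-list 99 permit 10.3.1.0 0.0.0.255"
running = reference + "\naccess-list 150 permit ip any any"

print(config_drift(reference, running))
```

An empty list means the device matches its baseline; anything else goes to a human for the "legitimate update or unauthorized change?" decision.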

Configuration audits verify security settings remain effective over time. Quarterly reviews should check: Are unused services still disabled? Do ACLs still restrict management access appropriately? Do passwords still meet complexity requirements? Are VLAN assignments current? Do routing tables contain obsolete entries? Have firewall rules accumulated unnecessary permits? Annual deep audits examine accumulated configuration debt—the gradual buildup of temporary changes, deprecated settings, and unnecessary complexity. Clean it up before it causes problems.

Firmware lifecycle management tracks software versions systematically. Maintain inventory showing current firmware per device, latest available version, and update status: "Router-1: running 15.6, current 15.9, update scheduled March 15" or "Switch-3: running 12.2.55, current 12.2.58, testing in lab." Test updates in lab environments mirroring production—same hardware models, similar configurations. Schedule updates during maintenance windows with rollback plans ready (keep old firmware images accessible, document downgrade procedures). Prioritize critical security updates over minor feature additions.
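Comparing versions as plain strings goes wrong quickly ("2.10.0" sorts before "2.9.9"), so compare them numerically. This sketch assumes purely numeric dotted versions like the examples above; real vendor strings with letters or parentheses would need a more careful parser. The function names are hypothetical.

```python
def parse_version(text):
    """Turn a dotted numeric version such as '12.2.55' into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))


def needs_update(running, latest):
    """True if the running version is older than the latest available one."""
    return parse_version(running) < parse_version(latest)


print(needs_update("15.6", "15.9"))
print(needs_update("12.2.55", "12.2.58"))
print(needs_update("2.10.0", "2.9.9"))
```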

Configuration management fails when organizations treat it as a one-time project instead of an ongoing discipline. I've investigated dozens of major network outages over my career, and the pattern repeats: configurations drift slowly without anyone noticing until something breaks catastrophically. The successful organizations treat configuration management like they treat security, with documented processes, regular audits, and automated monitoring catching problems early. It's not exciting work, but it's the difference between networks that work and networks that fail at the worst possible moments.

— Michael Torres


Network device configuration creates the foundation everything else builds on. Your infrastructure reliability, security posture, and performance characteristics all trace back to configuration quality. The difference between networks that work and networks that fail comes down to methodical planning, systematic execution, and disciplined ongoing maintenance.

Start with thorough preparation—comprehensive inventory documentation, accurate topology mapping, well-designed IP addressing schemes, and clearly defined security policies. These investments pay dividends throughout the configuration process and long afterward during troubleshooting and expansion. Develop configuration standards balancing security requirements with operational practicality. Templates and automation ensure consistency across similar devices while maintaining necessary flexibility for unique requirements.

The tools available in 2025 dramatically simplify tasks that once consumed weeks of administrative effort. Network device discovery tools maintain accurate inventories automatically, eliminating manual tracking spreadsheets. Management platforms enforce configuration standards, detect unauthorized drift, and accelerate bulk changes. Monitoring solutions alert you to developing problems before users notice impact. Leverage these tools, but remember they supplement rather than replace solid processes.

Avoid common pitfalls through disciplined habits: change default credentials immediately, back up configurations religiously before and after changes, document thoroughly including the reasoning behind decisions, and test changes in lab environments before production deployment. Treat configuration as living documentation evolving with your network rather than static settings applied once and forgotten.

Success requires viewing configuration as a continuous discipline rather than a one-time project. Regular audits catch accumulated configuration debt. Continuous monitoring reveals drift before it causes problems. Proactive firmware management patches vulnerabilities before exploitation. The organizations excelling at network device configuration don't necessarily deploy the most sophisticated tools; they maintain the most consistent processes and possess the discipline to follow them even when pressured to cut corners.

Your network's future reliability starts with decisions made during configuration today. Invest the time and attention these decisions deserve.

