How to Build Cloud Infrastructure

Building cloud infrastructure demands careful architectural planning, strategic tool selection, and disciplined execution. Whether you're establishing a private cloud environment for enterprise workloads or designing cloud-native applications from the ground up, the technical decisions you make today shape performance, security, and operational costs for years to come.

Organizations in 2026 increasingly recognize that properly designed cloud systems deliver advantages traditional data centers struggle to match—dynamic resource allocation, geographic distribution, and operational flexibility. Yet the journey from initial concept to production deployment involves dozens of interconnected choices affecting everything from monthly bills to system reliability.

This comprehensive guide examines the complete cloud building process, covering architectural foundations, strategic planning frameworks, hands-on deployment techniques, and lessons learned from real-world implementations to help you establish reliable, efficient cloud infrastructure.

Understanding Cloud Architecture Fundamentals

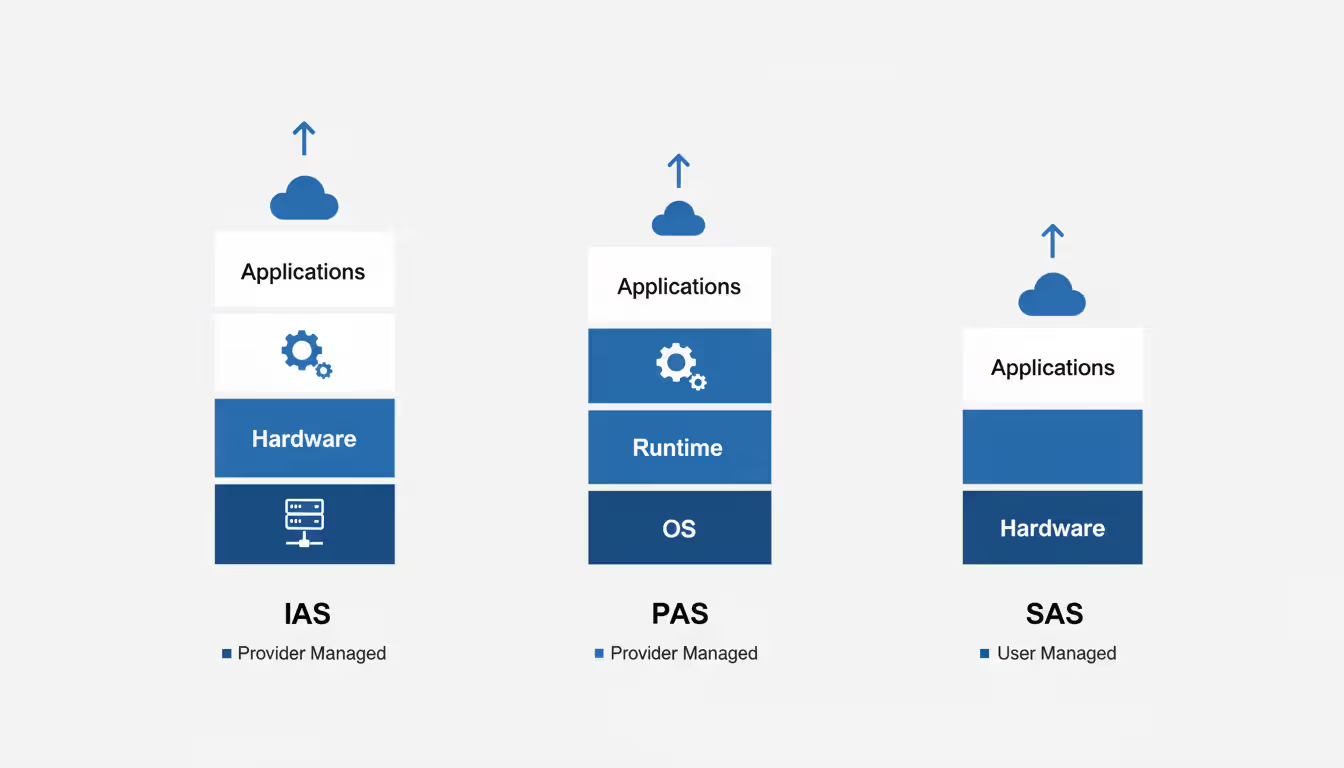

Modern cloud systems organize around three primary service models, each representing different management boundaries. With Infrastructure as a Service, you receive virtualized computing resources—virtual machines, storage volumes, and network components—while retaining responsibility for operating system configuration, application deployment, and security patches. Platform as a Service shifts infrastructure management to the provider, giving you managed runtime environments where you deploy code without configuring underlying servers. Software as a Service delivers complete applications through web interfaces, with providers handling all technical operations.

The deployment model you select fundamentally impacts control, compliance, and economics. Public cloud environments run on shared infrastructure operated by major providers, offering rapid scaling and minimal upfront investment. Private cloud deployments use dedicated infrastructure, whether on-premises or hosted, granting complete control over security policies and data location. Hybrid architectures combine both approaches, keeping compliance-sensitive workloads isolated while leveraging public cloud economics for general-purpose computing.

Every cloud environment assembles from common building blocks. Compute resources deliver processing power through various formats: traditional virtual machines, lightweight containers, or event-driven serverless functions. Storage systems span multiple types—object stores for unstructured data like images and backups, block storage providing low-latency volumes for databases, and file systems enabling shared access across multiple servers. Network infrastructure connects these components through virtual networks, subnets, traffic routing rules, load distribution systems, and access control barriers.

Mastering these fundamentals prevents costly architectural mistakes. Organizations that select Infrastructure as a Service when Platform as a Service better fits their needs end up managing operating systems and patches that add no business value. Companies that deploy workloads with strict data residency requirements into public clouds without first checking where the data will be stored may discover compliance violations only after migration completes.

Author: Vanessa Norwood

Source: clatsopcountygensoc.com

Planning Your Cloud Build Strategy

Successful cloud implementations begin with thorough requirements documentation. Quantify your performance expectations: request volumes per second, acceptable latency ranges, storage capacity projections, and data transfer patterns. Characterize workload behavior—steady-state traffic versus unpredictable spikes, CPU-intensive processing versus memory-heavy operations. These specifications drive instance selection, storage configuration, and scaling policies.
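
As a rough illustration of how these numbers feed sizing decisions, the sketch below estimates an instance count from a peak request rate. The function name, the per-instance throughput figure, and the 30 percent headroom default are assumptions made for illustration; real values should come from load testing your own workload.

```python
import math


def instances_needed(peak_rps: float, rps_per_instance: float, headroom: float = 0.3) -> int:
    """Estimate how many instances cover a peak request rate.

    headroom is the fraction of each instance's capacity kept in reserve
    (hypothetical default of 30 percent).
    """
    if rps_per_instance <= 0:
        raise ValueError("rps_per_instance must be positive")
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in the range [0, 1)")
    usable_rps = rps_per_instance * (1 - headroom)
    # Always keep at least one instance, even for tiny workloads.
    return max(1, math.ceil(peak_rps / usable_rps))
```

For example, a peak of 1,000 requests per second against instances that each sustain 100 requests per second needs 20 instances when half of each instance's capacity is held in reserve.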

Deployment model selection depends on factors beyond technical capabilities. Regulatory frameworks often mandate specific data handling practices—healthcare organizations operating under HIPAA restrictions or financial services companies meeting PCI-DSS standards may need private infrastructure components. Budget realities matter significantly: public clouds minimize upfront expenditure but can generate surprising bills without diligent cost management, while private clouds require substantial capital investment but offer predictable long-term economics at scale.

Application assessment reveals which systems adapt well to cloud environments versus requiring significant refactoring. Legacy monolithic applications with tightly coupled components and local state dependencies often struggle in distributed cloud environments. Stateless applications designed for horizontal scaling transition smoothly. Batch processing systems, development and testing environments, and modern web applications generally make excellent cloud migration candidates.

Security architecture requires upfront planning, not reactive patches. Establish your identity management framework—defining which users and services can access specific resources under what conditions. Develop network security policies encompassing firewall configurations, encryption standards for data at rest and in transit, and comprehensive audit logging requirements. Leading organizations embed security controls directly into infrastructure templates, ensuring consistent protection across all deployments.

Consider this practical scenario: a mid-sized e-commerce platform serves 10,000 simultaneous users during peak shopping periods, requires database response times under 50 milliseconds, and handles payment data subject to PCI compliance. Its analysis points toward a hybrid architecture: public cloud resources handle web tier scaling, dedicated private infrastructure processes payment transactions, and encrypted VPN tunnels connect the two environments.

Setting Up Your Cloud Server Environment

Cloud server deployment starts with selecting your provider or, for private clouds, procuring physical hardware. Public cloud platforms enable rapid deployment—launch servers within minutes through web consoles or API calls. Private cloud implementations require hardware installation, hypervisor deployment, and network configuration before provisioning your first virtual machine.

When working with public cloud providers, establish your account and immediately configure spending alerts. Unexpected bills can derail a project as surely as technical challenges. Structure your organizational hierarchy using accounts, subscriptions, or projects depending on platform conventions. Implement resource tagging standards from day one, enabling accurate cost allocation by team, environment, or business unit.
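
A tagging standard is easier to enforce when it can be checked automatically. The following minimal sketch reports which required tags a resource is missing; the tag names in REQUIRED_TAGS and the function name are hypothetical and stand in for whatever your own standard defines.

```python
# Hypothetical tagging standard: every resource must carry these keys.
REQUIRED_TAGS = {"team", "environment", "business-unit"}


def missing_tags(resource_tags: dict[str, str]) -> set[str]:
    """Return the required tag keys that are absent or have blank values."""
    present = {key.lower() for key, value in resource_tags.items() if value.strip()}
    return REQUIRED_TAGS - present
```

A check like this could run in a deployment pipeline so that untagged resources are rejected before they start accruing cost.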

Instance type selection requires matching workload characteristics to available options. General-purpose instances balance CPU and memory for web applications and small databases. Compute-optimized variants suit CPU-intensive tasks like video encoding or scientific simulations. Memory-optimized choices handle large in-memory databases or caching systems. Storage-optimized instances excel at data warehousing or high-volume log processing.

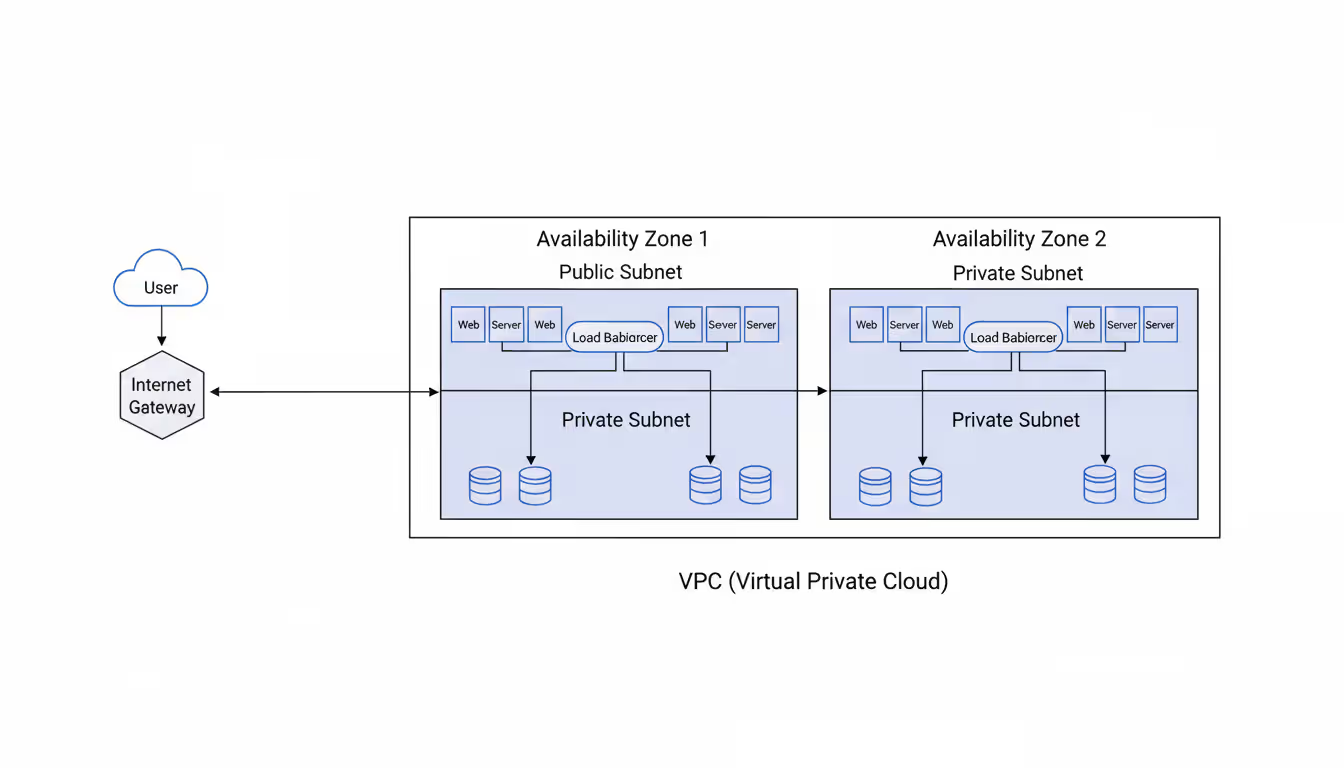

Network architecture establishes communication channels and security boundaries. Begin by creating a Virtual Private Cloud or equivalent network container, then subdivide it into logical segments—public subnets for internet-facing components, private subnets for backend systems. Establish routing policies controlling traffic between subnets and external networks. Deploy internet gateways enabling public access and NAT gateways allowing private resources to initiate outbound connections.
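
The subdivision step can be sketched with Python's standard ipaddress module. This example carves one public and one private subnet per zone out of a network block; the function name, the zone labels, and the /24 subnet size are assumptions for illustration, not any provider's API.

```python
import ipaddress


def plan_subnets(vpc_cidr: str, zones: list[str], new_prefix: int = 24) -> dict[str, dict[str, str]]:
    """Assign one public and one private subnet per zone from a VPC CIDR block."""
    network = ipaddress.ip_network(vpc_cidr)
    # subnets() yields equal-sized, non-overlapping blocks in address order.
    blocks = network.subnets(new_prefix=new_prefix)
    plan = {}
    for zone in zones:
        plan[zone] = {"public": str(next(blocks)), "private": str(next(blocks))}
    return plan
```

Calling plan_subnets("10.0.0.0/16", ["zone-a", "zone-b"]) assigns 10.0.0.0/24 and 10.0.1.0/24 to the first zone and 10.0.2.0/24 and 10.0.3.0/24 to the second.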

Virtual firewall rules function as traffic control mechanisms at the instance level. Start with restrictive policies, opening only essential ports. A web server requires port 443 for HTTPS traffic and potentially port 80 for HTTP, but should never expose SSH access (port 22) to the entire internet. Implement jump boxes or VPN tunnels for administrative access to production systems.
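
One way to catch the SSH mistake described above is to audit rules before they are applied. The sketch below flags inbound rules that open an administrative port to every address; the IngressRule type and function name are hypothetical, and a real audit would read rules from your provider's API or your infrastructure templates.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class IngressRule:
    """Hypothetical, simplified inbound firewall rule."""
    port: int
    source_cidr: str


ADMIN_PORTS = {22}  # SSH; extend with other administrative ports as needed


def world_open_admin_rules(rules: list[IngressRule]) -> list[IngressRule]:
    """Return rules that expose an administrative port to the entire internet."""
    findings = []
    for rule in rules:
        source = ipaddress.ip_network(rule.source_cidr)
        # A prefix length of 0 (0.0.0.0/0 or ::/0) matches every address.
        if source.prefixlen == 0 and rule.port in ADMIN_PORTS:
            findings.append(rule)
    return findings
```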

Storage provisioning demands understanding performance tradeoffs. Block storage delivers high-performance volumes for databases and application data requiring low latency. Object storage provides economical, massively scalable repositories for files, backups, and static website content. File storage offers network-accessible filesystems when multiple servers need concurrent access to shared data.

Here's a concrete implementation example: Building a web application environment involves creating a VPC spanning two availability zones for redundancy, establishing public and private subnets in each zone, launching web server instances in public subnets behind an application load balancer, deploying database servers in private subnets, and configuring firewall rules permitting HTTPS from any source to the load balancer, load balancer to web servers on port 8080, and web servers to database on port 5432.
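
The firewall rules in this example form a chain in which each tier accepts traffic only from the tier in front of it. A minimal sketch of that policy as data, using hypothetical tier names, might look like this:

```python
# Allowed flows from the example: (source tier, destination tier) -> port.
# Tier names are illustrative labels, not provider resource names.
ALLOWED_FLOWS = {
    ("internet", "load_balancer"): 443,   # HTTPS from any source
    ("load_balancer", "web"): 8080,       # load balancer to web servers
    ("web", "database"): 5432,            # web servers to database
}


def is_allowed(source: str, destination: str, port: int) -> bool:
    """Return True only if the flow matches an explicitly allowed rule."""
    return ALLOWED_FLOWS.get((source, destination)) == port
```

Anything not listed is denied by default, so is_allowed("internet", "database", 5432) returns False even though the database listens on that port.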

Building Cloud Applications Step by Step

Cloud application development diverges from traditional approaches in architecture patterns, deployment workflows, and operational characteristics. Begin by selecting a runtime and framework aligned with your team's expertise and application requirements. Popular choices including Node.js, Python with Flask or Django, Java with Spring Boot, and .NET all support cloud deployment effectively.

Architectural decisions profoundly impact future scalability and maintainability. Monolithic applications consolidate all functionality into single deployable artifacts—simpler for initial development but challenging to scale or update. Microservices architectures decompose functionality into independent services that scale and deploy separately. This distributed approach introduces complexity but provides flexibility essential for large applications with distinct functional boundaries.

Container technology packages applications with their dependencies into portable units executing consistently across environments. Write a Dockerfile specifying your application's runtime environment, required libraries, and startup sequence. Build container images, validate locally, then publish to a container registry. This packaging consistency eliminates environment-specific deployment failures.

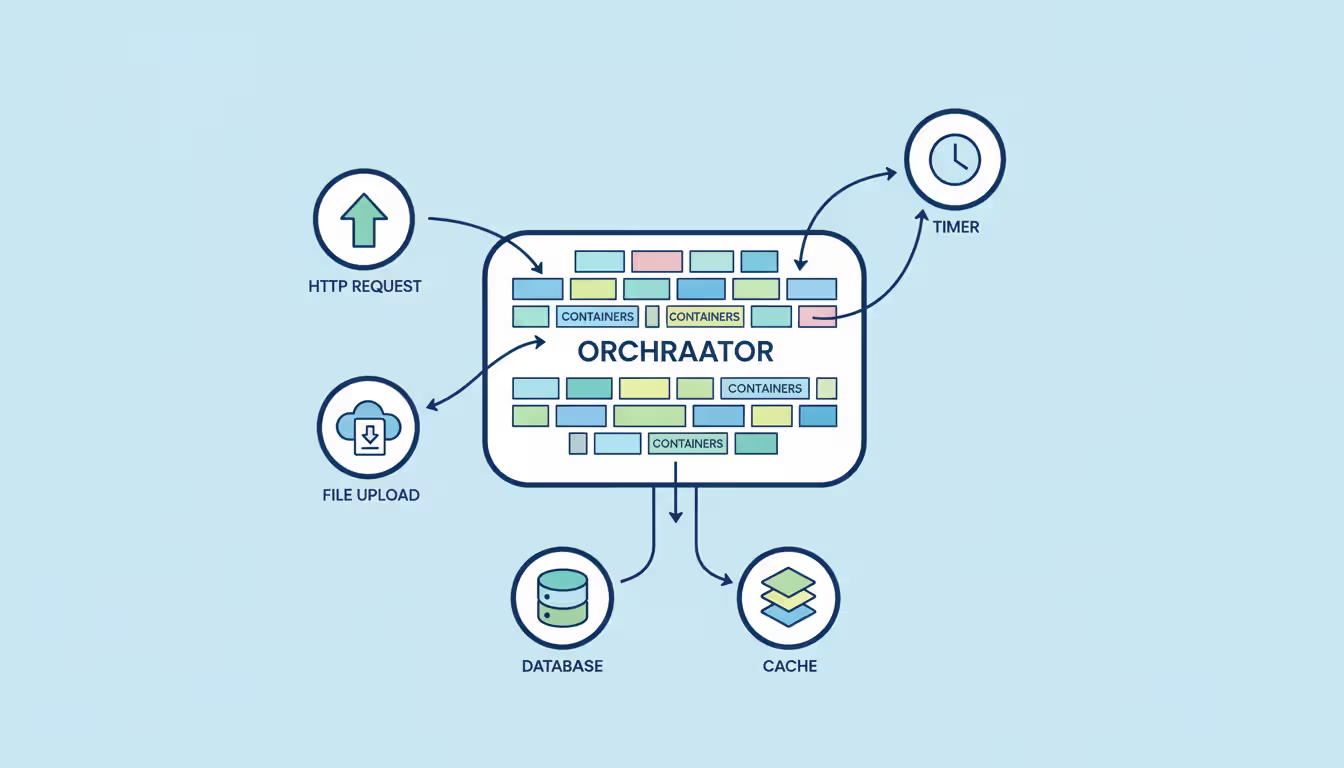

Container orchestration platforms manage containerized applications at scale, handling deployment, scaling, and operational management across server clusters. In Kubernetes, you express application components as resources: Deployments manage stateless applications, StatefulSets handle databases requiring stable identities, Services provide networking, and Ingress resources, implemented by an ingress controller, route external traffic. The orchestrator automatically handles container placement, health monitoring, and failed instance replacement.

API design determines how application components communicate. RESTful APIs leveraging standard HTTP methods provide simplicity and broad tooling support. GraphQL offers flexible querying capabilities for complex frontend data requirements. gRPC delivers high-performance binary protocols for service-to-service communication. Select based on actual requirements rather than industry trends.

Building Cloud Native Applications

Cloud native applications embrace distributed system characteristics from inception rather than adapting traditional applications. They implement microservices patterns enabling independent scaling and deployment of functional components. They externalize state to databases or distributed caches rather than storing it in memory or on local disks. They expose health check endpoints allowing orchestrators to detect and replace unhealthy instances automatically.
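
A health check endpoint can be very small. The sketch below uses only Python's standard library; the /healthz path and the dependencies_ok flag are assumptions for illustration, and a real service would replace the flag with actual checks such as a database ping.

```python
import json
from http.server import BaseHTTPRequestHandler


def health_status(dependencies_ok: bool) -> tuple[int, dict]:
    """Map the service's state to an HTTP status code and a JSON body."""
    if dependencies_ok:
        return 200, {"status": "ok"}
    return 503, {"status": "unavailable"}


class HealthHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        if self.path != "/healthz":  # hypothetical health check path
            self.send_error(404)
            return
        # Placeholder: always healthy. Replace with real dependency checks.
        code, body = health_status(dependencies_ok=True)
        payload = json.dumps(body).encode("utf-8")
        self.send_response(code)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)
```

To serve it, pass HealthHandler to http.server.HTTPServer and call serve_forever(). An orchestrator polling this path can treat any non-200 response as a signal to replace the instance.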

The twelve-factor methodology offers practical principles: store configuration in environment variables, treat logs as event streams written to standard output so the platform can route them to centralized collectors, execute applications as stateless processes, and keep development and production environments as similar as possible. Adhering to these guidelines produces applications that deploy consistently across cloud platforms and scale horizontally without code modifications.
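
Reading configuration from the environment can be sketched as follows. The variable names DATABASE_URL, LOG_LEVEL, and WORKER_COUNT and their defaults are hypothetical; the point is that the same code runs unchanged in every environment and only the variables differ.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    database_url: str
    log_level: str
    worker_count: int


def load_settings(env=None) -> Settings:
    """Build settings from environment variables (names are illustrative)."""
    env = os.environ if env is None else env
    return Settings(
        database_url=env["DATABASE_URL"],               # required: raises KeyError if unset
        log_level=env.get("LOG_LEVEL", "INFO"),         # optional, with a default
        worker_count=int(env.get("WORKER_COUNT", "2")),  # optional, parsed to int
    )
```

Accepting an optional mapping instead of reading os.environ directly keeps the function easy to test without touching the real process environment.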

Serverless computing represents the furthest evolution of cloud native design. Write individual functions executing in response to events—HTTP requests, database modifications, file uploads, or scheduled triggers. Cloud providers handle all infrastructure operations, scaling from zero to thousands of concurrent executions automatically. This model excels for event-driven workloads but introduces cold start latency and platform-specific dependencies.
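
The shape of a serverless function is typically a single handler that receives an event and returns a result. The sketch below handles a file upload notification; the event fields file_name and size_bytes and the response layout are assumptions for illustration, since each provider defines its own event formats.

```python
import json


def handler(event: dict, context: object = None) -> dict:
    """Handle one file-upload event and return an HTTP-style response.

    The event shape here is hypothetical; real platforms document their own.
    """
    name = event.get("file_name")
    size = event.get("size_bytes", 0)
    if not name:
        return {"statusCode": 400, "body": json.dumps({"error": "file_name is required"})}
    summary = {"file_name": name, "size_kib": round(size / 1024, 1)}
    return {"statusCode": 200, "body": json.dumps(summary)}
```

The handler keeps no state between invocations, which is what lets the platform run any number of copies in parallel.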

Migration vs Building from Scratch

Existing applications present a migration-versus-rebuild decision. Lift-and-shift approaches move applications to cloud infrastructure with minimal modification—virtual machines replace physical servers, cloud storage replaces storage area networks. This delivers quick wins but forgoes cloud-native benefits like elastic scaling and managed services.

Refactoring modifies applications to exploit cloud capabilities without complete rewrites. Replace self-managed databases with managed database services reducing operational overhead. Implement horizontal scaling support. Externalize configuration and session state. This balanced approach improves cloud alignment while controlling development investment.

Complete rebuilds make sense when legacy applications use obsolete technologies, carry significant technical debt, or require fundamental architectural changes. Starting fresh enables implementing modern patterns and technologies, but demands substantial time and resources. Evaluate whether benefits justify investment for your specific circumstances.

Tools and Platforms for Cloud Development

Platform selection influences available services, operational complexity, and long-term economics. The leading providers each offer distinct advantages.

| Provider | Pricing Approach | Usability | Optimal Scenarios | Key Services | Adoption Difficulty |
| --- | --- | --- | --- | --- | --- |
| AWS | Usage-based billing, capacity reservations, volume discounts | Moderate complexity with extensive choices | Enterprise systems, startups, comprehensive service requirements | CloudFormation templates, container services, serverless computing, 200+ service catalog | Initially challenging, extensive documentation available |
| Azure | Consumption-based pricing, reserved capacity, Windows licensing benefits | Strong Microsoft ecosystem integration | Windows-based workloads, enterprises using Microsoft technologies, hybrid scenarios | ARM Templates, integrated DevOps platform, Active Directory integration | Moderate learning curve, intuitive for Microsoft-experienced teams |
| Google Cloud | Per-second metering, committed use reductions | Clean, developer-friendly interface | Data analytics, machine learning workloads, container-based applications | Deployment Manager, managed Kubernetes, BigQuery analytics, advanced ML capabilities | Moderate difficulty, exceptional Kubernetes implementation |

Infrastructure as Code tools define infrastructure through version-controlled configuration rather than manual web console operations. Terraform operates across multiple cloud platforms using declarative configuration—describe your desired end state and Terraform determines the steps to achieve it. CloudFormation, ARM Templates, and Deployment Manager provide cloud-specific alternatives with tighter platform integration.
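
The declarative idea can be illustrated with a toy comparison of current and desired state. This is a simplified sketch of the concept only, not how Terraform or any other tool actually computes its plan; the resource names and attributes are made up.

```python
def plan(current: dict[str, dict], desired: dict[str, dict]) -> dict[str, list[str]]:
    """Compare current and desired resources and list the actions needed."""
    create = sorted(name for name in desired if name not in current)
    delete = sorted(name for name in current if name not in desired)
    update = sorted(
        name for name in desired
        if name in current and current[name] != desired[name]
    )
    return {"create": create, "update": update, "delete": delete}
```

Given a current state containing web (small) and old-cache, and a desired state containing web (medium) and db, the plan creates db, updates web, and deletes old-cache. You describe only the end state; the ordering of steps is derived.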

Continuous integration and deployment pipelines automate build, test, and release processes. Jenkins provides extensive flexibility and a large plugin ecosystem but requires operational management. GitLab CI/CD integrates directly with Git repositories for streamlined workflows. GitHub Actions triggers automated workflows from repository events. Platform-native options like AWS CodePipeline, Azure DevOps, and Google Cloud Build integrate closely with their respective ecosystems.

Monitoring systems provide visibility into application and infrastructure health. Prometheus collects time-series metrics from applications and infrastructure. Grafana renders those metrics through customizable visualization dashboards. Cloud platforms offer integrated monitoring—CloudWatch, Azure Monitor, Cloud Monitoring—automatically collecting metrics from platform services. Distributed tracing solutions like Jaeger track request flows across microservices architectures.

The biggest shift in cloud architecture over the past few years has been the move from infrastructure-centric to application-centric thinking. Teams that succeed in 2026 treat infrastructure as code, embrace automation throughout their deployment pipeline, and design for failure rather than trying to prevent it entirely.

— Sarah Chen

Common Mistakes When Building Cloud Infrastructure

Excessive resource provisioning burns money on idle capacity. Organizations commonly select oversized instances, run resources around the clock when they are needed only during business hours, or neglect to delete test environments after use. Right-size instances based on actual utilization data. Deploy automatic scaling to align capacity with demand patterns. Schedule non-production resources to run only during active hours.
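
The effect of scheduling is simple arithmetic. The sketch below computes the fraction of weekly running hours avoided by a schedule, assuming cost scales linearly with hours running (which ignores storage and other charges that continue while an instance is stopped); the function name is hypothetical.

```python
HOURS_PER_WEEK = 24 * 7  # 168


def schedule_savings_fraction(hours_per_day: float, days_per_week: int) -> float:
    """Fraction of always-on compute hours avoided by running on a schedule."""
    scheduled_hours = hours_per_day * days_per_week
    if not 0 <= scheduled_hours <= HOURS_PER_WEEK:
        raise ValueError("schedule must fit within one week")
    return 1 - scheduled_hours / HOURS_PER_WEEK
```

A development environment that runs 10 hours a day on 5 days a week uses 50 of 168 hours, so roughly 70 percent of its always-on compute hours are avoided.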

Security vulnerabilities emerge from configuration errors and excessive permissions. Common issues include unrestricted default firewall rules, unencrypted data storage, overly permissive identity policies, and absent audit trails. Apply least-privilege principles consistently—grant only minimum required permissions. Enable encryption by default across services. Segment networks logically. Regularly review access logs for anomalies.

Inadequate cost governance transforms cloud flexibility into financial chaos. Without proper oversight, expenses spiral as teams freely provision resources. Deploy comprehensive tagging policies enabling spending visibility by project or team. Establish budget alerts triggering at multiple thresholds. Conduct regular reviews identifying and terminating unused resources. Purchase reserved capacity or savings plans for predictable baseline workloads. Leverage spot instances for fault-tolerant batch processing.
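
Alerting at multiple thresholds can be sketched in a few lines. The 50, 80, and 100 percent thresholds and the function name are assumptions for illustration; cloud providers offer their own budget alert features that serve the same purpose.

```python
def crossed_thresholds(spend: float, budget: float, thresholds=(0.5, 0.8, 1.0)) -> list[float]:
    """Return every alert threshold that current spend has reached."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    ratio = spend / budget
    return [t for t in sorted(thresholds) if ratio >= t]
```

With a budget of 1,000 and spend of 850, the 50 and 80 percent alerts have fired and the 100 percent alert has not, which leaves time to act before the budget is exhausted.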

Insufficient disaster recovery preparation leaves organizations vulnerable to data loss and prolonged outages. Many focus exclusively on primary environment construction while neglecting backup and recovery capabilities. Define Recovery Time Objectives (how long a restoration may take) and Recovery Point Objectives (how much recent data you can afford to lose), then architect systems to meet those targets. Deploy automated backups, design for high availability across multiple geographic zones, and document and regularly rehearse restoration procedures.
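
Recovery objectives become useful once they are compared against measured values. In the sketch below, worst-case data loss is approximated by the interval between backups, and restore time is whatever your last recovery drill measured; the function name and the sample durations are assumptions.

```python
from datetime import timedelta


def meets_objectives(
    backup_interval: timedelta,
    measured_restore_time: timedelta,
    rpo: timedelta,
    rto: timedelta,
) -> dict[str, bool]:
    """Check a backup schedule and a measured restore against RPO and RTO."""
    return {
        # Data written just after a backup is lost if failure precedes the next one.
        "rpo_met": backup_interval <= rpo,
        "rto_met": measured_restore_time <= rto,
    }
```

For example, backups every 6 hours cannot satisfy a 1-hour Recovery Point Objective, no matter how quickly the restore itself completes.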

Excessive vendor dependence occurs when applications rely heavily on provider-specific services. While managed services offer operational convenience, they create significant migration barriers. Balance convenience against portability by preferring open standards where practical. Containerization and Kubernetes provide abstraction layers reducing infrastructure dependencies. Wrap provider-specific APIs behind internal interfaces when feasible, but avoid over-engineering in pursuit of theoretical portability you may never need.
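
Wrapping a provider API behind an internal interface might look like the following sketch. BlobStore, InMemoryBlobStore, and save_invoice are hypothetical names; a production implementation of the same interface would call your provider's object storage SDK.

```python
from typing import Protocol


class BlobStore(Protocol):
    """Internal interface that application code depends on."""

    def put(self, key: str, data: bytes) -> None: ...
    def get(self, key: str) -> bytes: ...


class InMemoryBlobStore:
    """Stand-in implementation, useful for tests and local development."""

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]


def save_invoice(store: BlobStore, invoice_id: str, pdf: bytes) -> str:
    """Application code sees only the interface, never a provider SDK."""
    key = f"invoices/{invoice_id}.pdf"
    store.put(key, pdf)
    return key
```

Switching providers then means writing one new class that satisfies BlobStore, rather than touching every call site. The interface is kept deliberately narrow to avoid the over-engineering the paragraph warns about.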

Inadequate pre-production testing causes avoidable deployment failures. Development environments frequently use smaller instances, different database engines, or simplified networking configurations that conceal problems until production deployment. Maintain staging environments mirroring production specifications closely. Implement blue-green or canary deployment patterns limiting failure impact. Apply chaos engineering practices validating resilience under adverse conditions.
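
A canary rollout needs a rule for deciding whether the new version is safe to promote. The sketch below compares error rates between the baseline and the canary; the thresholds, the minimum sample size, and the function name are all assumptions, and real systems usually consider latency and other signals as well.

```python
def canary_verdict(
    baseline_errors: int,
    baseline_requests: int,
    canary_errors: int,
    canary_requests: int,
    max_ratio: float = 1.5,
    min_requests: int = 100,
) -> str:
    """Return 'wait', 'promote', or 'rollback' for a canary release."""
    if canary_requests < min_requests:
        return "wait"  # not enough traffic yet to judge
    baseline_rate = baseline_errors / baseline_requests if baseline_requests else 0.0
    canary_rate = canary_errors / canary_requests
    # A small absolute floor keeps a near-zero baseline from failing every canary.
    allowed_rate = max(baseline_rate * max_ratio, 0.001)
    return "promote" if canary_rate <= allowed_rate else "rollback"
```

Because only a small share of traffic reaches the canary, a rollback verdict limits the impact of a bad release to that share.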

Conclusion

Building cloud infrastructure successfully combines technical knowledge, strategic planning, and hands-on experience. Success requires understanding foundational architecture patterns, selecting appropriate technologies and platforms, and avoiding common pitfalls that waste resources or introduce security vulnerabilities.

Begin with clearly defined requirements and realistic project scope. Select deployment models and service types matching your actual needs rather than following industry hype. Implement security and monitoring from initial deployment rather than adding them later. Embrace automation through infrastructure as code and continuous deployment pipelines to maintain consistency and reduce manual errors.

Cloud computing keeps evolving, with new services, patterns, and best practices emerging all the time. Build learning into your operational rhythm: experiment with emerging approaches in non-production environments, monitor provider announcements, and extract lessons from both successes and failures. Organizations thriving in cloud environments treat infrastructure as continuously improving systems rather than completed projects.

Whether you're constructing a private cloud for enterprise needs, developing cloud native applications, or deploying your initial cloud server, fundamental principles remain consistent: plan thoroughly, start with simplicity, automate relentlessly, and iterate based on real-world performance data. Well-designed cloud infrastructure provides the foundation for scalable, resilient applications adapting to evolving business requirements.
