
How to Implement Cloud Platform Integration?

Think of cloud platform integration as building bridges between islands of software. You've got Salesforce tracking your customer conversations, AWS running your web servers, Snowflake storing years of transaction data, and Slack buzzing with team messages. Each works fine alone, but together? That's where the magic happens—or the chaos, depending on your approach.

Here's the reality: connecting these systems means creating automated pathways for information to move between them. When a sales rep closes a deal in Salesforce, your accounting system should know about it instantly. When inventory drops below a threshold, your purchasing platform needs to trigger reorders without someone copying data into spreadsheets at 2 AM.

Most companies stumble into this need gradually. You start with one or two apps, then suddenly you're managing fifteen different platforms. Your marketing team can't see what the sales team is doing. Customer service reps waste five minutes per call pulling up information from four different screens. Engineers spend half their time manually transferring data instead of building features.

Real-world scenarios show why this matters. E-commerce sites connect Stripe for payments, ShipStation for logistics, and QuickBooks for accounting—all updating simultaneously when orders arrive. Medical practices link patient portals to scheduling systems, electronic records, and insurance verification tools. Manufacturing operations feed sensor data from factory floors into analytics dashboards that predict equipment failures before they happen.

The technical approach varies wildly. Sometimes you're making direct API calls between two services. Other times you need middleware translating between incompatible formats—like hiring an interpreter for systems that speak different languages. The core goal stays constant: eliminate the manual busywork that wastes your team's time and introduces mistakes.

Author: Vanessa Norwood;

Source: clatsopcountygensoc.com

Cloud Platform Architecture for Integration

Integration architecture sounds intimidating, but it's really about answering one question: how do these systems talk to each other?

Most setups break down into three levels. Your applications live at the top—that's where actual business stuff happens. The integration layer sits in the middle, handling the messy work of translating requests and routing messages. At the bottom, databases and storage keep everything persistent. Think of it like a building: offices on top, mail room in the middle, archives in the basement.

APIs have become the standard language for these conversations. REST APIs won the popularity contest because they piggyback on regular HTTP—the same protocol your browser uses. Send a GET request, receive some JSON data. Simple enough that a developer can test it with basic command-line tools.
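To make that concrete, here is a minimal Python sketch that uses only the standard library. It starts a throwaway local server that stands in for a real REST API, then issues a GET and parses the JSON response. The `/orders/42` path and its payload are invented for illustration.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class OrderHandler(BaseHTTPRequestHandler):
    """Hypothetical stand-in for a real REST service."""

    def do_GET(self):
        body = json.dumps({"id": 42, "status": "shipped"}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence per-request logging for the demo


def get_json(url: str) -> dict:
    """Send a GET request and decode the JSON body."""
    req = urllib.request.Request(url, headers={"Accept": "application/json"})
    with urllib.request.urlopen(req, timeout=5) as resp:
        return json.loads(resp.read().decode("utf-8"))


if __name__ == "__main__":
    server = HTTPServer(("127.0.0.1", 0), OrderHandler)  # port 0 = pick any free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    port = server.server_address[1]
    print(get_json(f"http://127.0.0.1:{port}/orders/42"))  # {'id': 42, 'status': 'shipped'}
    server.shutdown()
```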

GraphQL offers more flexibility for specific situations. Instead of calling five different endpoints to gather related data, you write one query that pulls exactly what you need. Facebook built it to reduce mobile app bandwidth, and it's particularly useful when clients need customized data structures.

Middleware acts as the traffic cop directing data flow. Enterprise service buses (ESB) excel at complex routing—"if this field equals X, send to System A; otherwise, route to System B and C." They can get bloated though. Lighter options like RabbitMQ or Apache Kafka focus on high-speed message passing without the overhead.
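The ESB routing rule quoted above is content-based routing. Here is a minimal Python sketch of it. The `region` field and the destination names are hypothetical, and a real ESB would normally express this rule in its own configuration rather than in application code.

```python
from typing import Callable, List, Tuple

Message = dict
# Each rule pairs a predicate on the message with the destinations it selects.
Rule = Tuple[Callable[[Message], bool], List[str]]


def route(message: Message, rules: List[Rule], default: List[str]) -> List[str]:
    """Return the destinations of the first matching rule, else the default."""
    for matches, destinations in rules:
        if matches(message):
            return destinations
    return default


# "If this field equals X, send to System A; otherwise route to System B and C."
RULES: List[Rule] = [
    (lambda m: m.get("region") == "X", ["system_a"]),
]

if __name__ == "__main__":
    print(route({"region": "X"}, RULES, default=["system_b", "system_c"]))  # ['system_a']
    print(route({"region": "Y"}, RULES, default=["system_b", "system_c"]))  # ['system_b', 'system_c']
```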

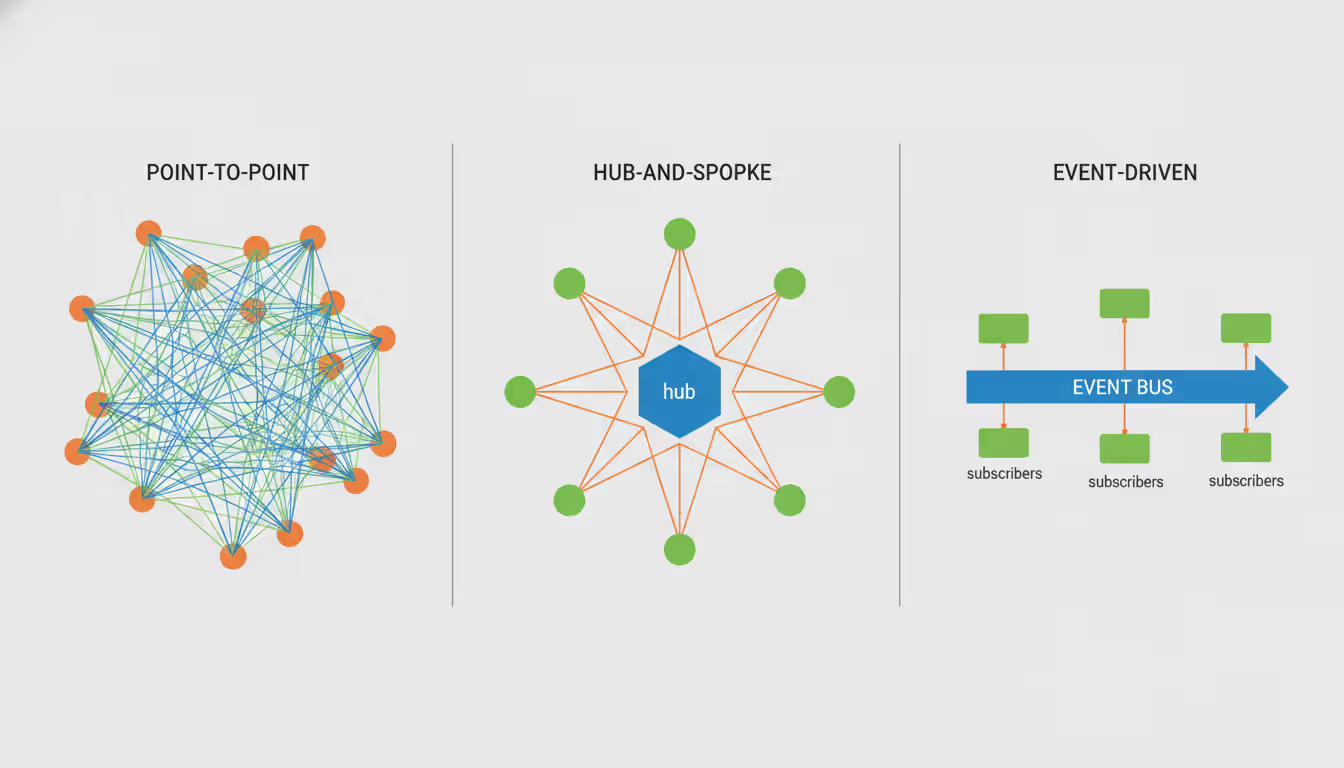

Connection patterns shape your entire architecture. Point-to-point seems easiest initially: just wire System A directly to System B. But connect ten systems this way and you've got forty-five integration points to maintain. A single system's API change forces updates to every connection that touches it (nine, in the ten-system case), and routine changes across systems quickly add up to dozens of updates.
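Here is the arithmetic behind that forty-five. With n systems wired point-to-point, every pair needs its own link, which gives n(n-1)/2 connections. Hub-and-spoke needs only one link per system. A quick Python check, using illustrative function names:

```python
def point_to_point_links(n: int) -> int:
    """Every pair of systems gets its own integration: n choose 2."""
    return n * (n - 1) // 2


def hub_and_spoke_links(n: int) -> int:
    """Each system connects once, to the central hub."""
    return n


def links_touched_by_one_change(n: int) -> int:
    """In point-to-point, one system's API change affects its n - 1 peers."""
    return n - 1


if __name__ == "__main__":
    for n in (5, 10, 20):
        print(n, point_to_point_links(n), hub_and_spoke_links(n), links_touched_by_one_change(n))
    # 5 10 5 4
    # 10 45 10 9
    # 20 190 20 19
```

Going from ten to twenty systems raises the point-to-point count from 45 to 190. Hub-and-spoke only doubles, from 10 to 20.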

Hub-and-spoke centralizes everything through a single integration hub. Updates happen in one place, monitoring gets simpler, but that hub becomes a potential failure point and performance bottleneck.

Event-driven architectures flip the script entirely. Systems broadcast "hey, something happened" messages to whoever's listening. Subscribers react if they care, ignore it if they don't. This loose coupling means you can add new systems without modifying existing ones—they just subscribe to relevant events.
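Here is a minimal in-memory sketch of the publish/subscribe idea in Python. The `EventBus` class and the event names are hypothetical. In production, a broker such as Kafka, RabbitMQ, or a managed cloud event service plays this role and adds durability, so events survive crashes.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List

Handler = Callable[[dict], None]


class EventBus:
    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        """Register interest; the publisher never needs to know about this."""
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> int:
        """Deliver to every subscriber of event_type; return how many received it."""
        handlers = self._subscribers.get(event_type, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)


if __name__ == "__main__":
    bus = EventBus()
    billing, support = [], []
    bus.subscribe("customer.email_changed", billing.append)
    bus.subscribe("customer.email_changed", support.append)
    delivered = bus.publish("customer.email_changed", {"customer_id": 7, "email": "new@example.com"})
    print(delivered)  # 2
```

Adding a third system later takes one more `subscribe` call. The publisher does not change.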

Common Integration Architecture Patterns

The aggregator pattern solves a common problem: pulling together scattered data. Imagine building a financial dashboard showing your total net worth. You need checking account balances from Chase, savings from Ally, investments from Vanguard, and crypto from Coinbase. The aggregator calls each API, converts everything to a common currency if needed, handles any calls that time out, and presents one consolidated number.
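Here is a minimal sketch of that aggregator using Python's `asyncio`. The three fetch functions are hypothetical stand-ins for bank APIs. The sleeps simulate latency, and one source deliberately hangs. The exchange rates are hard-coded assumptions. Any source that exceeds the timeout is reported as missing instead of failing the whole dashboard.

```python
import asyncio

# Hypothetical fixed rates into USD; a real aggregator would fetch live rates.
RATES_TO_USD = {"USD": 1.0, "EUR": 1.10}


async def fetch_checking() -> dict:
    await asyncio.sleep(0.01)  # simulated network call
    return {"amount": 2500.0, "currency": "USD"}


async def fetch_savings() -> dict:
    await asyncio.sleep(0.01)
    return {"amount": 1000.0, "currency": "EUR"}


async def fetch_crypto() -> dict:
    await asyncio.sleep(5)  # simulated provider that hangs
    return {"amount": 300.0, "currency": "USD"}


async def call_with_timeout(name, fetch, timeout):
    """Return (name, balance), or (name, None) if the source times out."""
    try:
        return name, await asyncio.wait_for(fetch(), timeout)
    except asyncio.TimeoutError:
        return name, None


async def aggregate(sources: dict, timeout: float = 0.5) -> dict:
    # Query all sources concurrently so one slow API doesn't hold up the rest.
    results = await asyncio.gather(
        *(call_with_timeout(name, fetch, timeout) for name, fetch in sources.items())
    )
    total, missing = 0.0, []
    for name, balance in results:
        if balance is None:
            missing.append(name)
            continue
        total += balance["amount"] * RATES_TO_USD[balance["currency"]]
    return {"total_usd": round(total, 2), "missing": missing}


if __name__ == "__main__":
    sources = {"checking": fetch_checking, "savings": fetch_savings, "crypto": fetch_crypto}
    print(asyncio.run(aggregate(sources, timeout=0.2)))  # {'total_usd': 3600.0, 'missing': ['crypto']}
```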

Gateway patterns simplify client-side code dramatically. Your mobile app makes one call to the gateway instead of ten calls to different microservices. The gateway fans out those requests, waits for responses, combines the results, and sends back a single payload. As a bonus, you centralize authentication, rate limiting, and caching logic that would otherwise clutter every service.

Adapter patterns rescue you from legacy system nightmares. That mainframe from 1987 doesn't speak REST, but it's running critical processes you can't replace. An adapter wraps it with a modern API—accepting HTTP requests and translating them into whatever archaic protocol the mainframe expects. You preserve the investment while enabling integration with contemporary systems.
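Here is an adapter in miniature, in Python. The `LegacyMainframe` class, its `INQ` command, and its fixed-width record layout are all invented for illustration. The adapter hides that layout behind a clean, dictionary-shaped interface, which an HTTP handler could then serve as JSON.

```python
from typing import Optional


class LegacyMainframe:
    """Stand-in for a legacy system that only speaks fixed-width text records.

    Hypothetical layout: id in cols 0-7, name in cols 8-27,
    balance in cents (zero-padded) in cols 28-37.
    """

    def execute(self, command: str) -> str:
        if command == "INQ CUST0001":
            return "CUST0001" + "JANE DOE".ljust(20) + "0000012550"
        return ""  # empty record means "not found"


class CustomerAdapter:
    """Modern interface over the legacy protocol."""

    def __init__(self, mainframe: LegacyMainframe) -> None:
        self._mainframe = mainframe

    def get_customer(self, customer_id: str) -> Optional[dict]:
        record = self._mainframe.execute(f"INQ {customer_id}")
        if not record:
            return None
        return {
            "id": record[0:8],
            "name": record[8:28].rstrip(),
            "balance": int(record[28:38]) / 100,  # cents -> currency units
        }


if __name__ == "__main__":
    adapter = CustomerAdapter(LegacyMainframe())
    print(adapter.get_customer("CUST0001"))  # {'id': 'CUST0001', 'name': 'JANE DOE', 'balance': 125.5}
```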

Orchestrator patterns coordinate multi-step processes. Processing a mortgage application requires credit checks, employment verification, income documentation, property appraisal, and fraud screening. An orchestrator manages the sequence, handles branching logic (if credit score below 640, request additional documentation), and rolls back previous steps if something fails midway through.
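This coordinate-then-roll-back behavior is often implemented as a saga. Each step pairs an action with a compensating action. When a step fails, the orchestrator runs the compensations for the completed steps in reverse order. Here is a minimal Python sketch. The mortgage steps are hypothetical, and only the 640 threshold comes from the text above.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    name: str
    action: Callable[[dict], None]
    compensate: Callable[[dict], None]


def run_workflow(steps: list, ctx: dict) -> dict:
    """Run steps in order; on failure, undo completed steps in reverse order."""
    completed = []
    for step in steps:
        try:
            step.action(ctx)
        except Exception as exc:
            ctx["log"].append(f"{step.name} failed: {exc}")
            for done in reversed(completed):
                done.compensate(ctx)
                ctx["log"].append(f"undo {done.name}")
            ctx["status"] = "rolled_back"
            return ctx
        completed.append(step)
        ctx["log"].append(f"{step.name} ok")
    ctx["status"] = "ready_for_underwriting"
    return ctx


# Hypothetical mortgage steps.
def credit_check(ctx):
    if ctx["applicant"]["credit_score"] < 640:
        ctx["needs_more_documents"] = True  # branching rule from the text


def book_appraisal(ctx):
    ctx["appraisal_booked"] = True


def cancel_appraisal(ctx):
    ctx["appraisal_booked"] = False


def fraud_screen(ctx):
    if ctx["applicant"].get("flagged"):
        raise RuntimeError("fraud screen rejected application")


def nothing_to_undo(ctx):
    pass


MORTGAGE_STEPS = [
    Step("credit_check", credit_check, nothing_to_undo),
    Step("appraisal", book_appraisal, cancel_appraisal),
    Step("fraud_screen", fraud_screen, nothing_to_undo),
]

if __name__ == "__main__":
    ok = run_workflow(MORTGAGE_STEPS, {"applicant": {"credit_score": 610}, "log": []})
    print(ok["status"], ok.get("needs_more_documents"))  # ready_for_underwriting True
    bad = run_workflow(MORTGAGE_STEPS, {"applicant": {"credit_score": 700, "flagged": True}, "log": []})
    print(bad["status"], bad["appraisal_booked"])  # rolled_back False
```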

Microservices vs Monolithic Integration Approaches

Monolithic integration platforms put everything in one box. You configure workflows through a unified interface, deploy as a single application, and monitor from one dashboard. Setup goes faster initially—you're not wiring together distributed components. Scaling means upgrading the entire platform though, even if only one integration is hitting limits. When it breaks, everything breaks together.

Microservices split functionality into independent components. Authentication runs separately from data transformation, which runs separately from message routing. You can scale the authentication service horizontally without touching anything else. Teams deploy updates to individual services without coordinating release schedules. The downside? Complexity explodes. You're debugging issues across network boundaries, managing service discovery, and implementing distributed tracing just to understand what's happening.

Most real-world implementations land somewhere between extremes. Critical integrations handling payment processing might run as isolated microservices—too important to risk sharing resources. Less demanding integrations share a common platform for efficiency. You're balancing operational overhead against flexibility and isolation.

How to Plan Your Cloud Platform Migration Strategy

Start by actually knowing what you have. Document every application, database, file share, scheduled job, and integration currently running. You'd be surprised how many companies discover "shadow IT" during this phase—systems nobody remembered existed until the inventory.

Classify everything by importance and difficulty. Your payment processor crashes and revenue stops immediately—that's critical. The monthly report generator fails and someone notices next week—less critical. Some applications migrate easily because they're already containerized and stateless. Others have hardcoded server IP addresses and will fight you every step.

Migration patterns provide proven playbooks. "Lift and shift" means moving virtual machines to the cloud with minimal changes—fast but wasteful since you're not leveraging cloud capabilities. Replatforming involves modest updates, like swapping your self-managed MySQL for AWS RDS. Refactoring rebuilds applications to use auto-scaling, serverless functions, and managed services. Repurchasing trades custom apps for SaaS alternatives. Retiring eliminates applications nobody uses anymore (there are always a few).

Integration planning prevents migration disasters. Map every data flow—System A queries System B's database directly, System C calls System D's API, System E shares files with System F. Moving one system breaks connections with everything it touches.

When you migrate a database to the cloud, connection strings change. Firewall rules need updates. Authentication might switch from Windows integrated auth to certificate-based. Every system connecting to that database needs coordinated updates. You have two options. You can switch all of them over together during a maintenance window. Or you can run the old and new databases in parallel, synchronizing data between them, while clients move over gradually.

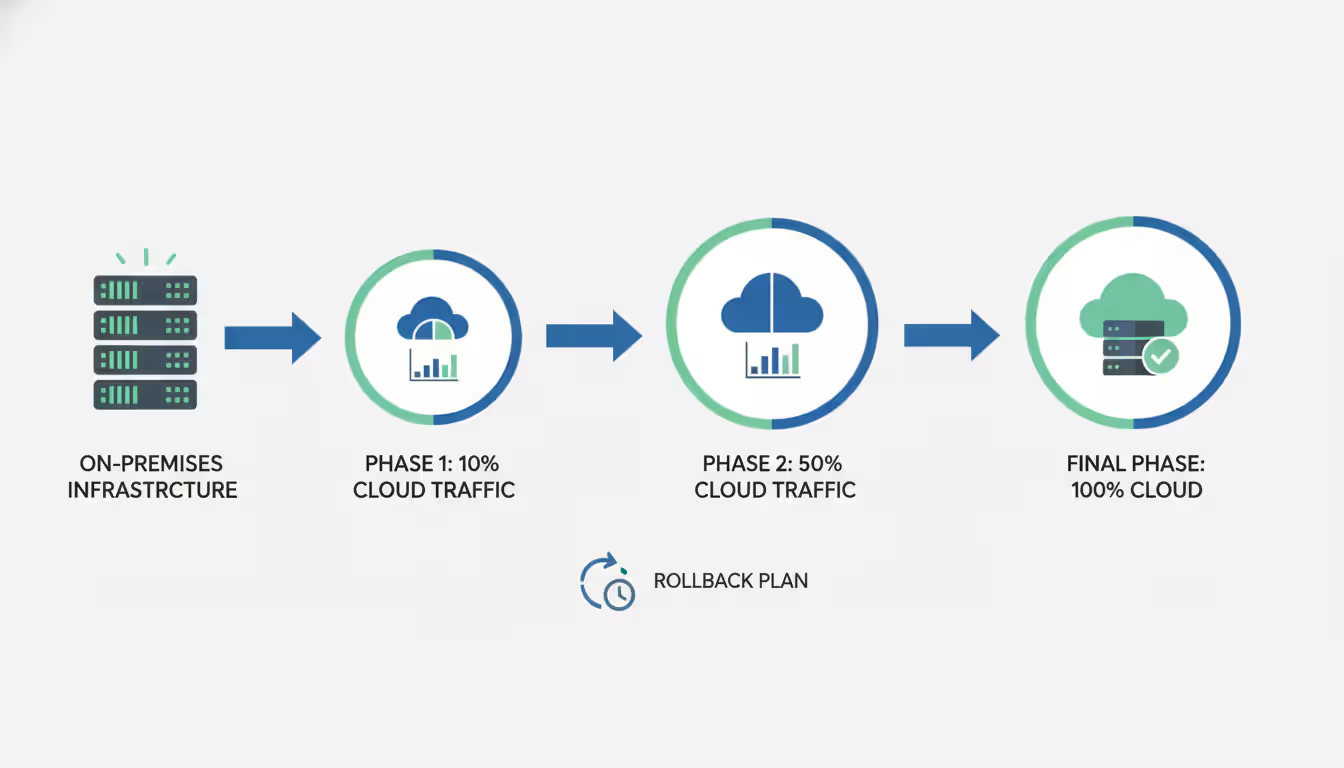

Phased approaches reduce risk dramatically. Move non-critical systems first. Your staging environment, internal wikis, development databases—break these and you've inconvenienced people, not lost customers. Build confidence and expertise before tackling production systems.

Keep legacy and cloud systems running together during transitions. Implement two-way synchronization so data changes flow both directions. Gradually shift traffic percentages—10% of users hit the cloud version while 90% stay on legacy. Monitor error rates and performance. Bump to 25%, then 50%, then 100% as confidence grows.
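Gradual traffic shifting needs a stable way to decide which users go where. Otherwise the same user could bounce between legacy and cloud on every request. Here is a minimal Python sketch, assuming each user has a string ID: hash the ID into one of 100 buckets, and send buckets below the current percentage to the cloud. The function names are hypothetical. In practice, a load balancer or feature-flag service often handles this.

```python
import hashlib


def bucket(user_id: str) -> int:
    """Map a user to a stable bucket 0-99 using a hash of their ID."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def use_cloud(user_id: str, cloud_percent: int) -> bool:
    """True if this user should hit the cloud version at the current rollout percentage."""
    return bucket(user_id) < cloud_percent


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(10_000)]
    for pct in (10, 25, 50, 100):
        share = sum(use_cloud(u, pct) for u in users) / len(users)
        print(f"{pct}% target -> {share:.1%} of users on cloud")
```

Each user's bucket never changes. Raising the percentage from 10 to 25 therefore only adds users to the cloud side, and nobody already migrated flips back to legacy.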

Rollback plans are insurance you hope to never use but need anyway. Document exact steps to revert each migration phase. Test rollbacks in non-production environments—you don't want to figure out the process during a 3 AM emergency. Keep backups of data and configurations until you're absolutely certain the migration succeeded.

Choosing the Best Cloud Platform for Your Integration Needs

Each major cloud provider approaches integration differently, reflecting their core strengths and historical focus.

| Cloud Platform | Native Integration Tools | API Support | Third-Party iPaaS Compatibility | Pricing Model | Best Use Case |
|---|---|---|---|---|---|
| AWS | EventBridge, Step Functions, AppFlow | Comprehensive REST APIs, AppSync for GraphQL | Excellent support from MuleSoft, Boomi, Workato | Per-execution pricing plus data transfer fees | Event-heavy architectures, complex multi-step workflows |
| Azure | Logic Apps, Service Bus, Event Grid | Full REST coverage with native Active Directory hooks | Strong Microsoft ecosystem partnerships | Choose consumption-based or fixed capacity plans | Organizations invested in Microsoft stack, hybrid deployments |
| Google Cloud | Pub/Sub, Cloud Functions, Workflows | RESTful APIs with excellent gRPC implementation | Growing partner network, improving coverage | Pay-per-use with sustained usage discounts | Data pipeline focus, ML-heavy workloads |
| Oracle Cloud | Integration Cloud, SOA Suite | REST and SOAP API support | Moderate partner ecosystem, Oracle-focused | Subscription bundles with other Oracle products | Heavy Oracle application users, database-centric operations |

AWS dominates the event-driven space. EventBridge is a fully managed, serverless event bus: it scales to high event volumes without any brokers for you to run. Step Functions provides visual workflow design that makes complex orchestrations manageable, since you can literally see the logic flow. AppFlow bridges SaaS applications like Salesforce without custom connectors. The partner ecosystem is mature. If you need to integrate with an obscure B2B platform, someone has probably built a connector already.

Azure shines for Microsoft shops. Logic Apps offers low-code workflow design with hundreds of pre-built connectors. The authentication story is particularly smooth: if you're already using Active Directory, everything just works. Service Bus provides enterprise messaging with transactions, ordered sessions, and duplicate detection. Delivery is at-least-once in its default peek-lock mode, so duplicate detection or idempotent consumers are what prevent a message from being processed twice. Hybrid scenarios that connect on-premises data centers with cloud resources are Azure's sweet spot, with ExpressRoute providing dedicated network connections.

Google Cloud built its integration tools around data movement and analytics. Pub/Sub excels at ingesting massive event streams—IoT sensor data, application logs, user activity tracking. Cloud Functions scales automatically to process those events. The real power emerges when feeding data into BigQuery for analysis or Vertex AI for machine learning. If your integration strategy centers on deriving insights from data, Google's tools align naturally.

Oracle Cloud targets a specific audience: organizations with significant Oracle software investments. Integration Cloud provides specialized adapters for Oracle ERP, HCM, and database products that understand Oracle's data structures natively. If you're running Oracle applications and gradually moving to the cloud, these purpose-built integrations smooth the transition considerably.

Selection depends on your starting point more than any objective "best." A company standardized on Microsoft 365, Azure AD, and .NET applications gains tremendous efficiency from Azure. A startup building from scratch might choose AWS for breadth. A data science team leveraging TensorFlow and BigQuery naturally gravitates toward Google Cloud.

Best Cloud Platform for Machine Learning Integration

Machine learning creates unique integration headaches because of data volumes, transformation complexity, and the need to serve predictions quickly.

Training models requires pulling data from everywhere. Transaction databases, clickstream logs, data lakes, third-party APIs, IoT sensors—anything that might contain useful signals. These sources rarely share common formats or update frequencies. Your pipeline needs to handle schema changes gracefully when source systems add fields or change data types.
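One way to absorb these schema changes is to validate each record against the schema you expect. Coerce types where possible, and surface unexpected fields instead of crashing. Here is a minimal Python sketch. `EXPECTED_SCHEMA` and the field names are hypothetical.

```python
from typing import Any, Dict, List, Optional, Tuple

# Hypothetical target schema for an orders feed: field -> expected type.
EXPECTED_SCHEMA = {"order_id": str, "amount": float, "country": str}


def normalize(record: Dict[str, Any]) -> Tuple[Dict[str, Optional[Any]], List[str]]:
    """Coerce known fields to expected types; report unknown (newly added) fields."""
    clean: Dict[str, Optional[Any]] = {}
    for field, expected_type in EXPECTED_SCHEMA.items():
        value = record.get(field)
        try:
            clean[field] = None if value is None else expected_type(value)
        except (TypeError, ValueError):
            clean[field] = None  # uncoercible value: null it rather than crash the pipeline
    new_fields = sorted(set(record) - set(EXPECTED_SCHEMA))
    return clean, new_fields


if __name__ == "__main__":
    # The source started sending amount as a string and added a coupon field.
    print(normalize({"order_id": 17, "amount": "12.50", "coupon": "SPRING"}))
    # ({'order_id': '17', 'amount': 12.5, 'country': None}, ['coupon'])
```

The pipeline keeps running and reports the new field, so someone can decide whether to adopt it. Nulling uncoercible values is only one policy. Routing such records to a quarantine table is a common alternative.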

Feature engineering transforms raw inputs into model-ready data. This often demands distributed processing—calculating rolling averages across billions of transactions won't fit on one machine. The transformation logic gets complicated quickly: aggregations, joins, window functions, custom calculations.

Deploying trained models creates different integration challenges. Real-time scoring might require millisecond response times. A fraud detection model sitting in the transaction approval flow can't take five seconds to respond. Batch prediction scenarios process millions of records overnight, requiring integration with data warehouses and reporting tools.

Google Cloud arguably offers the most integrated ML experience of the three. Vertex AI combines data prep, training, deployment, and monitoring in one platform. You can visualize lineage showing exactly how data flowed from source databases through transformations into training datasets. Dataflow pipelines handle both streaming and batch processing. BigQuery serves as the central data repository with built-in ML capabilities (BigQuery ML), so you can train models using SQL queries.

AWS provides maximum flexibility through SageMaker. You're not locked into specific tools: bring any framework, any algorithm, any custom processing. SageMaker Pipelines orchestrates workflows connecting data preprocessing, training, and deployment. EventBridge rules can trigger retraining automatically when new data arrives. Lambda functions handle lightweight inference, while dedicated SageMaker endpoints serve high-throughput scenarios. The learning curve is steeper, but the ceiling is higher.

Azure Machine Learning integrates tightly with Microsoft's data stack. Data Factory pipelines move data from on-premises SQL Server, Azure SQL Database, and dozens of other sources. Synapse Analytics combines data warehousing with Apache Spark for transformations that need serious compute power. Models deploy to Azure Kubernetes Service for scalable serving or Azure Functions for serverless execution.

The fatal mistake companies make with ML integration is treating it like a science fair project. They train a model, deploy it, and walk away. Real-world data drifts constantly. Your integration architecture needs continuous monitoring, automated retraining pipelines, and zero-downtime model updates. Otherwise, you're making predictions based on yesterday's patterns.

— Dr. Elena Vasquez
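One way to make continuous monitoring concrete is to compute a drift statistic for each feature. The sketch below uses the population stability index (PSI), a common choice but not the only one. It compares live values against the training distribution. The 0.2 alert threshold is a widely used rule of thumb, not a standard, so treat it as an assumption to tune.

```python
import math
from typing import List, Sequence


def _bin_shares(values: Sequence[float], lo: float, width: float, bins: int) -> List[float]:
    counts = [0] * bins
    for v in values:
        idx = int((v - lo) / width)
        counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values into edge bins
    # Floor each share so empty bins don't produce log(0) or division by zero.
    return [max(c / len(values), 1e-6) for c in counts]


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population stability index of `actual` relative to `expected` (equal-width bins)."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature
    e = _bin_shares(expected, lo, width, bins)
    a = _bin_shares(actual, lo, width, bins)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def needs_retraining(expected: Sequence[float], actual: Sequence[float], threshold: float = 0.2) -> bool:
    """Hypothetical trigger a retraining pipeline could poll."""
    return psi(expected, actual) > threshold
```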

Feature stores address a painful problem: keeping training and serving consistent. During training, you calculate features using batch jobs processing historical data. During serving, you need those same features computed in real-time from live data. Inconsistencies cause "training-serving skew" where models perform great in testing but fail in production.
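Feature stores do much more than this, including storage, point-in-time joins, and low-latency serving. The core idea, though, fits in a few lines: define the transformation once and call it from both the training path and the serving path. Here is a hypothetical Python sketch. The feature and field names are invented.

```python
from datetime import datetime, timedelta
from typing import Dict, Iterable, List


def avg_amount_7d(transactions: Iterable[dict], as_of: datetime) -> float:
    """Single feature definition: mean transaction amount in the 7 days before `as_of`."""
    start = as_of - timedelta(days=7)
    amounts = [t["amount"] for t in transactions if start <= t["ts"] < as_of]
    return sum(amounts) / len(amounts) if amounts else 0.0


def training_rows(history: List[dict], label_times: List[datetime]) -> List[Dict]:
    """Offline path: compute the feature as of each historical label time."""
    return [{"as_of": ts, "avg_amount_7d": avg_amount_7d(history, ts)} for ts in label_times]


def online_features(recent: List[dict], now: datetime) -> Dict[str, float]:
    """Online path: the same function, applied to live data at request time."""
    return {"avg_amount_7d": avg_amount_7d(recent, now)}
```

Both paths share `avg_amount_7d`. A change to the feature logic therefore reaches training and serving together, which is exactly the skew the text warns about.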

Google Vertex AI Feature Store, AWS SageMaker Feature Store, and Azure Machine Learning Feature Store solve this by centralizing feature definitions. You write the transformation logic once, and the platform handles serving features consistently for both training and inference. They manage the complex synchronization between offline batch computation and online real-time serving.

Data governance integration isn't optional anymore. You need to track which datasets trained which models for regulatory compliance. Access controls prevent sensitive data from leaking into models inappropriately. Audit logs demonstrate due diligence when regulators come asking questions.

Common Cloud Integration Challenges and Solutions

Security worries keep integration teams awake at night. Data crossing system boundaries creates attack surfaces. Developers accidentally commit API keys to GitHub repositories. Unencrypted connections expose customer information to anyone sniffing network traffic.

Authentication and authorization form your first line of defense. OAuth 2.0 handles delegated access without sharing passwords between systems. Service accounts get exactly the permissions they need and nothing more. Under this "principle of least privilege," a compromised integration can't trash your entire database. Secrets management services like AWS Secrets Manager, Azure Key Vault, and Google Secret Manager centralize access control and support scheduled credential rotation.
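Here is a minimal sketch of the OAuth 2.0 client-credentials grant (RFC 6749, section 4.4), a typical way for one service to authenticate to another without a user's password. It only builds the token request, using Python's standard library. The token URL and the `orders.read` scope are hypothetical placeholders. Requesting a narrow scope is how least privilege shows up on the wire.

```python
import base64
import urllib.parse
import urllib.request
from typing import Optional


def build_token_request(token_url: str, client_id: str, client_secret: str,
                        scope: Optional[str] = None) -> urllib.request.Request:
    """Build an OAuth 2.0 client-credentials token request (RFC 6749, section 4.4)."""
    form = {"grant_type": "client_credentials"}
    if scope:
        form["scope"] = scope  # ask only for the permissions this integration needs
    # RFC 6749 section 2.3.1: form-encode the ID and secret, then use HTTP Basic auth.
    user_pass = f"{urllib.parse.quote_plus(client_id)}:{urllib.parse.quote_plus(client_secret)}"
    basic = base64.b64encode(user_pass.encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        token_url,
        data=urllib.parse.urlencode(form).encode("ascii"),
        headers={
            "Authorization": f"Basic {basic}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
        method="POST",
    )
```

In a real integration, `client_secret` would be read at runtime from a secrets manager, or from an environment variable one populates, rather than hard-coded. Sending the request with `urllib.request.urlopen(req)` would return a JSON body containing an `access_token`, which you then send as a Bearer token on later API calls.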

Encryption protects data moving between systems and sitting in storage. Require at least TLS 1.2 for API connections, and prefer TLS 1.3 where both ends support it. SSL and TLS 1.0/1.1 have known vulnerabilities and are deprecated. Field-level encryption adds a second layer for particularly sensitive data. Even if attackers compromise the broader system, the credit card numbers remain encrypted with keys they don't have access to.
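In Python, the minimum TLS version for outbound API calls is a property of the `ssl.SSLContext`. Here is a minimal sketch. The function name is hypothetical, and the default minimum is TLS 1.2.

```python
import ssl


def make_client_context(minimum: ssl.TLSVersion = ssl.TLSVersion.TLSv1_2) -> ssl.SSLContext:
    """Client-side TLS settings for outbound API calls."""
    ctx = ssl.create_default_context()  # verifies server certificates and hostnames
    ctx.minimum_version = minimum  # refuse handshakes below this protocol version
    return ctx
```

Pass it as `urllib.request.urlopen(url, context=make_client_context())`. For a TLS 1.3-only policy, call `make_client_context(ssl.TLSVersion.TLSv1_3)`. Both ends must then support 1.3, or the handshake fails.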

Data synchronization creates fascinating problems. A customer updates their email address in your CRM. That change needs to propagate to your marketing platform, support ticketing system, and billing database. Sounds simple until network failures, processing delays, and concurrent updates complicate everything.

Event-driven approaches reduce synchronization complexity significantly. When the CRM receives an email update, it publishes an "email changed" event to a message broker. Interested systems subscribe to these events and update their local copies independently. This decouples systems—the CRM doesn't need to know which systems care about email changes. Messages queue during outages and get processed once subscribers recover.

Conflict resolution handles concurrent updates gracefully. "Last write wins" is simple but loses data when two systems update simultaneously. Vector clocks track causality to identify true conflicts. Application-specific logic might merge changes intelligently or flag conflicts for humans to resolve.
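Here is why vector clocks catch what last-write-wins misses, as a minimal Python sketch. Each clock maps a system name to a counter, and the system names are hypothetical. Two clocks are concurrent when neither is less than or equal to the other in every position. That is a genuine conflict, which needs merge logic or a human.

```python
from typing import Dict

Clock = Dict[str, int]


def tick(clock: Clock, node: str) -> Clock:
    """Return a copy of `clock` with `node`'s counter incremented (a local update)."""
    updated = dict(clock)
    updated[node] = updated.get(node, 0) + 1
    return updated


def merge(a: Clock, b: Clock) -> Clock:
    """Element-wise max: the clock after a node has seen both histories."""
    return {n: max(a.get(n, 0), b.get(n, 0)) for n in set(a) | set(b)}


def compare(a: Clock, b: Clock) -> str:
    """Return 'before', 'after', 'equal', or 'concurrent' (a true conflict)."""
    nodes = set(a) | set(b)
    a_le_b = all(a.get(n, 0) <= b.get(n, 0) for n in nodes)
    b_le_a = all(b.get(n, 0) <= a.get(n, 0) for n in nodes)
    if a_le_b and b_le_a:
        return "equal"
    if a_le_b:
        return "before"
    if b_le_a:
        return "after"
    return "concurrent"


if __name__ == "__main__":
    base = tick({}, "crm")                # email first set in the CRM
    crm_edit = tick(base, "crm")          # CRM changes it again
    billing_edit = tick(base, "billing")  # billing changes it without seeing the CRM edit
    print(compare(base, crm_edit))          # before
    print(compare(crm_edit, billing_edit))  # concurrent
```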

Latency problems affect time-sensitive integrations. An e-commerce checkout calling inventory checks, payment processing, tax calculation, and fraud detection accumulates delays from each call. Network distance between regions adds milliseconds that compound quickly.

Caching reduces calls to slow services. API gateways cache product catalog data that changes infrequently. Time-to-live settings balance freshness requirements against performance gains. Cache invalidation on updates maintains consistency without sacrificing speed.
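Here is a minimal Python sketch of a TTL cache with explicit invalidation. The `loader` callback stands in for the slow service, such as a hypothetical product-catalog lookup. The clock is injectable, so expiry can be tested without waiting.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """Cache loader results for `ttl_seconds`, with explicit invalidation on updates."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock
        self._entries: Dict[Hashable, Tuple[Any, float]] = {}  # key -> (value, expires_at)

    def get(self, key: Hashable, loader: Callable[[Hashable], Any]) -> Any:
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and now < entry[1]:
            return entry[0]  # fresh hit: skip the slow service
        value = loader(key)  # miss or expired: call the slow service
        self._entries[key] = (value, now + self.ttl)
        return value

    def invalidate(self, key: Hashable) -> None:
        """Call when the source changes so readers don't wait for the TTL to expire."""
        self._entries.pop(key, None)
```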

Parallel execution cuts total latency when operations don't depend on each other. Instead of calling inventory, then payment, then fraud sequentially—waiting for each to finish—invoke all three simultaneously and wait only for the slowest response.
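Here is a minimal Python sketch using `concurrent.futures`. The three check functions are hypothetical, and `time.sleep` stands in for network latency of 0.2 s, 0.3 s, and 0.1 s. Threads suit this case because the work is waiting on I/O, not computing.

```python
import time
from concurrent.futures import ThreadPoolExecutor


# Hypothetical checkout calls; sleep() stands in for network latency.
def check_inventory(order: dict) -> str:
    time.sleep(0.2)
    return "in_stock"


def authorize_payment(order: dict) -> str:
    time.sleep(0.3)
    return "authorized"


def screen_fraud(order: dict) -> str:
    time.sleep(0.1)
    return "low_risk"


CHECKS = {"inventory": check_inventory, "payment": authorize_payment, "fraud": screen_fraud}


def run_checks_in_parallel(order: dict) -> dict:
    """Start every independent check at once; total wait is roughly the slowest call."""
    with ThreadPoolExecutor(max_workers=len(CHECKS)) as pool:
        futures = {name: pool.submit(fn, order) for name, fn in CHECKS.items()}
        return {name: future.result() for name, future in futures.items()}


if __name__ == "__main__":
    start = time.perf_counter()
    print(run_checks_in_parallel({"order_id": 1001}))
    print(f"elapsed ~{time.perf_counter() - start:.2f}s")
```

Run sequentially, these calls take about 0.6 seconds. In parallel they take about 0.3 seconds, the duration of the slowest call.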

Circuit breakers prevent cascade failures. When a service becomes unresponsive, the circuit breaker stops sending requests after a failure threshold. This gives the struggling service breathing room to recover instead of drowning under retry attempts. Calling systems get fast failures instead of timing out slowly.
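Here is a minimal, single-threaded Python sketch of a circuit breaker. The thresholds are illustrative defaults, not recommendations, and the clock is injectable for testing. After `reset_timeout` seconds, the breaker lets a trial call through (the "half-open" state). Success closes the circuit; failure re-opens it. A production version would also need locking for concurrent callers.

```python
import time
from typing import Any, Callable, Optional


class CircuitOpenError(RuntimeError):
    """Raised immediately while the breaker is open (fail fast)."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means closed

    def call(self, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        if self.opened_at is not None and self.clock() - self.opened_at < self.reset_timeout:
            raise CircuitOpenError("circuit open; failing fast")
        # Either closed, or open long enough to allow a trial call ("half-open").
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # trip, or re-trip after a failed trial
            raise
        self.failures = 0
        self.opened_at = None  # success closes the circuit
        return result
```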

Vendor lock-in sneaks up gradually. You use AWS Step Functions for orchestration, Lambda for processing, and S3 for storage. Everything works great until you want to switch providers and realize the entire architecture assumes AWS-specific services.

Abstraction layers create portability. Your application interacts with a storage interface rather than calling S3 APIs directly. The implementation can swap between S3, Azure Blob Storage, or Google Cloud Storage without touching application code. You're trading some complexity upfront for flexibility later.
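Here is a minimal Python sketch of such an abstraction layer, in which application code depends only on the interface. `BlobStore`, `InMemoryBlobStore`, and `archive_invoice` are hypothetical names. `S3BlobStore` shows how an adapter would wrap a boto3 S3 client's `put_object` and `get_object` calls, but it is not exercised here.

```python
from typing import Any, Dict, Protocol


class BlobStore(Protocol):
    """What application code depends on, instead of a provider SDK."""

    def put(self, key: str, data: bytes) -> None: ...

    def get(self, key: str) -> bytes: ...


class InMemoryBlobStore:
    """Local/test implementation."""

    def __init__(self) -> None:
        self._blobs: Dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._blobs[key] = data

    def get(self, key: str) -> bytes:
        return self._blobs[key]


class S3BlobStore:
    """Adapter over a boto3 S3 client (passed in; not exercised in this sketch)."""

    def __init__(self, client: Any, bucket: str) -> None:
        self._client = client
        self._bucket = bucket

    def put(self, key: str, data: bytes) -> None:
        self._client.put_object(Bucket=self._bucket, Key=key, Body=data)

    def get(self, key: str) -> bytes:
        return self._client.get_object(Bucket=self._bucket, Key=key)["Body"].read()


def archive_invoice(store: BlobStore, invoice_id: str, pdf: bytes) -> str:
    """Application code: knows nothing about which cloud holds the bytes."""
    key = f"invoices/{invoice_id}.pdf"
    store.put(key, pdf)
    return key
```

Moving to Azure Blob Storage or Google Cloud Storage would mean writing one more adapter class with the same two methods. `archive_invoice` itself would not change.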

Open standards provide escape hatches. REST APIs work everywhere. Containerized applications run on any conformant Kubernetes cluster, whether EKS, AKS, or GKE. Terraform describes infrastructure for multiple providers in one configuration language, though the resource definitions themselves remain provider-specific.

Multi-cloud strategies sound appealing but multiply operational complexity. Managing integrations across AWS, Azure, and Google Cloud requires deep expertise in all three. Monitoring has to be unified across every platform. Security policies need consistent implementation despite different tools.

Cloud Integration Tools and Methods

Integration Platform as a Service (iPaaS) solutions bundle pre-built connectors, visual designers, and managed infrastructure. MuleSoft Anypoint Platform provides extensive connector libraries covering enterprise applications, databases, and cloud services. Boomi (formerly Dell Boomi) emphasizes drag-and-drop workflow design for non-developers. Workato calls its automation templates "recipes," emphasizing simplicity for business users.

iPaaS advantages include speed and reduced infrastructure burden. Pre-built connectors mean you're not writing Salesforce API integration code from scratch. Managed infrastructure eliminates server provisioning and scaling concerns. Centralized monitoring provides visibility across all integrations from one dashboard.

Disadvantages center on cost and flexibility. iPaaS pricing often scales with transaction volume or connection count, becoming expensive at high volumes. Customization limitations mean complex data transformations might require awkward workarounds. Vendor dependency creates friction if you outgrow the platform's capabilities or want to switch providers.

Native cloud tools integrate seamlessly within their ecosystems. AWS Step Functions orchestrates workflows using Lambda, ECS, SNS, and other AWS services with minimal configuration. Azure Logic Apps connects Microsoft services through built-in connectors with little setup. Google Cloud Workflows coordinates Google Cloud resources efficiently.

Native tools excel at intra-platform integrations. They leverage platform-specific features, such as IAM roles for authentication and CloudWatch for monitoring on AWS. Pricing follows the same consumption model as other platform services, so you pay per use rather than buying a separate integration license.

Limitations surface when integrating across cloud providers or with on-premises systems. Native tools often lack connectors for third-party SaaS applications. Cross-cloud integrations require custom code or additional middleware to bridge the gap.

Custom API development delivers maximum control. You build exactly the integration logic required without platform constraints. Performance optimization targets specific bottlenecks in your workflow. Proprietary business logic stays protected in code you control completely.

Custom development demands more investment upfront. Teams implement error handling, retry logic, authentication, monitoring, and documentation from scratch. Ongoing maintenance falls on internal staff—no vendor support when things break. Knowledge transfer becomes critical as team members change roles.
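Retry logic is one of the pieces custom code has to get right. Here is a minimal Python sketch of capped exponential backoff with "full jitter." The defaults and the set of retryable exceptions are assumptions to tune. Only idempotent operations should be retried this way.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def retry(fn: Callable[[], T], attempts: int = 5, base_delay: float = 0.5,
          max_delay: float = 8.0,
          retry_on: Tuple[Type[BaseException], ...] = (ConnectionError, TimeoutError),
          sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn, retrying transient errors with capped exponential backoff and full jitter."""
    if attempts < 1:
        raise ValueError("attempts must be >= 1")
    for attempt in range(attempts):
        try:
            return fn()
        except retry_on:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            cap = min(max_delay, base_delay * 2 ** attempt)  # 0.5, 1, 2, 4 with the defaults
            sleep(random.uniform(0, cap))  # jitter spreads out retries from many clients
    raise AssertionError("unreachable")
```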

Hybrid approaches combine methods based on each integration's characteristics. High-volume integrations between cloud services leverage native tools for efficiency and cost. B2B integrations with external partners use iPaaS connectors to accelerate development. Unique business processes with specialized logic justify custom development investment.

API management platforms like Apigee, Kong, and AWS API Gateway sit in front of integration endpoints. They handle authentication, rate limiting, analytics, and documentation without cluttering your integration code. Versioning support enables gradual migration to new API contracts. Developer portals help external partners understand and consume your APIs effectively.
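Rate limiting in gateways is commonly described in terms of a token bucket: clients may burst up to a capacity, and the bucket then refills at a steady rate. Here is a minimal Python sketch of that idea. It illustrates the algorithm, not how Apigee, Kong, or AWS API Gateway implement or configure it internally. The clock is injectable for testing.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)  # start full
        self.clock = clock
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill for the time elapsed since the last check, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False  # a gateway would typically answer HTTP 429 Too Many Requests here
```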

Frequently Asked Questions About Cloud Platform Integration

Successful cloud platform integration rewards careful planning more than heroic execution. Start by defining clear requirements—which systems must connect, what data flows between them, what performance expectations exist, and what compliance constraints apply. These answers guide every subsequent decision about architecture and tooling.

Avoid one-size-fits-all thinking. Native cloud tools work beautifully for same-platform integrations. iPaaS solutions accelerate standard patterns. Custom development makes sense for unique requirements or performance-critical scenarios. Mix approaches based on each integration's specific needs.

Embed security from day one rather than bolting it on later. Encryption, authentication, authorization, and monitoring protect sensitive data throughout its journey. Regular security reviews address emerging threats and evolving compliance requirements.

Design for change because requirements never stay static. Businesses evolve, systems get replaced, data formats shift. Integration architectures using abstraction layers, versioned APIs, and event-driven designs accommodate updates without requiring complete rebuilds.

Start small and build momentum. Prove your approach with pilot projects before rolling out enterprise-wide. Learn from early implementations to refine processes, validate tool choices, and develop team expertise. Treat cloud platform integration as an ongoing capability you're building, not a one-time project you're completing. The organizations that succeed view integration as continuous improvement rather than a checkbox to mark done.
